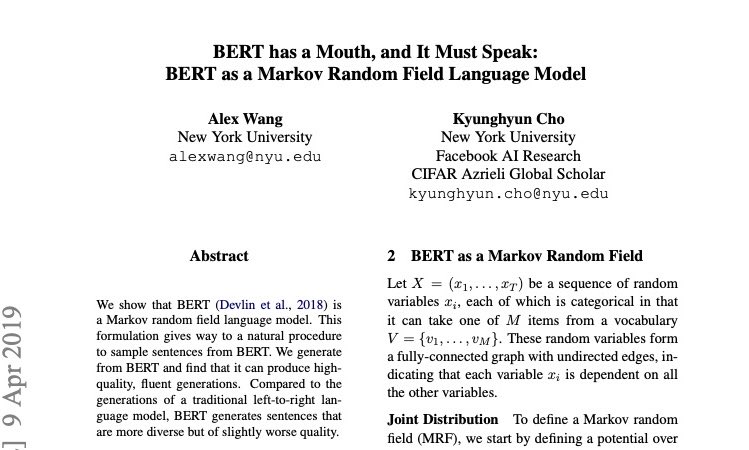

Playing around with training a tiny 11M-parameter character-level text diffusion model! It's a WIP, but the code is currently a heavily modified nanochat GPT implementation (changed from autoregressive decoding to diffusion), trained on the Tiny Shakespeare dataset. The naive masking schedule uses a uniform masking probability for every token at each iteration. Newer approaches mask in blocks from left to right, which improves output quality and allows some KV cache reuse. I realized you can actually apply masking in any arbitrary manner during the generation process. Below you can see I applied masking based on the rules of Conway's Game of Life. I wonder if there are any unusual masking strategies like this that provide benefits. Regardless, this is a very interesting and mesmerizing way to corrupt and deform text.
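
For anyone curious what a Game of Life mask might look like in code, here is a rough sketch. It is not the actual training or sampling code. It assumes the text is laid out row-major on a 2D grid with wrapping edges, that live cells mark the positions to mask, and that a diffusion model would then re-predict those positions (not shown). `MASK_CHAR`, `life_step`, and `apply_mask` are hypothetical names made up for this illustration.

```python
MASK_CHAR = "_"  # hypothetical stand-in for the model's mask token


def life_step(grid):
    """Advance a 2D grid of booleans by one Game of Life generation.

    Edges wrap around (toroidal grid), which is an assumption of this sketch.
    """
    rows, cols = len(grid), len(grid[0])
    new = [[False] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            # Count the eight neighbours, wrapping at the borders.
            n = sum(
                grid[(r + dr) % rows][(c + dc) % cols]
                for dr in (-1, 0, 1)
                for dc in (-1, 0, 1)
                if (dr, dc) != (0, 0)
            )
            # Standard rules: birth on 3 neighbours, survival on 2 or 3.
            new[r][c] = n == 3 or (grid[r][c] and n == 2)
    return new


def apply_mask(text, grid):
    """Replace characters that sit on live cells with MASK_CHAR.

    Character i maps to cell (i // cols, i % cols); characters past the
    end of the grid are left untouched.
    """
    rows, cols = len(grid), len(grid[0])
    out = []
    for i, ch in enumerate(text):
        r, c = divmod(i, cols)
        out.append(MASK_CHAR if r < rows and grid[r][c] else ch)
    return "".join(out)


if __name__ == "__main__":
    # A 5x5 grid seeded with a horizontal "blinker" in the middle row.
    grid = [[False] * 5 for _ in range(5)]
    for c in (1, 2, 3):
        grid[2][c] = True

    text = "abcdefghijklmnopqrstuvwxy"
    for _ in range(3):
        print(apply_mask(text, grid))
        grid = life_step(grid)
```

In a real sampler, each generation of the grid would pick which tokens get re-masked before the next denoising step, so the Life pattern drives where the text gets corrupted and regenerated.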