/sesh/null

587 posts

@nerdsane

VP, Observability-Data@datadoghq | peripatetic | minimalist { engineer | athlete | artist } | I have opinions-of-my-own

“When AI Discovers the Next Transformer”: Robert Lange (Sakana AI) joins Tim Scarfe (@MLStreetTalk) to discuss Shinka Evolve, a framework that combines LLMs with evolutionary algorithms to do open-ended program search. Full video: youtu.be/EInEmGaMRLc

Use natural language to query logs, metrics, traces, and dashboards with the Datadog plugin.

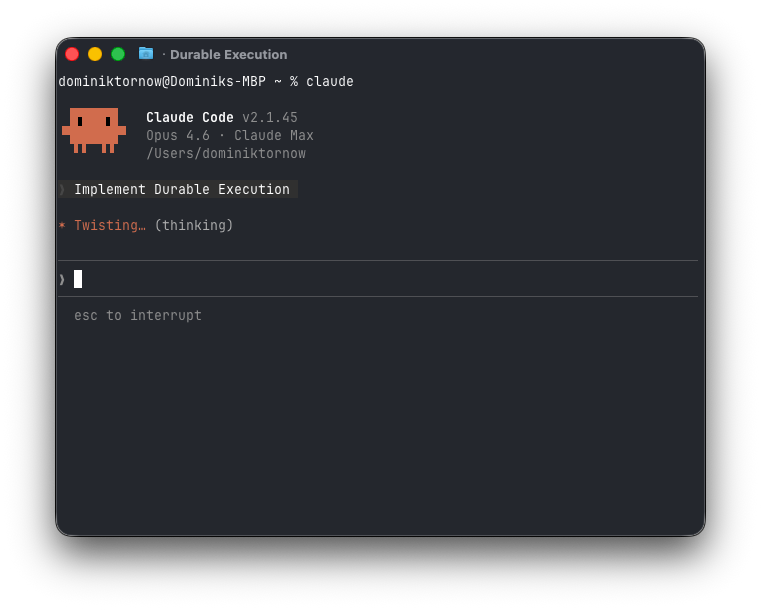

I wrote about what I uncovered while unpacking the tech, and what I think it all means: modular.com/blog/the-claud… PS: thanks to the many humans who contributed feedback, judgement and improvements!

I think it must be a very interesting time to be in programming languages and formal methods, because LLMs completely change the constraints landscape of software. Hints of this can already be seen, e.g. in the rising momentum behind porting C to Rust, or the growing interest in upgrading legacy code bases written in COBOL and the like. In particular, LLMs are *especially* good at translation compared to de-novo generation because the original code base 1) acts as a kind of highly detailed prompt, and 2) serves as a reference against which to write concrete tests. That said, even Rust is nowhere near optimal for LLMs as a target language. What kind of language is optimal? What concessions (if any) are still carved out for humans? Incredibly interesting new questions and opportunities. It feels likely that we'll end up rewriting large fractions of all software ever written, many times over.