Xack4r

25 posts

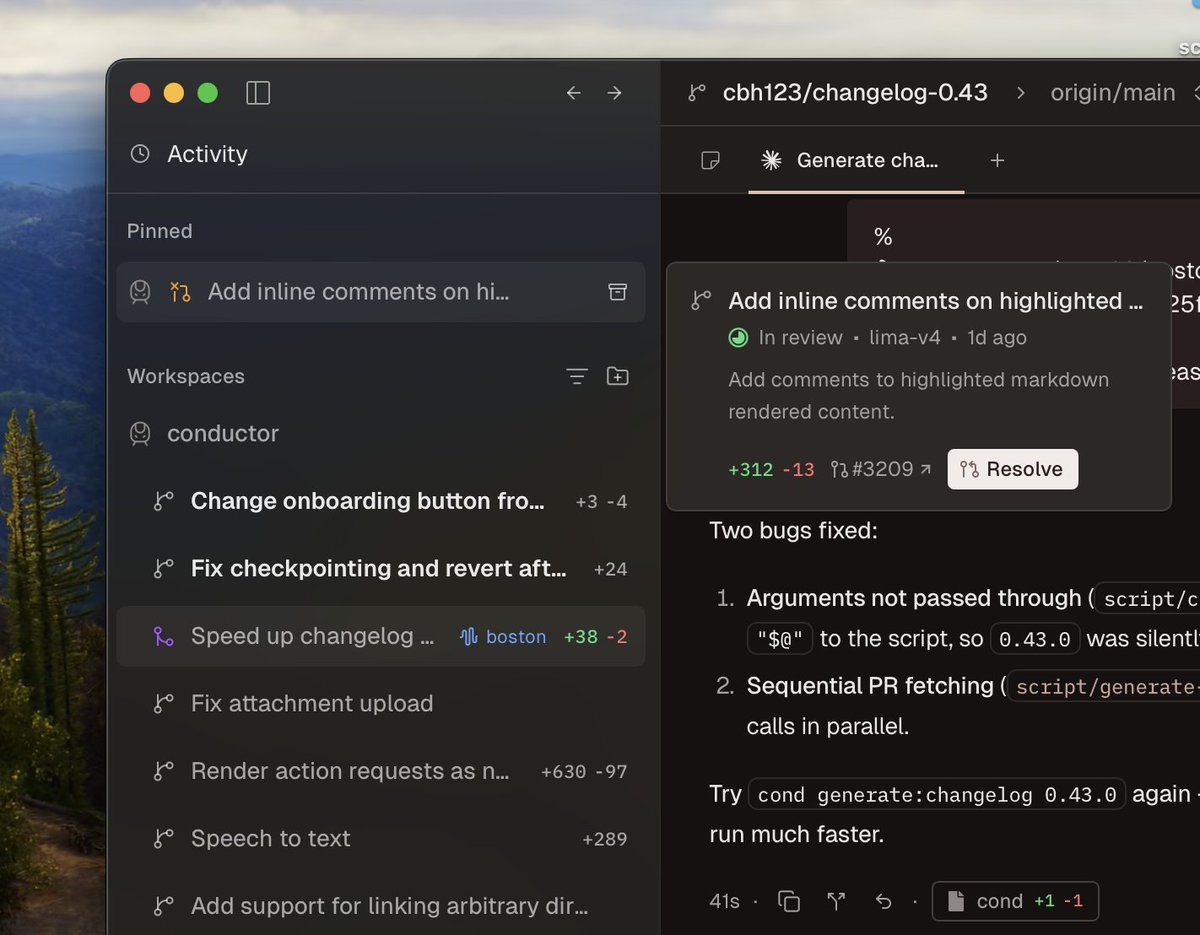

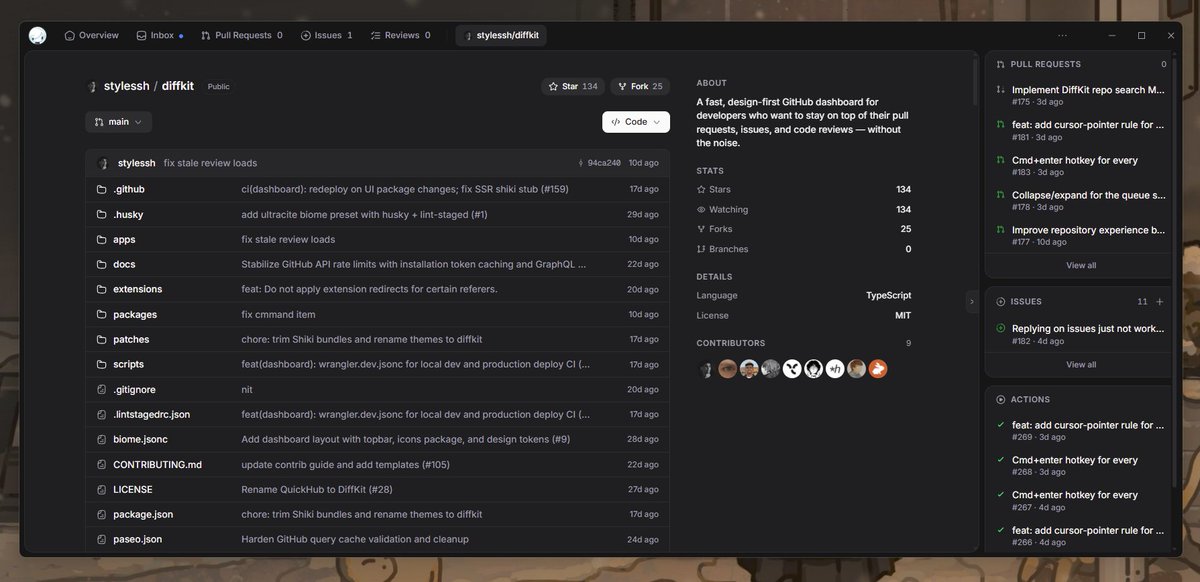

@stylesshDev built diffkit - a beautiful, design-first GitHub dashboard that makes PRs and code reviews actually enjoyable. Loved it so much I ported it to a native Electron app. Huge respect for the project and the quality of the codebase. Deserves way more stars than it has.


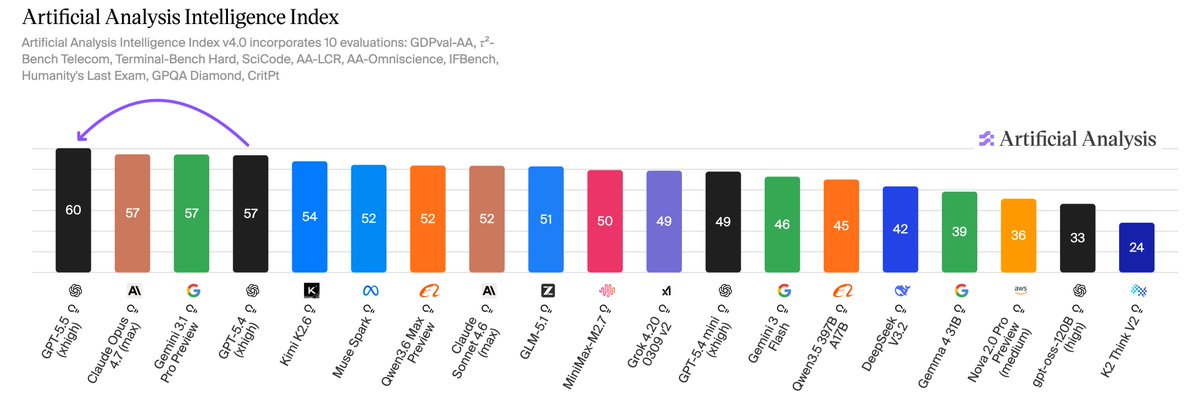

GPT-5.5 takes OpenAI back to the clear number one in AI. OpenAI’s new model tops the Artificial Analysis Intelligence Index by 3 points, breaking a three-way tie with Anthropic and Google.

OpenAI gave us pre-release access to test all five reasoning effort levels: xhigh, high, medium, low and non-reasoning.

➤ OpenAI topping five headline evaluations: GPT-5.5 (xhigh) leads Terminal-Bench Hard, GDPval-AA and our newly hosted APEX-Agents-AA. The model trails only other OpenAI models in CritPt and AA-LCR, and comes second to Gemini 3.1 Pro Preview on three additional evaluations. The largest gains are on AA-Omniscience (+14 pts), our knowledge and hallucination benchmark, and τ²-Bench Telecom (+7 pts), a customer service agent benchmark.

➤ 20% more expensive to run our Intelligence Index: Per-token pricing has doubled from GPT-5.4 to $5/$30 per 1M input/output tokens. However, a ~40% token use reduction largely absorbs the hike - resulting in a net ~+20% cost to run our Intelligence Index.
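The arithmetic behind that net figure can be checked with a rough sketch. It assumes a single blended multiplier: the price doubling applies equally to input and output tokens, and the ~40% token reduction applies uniformly. Neither assumption is confirmed by the post, and `net_cost_multiplier` is a hypothetical helper name used only for illustration.

```python
def net_cost_multiplier(price_multiplier: float, token_reduction: float) -> float:
    """Relative run cost = price change x fraction of tokens still used.

    Assumes one blended multiplier for input and output tokens
    (a simplification, not something the post states).
    """
    return price_multiplier * (1.0 - token_reduction)


# Figures quoted in the post: price doubled (2.0x), ~40% fewer tokens used.
multiplier = net_cost_multiplier(price_multiplier=2.0, token_reduction=0.40)
print(f"net cost change: {multiplier - 1:+.0%}")
```

Under these assumptions, 2.0 x 0.6 = 1.2, which lines up with the ~+20% quoted above.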

➤ Effort a clear ladder for balancing intelligence and cost: GPT-5.5 (medium) scores the same as Claude Opus 4.7 (max) on our Intelligence Index at one quarter of the cost (~$1,200 vs $4,800) - although Gemini 3.1 Pro Preview scores the same at a cost of ~$900. GPT-5.5 (low) approximates Claude Opus 4.7 (Non-reasoning, high) on our Intelligence Index at half the cost to run (~$500 vs ~$1,000).

➤ Number one in GDPval-AA with an Elo of 1785: GPT-5.5 (xhigh) leads Claude Opus 4.7 (max) by ~30 pts and Gemini 3.1 Pro Preview by ~470 pts. GDPval-AA is Artificial Analysis’ benchmark that leverages OpenAI’s GDPval dataset to evaluate models on real-world economically valuable tasks.

➤ Top AA-Omniscience accuracy, but trailing the frontier on hallucination: Our private AA-Omniscience benchmark rewards factual knowledge across diverse topics, but punishes hallucination. GPT-5.5 (xhigh) has the highest accuracy at 57% - meaning the model can recall facts in the Omniscience corpus more effectively than any other model. However, it has a hallucination rate of 86% - vs Opus 4.7 (max) at 36%, and Gemini 3.1 Pro Preview at 50%. This makes it more likely to answer a question when it does not ‘know’ the answer. The 14 pt gain in AA-Omniscience from GPT-5.4 (xhigh) was largely driven by knowledge, with a modest improvement in hallucination.

Congratulations to the team at @OpenAI and @sama on the launch


$5 per mil in, $30 per mil out.

GPT-5.5 is smart. I've been using it for a bit. It's also weird, hard to wrangle, and too expensive IMO.

Double the price of GPT-5.4. 20% more expensive than Opus 4.7.

OpenAI@OpenAI

Introducing GPT-5.5

A new class of intelligence for real work and powering agents, built to understand complex goals, use tools, check its work, and carry more tasks through to completion. It marks a new way of getting computer work done. Now available in ChatGPT and Codex.


My new cryptography puzzle is now live. Will pay $1,000 to the first person who DMs me the plaintext decryption of the first line.

2nd line is a hint. If you send me slop, AI hallucinations, or a decryption of the 2nd line, you are disqualified.

x.com/theo/status/20…

Theo - t3.gg@theo

For no reason in particular, I made my first crypto challenge. I will pay $1,000 to whoever solves it first. Winner is whoever gets the answer into my DMs first.


@bridgemindai @matthewmillerai idk, for me Opus 4.7 sucks at frontend. Haven't tested it for backend yet, but I keep using 4.5/4.6


And the circle is complete. MinIO is now archived.

1️⃣ MinIO deprecated the UI.

2️⃣ They stopped providing images and pre-built binaries, going source code only.

3️⃣ The documentation “disappeared”, available only to enterprise users.

4️⃣ MinIO started to only receive security updates and bug fixes - no new features.

5️⃣ MinIO gets archived and the CE version is no longer maintained.
