Pinned Tweet

nikmcfly.btc

3.2K posts

@nikmcfly69

building AI music company @ANUSonMars vibe coder | previously: https://t.co/tMNlkO7ydL

Metaverse · Joined September 2009

1.3K Following · 2.8K Followers

@futurepr0n yeah, 100%. feed your sports DB as docs → agents with different analyst roles debate the matchup → you get a multi-perspective prediction
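
A rough sketch of the flow described in that reply, assuming a generic `ask` callable stands in for whatever model client is used. The role names, the prompt wording, and the `debate` helper are hypothetical and are not taken from MiroFish.

```python
from typing import Callable, Dict, List

# Hypothetical analyst roles; the real project may define personas differently.
ROLES = ["stats analyst", "injury analyst", "betting-market analyst"]

def debate(docs: List[str], matchup: str, ask: Callable[[str], str]) -> Dict[str, str]:
    """Ask each role for a take, showing it the docs and all earlier takes."""
    context = "\n".join(docs)
    takes: Dict[str, str] = {}
    for role in ROLES:
        earlier = "\n".join(f"{r}: {t}" for r, t in takes.items())
        prompt = (
            f"Role: {role}\n"
            f"Data:\n{context}\n"
            f"Earlier takes:\n{earlier or 'none'}\n"
            f"Predict the outcome of {matchup}."
        )
        takes[role] = ask(prompt)
    return takes

def fake_ask(prompt: str) -> str:
    # Stub so the sketch runs offline; swap in a real LLM call here.
    first_line = prompt.splitlines()[0]
    return "home team wins" if "stats analyst" in first_line else "too close to call"

takes = debate(["Team A: 10-2", "Team B: 6-6"], "Team A vs Team B", fake_ask)
```

The result is one take per role, which is the "multi-perspective prediction" part; how the takes get merged into a single call is left open here.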

@nikmcfly69 I’m curious, is there any way you think the agents could leverage a self hosted database of stats - let’s say sports data, bc I have a project that tries to create prediction models but I’d like to try and integrate this into it somehow for predictions.

@jezwn4 weird, it worked in english for me but i'll check it out

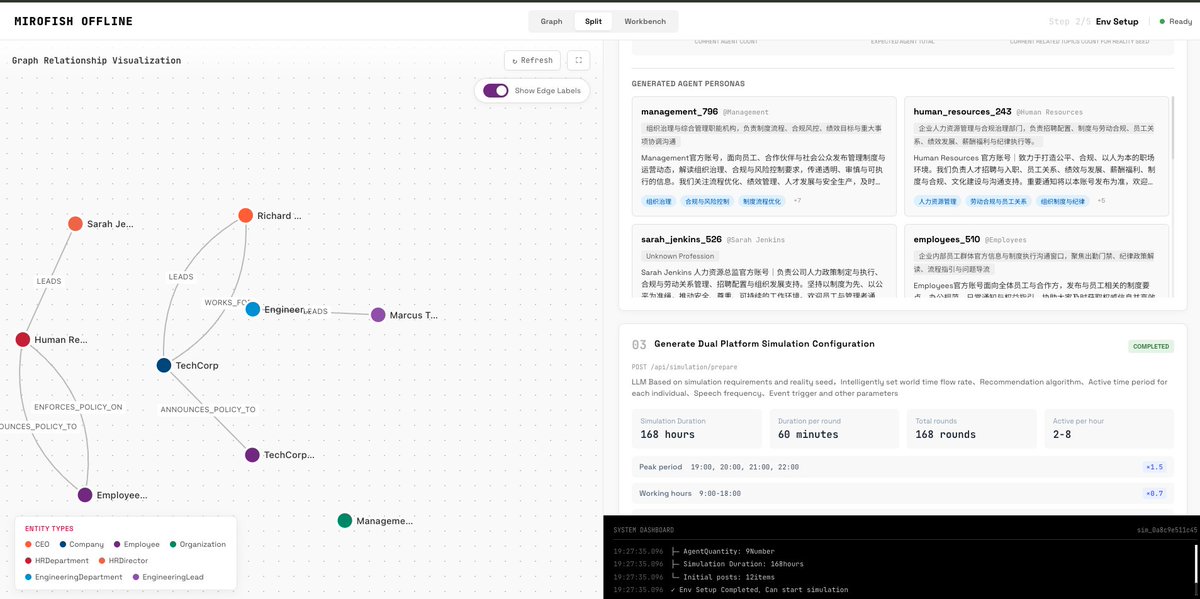

@nikmcfly69 The MiroFish dashboard UI is in English, but the LLM-generated content (personas, narrative guidance, initial activation sequences) and the Python backend terminal logs are outputting in Chinese.

@iDOsurgery @hxtxmu it depends on your seed and prompt. organisations usually don’t want to share anything before public

@nikmcfly69 @hxtxmu I'm curious...what "knowledge" is leaving your machine? you just give it a seed

@webthreedev yeah, check the readme, there's a quick start section with both docker and manual setup options: github.com/nikmcfly/MiroF… (#quick-start)

@nikmcfly69 Would you send ur solana wallet so fees can be redirected to you?

@sidiwayne85 good point — small local models have limits. but 14b-70b via ollama handle multi-agent scenarios fine, and a well-designed simulation pipeline matters as much as the model itself. plus nothing stops you from swapping in a remote api. offline = option, not restriction

@nikmcfly69 What do you mean by on a local computer? Performance might take a huge hit (CPU-wise) running small open-source models through ollama. I do think the accuracy of a certain level of simulation is linked to a high level of generalization and understanding that small models lack

@hxtxmu forked it, rewrote the backend (Zep Cloud + DashScope → local Neo4j + Ollama), translated 1,000+ strings to English, pushed to GitHub. commits are public. and yes — works with any OpenAI-compatible API. OpenRouter, Claude, GPT, whatever
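
For readers wondering what "any OpenAI-compatible API" means in practice, here is a minimal sketch, assuming the standard `/chat/completions` route. The `build_chat_request` helper and the model names are placeholders, not MiroFish code; the two base URLs are the OpenAI-style endpoints that Ollama and OpenRouter expose.

```python
import json
import urllib.request

def build_chat_request(base_url: str, model: str, prompt: str,
                       api_key: str = "ollama") -> urllib.request.Request:
    """Build (but do not send) a POST to an OpenAI-style chat endpoint."""
    payload = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )

# Same helper, different backend: only the base URL, model, and key change.
local = build_chat_request("http://localhost:11434/v1", "qwen2.5:14b", "hello")
remote = build_chat_request("https://openrouter.ai/api/v1", "some/model",
                            "hello", api_key="YOUR_KEY")
# urllib.request.urlopen(local) would send it; omitted so the sketch runs offline.
```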

@nikmcfly69 Are you kidding me? Have you cloned the source code and run? Or just copy whatever other people post?

It can run on whatever api provider is compatible with the openai adk api. OpenRouter runs well.

@Kaiyes_ best setup: everything on the Linux 32GB + RX 590 box. one docker compose up -d. iMac is just the browser. RX 590: Ollama ROCm support is spotty for that gen. if GPU doesn't work, Qwen 9B runs on CPU w/ 32GB, just slower. still usable.

other boxes aren't needed unless you want to offload Neo4j

@nikmcfly69 Nice! Does it support local models too? Or just claude/codex?

@Kaiyes_ yes, any OpenAI-compatible model via Ollama. Qwen 9B is the sweet spot. agents are graph nodes in Neo4j, not all in RAM — LLM infers one at a time. bottleneck is VRAM, not agent count. 200 agents ≈ 20-40 min. cluster: Neo4j on one PC, Ollama on GPU machines, RPis for Flask/Neo4j
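
A back-of-the-envelope reading of the "200 agents ≈ 20-40 min" figure, assuming agents are processed strictly one at a time and each needs roughly the same amount of model time (both assumptions, not confirmed by the thread). The function names are made up for illustration.

```python
def implied_seconds_per_agent(agent_count: int, total_minutes: float) -> float:
    """Average model time per agent if the whole run is sequential."""
    return total_minutes * 60 / agent_count

def estimate_minutes(agent_count: int, seconds_per_agent: float) -> float:
    """Sequential inference: total time grows linearly with agent count."""
    return agent_count * seconds_per_agent / 60

low = implied_seconds_per_agent(200, 20)    # 6.0 seconds per agent
high = implied_seconds_per_agent(200, 40)   # 12.0 seconds per agent

# Under the same assumptions, 1000 agents at the faster rate:
big_run = estimate_minutes(1000, low)       # 100.0 minutes
```

Under these assumptions wall-clock time scales with agent count, while the VRAM limit mentioned in the reply would cap which model fits and how fast each inference runs.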

@nikmcfly69 can it be run with local qwen 4b/9b ?

how much ram will the agents require ?

trying to understand how does this scale ?

I do have a cluster of pc (4) + 4 raspberry pi if needed

@ZenMagnets There's an English version of the readme if you click the link in the Chinese one, but I'll change it to English-first very soon

@nikmcfly69 Also, readme sure isn't translated yet. Here's the URL to save people a copy and paste and backspace operation:

github.com/nikmcfly/MiroF…

$0 funding.

a 20 year old spent 10 days building with AI.

- now he can simulate 1000+ digital humans reacting to real world news.

- we’re entering the era where one obsessed builder can create systems that used to require entire labs

BuBBliK @k1rallik
