@︎№#ident𝚍

14.3K posts

@no_identd

Transfinitely Recursive De-referencing Cellular Automata. Twitter filters=off. Certain & guaranteed reaction. Give OPMLs to me. Likely acts on deletion requests

Hi @thezdi @OpenAI, asking about the rules of the Pwn2Own26 Coding Agent directory, particularly the "interact with ... repository" wording. If a user opens someone else's git repo using CodeX App with default permissions and is immediately RCE’d, does this fall within the threat model? :)

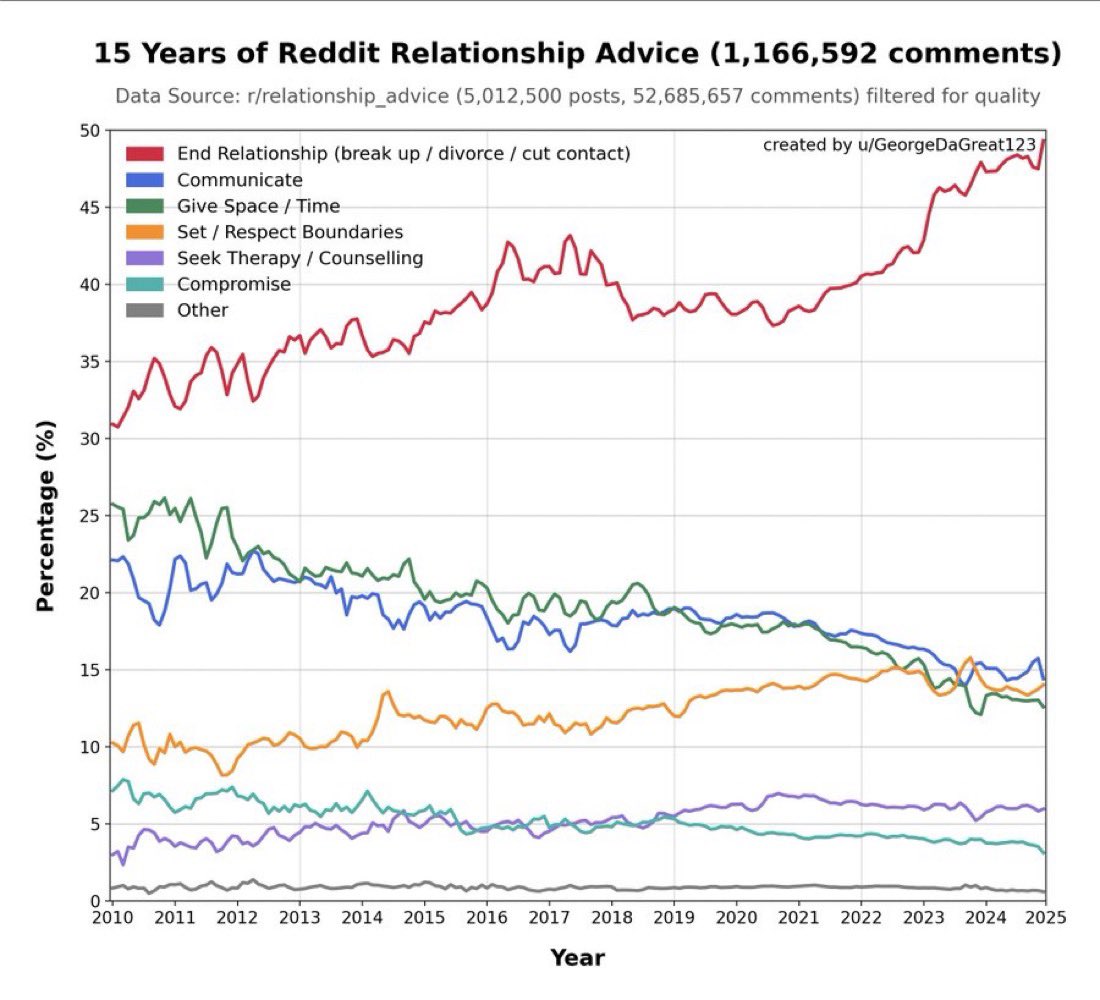

LLM that keeps telling people to break up because it’s been trained on relationship advice subreddits

@Ryansikorski10 All tech is applied demonology Paul Davies Demon in the machine As usual ~ you on point 🎲🎲 💯

@NeuroStats My apologies for the past angry rudeness. I've regretted this many times over because it represents one of the best examples of my righteous anger perhaps getting misdirected at the wrong people

🤭 ain't ever functioned as any sorta fan of the man nor of his party, still, after reading news of his hearing schedule refusal blocking "SAVE" act & weirdly stumbling into this pic from 2020 on getty (taken by @tombrennerphoto, apparently) it feels hella topical & worth sharing

@BecomingCritter As one of the creators of this map, I chuckled at most of the comments. For those interested in how it was created, see doi.org/10.1371/journa…. It was created in 2005 using dominant (but not all) journal-journal citation relationships between fields.

Is there a secret science that bridges all science?

enjoy this exercise in creative writing. i don’t want to worry about people taking this too far bc my phone is gonna die but in case your priors are beyond fucked no chatgpt is not obsessed with Kabbalistic literature and claude is not a muslim fundamentalist. read more: amazon.com/Jorge-Luis-Bor…