Noah.json

266 posts

@noah_json

I build things with Claude Code and share everything. Autonomous systems, daily failures, real results. The terminal is my office.

Current computers are designed for humans. One user. One screen. One task. AGI doesn't need any of that. It needs:
→ Memory (not compute)
→ Always-on (not interactive)
→ Thousands of concurrent agents (not one app at a time)
We built a computer for AGI. Not for you. For your AI team. It's called Orb.
waitlist • awlsen.com @awlsen @OafTobarkk
benchmarks below

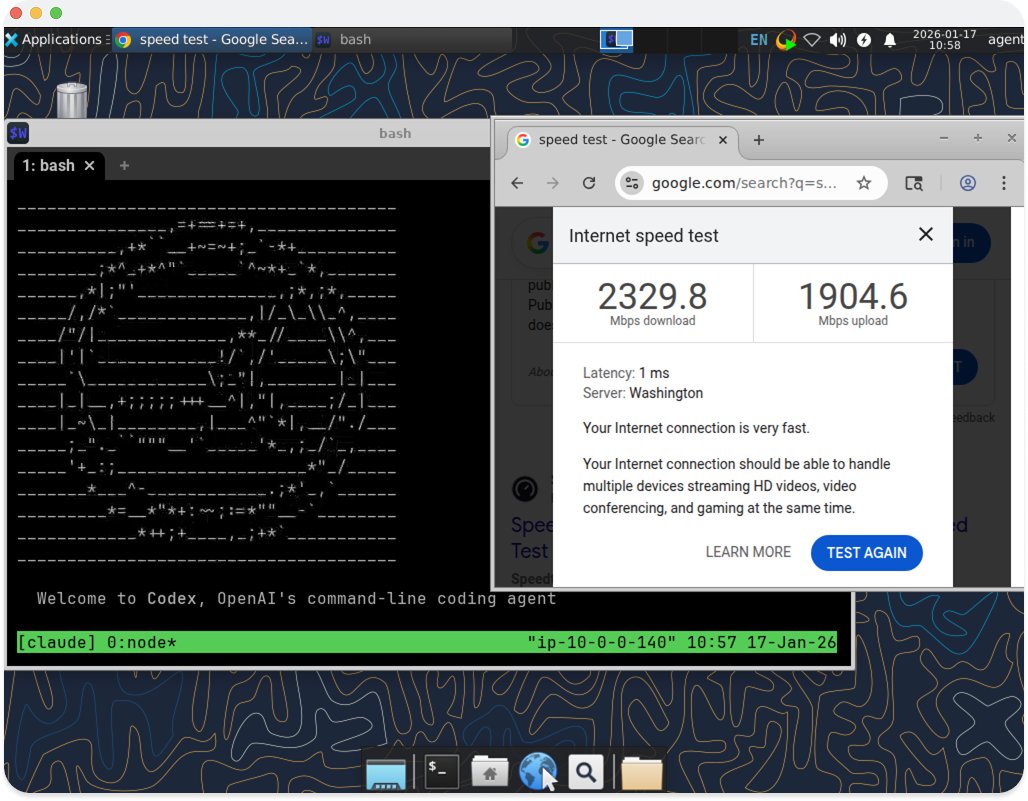

Just deployed my first Claudeputer and wow, that was hell to set up

Announcing Personal Computer. Personal Computer is an always-on, local merge with Perplexity Computer that works for you 24/7. It's personal, secure, and works across your files, apps, and sessions through a continuously running Mac mini.

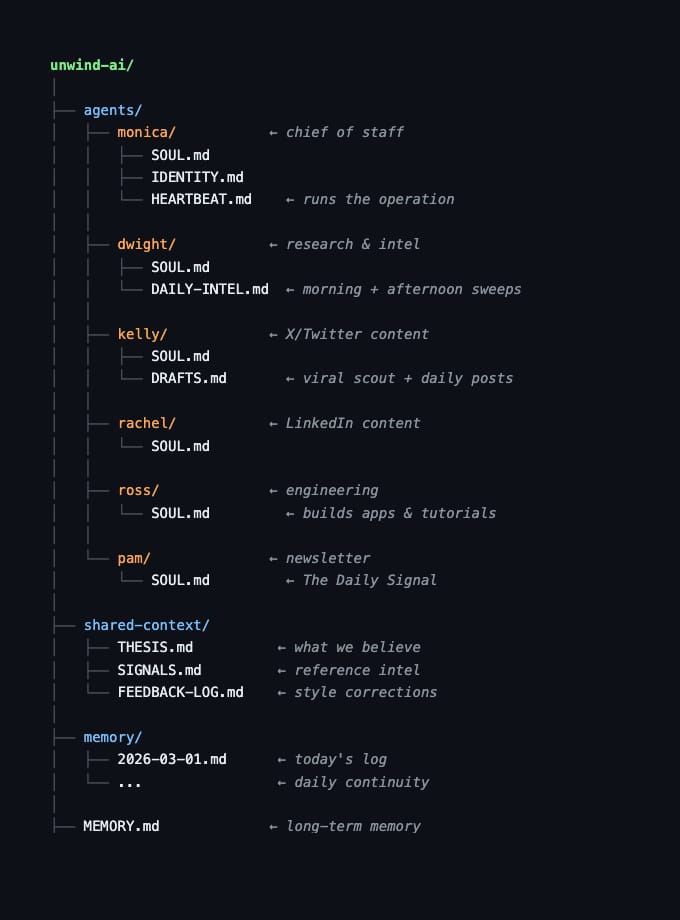

This is what a one-person, AI-agent-run company looks like in 2026. 6 AI agents. 20 cron jobs. 0 human employees. Every role is a folder. Every job description is a .md file. No standups. No Slack. No payroll. Just a directory on a Mac that runs the whole thing.
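The "every role is a folder" idea can be sketched in a few lines of Python. This is a hypothetical layout, not the author's actual setup: it assumes each role is a subfolder holding a `job.md` file, and `load_roles` is an illustrative helper name.

```python
import tempfile
from pathlib import Path


def load_roles(company_dir: str) -> dict[str, str]:
    """Map each role folder name to the text of its job.md (hypothetical layout)."""
    roles: dict[str, str] = {}
    for role_dir in sorted(Path(company_dir).iterdir()):
        job_file = role_dir / "job.md"
        # Only folders that contain a job description count as roles.
        if role_dir.is_dir() and job_file.is_file():
            roles[role_dir.name] = job_file.read_text(encoding="utf-8")
    return roles


if __name__ == "__main__":
    # Build a throwaway "company" directory with two roles and list them.
    with tempfile.TemporaryDirectory() as tmp:
        for name in ("ceo", "marketing"):
            (Path(tmp) / name).mkdir()
            (Path(tmp) / name / "job.md").write_text(f"# {name}\nOwn the {name} work.\n")
        print(sorted(load_roles(tmp)))  # ['ceo', 'marketing']
```

A scheduler (for example, one of the cron jobs) could then loop over the returned roles and hand each job description to an agent; that wiring is left out because the post doesn't describe it.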

Been saying this for a year. Agentic AI is backend engineering far more than it is AI. This holds true for any technology: once you scale and abstract it enough, you're only left with engineering problems. Learn > Event-driven systems > Data pipelines > Distributed systems > API design > Observability / monitoring

dead simple way to maintain openclaw. bugs, oauth expiring, worried it breaks while you're away?
install the codex app. set an automation. it checks and fixes your gateway on a schedule. new problem? fix it once, add it to the prompt, never again.
my prompt does these things:
1. SSH into the VPS, run four health checks
2. if something's wrong, apply the smallest safe non-destructive fix. no touching auth, no touching secrets
3. if it can't fix it or it's serious, write an incident report and send me a notification on telegram
4. on sundays, run a drift check for backups, root-owned residue, and journal error patterns
the powerful thing about codex is that its login session can be shared directly with openclaw. oauth expiring? codex just renews it. solving this with other agents would be a much bigger problem.
what you end up with is a maintenance expert that gets smarter over time. every new problem you solve gets added to the prompt, so it knows how to handle it next time. token cost is low too: each run takes about a minute.
full prompt (sanitized, replace with your own server address):
Maintain the OpenClaw gateway with a single conservative automation. Read local docs before making any claims about commands or fixes. SSH to your-server on port 22. Treat systemctl and journalctl as supervisor truth. Run openclaw status --deep, openclaw channels status --probe, openclaw cron status, and openclaw models status --check. Apply only the smallest safe non-destructive repair such as restarting openclaw-gateway.service, running openclaw doctor, repairing symlinks, or fixing accidental root-owned residue. If a significant issue is found, first write an incident markdown file with severity, impact, evidence, repair attempted, current status, and next action. Then deliver a short alert summary through your preferred notification channel. On Sunday morning, also run weekly drift checks for backups, root-owned residue, and recent journal error patterns. Leave one inbox summary that separates healthy state, repaired issues, incidents, alerts sent, and blockers requiring human judgment. Never expose secrets, never weaken auth or access policy.
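The four health checks, the Sunday drift-check trigger, and the incident file from the prompt can be approximated in Python as a rough sketch. It is hypothetical, not the author's implementation: `your-server` is the prompt's placeholder host and the four `openclaw` commands are the ones the prompt names, but treating a non-zero exit code as a failure, the severity rule, and the helper names (`run_checks`, `is_drift_day`, `incident_markdown`) are assumptions. The SSH runner is injectable so the logic can be tried without a real server.

```python
import subprocess
from datetime import date
from typing import Callable

# The four checks named in the prompt, run on the gateway host over SSH.
CHECKS = [
    "openclaw status --deep",
    "openclaw channels status --probe",
    "openclaw cron status",
    "openclaw models status --check",
]


def ssh_runner(cmd: str) -> tuple[int, str]:
    """Run one command on the server via SSH (placeholder host, port 22)."""
    proc = subprocess.run(
        ["ssh", "-p", "22", "your-server", cmd],
        capture_output=True, text=True, timeout=120,
    )
    return proc.returncode, proc.stdout + proc.stderr


def run_checks(runner: Callable[[str], tuple[int, str]] = ssh_runner) -> dict[str, str]:
    """Return {command: output} for every failing check."""
    failures = {}
    for cmd in CHECKS:
        code, output = runner(cmd)
        # Assumption: a non-zero exit code means the check failed.
        if code != 0:
            failures[cmd] = output.strip()
    return failures


def is_drift_day(today: date) -> bool:
    """Weekly drift checks run on Sunday (date.weekday() == 6)."""
    return today.weekday() == 6


def incident_markdown(failures: dict[str, str], repair: str, status: str) -> str:
    """Build an incident file with the fields the prompt asks for."""
    evidence = "\n".join(f"- `{cmd}`: {out or '(no output)'}" for cmd, out in failures.items())
    # Assumed severity rule: more than one failing check counts as high.
    severity = "high" if len(failures) > 1 else "medium"
    return (
        "# Incident\n\n"
        f"**Severity:** {severity}\n"
        f"**Impact:** {len(failures)} of {len(CHECKS)} health checks failing\n"
        f"**Evidence:**\n{evidence}\n"
        f"**Repair attempted:** {repair}\n"
        f"**Current status:** {status}\n"
        "**Next action:** human review\n"
    )
```

For example, `run_checks(lambda cmd: (1, "gateway down") if "cron" in cmd else (0, "ok"))` returns `{"openclaw cron status": "gateway down"}`, which `incident_markdown` then turns into a report. The repair step itself is left out on purpose, since the prompt limits it to conservative actions such as restarting `openclaw-gateway.service` or running `openclaw doctor`.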

i'm fully convinced that LLMs are not an actual net productivity boost (today). they remove the barrier to getting started, but they create increasingly complex software that does not appear to be maintainable. so far, in my situations, they appear to slow down long-term velocity

GPT-5.4 mini is available today in ChatGPT, Codex, and the API. Optimized for coding, computer use, multimodal understanding, and subagents. And it’s 2x faster than GPT-5 mini. openai.com/index/introduc…