Honored to announce that Yann LeCun @ylecun is joining Prior Labs’ Scientific Advisory Board.

Noah Hollmann


@noahholl

Co-founder & CTO @ Prior Labs | Building AI for tabular data


Get a complete introduction to TabPFN, from theory to practice. @pandeyparul covers the model's transformer-based architecture and unique training pipeline. towardsdatascience.com/exploring-tabp…

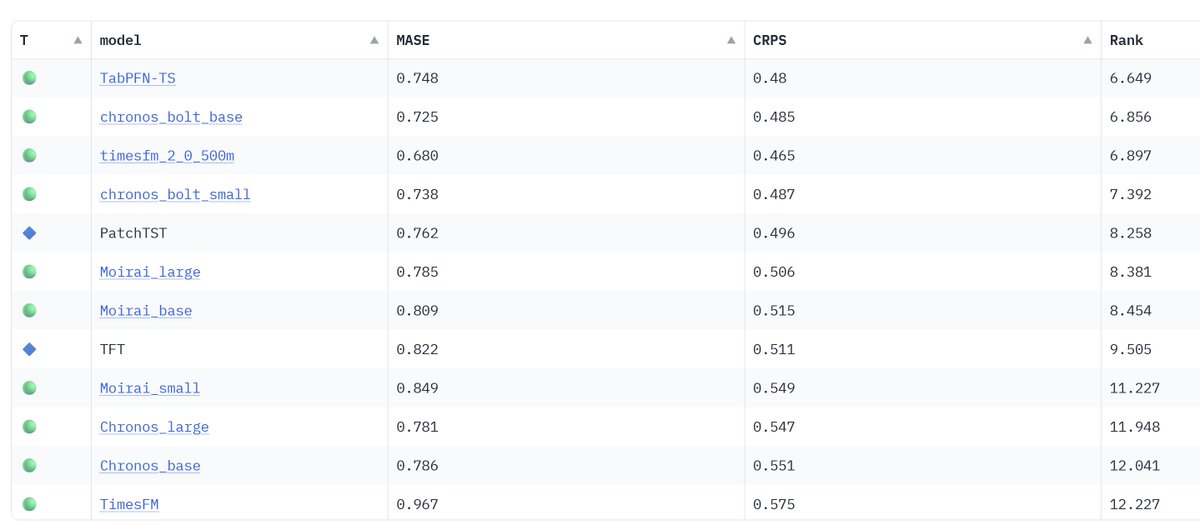

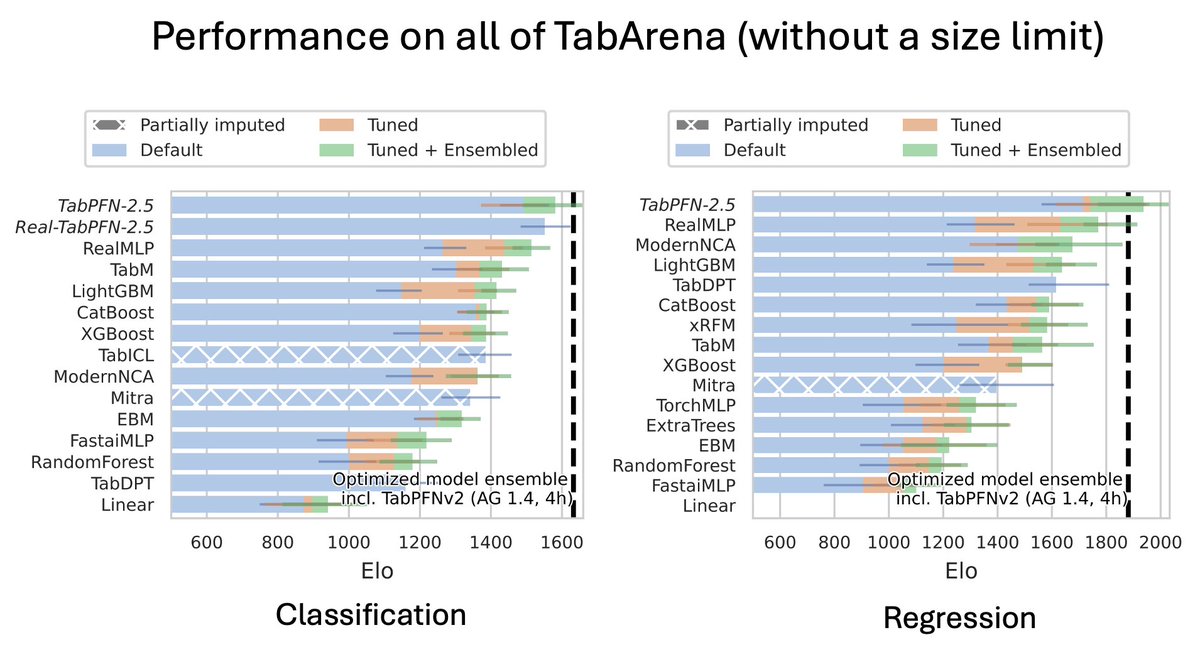

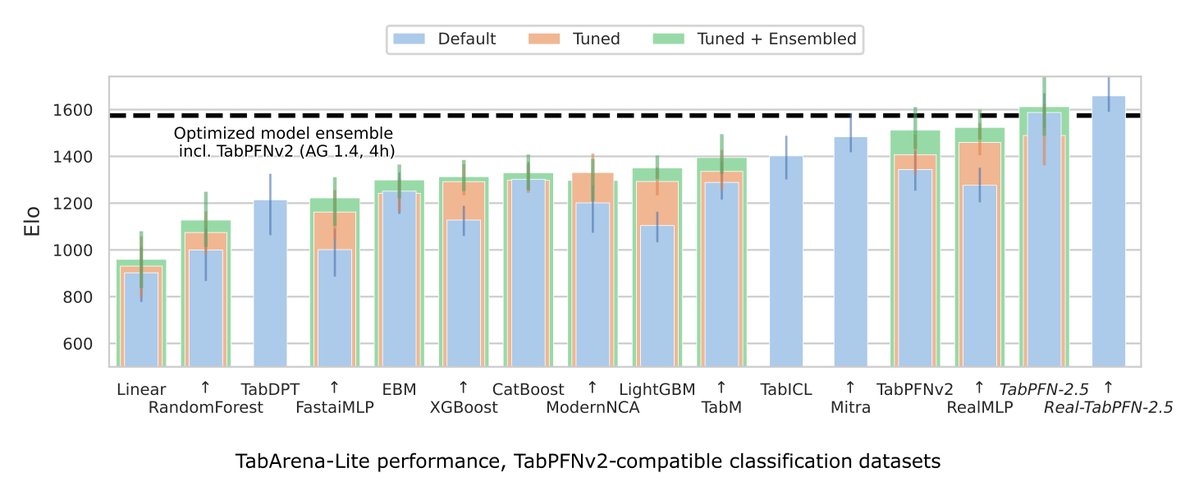

🚨 What is SOTA on tabular data, really? We are excited to announce **TabArena**, a living benchmark for machine learning on IID tabular data with: 📊 an online leaderboard (submit!), 📑 carefully curated datasets, and 📈 strong tree-based, deep learning, and foundation models. 🧵

We believe that Do-PFN can provide causal insights on diverse and understudied problems where experimental data is scarce! This is joint work with @Arik_Reuter (shared), @syguoML, @noahholl, @FrankRHutter, and @bschoelkopf. Check out the paper at arxiv.org/abs/2506.06039 (7/7)

We present a new approach to causal inference. Pre-trained on synthetic data, Do-PFN opens the door to a new domain: PFNs for causal inference. We are excited to announce our new paper “Do-PFN: In-Context Learning for Causal Effect Estimation” on arXiv! 🔨🔍 A thread: