Sabitlenmiş Tweet

noemon

9 posts

noemon

@noemon_ai

Changing how AI scales by changing how it learns.

Zürich Katılım Haziran 2024

2 Takip Edilen303 Takipçiler

@ysu_ChatData Our harness doesn't use any tools. That's probably one of the most interesting aspects. We will soon share a blog post that answers your question and others in more detail.

English

@noemon_ai Impressive delta. Curious what parts of the harness drove the 12 point gain: tool use, self critique, or a curriculum built from failures? And how do you prevent overfitting to the public eval set while iterating?

English

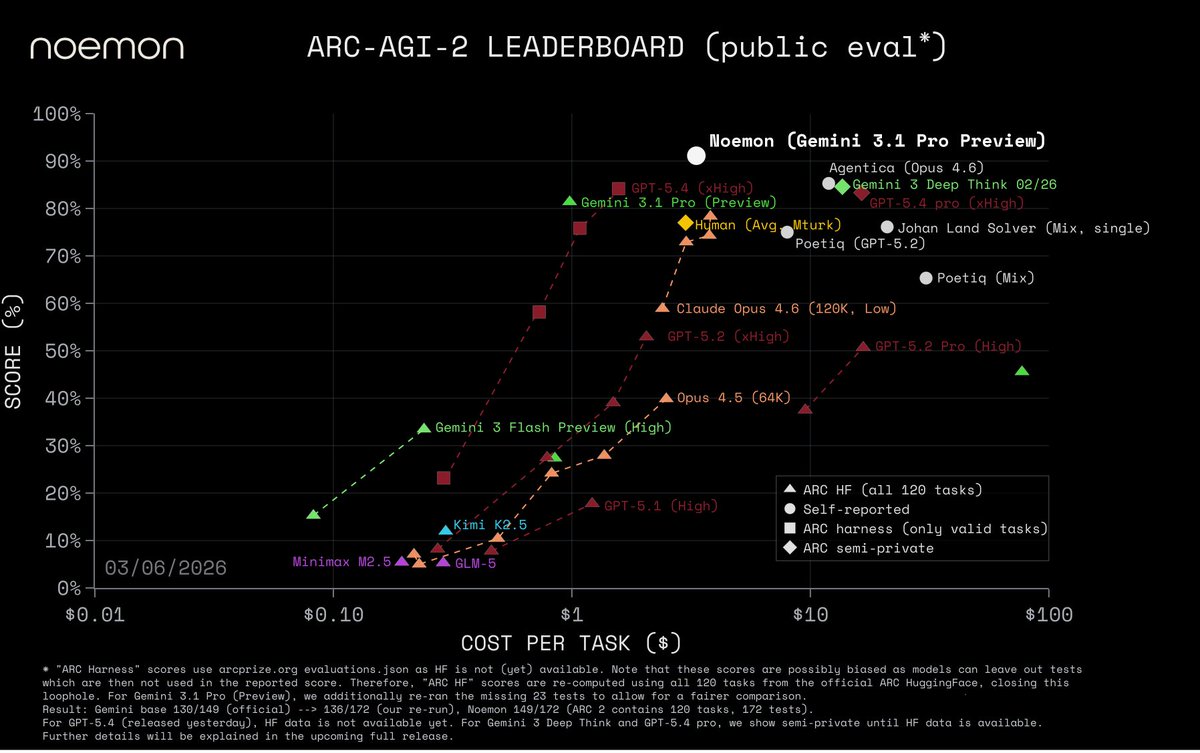

Announcing: ARC-AGI-2 top score and at a fraction of the cost through an agentic learning harness for iterative self-improvement.

Public Eval — 91% @ $3.3/task.

SOTA compared to even new models trained for extreme reasoning like GPT-5.4 Pro and Gemini 3 Deep Think, while also reducing their cost by more than 4x.

We used Gemini 3.1 Pro (Preview) and we increased its score by 12 percentage points.

@GregKamradt @fchollet @mikeknoop

English

In our system, it is already the case that, if the first round is not enough, then there are multiple iterations where the Validator provides feedback and the Reasoner updates the hypothesis based on all its past experience.

For longer-horizon and more complex tasks, more advanced types of continual learning are needed indeed. Our first real announcements beyond the ARC-AGI teaser are coming soon!

English

@noemon_ai Cool! Any thoughts on how to transfer this approach to ARC-AGI v3, where the validation can only happen after multiple exploratory steps instead of the application of a single hypothesis to be verified?

English

noemon retweetledi

Many understand this would be huge, but most current solutions are "let's write a summary" and call it a day. Backprop based training doesn't cut it though. Our founding team @noemon_ai has been building the alternative path for years, and we now know how to scale it.

Beff (e/acc)@beffjezos

I think everything will change once AI is stateful / have persistent memory at the weight level. It will have a theory of self in the world and a theory of its own mind. If that's not consciousness idk what is

English

The lessons from this SOTA and cost-efficient agentic approach are general and can be applied to other environments that are closed and verifiable.

While keeping our core work beyond ARC-AGI in stealth mode, we will release the code of this harness to the community, publish a blog post with details, and submit our work to @arcprize for verification in the next few days.

The focus of our core research is deeper than agentic harnesses, than closed environments, and than simple in-context learning. At @noemon_ai we are changing how AI scales by changing how it learns.

Stay tuned.

English

Our method's effectiveness and efficiency relies on learning, i.e. internalizing lessons from experience into the model, not only iterating on model output artifacts such as code.

In fact, differently from many recent approaches, we do not use any code execution at all.

Learning in this case where we don't have access to the model's internals, and where the horizon is relatively short, is achieved by simply conditioning the model on the history of its past attempts and their outcomes.

Learning and reasoning is grounded on the real task environment: hypotheses are tested on the grid, and feedback comes from the world, not just from the model's own reasoning trace.

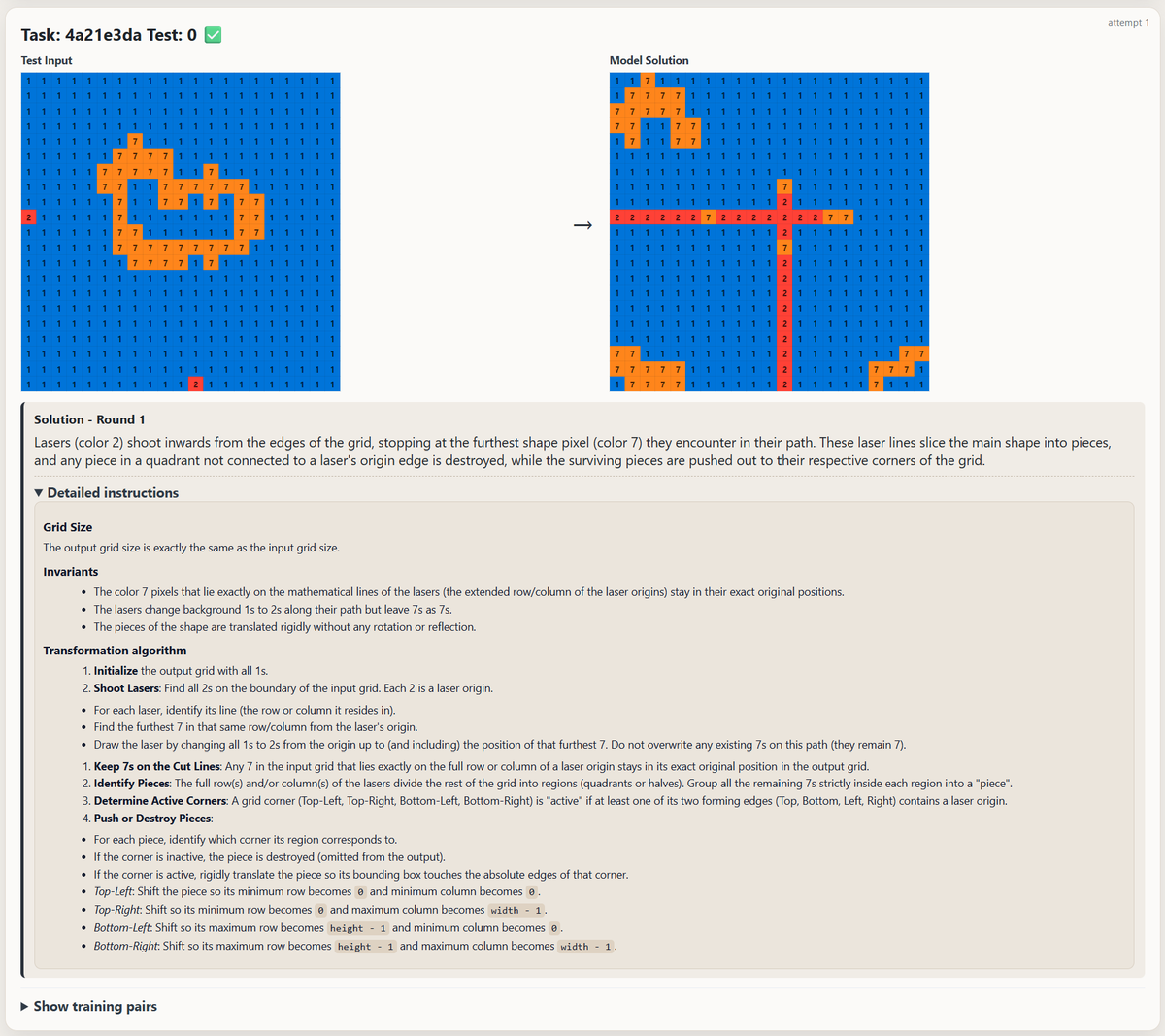

The core of our approach is a simple ReAct loop: We separate reasoning/planning from validating/acting: a dedicated Reasoner learns from full history to improve its natural-language instructions which it provides to a dedicated Validator that executes, validates, and returns feedback to the Reasoner.

The image shows an example problem along with the natural language solution that the Reasoner hypothesized and provided to the Validator. The Reasoner describes it as "Lasers" that "shoot inwards"

The meta-cognitive capabilities of the new Gemini model are also critical as they help decide when the (learning) process can be stopped.

English

@timos_m @noemon_ai what about sequence to sequence models? we still have ~ no clue on how to train them without backprop

English

It is hard. But doable.

Our 3-year-old work on local learning I believe is still the public SOTA. Links below.

Imagine where the SOTA might be today in stealth @noemon_ai

Yaroslav Bulatov@yaroslavvb

Backprop is non-local so it's a bad fit for GPUs. Or anything else involving atoms. It's hard to "evolve" out of this local minimum without breaking everything

English

noemon retweetledi