Trần Ngọc Sơn

12 posts

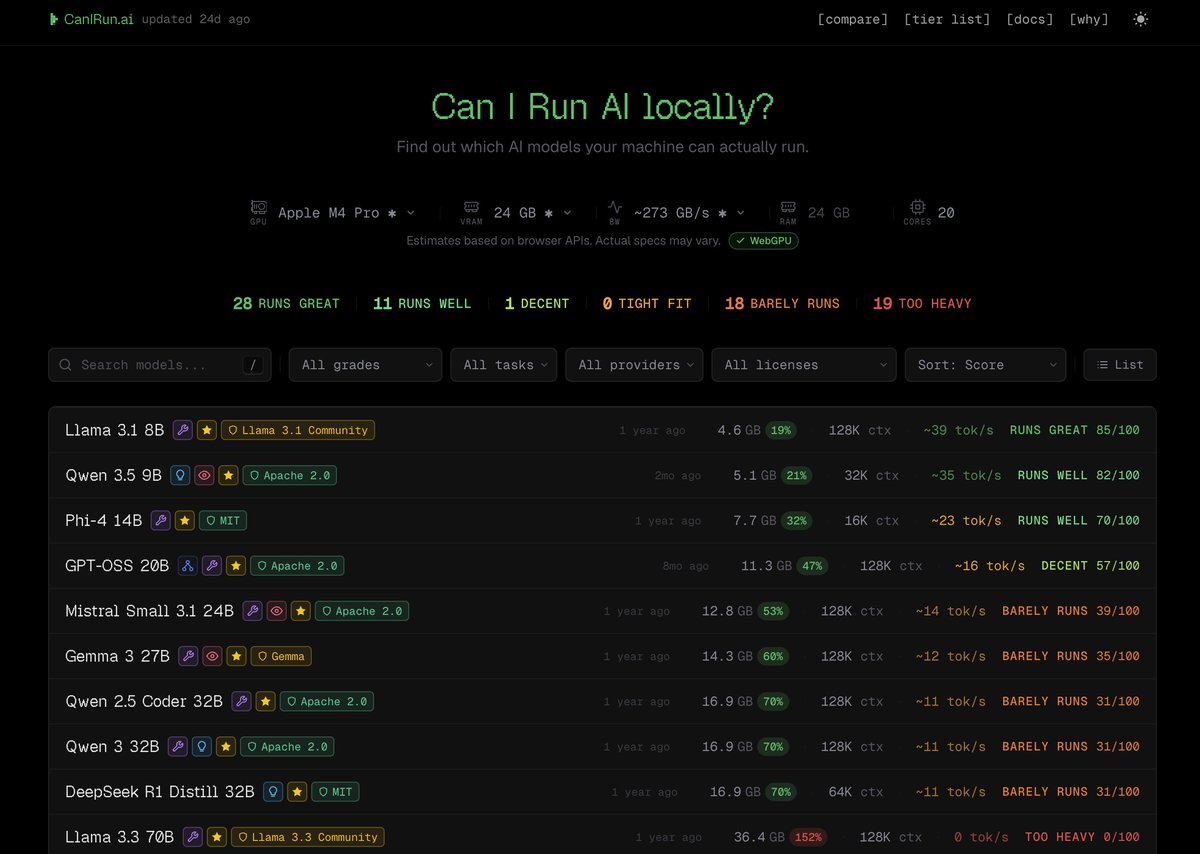

I've dropped Claude 100%. I thought I wouldn't get used to it, but I don't plan on going back. Claude is very good, but it's expensive and slow.

I've been using:
- OpenCode Go: Kimi 4.6 / GLM 5.1
- Codex 5.4

I enabled Caveman with Rtk to save tokens.

I went from $200 a month to $30 (OpenCode + GPT), and so far I'm not missing anything.

github.com/juliusbrussee/… github.com/rtk-ai/rtk #bolhadev

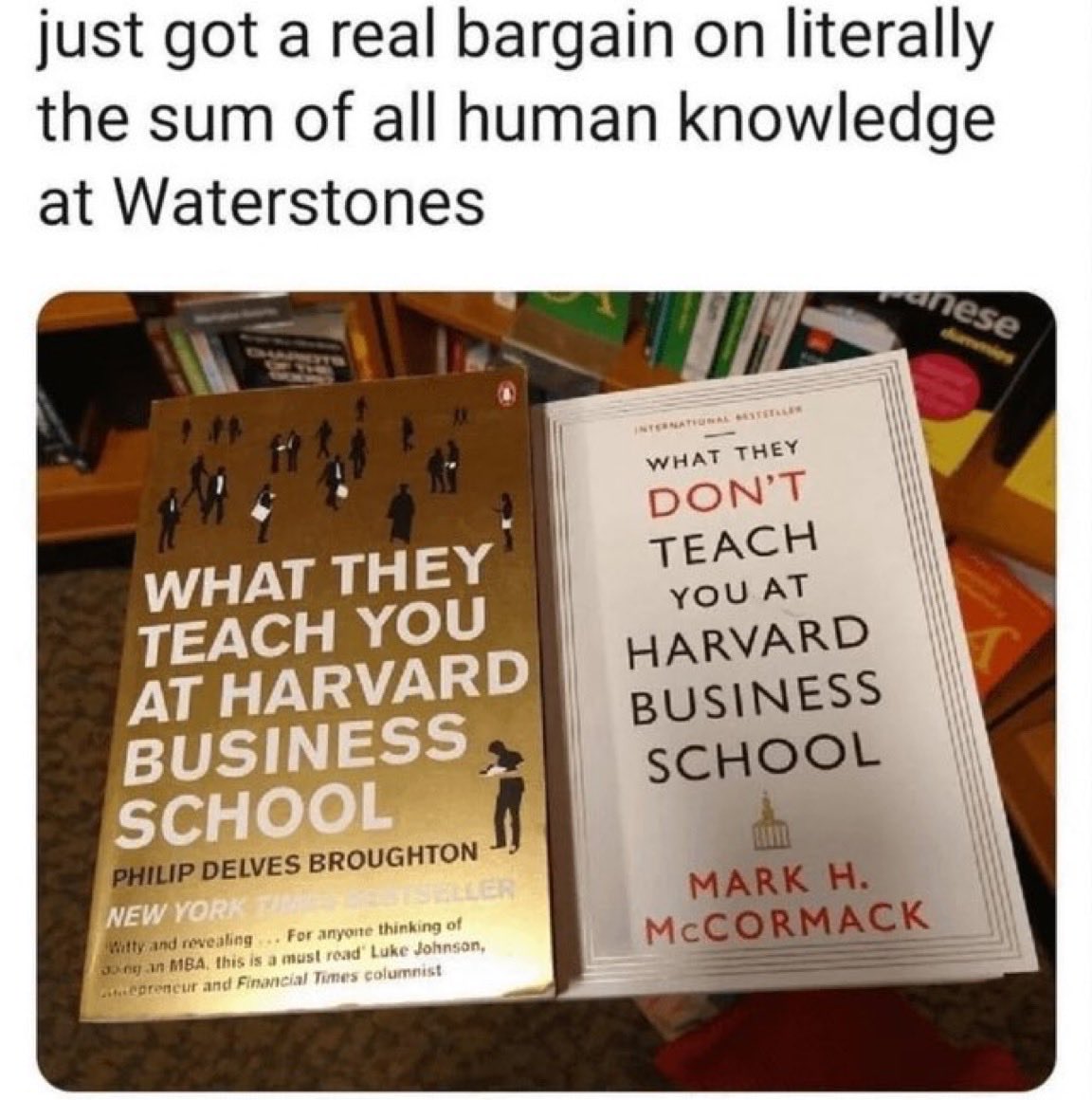

🚨do you understand what the Head of Anthropic Coding Agents just dropped.

30 minutes. more value than 100 paid courses.

not a course. not a tutorial. how top AI researchers actually build.

here's the part nobody is talking about:

> real workflows. not theory.
> vibe coding from the source.
> how they think, build, and ship with agents.

watch this before you write another prompt. before you build another agent. before you touch another tool.

30 minutes. bookmark it. watch it today. this one changes how you use AI for good.

.@opencode has a /tree now, and context is way easier to manage.

Try DeepSeek V4 in OpenCode today

1. `/connect` the DeepSeek provider
2. Grab your key from platform.deepseek.com
3. Select DeepSeek-V4-Pro

Tell us what you think