Nikolaj Goodger

Our research project SIMA is creating a general, natural language instructable, multi 3D game-playing AI agent. The agent can carry out a wide range of tasks in virtual worlds, making AI more adaptable, helpful & fun! dpmd.ai/sima-1

I thought Dune 2 was the best movie of 2024 until I watched this masterpiece (sound on).

Just like that, "compute" has been redefined from fast instructions for applications -> multiply-accumulate operations for AI. 2021 was Intel's peak revenue year ($79B) vs Nvidia ($27B). Hardware replacement cycles run a couple of years, and in 2023 we see Intel at $54B vs Nvidia at $61B. 2021 was the year that AI took over compute. stockanalysis.com/stocks/nvda/re… stockanalysis.com/stocks/intc/re…

@katecrawford @Nature There are already incredibly high incentives to find ways to make these models more efficient. Indeed, that is the core motivation of most of computer science. Tons of people inside and outside the big companies are working on this full time.

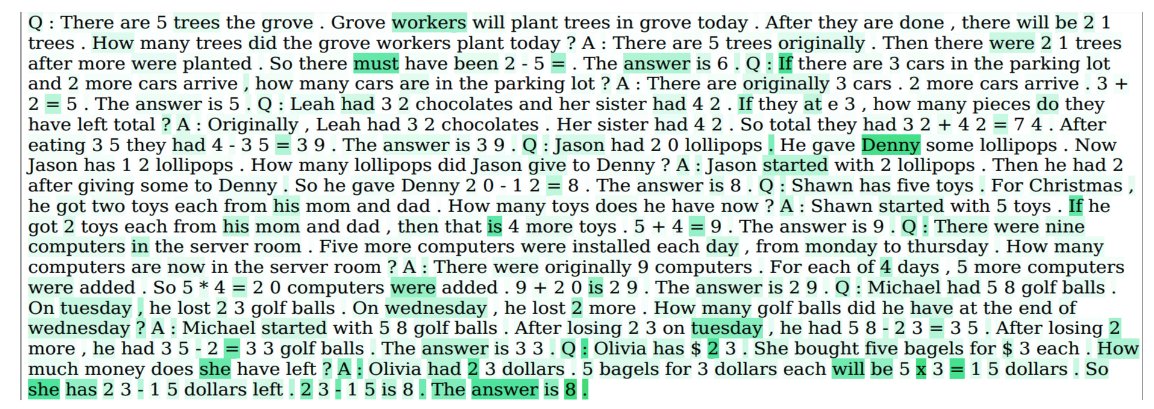

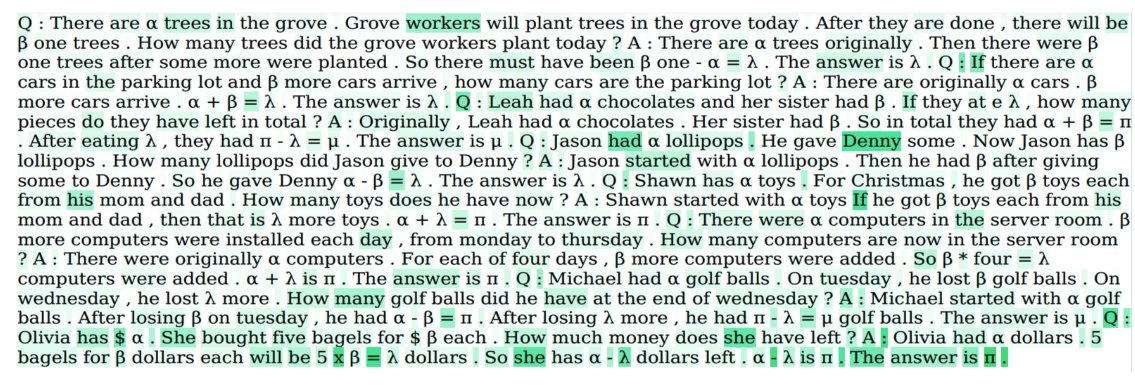

At @emnlpmeeting 2023, we are presenting our work on understanding the underlying mechanisms behind chain of thought. We designed a suite of counterfactual prompts, systematically manipulating different elements of the examples and testing the consequences for model behavior. /1