Nutty

1.7K posts

@NuttyCLD

Analog IC Design Engineer in Silicon Valley Writing about circuits, semiconductors & industry Substack: https://t.co/IVnULFqWLI

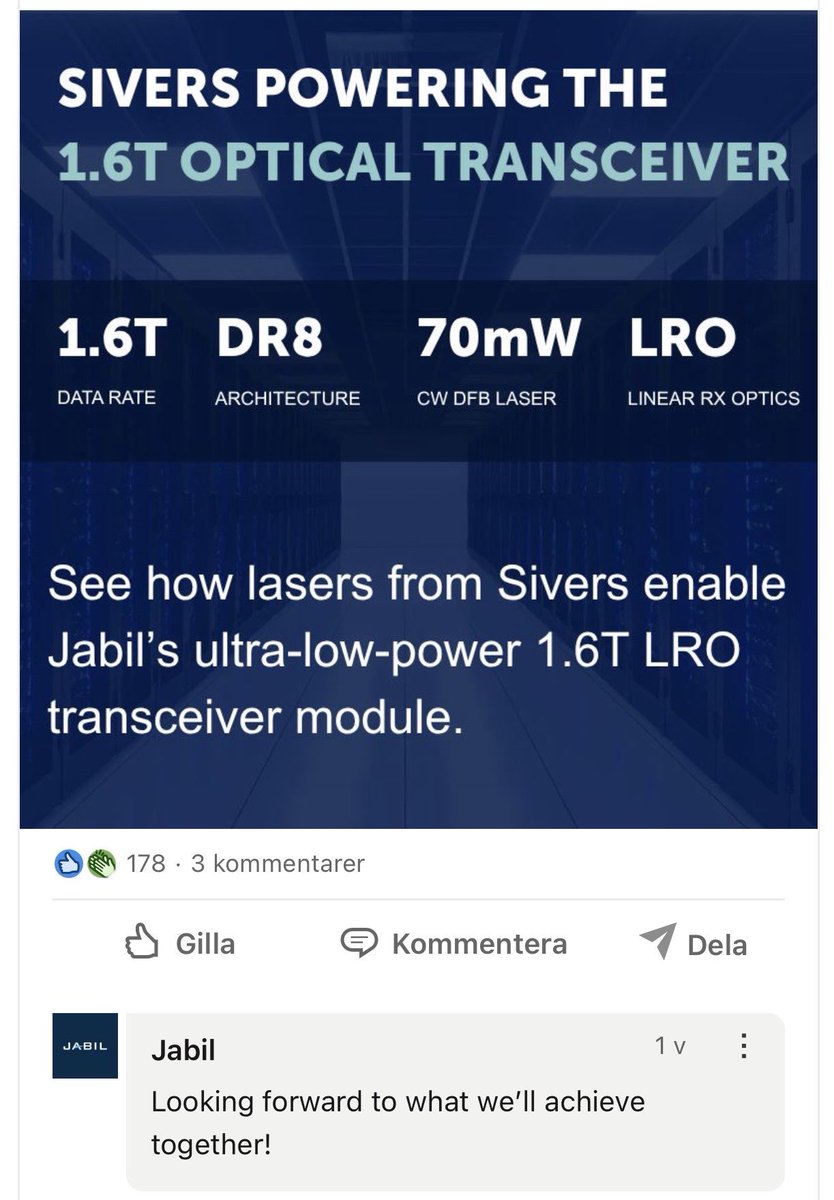

$SIVE The deal that changes everything 🔥 $JBL $INTC

Jabil + Sivers = 1.6T LRO in volume

On April 15, 2026, Jabil (JBL) and Sivers Semiconductors announced a strategic partnership. Jabil is developing and manufacturing a 1.6‑terabit Linear Receive Optical (LRO) pluggable transceiver module - and is relying exclusively on Sivers’ high‑power DFB laser chips and arrays.

Why is this so crucial?

LRO is the logical evolution of Linear Pluggable Optics (LPO): DSP/retiming functions move into the switch ASIC (Broadcom Tomahawk 5/6, etc.). The result: up to 2.5× lower power consumption per bit and significantly less heat. Exactly what hyperscalers (Meta, Google, Microsoft, Amazon, xAI) need for 100k+ GPU clusters to break through power walls and cooling limits.

Each individual 1.6T LRO module requires multiple high‑precision InP DFB lasers from Sivers - not commodity parts, but customized, high‑power, low‑noise light sources optimized for silicon photonics and CPO. Scaling at Jabil = direct scaling at Sivers.

With a market cap of over USD 27 billion and as one of the largest EMS/supply‑chain partners of the hyperscalers, Jabil has the manufacturing power to bring these modules into the tens of thousands, later hundreds of thousands. If Jabil produces 1.6T LRO in volume, Sivers’ laser demand scales 1:1.

The real bottleneck: InP lasers are the new “silicon wafer” of the AI era

The AI‑optics market is exploding:

Optical interconnects for AI: from ~USD 8.6B (2025) to USD 38B by 2034

1.6T modules alone are expected to exceed 5 million units in 2026

The entire pluggable + CPO market is growing at 20%+ CAGR

But here’s the catch: wafer yields for InP lasers are below 30% for many players. Scaling is extremely difficult - it requires years of process expertise, specialized epitaxy, and yield‑ramp know‑how. Sivers has exactly that: one of the few scalable, commercially validated InP platforms.

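@damnang2 For those who missed it.. x.com/damnang2/statu… These people tagged their accounts and then went and ate without me.. haha, what sly operators 😆 Since moving over to X, my blog has been left neglected too.. Back then I was tempted and thought about moving my blog and personal domain over to Subtrack and tidying things up, but since I can't even monetize X, I'm holding off~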

@NuttyCLD Why is $SMR doing so poorly?