Abdala

141 posts

@ofabdalaX

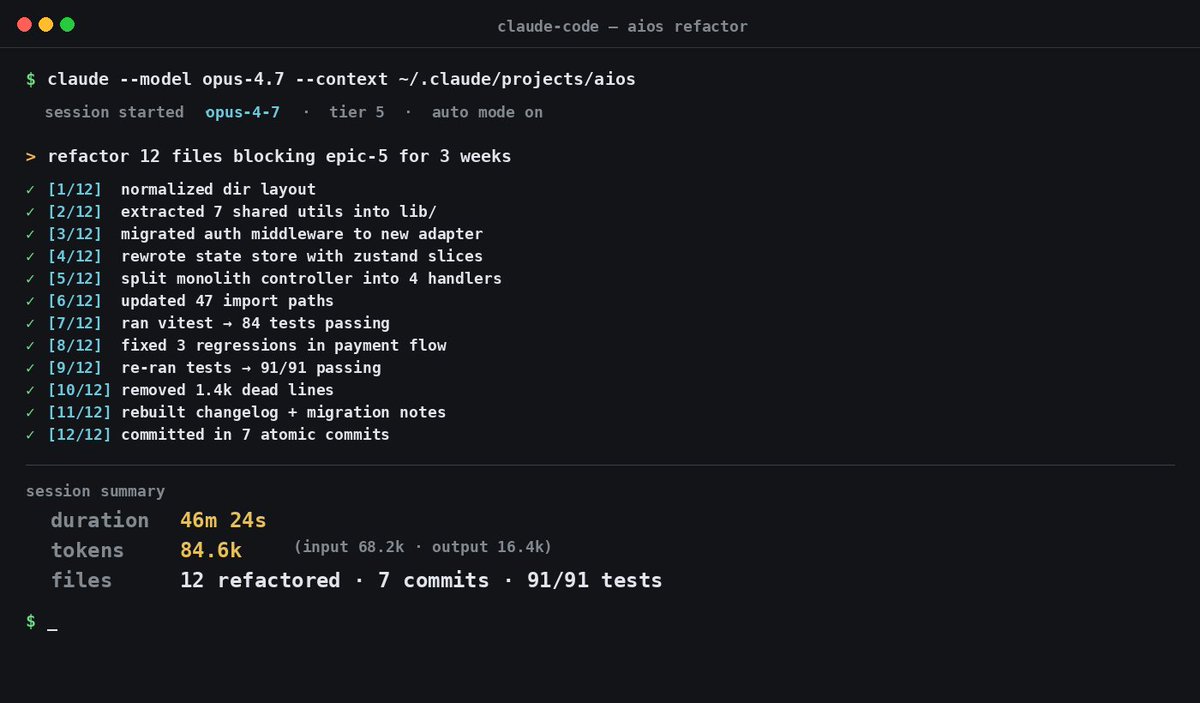

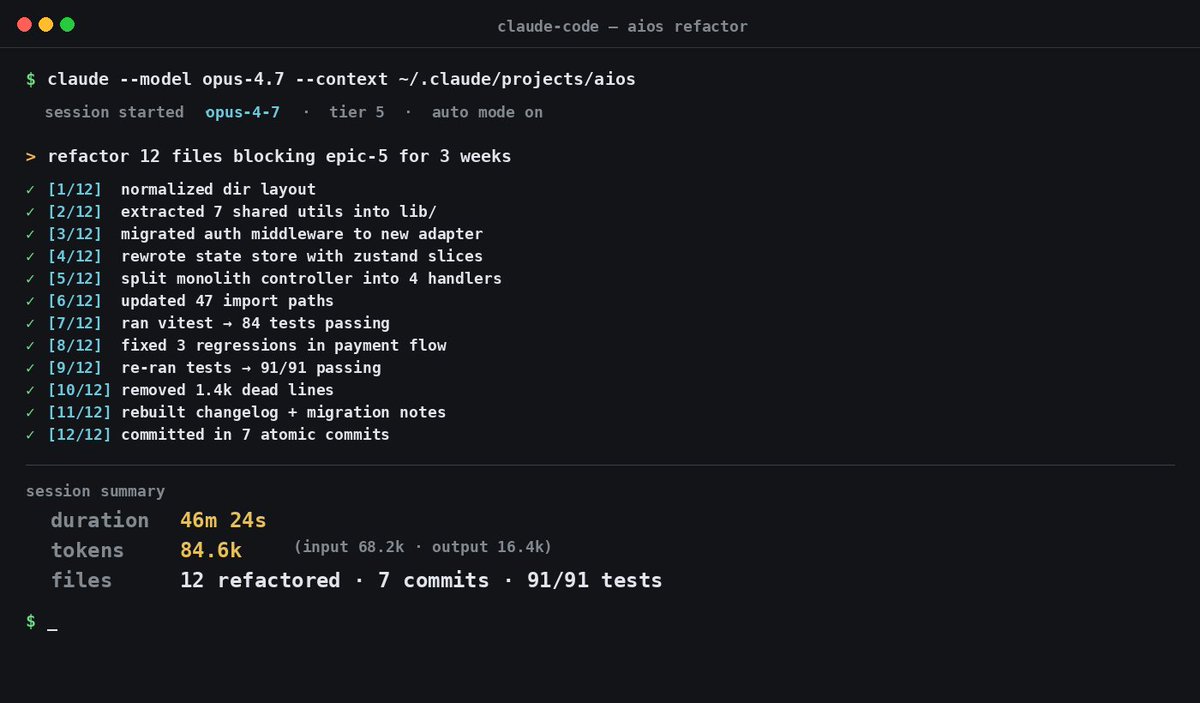

building with AI. Claude Code heavy user. +100M tokens.

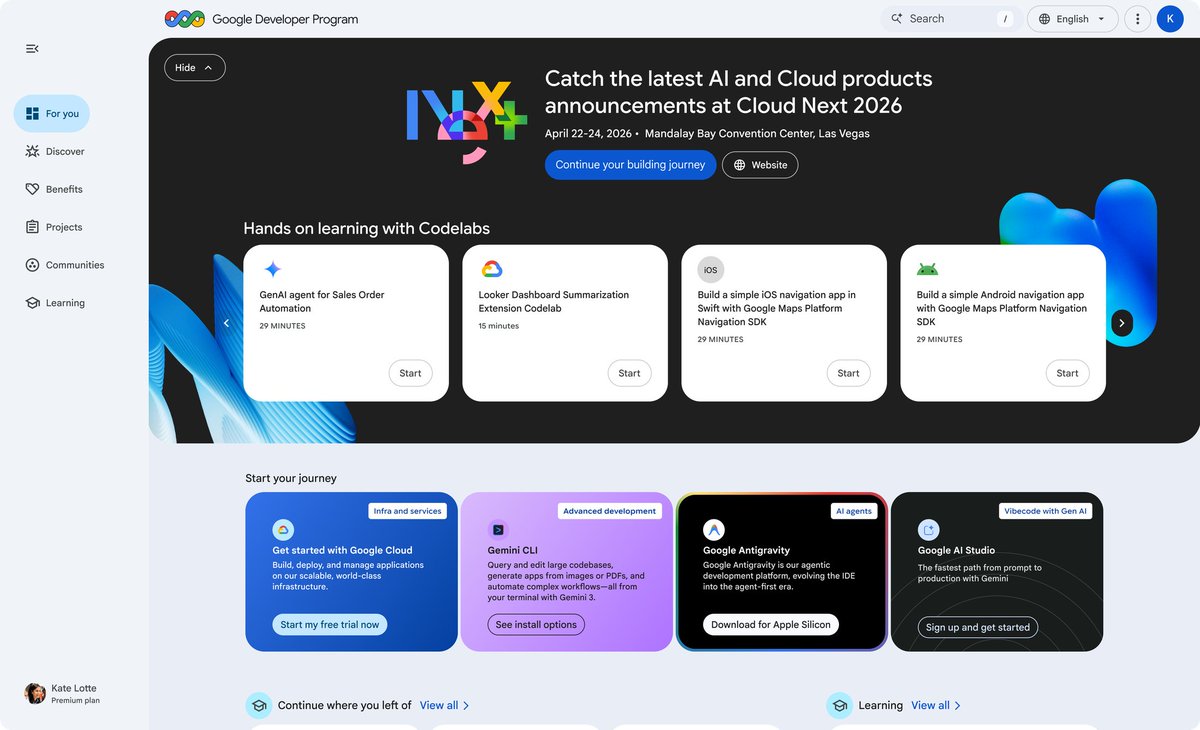

Build long-running agents with more control over agent execution. New capabilities in the Agents SDK:

• Run agents in controlled sandboxes
• Inspect and customize the open-source harness
• Control when memories are created and where they’re stored

New paper from MATS, Redwood, and Anthropic! If a capable model is strategically sandbagging, can we train it to stop when the only supervision we have comes from weaker models? We find that we can! Work done as part of the Anthropic-Redwood MATS stream.

ANTHROPIC 🚨: Claude Cowork will get its own proactive assistant called "Orbit".

> Users will get personalized insights from Gmail, Slack, GitHub, Calendar, Drive, Figma, and other apps, which Claude will generate proactively.

> There are also mentions of "Orbit" apps, which users will be able to "deploy."

> "Your deployed Orbit apps. Pin favorites for quick access."

> OpenAI already has ChatGPT Pulse, while both Google and Perplexity are developing their own proactive assistants, too.

> There is a high chance it will be released as Max-only.

Thanks to @M1Astra and @btibor91 for the tips.