Yash Jangir

45 posts

@off_jangir

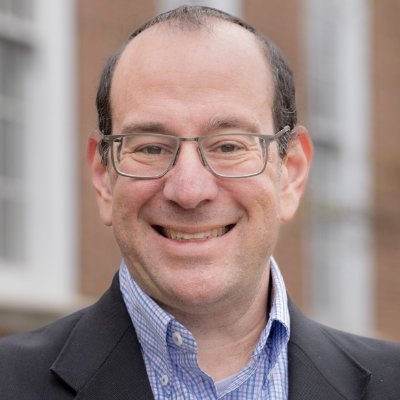

Currently MS Robotics @CarnegieMellon @CMU_robotics with @katerinafragiad and @ybisk | Incoming CS PhD @JHUCompSci with @mangahomanga

What if AI learned physics the way Newton did – by experiencing it? We built Sim2Reason: train LLMs inside virtual worlds governed by real physics laws, zero human annotation. Result: +5–10% improvement on International Physics Olympiad, zero-shot. 🧵

@tomssilver @GuanyaShi @QianqianWang5 Congratulations to @_krishna_murthy for being selected for our Build with Vega U Research Grant Program! His proposal: "Dynamic and Dextrous Manipulation by Autonomous Learning from Multisensory Data."

Why do generalist robotic models fail when a cup is moved just two inches to the left? It’s not a lack of motor skill; it’s an alignment problem. Today, we introduce VLS: Vision-Language Steering of Pretrained Robot Policies, a training-free framework that guides robot behavior in real time. Check out the project: vision-language-steering.github.io/webpage/ 👇🧵 (Watch till the end: VLS runs uncut, steering pretrained policies across long-horizon tasks.)

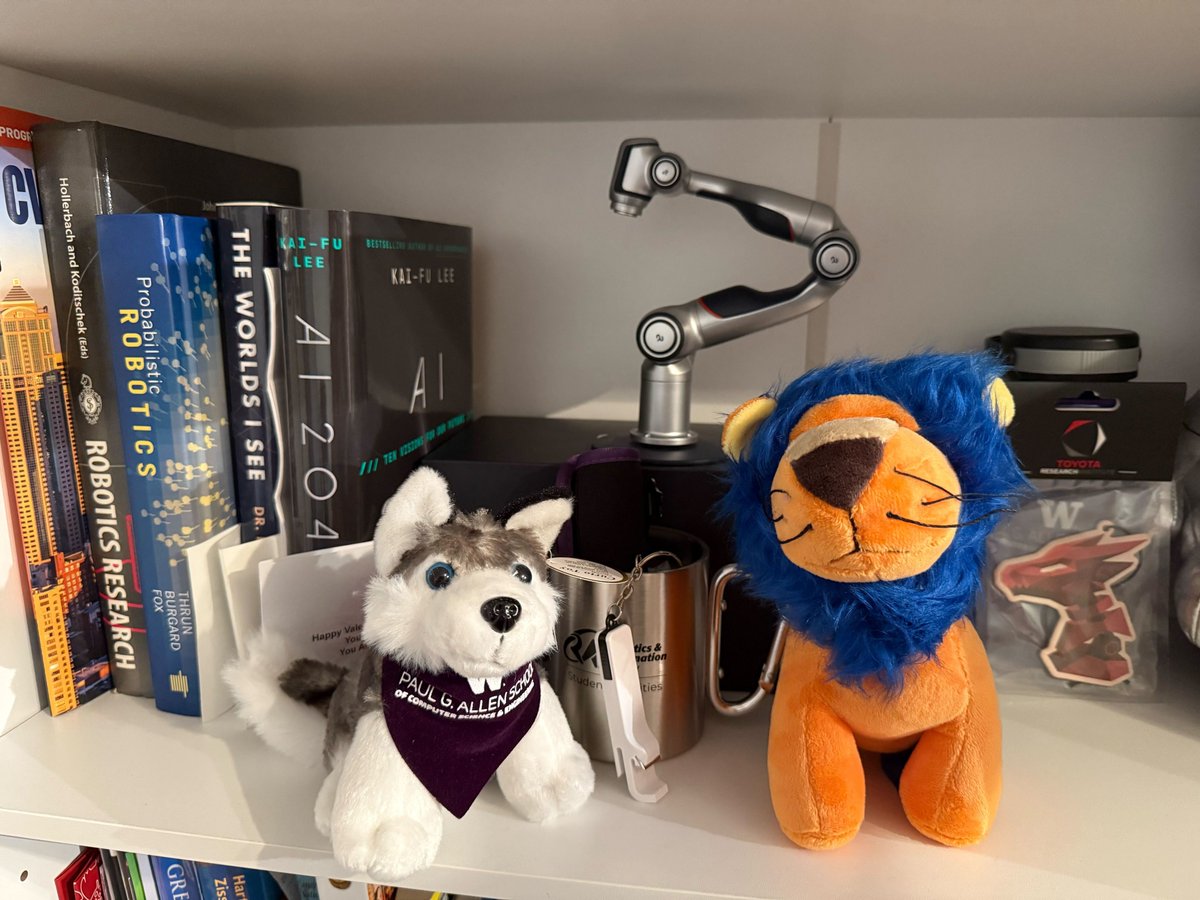

Our 3D Vision team (3DGR) is releasing Raiden — a data collection toolkit for YAM robots. Built for scalable, high-quality data: supports leader–follower + SpaceMouse teleop, multi-camera setups, and modern stereo depth (incl. TRI learned stereo). tri-ml.github.io/raiden/