Daniel Ho

@itsdanielho

Project Prometheus. Previously 1X, Waymo, Google X

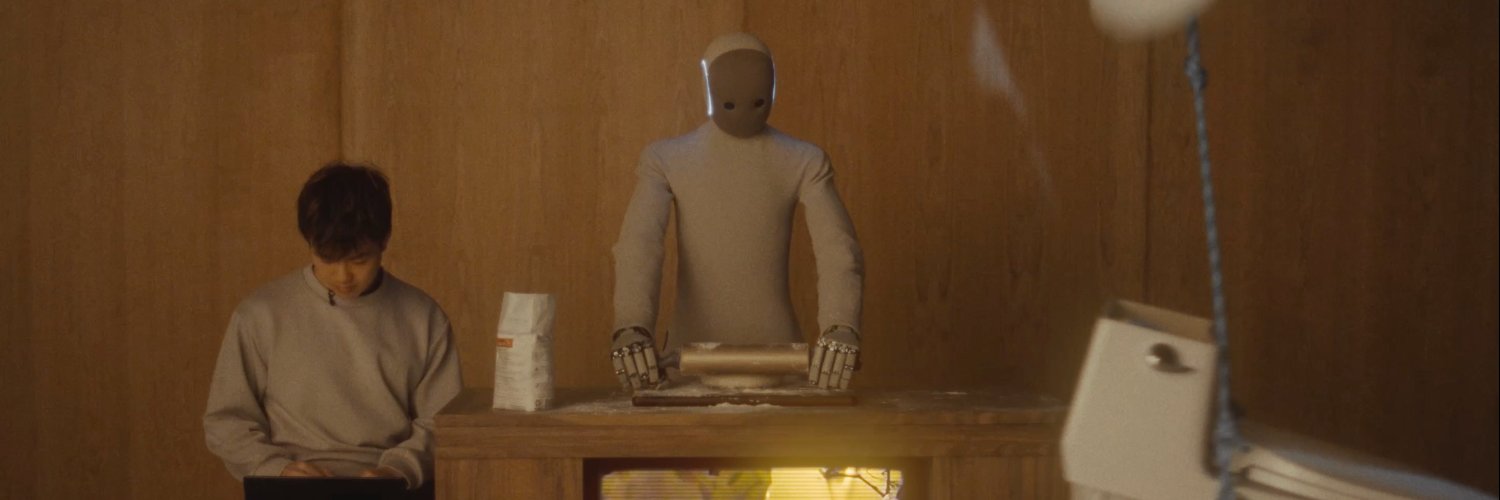

Check out this @RoboPapers pod for an overview of the past year of our world model research @1x_tech! We're very excited about world model architectures to achieve truly generalizable robot policies and evaluators. NEO will be able to zero-shot tasks in homes, learn rapidly with autonomy data, and predict how freshly baked models perform. This will usher in the era of home robots.

Every home is different. That means that to build a useful home robot, we must be able to perform zero-shot generalization on a wide range of tasks. Humanoid company @1x_tech has a solution: world models.

1X Director of Evaluations @itsdanielho joins us on RoboPapers to talk about:

- why world models are the future for scaling robot learning
- how to use world models for robot control
- what world models unlock for evaluating robot model performance
- how we can hill-climb from here to general purpose robots

Watch Episode #61 of RoboPapers, with @micoolcho and @chris_j_paxton, now!

One of many next steps at @1x_tech: preference learning for world-model-based policies. Given a generated starting frame, we can sample multiple video rollouts from our world model (WM) and use preference feedback to steer the model toward higher-quality behavior. This lets us fix policy failures in synthetic worlds, resolving bad NEO behaviors with generated dogs before we ever meet real ones.
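
To make the idea concrete, here is a minimal sketch of one common way to turn pairwise preference feedback over sampled rollouts into a training signal: a Bradley-Terry style loss. This is an illustration only, not 1X's method. The scalar rollout scores, the `preference_loss` and `pairwise_loss` names, and the (preferred, rejected) index pairs are all hypothetical stand-ins for whatever the real system uses.

```python
import math


def preference_loss(score_preferred: float, score_rejected: float) -> float:
    """Bradley-Terry negative log-likelihood that the preferred rollout wins.

    Scores are hypothetical scalars a model assigns to each video rollout.
    """
    margin = score_preferred - score_rejected
    # -log(sigmoid(margin)), written in a numerically stable form.
    if margin > 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


def pairwise_loss(rollout_scores, preferences):
    """Average loss over (preferred_idx, rejected_idx) pairs of rollouts."""
    losses = [
        preference_loss(rollout_scores[win], rollout_scores[lose])
        for win, lose in preferences
    ]
    return sum(losses) / len(losses)


# Hypothetical example: four rollouts sampled from the same starting frame,
# with feedback saying rollout 2 beats rollout 0 and rollout 3 beats rollout 1.
scores = [0.1, -0.4, 1.3, 0.9]
feedback = [(2, 0), (3, 1)]
loss = pairwise_loss(scores, feedback)
```

Minimizing this loss pushes the scores of preferred rollouts above those of rejected ones; how such scores would feed back into a world-model-based policy is outside what the post describes.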

NEO’s Starting to Learn on Its Own

Few notes…

- This is a whole new world. We have gone from a world where humanoid robots are constrained by teleop data collection to one where they can collect their own data, using a video backbone grounded in physics to generate pretty much any AI ability… try and digest that a bit.
- This IS NOT AGI, but it is an important step on the path there. We still have a lot of work to do before NEO has fully closed the loop and can truly teach itself anything you could ask. More updates soon.
- Now robot policies can improve alongside the rapid development in video models! This will make things move REALLY FAST.
- The world model team is one of my greatest inspirations at 1X. Since the day I started, Jack, Daniel, Christina, and now Lorand and Peter have been locked the fuck in for 2 years straight, till 3 am every day, on a completely contrarian bet that paid off big today… that is what it takes. It's never easy.