The Trainman

1K posts

The Trainman

@ohadmaor

Senior iOS Engineer @ https://t.co/fL9oXSwJFW , ex @AlibabaGroup, @ridewithvia. Developing features for the machines but mainly for the Merovingian.

you can outsource your thinking but you cannot outsource your understanding

Ex-MIT researcher Isaak Freeman quits his PhD and drops the 50,000 H100 GPU roadmap to emulate a full human brain. He mapped the entire path from 302-neuron worm to 86-billion-neuron human with connectomics costs now at 100 dollars per neuron and data acquisition via advanced microscopes as the only blocker left - digital humans just got a realistic timeline. pdf.isaak.net/thesis

I've seen a lot of posts complaining that AI is non-deterministic. This is true, but my experience is that AIs can be constrained to be very nearly deterministic. Some might say "very nearly" is not good enough. My response is that I believe I can crank up the constraints to reduce the uncertainty to below any given threshold. I'd also like to point out that the functioning of your body is based on the statistical non-deterministic behavior of random molecular motion. The second law of thermodynamics is statistical in nature and only approximately deterministic above a certain threshold. Indeed, our muscles and nerves would not function correctly if the second law was entirely deterministic. So, your heart beats, and your neurons fire, because of non-determinism. Non-determinism, properly constrained, is something we can all live with.

קלוד קוד מתחיל להגיע לי לאחרונה יותר מידי מהר למיצוי הטוקנים שלו גם בתוכנית ה-100 דולר. אני לאט לאט מתחיל להעביר חלק מהעבודות לCodex של OpenAI (יש לי מנוי ב-20 דולר). השאיפה היא גם לחזק עם מודלים מקומיים חזקים ולהוריד את התלות בקלוד. אעדכן.

🚨Shocking: A 25,000-task experiment just proved that the entire multi-agent AI framework industry is built on the wrong assumption. Every major framework - CrewAI, AutoGen, MetaGPT, ChatDev - starts from the same premise: assign roles, define hierarchies, let a coordinator distribute work. Researchers tested 8 coordination protocols across 8 models and up to 256 agents. The protocol where agents were given NO assigned roles, NO hierarchy, and NO coordinator outperformed centralized coordination by 14%. The gap between the best and worst protocol was 44%. That's not noise. That's a completely different outcome depending on how you organize the agents - not which model you use. Here's what makes this uncomfortable: When agents were simply given a fixed turn order and told "figure it out," they spontaneously invented 5,006 unique specialized roles from just 8 agents. They voluntarily sat out tasks they weren't good at. They formed their own shallow hierarchies - without anyone designing them. The researchers call it the "endogeneity paradox." The best coordination isn't maximum control or maximum freedom. It's minimal scaffolding - just enough structure for self-organization to emerge. But there's a catch nobody building agents wants to hear: below a certain model capability threshold, the effect reverses. Weaker models actually need rigid structure. Autonomy only works when the model is smart enough to use it. Which means every agent framework shipping with one-size-fits-all hierarchies is wrong twice - over-constraining strong models and under-constraining weak ones. The $2B+ invested in agent orchestration tooling may be solving a problem that capable models solve better on their own.

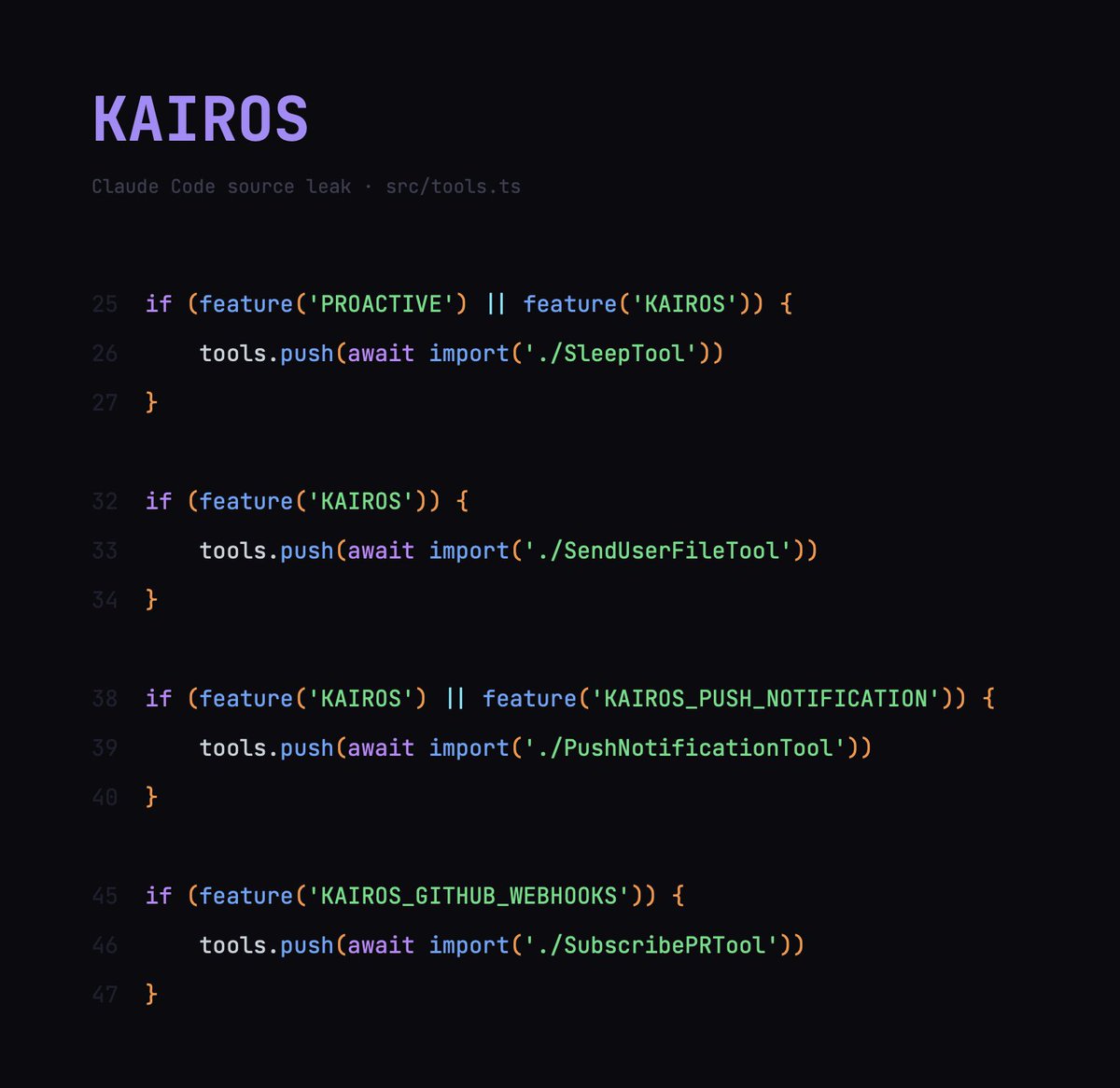

Claude code source code has been leaked via a map file in their npm registry! Code: …a8527898604c1bbb12468b1581d95e.r2.dev/src.zip

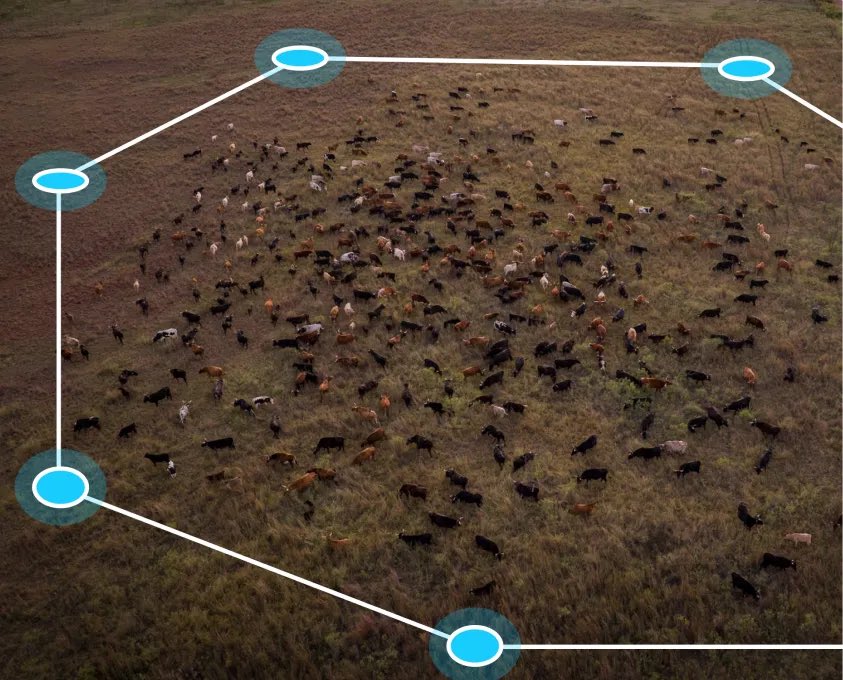

Peter Thiel’s Founders Fund is backing a company bringing AI to cow herding at a $2 billion valuation bloomberg.com/news/articles/…