Ole Tillmann retweeted

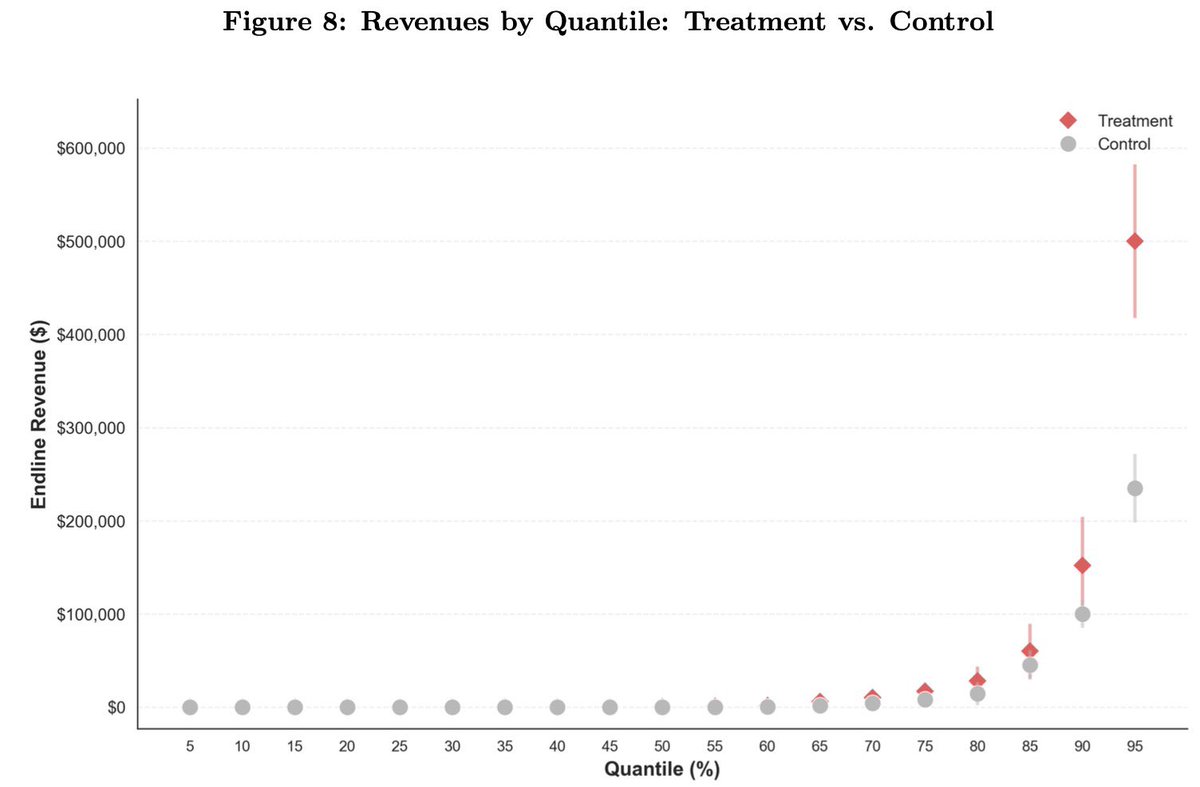

Big deal paper here: field experiment on 515 startups, half shown case studies of how startups are successfully using AI.

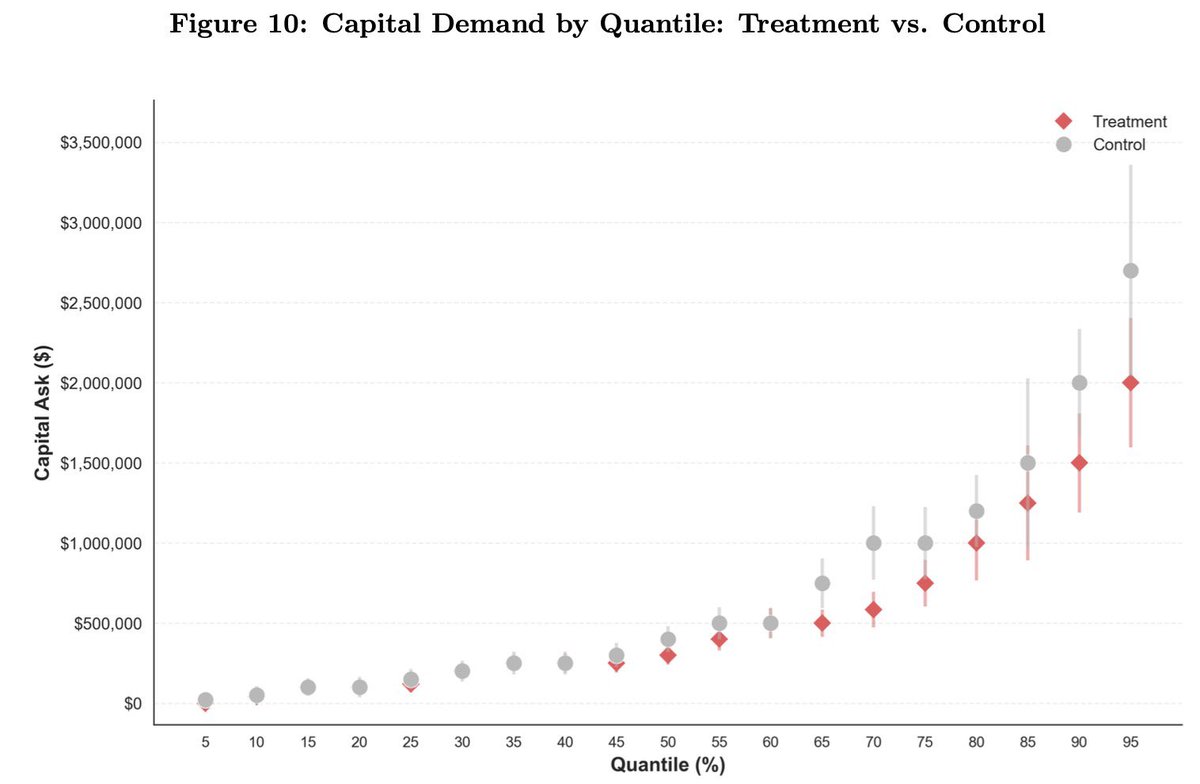

Those firms used AI 44% more, had 1.9x higher revenue, needed 39% less capital:

1) AI accelerates businesses

2) The challenge is understanding how to use it

Hyunjin Kim @hyunjinvkim

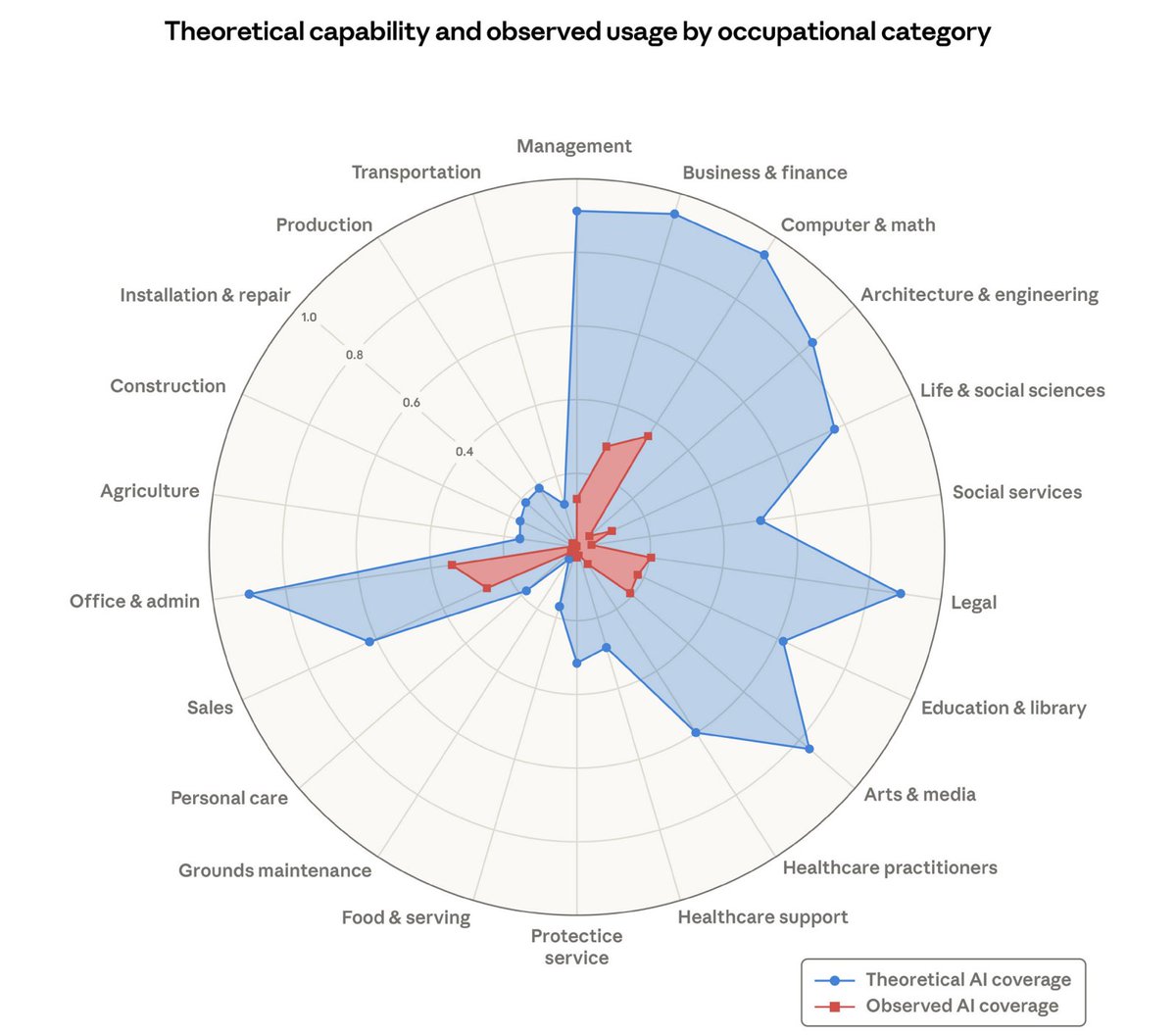

🚨 Excited to share a new working paper! 🚨 AI can improve individual tasks. But when does it improve firm performance? Our paper proposes one key friction firms face: the "mapping problem" -- discovering where and how AI creates value in a firm's production process. 🧵1/
