Pachu

954 posts

@pachu2120

Building AI agents at Meta. Opinions are my own.

Joined April 2019

81 Following · 39 Followers

“Our new agent sdk is an integrated subharness of the outer orchestration apparatus”

sunil pai @threepointone

coming up: the meta harness

@RokoMijic @coolguy6t9 @tomieinlove Imagine you must make this choice every month for 30 years. Is your answer still the same?

@pachu2120 @coolguy6t9 @tomieinlove Yes, your expected value is negative but it's still worth it.

Think of it like this: I offer you one of two gifts:

- 100% chance of a $1M payout

- 50% chance of a $2.5M payout or 50% chance of nothing

Which do you choose? The one with the bigger EV?
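The two gifts can be compared with a short Python sketch. By expected value, gift B wins ($1.25M vs. $1M). To show why someone might still take the sure $1M, the sketch also scores each gift by expected log-wealth. That is a standard risk-averse utility added here as an assumption, not something stated in the thread, and the starting-wealth figures are hypothetical.

```python
import math

# Each gift is a list of (probability, payout in dollars) outcomes.
GIFT_A = [(1.0, 1_000_000)]
GIFT_B = [(0.5, 2_500_000), (0.5, 0)]


def expected_value(gift):
    """Probability-weighted average payout."""
    return sum(p * x for p, x in gift)


def expected_log_wealth(gift, starting_wealth):
    """Expected log of final wealth (assumed risk-averse utility, not from the thread)."""
    return sum(p * math.log(starting_wealth + x) for p, x in gift)


if __name__ == "__main__":
    for w0 in (100_000, 10_000_000):  # hypothetical starting wealth levels
        for name, gift in (("A", GIFT_A), ("B", GIFT_B)):
            print(w0, name, expected_value(gift), round(expected_log_wealth(gift, w0), 3))
```

With a hypothetical $100,000 starting wealth, the sure $1M scores higher under log utility even though its EV is lower. With a hypothetical $10M starting wealth, gift B scores higher. Under this assumption, the "right" answer depends on how much of your total wealth is at stake.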

It doesn't really have anything to do with collective vs. individual outcomes. It's just that, given the odds of whatever event happening, the insurance is always priced unfairly relative to the odds, the cost of the event, the payout they will make, etc. If it were priced fairly, it would be a zero-profit business.
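The pricing argument can be made concrete with a small sketch. The loss probability, payout, and 25% loading below are hypothetical numbers chosen only for illustration. A premium equal to the expected claim gives the insurer zero expected profit. Any loading on top of that is the insurer's expected profit and, equivalently, the policyholder's expected monetary loss.

```python
def fair_premium(loss_probability, payout):
    """Premium at which the insurer's expected profit is exactly zero."""
    return loss_probability * payout


def insurer_expected_profit(premium, loss_probability, payout):
    """Premium collected minus expected claim paid, per policy per period."""
    return premium - loss_probability * payout


if __name__ == "__main__":
    # Hypothetical policy: 1% yearly chance of a $200,000 loss.
    p, payout = 0.01, 200_000
    fair = fair_premium(p, payout)
    charged = fair * 1.25  # assumed 25% loading for costs and profit
    print("fair premium:", fair, "insurer EV:", insurer_expected_profit(fair, p, payout))
    print("charged premium:", charged, "insurer EV:", insurer_expected_profit(charged, p, payout))
```

The policyholder's expected monetary result is the negative of the insurer's expected profit. So in pure dollar-EV terms, buying a loaded policy always loses on average, which is the point being made here.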

@pachu2120 @trainer_paradox @RokoMijic @coolguy6t9 @tomieinlove The difference is: a collective is predictable, a single person is not. One person only has one life and shouldn't just gamble on not going broke for the rest of it.

Yeah, I mean, most people don't make choices that are mathematically optimal. I would also take that choice, and I also have insurance, but I don't think it's the mathematically correct choice. It's hard to make the most logical possible choice when your life is at stake. It's probably why insurance is a great business.

@RokoMijic @coolguy6t9 @tomieinlove But your expected value would be negative, based on the premium vs. the risk of something bad actually happening, no? Every competent insurance company has done the math on EV and priced policies so that the EV is positive for them.

@coolguy6t9 @tomieinlove It helps you because if the bad thing happens you get paid out

Yes, it's an analogy comparing two totally different systems, I get it. But Dario's original point was that if you're gonna compare the development of an LLM to a human, comparing over just the lifetime of a single human is not enough, since much of human intelligence is shaped by the process of evolution.

@pachu2120 @TrueAIHound It's an analogy. Obviously I don't mean it's literally like a newborn baby's brain.

According to Amodei, LLM models start from scratch (blank slates) with random weights.

Dude, please. 🙄

No, they don't. LLMs start out preprogrammed with millions of tokens (compiled from texts created by humans) when released into the world. Humans are as close to blank slates as can be, with just enough genetic programming (such as breathing, crying, sucking and swallowing) to ensure survival.

Evolution did not pretrain the human brain to learn how to read, ride a bicycle and program computers. We learn almost everything from scratch, including eye-tracking, reaching, grasping, walking, running, etc.

Don't make excuses for your lame AI that massively cheats by using millions of human beings as text preprocessors and still has no understanding of what it's saying.

Unless your AI can use its sensors and effectors to learn everything in the real world, it's not intelligent. It's just computer automation. 🤦‍♂️

vitrupo @vitrupo

Dario Amodei says pre-training sits somewhere between learning and evolution. Humans inherit priors shaped over millions of years. LLMs start as random weights and distill trillions of tokens into those priors. We describe them using human learning metaphors. But the analogy only goes so far.

@ADavs79 @TrueAIHound LLMs without pretraining are not like newborn babies; they are like a collection of neurons in a petri dish. A baby's brain is a much more complex and nuanced structure at birth than an LLM before any pretraining.

@TrueAIHound @pachu2120 LLMs without pretraining are like a newborn baby. Then they live an entire lifetime during their training.

What is your logical basis for stating an LLM is "born" at deployment? Is each checkpoint "born"? If you add more training to a checkpoint is it "born again"? 😂😂

The point is that these comparisons are meaningless because they are intelligences distilled in completely different ways with completely different nature.

The point being made is that comparing them apples to apples doesn't map cleanly, and if you are considering the "compute" and time required to create human intelligence you must also factor in evolution.

An LLM is born when it's deployed. It is preprogrammed with tons of human-created data. After deployment, it can't learn. Its programming is fixed.

Babies are not pretrained about anything in the world. They have a brain structure that allows them to learn everything from scratch. They even have to learn to see and hear.

LLMs have zero intelligence. Amodei is a con artist.

@llmDestructor @TrueAIHound Dario's opinion was that pre-training is akin to a mix of both evolution and conception in the womb, and probably parts of infancy as well. It doesn't map clearly to a learning stage in the human lifespan. You're entitled to your own opinion.

@pachu2120 @TrueAIHound This is stupid, your differentiation in the womb is akin to pretraining.

Dario is not talking about when the LLM is deployed. He is saying that starting from the inception of the model before even 1 byte of data is fed into it, is more comparable to a combination of human evolution + early years of learning, rather than comparing it to just how quickly/how much data is needed for a newborn baby to learn from scratch. His point is that if you are comparing the energy + time costs of creating a model from scratch, the apt comparison to the human side should include evolution.

@pachu2120 Yes, the human brain has a structure that allows it to learn anything in the real world. We have almost zero knowledge of anything at birth. LLMs have tons of trained tokens when deployed.

Blank slate means no knowledge or pretraining. It doesn't mean no structure.

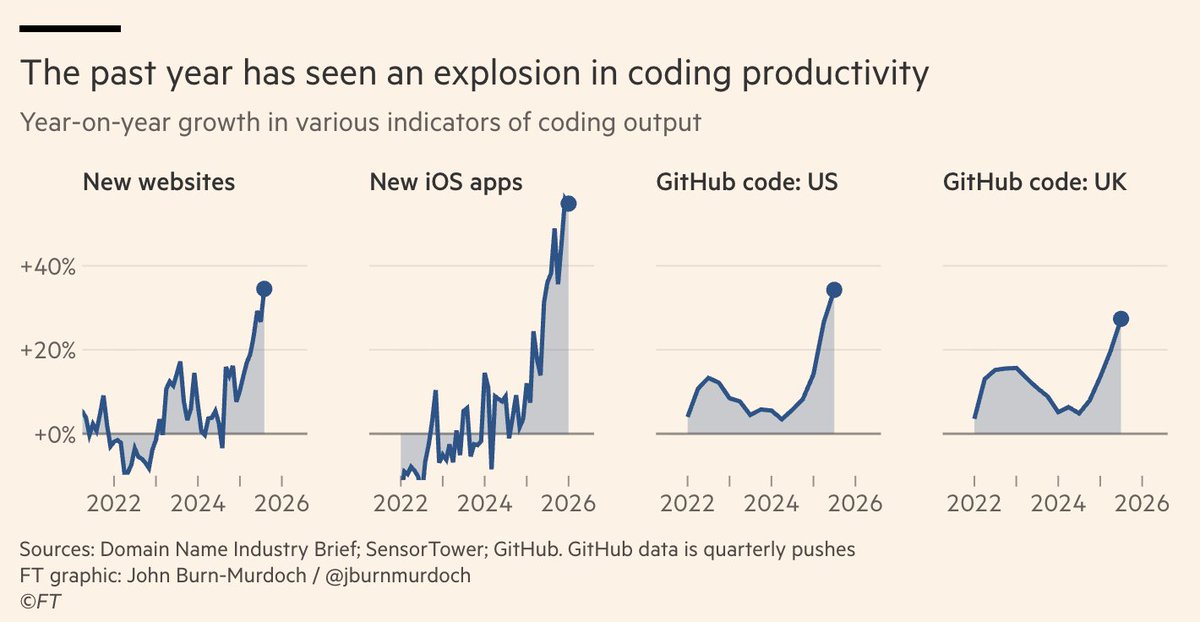

@xeophon @zephyr_z9 It’s true. Most people don’t promote their websites and apps on X, it’s a horrible platform for discovery.

@iamwaynechi Interesting. I think LLMs could become incredible at game development given the ability to ingest real-time video input while providing real-time tool call outputs. That way they can "play" the game the same way a human does, but current models seem far from being able to do that...

@pachu2120 It definitely increases cost so there's a tradeoff. Most of the time the model turns it into frames via python and just ingests a few images rather than the entire video.

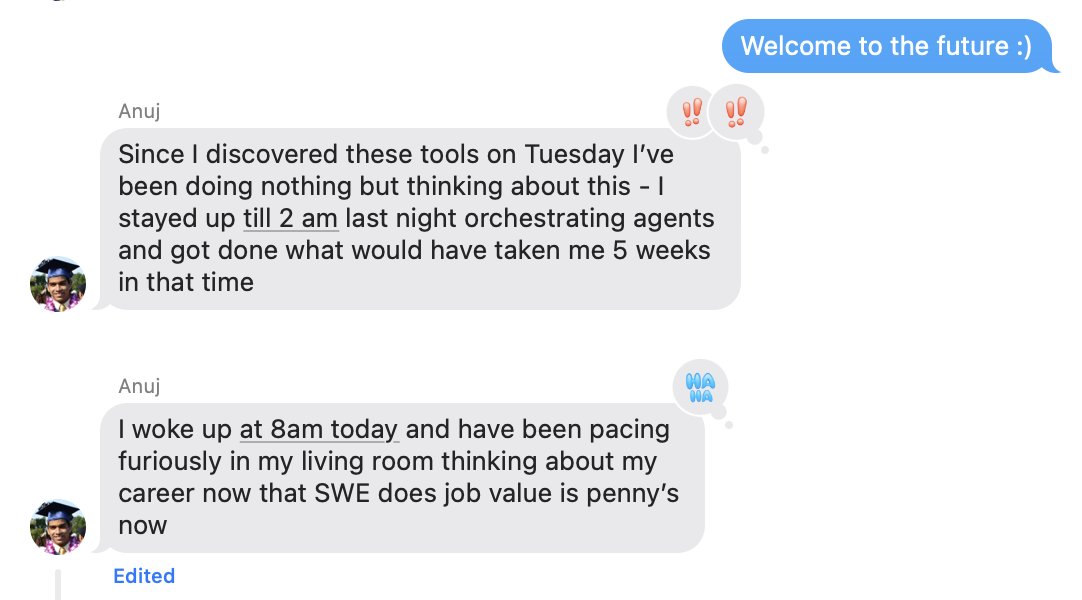

Appropriate reaction from my brother, a Senior SWE at Meta, who just got access to frontier AI coding tools.

If you’ve used them every day for the last few years, progress might feel more gradual.

If you go from nothing → Codex / Cursor / Claude Code overnight… that jump must be absolutely wild.

@rohanvarma Nah, it's not. We have had all 3P agents (cc/codex/gemini/cursor) available internally since October. 1P coding agents (using 3P models) perform even better on internal code than the 3P agents, imo (as someone who used the 3P agents for quite some time).

@pachu2120 Yea, seems like it is very far behind frontier capabilities because my brother had been using it for a while too but only now had this revelation haha
