0xHM (e/acc)

@panaikk

Building Memory Layer | Muay Thai @EthPadThai | Add meaning to tech | @AllianceDAO ALL7 | Flag Football Athlete 🏈 | prev: Found @EdgeProtocol | @AlphaFinanceLab

This is the story of Hyperliquid, the most profitable startup per employee on earth, told from a guarded office in Singapore. Last year, its team of 11 generated $900 million in profit. It's 3 years old, has never taken a dollar of venture capital, and is beginning to change how century-old markets work.

Its founder, Jeffrey Yan (@chameleon_jeff), had never taken a physics class when he picked up a textbook at 16. Two years later, he won gold at the International Physics Olympiad. In 2019, he started trading with $10,000 from a living room in Puerto Rico, working off a television because he didn't own a monitor. Within 3 years, he was running one of the largest anonymous crypto trading firms. Then he shut it down.

Yan was rich and free, but he had spent years inside crypto, watching it betray itself. Bitcoin's central premise was decentralization. Yet the biggest exchanges were centralized. Crypto kept reintroducing the dependence on trust it was built to eliminate. He set out to create what should have existed.

Hyperliquid is a blockchain with a trading exchange on top, and anyone can build on it. Yan's vision is to house all of finance. In 3 years, it has done over $4 trillion in volume. And in the past few months, it has begun to outgrow crypto. Markets for oil, silver, and the S&P 500 now trade on Hyperliquid around the clock, weekends included, and are growing roughly 40% week on week. When the US and Israel bombed Iran on a Saturday in February, Hyperliquid was the venue traders turned to.

Hyperliquid's success has cost Yan his freedom. He works out of a secret office in Singapore and cannot travel without two bodyguards. Even the team's housekeeper doesn't know what they do.

In January, @domcooke spent a week at their office. Read his profile on Yan and @HyperliquidX below.

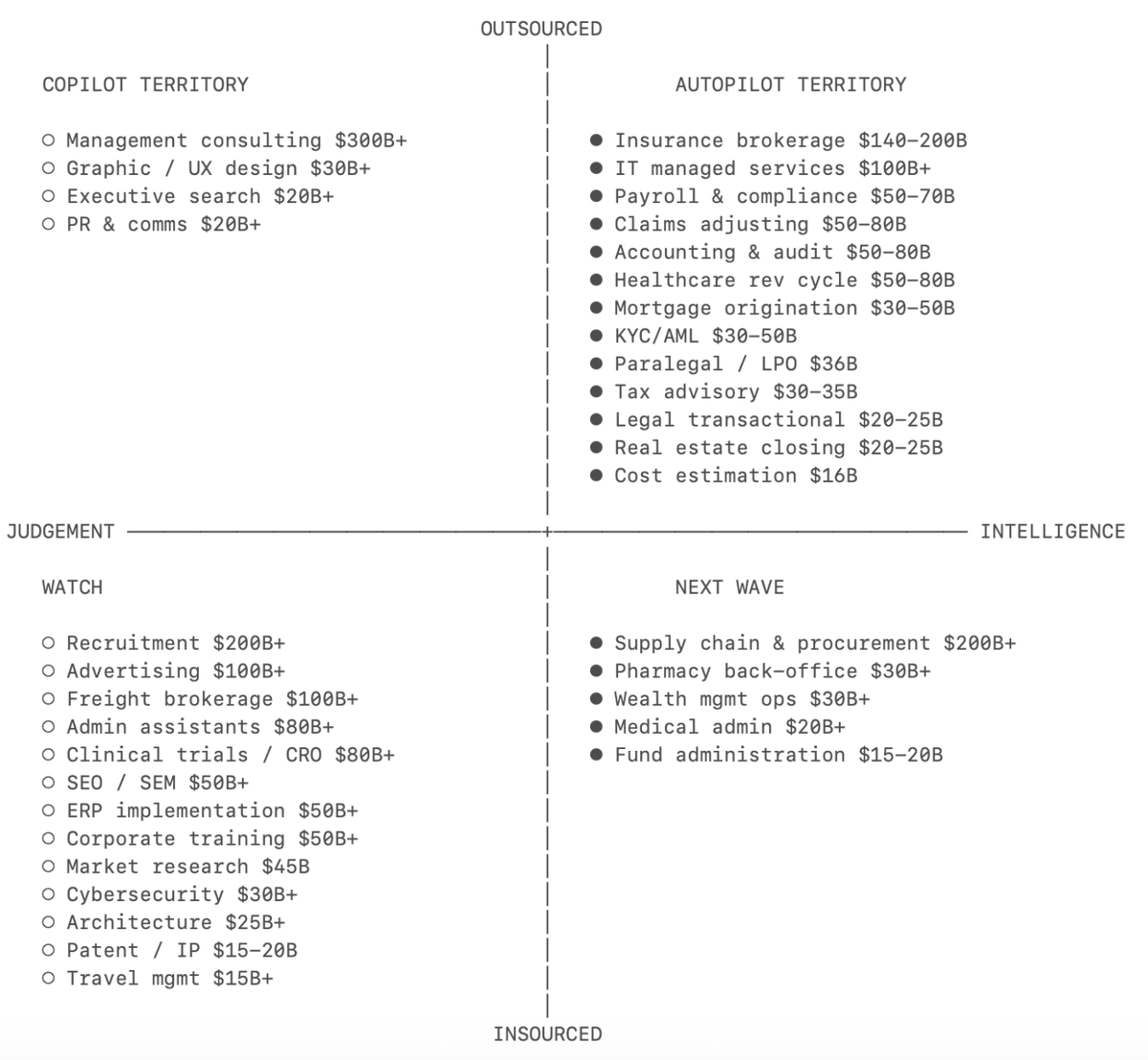

Secondary markets for compute will be enormous.

Enterprises already do this with GPU infrastructure:
i) buy capacity in bulk at contracted rates
ii) use what you need
iii) resell surplus at a margin

AWS even has a secondary marketplace for unused reserved instances. Token-gated inference networks create the same imbalance:
a) Supply side: large holders get allocations they don't burn through
b) Demand side: teams building fine-tuned models, RAG pipelines (retrieval-augmented generation: pipelines that retrieve and reference external data to improve a model's outputs), and adapter layers on top of base inference all need cheap compute to produce enhanced outputs for specific verticals

One side's surplus feeds the other side's insatiable appetite for affordable inference. As more networks allocate compute through token ownership, this pattern will repeat.

The @AskVenice DIEM token is pioneering ways to optimize compute usage with token models. Hats off to @ErikVoorhees and the VVV team for enabling this. You are seeing entirely new intelligence markets emerge.

LLM Knowledge Bases

Something I'm finding very useful recently: using LLMs to build personal knowledge bases for various topics of research interest. In this way, a large fraction of my recent token throughput is going less into manipulating code, and more into manipulating knowledge (stored as markdown and images). The latest LLMs are quite good at it. So:

Data ingest: I index source documents (articles, papers, repos, datasets, images, etc.) into a raw/ directory, then I use an LLM to incrementally "compile" a wiki, which is just a collection of .md files in a directory structure. The wiki includes summaries of all the data in raw/, backlinks, and then it categorizes data into concepts, writes articles for them, and links them all. To convert web articles into .md files I like to use the Obsidian Web Clipper extension, and then I also use a hotkey to download all the related images locally so that my LLM can easily reference them.

IDE: I use Obsidian as the IDE "frontend" where I can view the raw data, the compiled wiki, and the derived visualizations. Important to note that the LLM writes and maintains all of the data of the wiki; I rarely touch it directly. I've played with a few Obsidian plugins to render and view data in other ways (e.g. Marp for slides).

Q&A: Where things get interesting is that once your wiki is big enough (e.g. mine on some recent research is ~100 articles and ~400K words), you can ask your LLM agent all kinds of complex questions against the wiki, and it will go off, research the answers, etc. I thought I had to reach for fancy RAG, but the LLM has been pretty good about auto-maintaining index files and brief summaries of all the documents, and it reads all the important related data fairly easily at this ~small scale.

Output: Instead of getting answers in text/terminal, I like to have it render markdown files for me, or slide shows (Marp format), or matplotlib images, all of which I then view again in Obsidian. You can imagine many other visual output formats depending on the query. Often, I end up "filing" the outputs back into the wiki to enhance it for further queries. So my own explorations and queries always "add up" in the knowledge base.

Linting: I've run some LLM "health checks" over the wiki to e.g. find inconsistent data, impute missing data (with web searches), find interesting connections for new article candidates, etc., to incrementally clean up the wiki and enhance its overall data integrity. The LLMs are quite good at suggesting further questions to ask and look into.

Extra tools: I find myself developing additional tools to process the data, e.g. I vibe coded a small and naive search engine over the wiki, which I sometimes use directly (in a web UI), but more often hand off to an LLM via CLI as a tool for larger queries.

Further explorations: As the repo grows, the natural desire is to also think about synthetic data generation + finetuning to have your LLM "know" the data in its weights instead of just context windows.

TLDR: raw data from a given number of sources is collected, then compiled by an LLM into a .md wiki, then operated on by various CLIs by the LLM to do Q&A and to incrementally enhance the wiki, and all of it is viewable in Obsidian. You rarely ever write or edit the wiki manually; it's the domain of the LLM. I think there is room here for an incredible new product instead of a hacky collection of scripts.
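The "Extra tools" step is the most code-shaped part of this workflow, but the post doesn't share the author's search engine. Below is a minimal, hypothetical sketch of what a small, naive search over a folder of .md files could look like when exposed as a CLI that an LLM agent can call. It assumes the wiki is a directory of Markdown files (the default folder name `wiki`, the script name, and the flags are all assumptions) and uses plain TF-IDF keyword scoring rather than embeddings or RAG.

```python
"""Naive TF-IDF search over a folder of Markdown files.

Hypothetical sketch: not the post author's tool. The default folder
name "wiki" and the CLI flags are assumptions for illustration.
"""
import argparse
import math
import re
from collections import Counter
from pathlib import Path

TOKEN_RE = re.compile(r"[a-z0-9]+")


def tokenize(text: str) -> list[str]:
    # Lowercase, then keep runs of letters/digits as tokens.
    return TOKEN_RE.findall(text.lower())


def load_wiki(root: Path) -> dict[str, Counter]:
    # Map every .md file under root (POSIX-style relative path) to its term counts.
    return {
        path.relative_to(root).as_posix(): Counter(tokenize(path.read_text(encoding="utf-8")))
        for path in sorted(root.rglob("*.md"))
    }


def search(docs: dict[str, Counter], query: str, k: int = 5) -> list[tuple[str, float]]:
    # Score each document by summing length-normalized TF times smoothed IDF over query terms.
    n = len(docs)
    scores: dict[str, float] = {}
    for term in set(tokenize(query)):
        df = sum(1 for counts in docs.values() if term in counts)
        if df == 0:
            continue  # term appears in no document, so it adds nothing
        idf = math.log(1 + n / df)  # rarer terms weigh more; always > 0
        for name, counts in docs.items():
            if term in counts:
                tf = counts[term] / sum(counts.values())
                scores[name] = scores.get(name, 0.0) + tf * idf
    # Highest score first; keep the top k.
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)[:k]


def main() -> None:
    parser = argparse.ArgumentParser(description="Naive search over a Markdown wiki.")
    parser.add_argument("query", nargs="*", help="free-text query terms")
    parser.add_argument("--root", default="wiki", help="wiki directory (assumed name)")
    parser.add_argument("-k", type=int, default=5, help="max results to print")
    args = parser.parse_args()
    if not args.query:
        parser.print_help()  # no query given: show usage instead of failing
        return
    docs = load_wiki(Path(args.root))
    # One tab-separated line per hit, easy for a human or an LLM agent to parse.
    for name, score in search(docs, " ".join(args.query), args.k):
        print(f"{score:.4f}\t{name}")


if __name__ == "__main__":
    main()
```

Run it as, e.g., `python wiki_search.py attention sinks --root wiki -k 5` (hypothetical file name). Each result line is `score<TAB>path`, plain text an agent can read before opening the matching articles. Rescanning every file per query is deliberately naive; at the ~100-article scale mentioned in the post it should still be cheap.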

Vibe coding is more addictive than any video game ever made (if you know what you want to build).

🚨 Simulation Theory: The Double Slit Experiment proves particles act like waves until observed, then snap into particles. What if our reality only "renders" when we're looking, just like a video game optimizing resources? Check out this episode from The Why Files breaking it down and tying it to Simulation Theory. Are we in a sim? This could be the key to unlocking the true nature of existence! The Why Files video did a great job of explaining the Double Slit Experiment & Simulation Theory. What do YOU think: real or rendered? Drop your thoughts below!