Preston Badeer

2.2K posts

@pbadeer

I post about the intersection of 🦾AI, 🤖LLMs, 📊data products, and 📈data engineering.

OK, I increased the recurring investment to $10,000/week. The only reason I don't go all-in with $600,000 is this: this money is the fruit of 7 years of entrepreneurship failures. If the market crashed tomorrow, I wouldn't be able to sleep. I'm going to invest almost everything I earn in the SP500 because it has historically paid off over the long run. I'll just do it over the course of 365 days to spread out the risk.

.@Microsoft just dropped TinyTroupe! Described as "an experimental Python library that allows the simulation of people with specific personalities, interests, and goals." These agents can listen, reply back, and go about their lives in simulated TinyWorld environments.

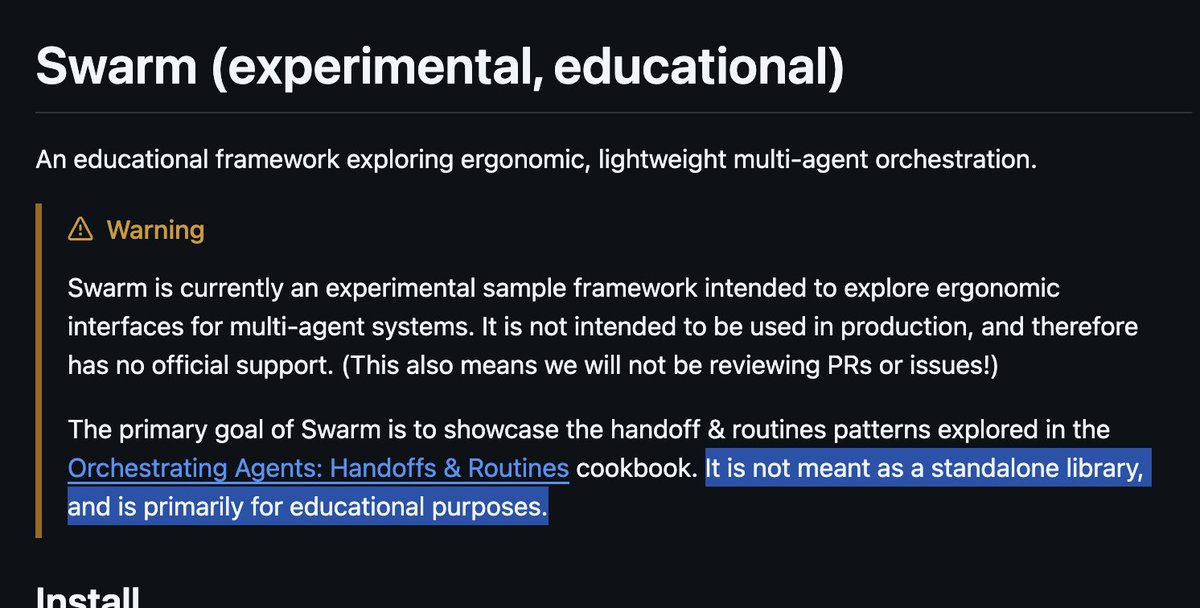

This came unexpectedly! @OpenAI released Swarm, a lightweight library for building multi-agent systems. Swarm provides a stateless abstraction to manage interactions and handoffs between multiple agents and does not use the Assistants API. 🤔
How it works:
1️⃣ Define Agents, each with its own instructions, role (e.g., "Sales Agent"), and available functions (which will be converted to JSON structures).
2️⃣ Define logic for transferring control to another agent based on conversation flow or specific criteria within agent functions. A handoff is achieved by simply returning the next agent to call from the function.
3️⃣ Context Variables provide initial context and are updated throughout the conversation to maintain state and share information between agents.
4️⃣ Client run() initiates and manages the multi-agent conversation. It needs an initial agent, user messages, and context, and returns a response containing updated messages, context variables, and the last active agent.
Insights:
🔄 Swarm manages a loop of agent interactions, function calls, and potential handoffs.
🧩 Agents encapsulate instructions, available functions (tools), and handoff logic.
🔌 The framework is stateless between calls, offering transparency and fine-grained control.
🛠️ Swarm supports direct Python function calling within agents.
📊 Context variables enable state management across agent interactions.
🔄 Agent handoffs allow for dynamic switching between specialized agents.
📡 Streaming responses are supported for real-time interaction.
🧪 The framework is experimental. Maybe to collect feedback?
🔧 Flexible and works with any OpenAI-compatible client, e.g., Hugging Face TGI or vLLM-hosted models.
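
The handoff pattern described in the post above can be sketched in plain Python. This is a toy illustration only, not the Swarm API: the `Agent`, `Response`, and `run` names here are hypothetical stand-ins, and each agent's `route` function is an assumption that replaces the LLM's decision to answer or hand off. It shows a stateless loop where a function returns the next agent to transfer control, and where context variables are passed in and returned rather than stored.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Union


@dataclass
class Agent:
    """Hypothetical agent: a name, instructions, and a routing function.

    `route` stands in for the model's tool call. It returns either a reply
    string or another Agent, which signals a handoff.
    """
    name: str
    instructions: str
    route: Callable[[str, dict], Union["Agent", str]]


@dataclass
class Response:
    messages: List[dict]
    context_variables: dict
    agent: Agent  # the last active agent


def run(agent: Agent, messages: List[dict],
        context_variables: Optional[dict] = None, max_turns: int = 5) -> Response:
    """Run one stateless conversation turn, following handoffs."""
    history = list(messages)                 # copy, so caller state is untouched
    context = dict(context_variables or {})  # same for the context
    active = agent
    user_text = history[-1]["content"]
    for _ in range(max_turns):
        result = active.route(user_text, context)
        if isinstance(result, Agent):
            # Handoff: the function returned the next agent to call.
            context["handoffs"] = context.get("handoffs", 0) + 1
            active = result
            continue
        history.append({"role": "assistant", "sender": active.name, "content": result})
        break
    return Response(history, context, active)


def sales_step(text: str, ctx: dict) -> str:
    return f"Hi {ctx.get('user_name', 'there')}, let me help you with pricing."


sales = Agent("Sales Agent", "Handle pricing questions.", sales_step)


def triage_step(text: str, ctx: dict) -> Union[Agent, str]:
    # Transfer control when the message is about pricing.
    return sales if "price" in text.lower() else "How can I help?"


triage = Agent("Triage Agent", "Route the user to the right agent.", triage_step)

user_messages = [{"role": "user", "content": "What is the price?"}]
response = run(triage, user_messages, {"user_name": "Ada"})
print(response.agent.name, "|", response.messages[-1]["content"])
```

Because `run` copies its inputs and returns everything the caller needs for the next call, nothing is kept between calls, which is the stateless property the post highlights.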

uv 0.4.0 is out now 🚢🚢🚢 It includes first-class support for Python projects that aren't intended to be built into Python _packages_, which is common for web applications, data science projects, etc.

I'm excited to share that we've built the world's most capable AI software engineer, achieving 30.08% on SWE-Bench – ahead of Amazon and Cognition. This model is so much more than a benchmark score: it was trained from the start to think and behave like a human SWE.

What a massive week for Open Source AI: We finally managed to beat closed source fair and square!
1. Meta Llama 3.1 405B, 70B & 8B: The latest in the Llama series, this version (base + instruct) comes with multilingual (8 languages) support, a 128K context, and an even more commercially permissive license. The best part: 405B beats GPT-4o/mini fair and square! Bonus: Meta posted a banger of a tech report with quite a lot of detail, including on upcoming (?) multimodal (image/audio/video) work.
2. Mistral dropped Large 123B: Dense, multilingual (12 languages), and 128K context. Comes as an instruct-only model checkpoint, with performance below Llama 3.1 405B but above Llama 3.1 70B. Released under a non-commercial license.
3. Nvidia released Minitron distilled 4B & 8B: Apache 2.0 license, 256K vocab, with the student beating the teacher by 16% on MMLU. Uses iterative pruning and distilling to achieve SoTA! The real question: Who is distilling 405B right now? ;)
4. InternLM shared Step Prover 7B: SoTA on Lean, trained on GitHub repos with large-scale formal data. Achieves 48.8 pass@1, 54.5 pass@64. They released the dataset, tech report, and the fine-tuned InternLM math plus model checkpoint.
5. CofeAI dropped Chonky TeleFM 1T: A one-trillion-parameter dense model trained on 2T tokens, bilingual (Chinese and English), Apache 2.0 licensed, with a tech report. They use a novel progressive upsampling approach.
Stability dropped Sv4D, Nvidia released MambaVision, SakanaLabs with Evo (merging + stable diffusion), and more.
This was a landmark week, and I'm personally quite happy with the direction of open source AI/ML! Did I miss anything interesting? Drop it in the comments! 🤗

Huge congrats to @AIatMeta on the Llama 3.1 release! A few notes:
Today, with the 405B model release, is the first time that a frontier-capability LLM is available to everyone to work with and build on. The model appears to be GPT-4 / Claude 3.5 Sonnet grade and the weights are open and permissively licensed, including commercial use, synthetic data generation, distillation and finetuning. This is an actual, open, frontier-capability LLM release from Meta. The release includes a lot more, e.g. a 92-page PDF with a lot of detail about the model: ai.meta.com/research/publi…
The philosophy underlying this release is in this longread from Zuck, well worth reading as it nicely covers all the major points and arguments in favor of the open AI ecosystem worldview: "Open Source AI is the Path Forward" facebook.com/4/posts/101157…
I like to say that it is still very early days, that we are back in the ~1980s of computing all over again, that LLMs are a next major computing paradigm, and Meta is clearly positioning itself to be the open ecosystem leader of it.
- People will prompt and RAG the models.
- People will finetune the models.
- People will distill them into smaller expert models for narrow tasks and applications.
- People will study, benchmark, optimize.
Open ecosystems also self-organize in modular ways into products, apps, and services, where each party can contribute their own unique expertise. One example from this morning is @GroqInc, who built a new chip that inferences LLMs *really fast*. They've already integrated Llama 3.1 models and appear to be able to inference the 8B model ~instantly: x.com/karpathy/statu…
And (I can't seem to try it due to server pressure) the 405B running on Groq is probably the highest capability, fastest LLM today (?).
Early model evaluations look good: ai.meta.com/blog/meta-llam… x.com/alexandr_wang/…
Pending still is the "vibe check", look out for that on X / r/LocalLlama over the next few days (hours?).
I expect the closed model players (which imo have a role in the ecosystem too) to give chase soon, and I'm looking forward to that. There's a lot to like on the technical side too, w.r.t. multilingual, context lengths, function calling, multimodal, etc. I'll post about some of the technical notes a bit later, once I make it through all the 92 pages of the paper :)