Physics of Complex Systems Lab

@pcsl_epfl

Physics of Complex Systems Laboratory @EPFL. Statistical mechanics and theory of machine learning. Led by Prof. Matthieu Wyart. Profile run by students.

❓ How do LLMs learn hierarchical structure from sentences alone? 🚨 We build PCFG-like synthetic datasets with two knobs---hierarchy + ambiguity---and derive a correlation-based learning mechanism that predicts the sample complexity of deep nets. Results 👇

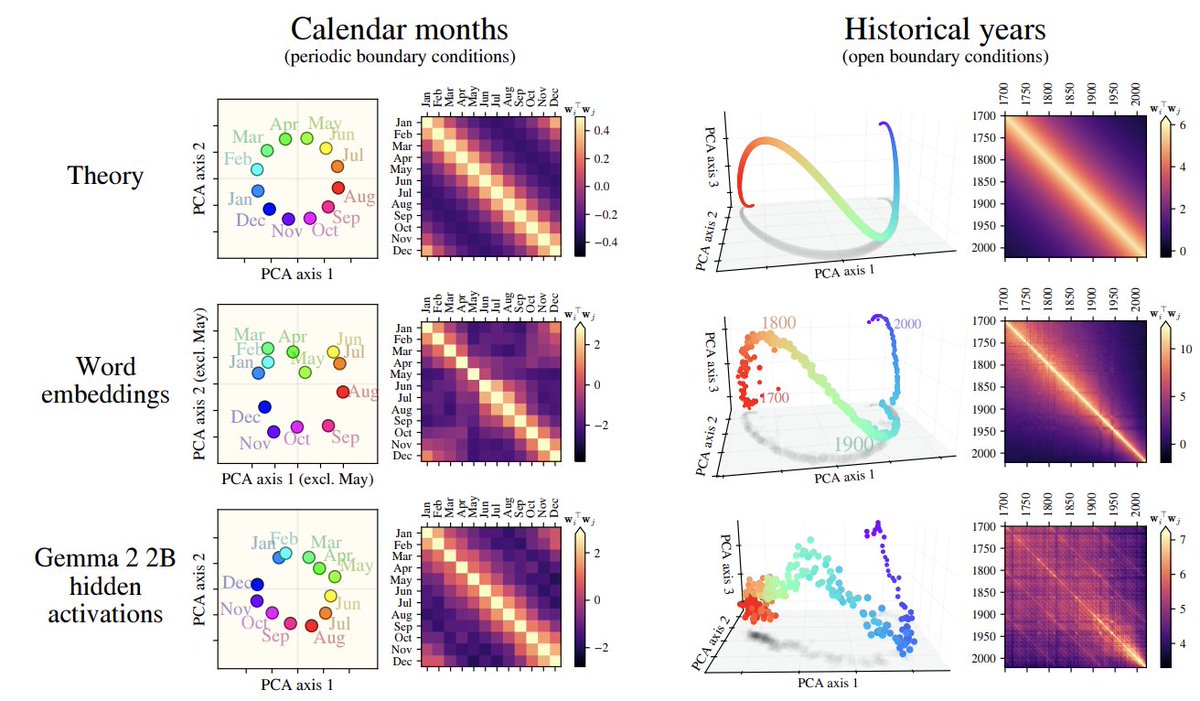

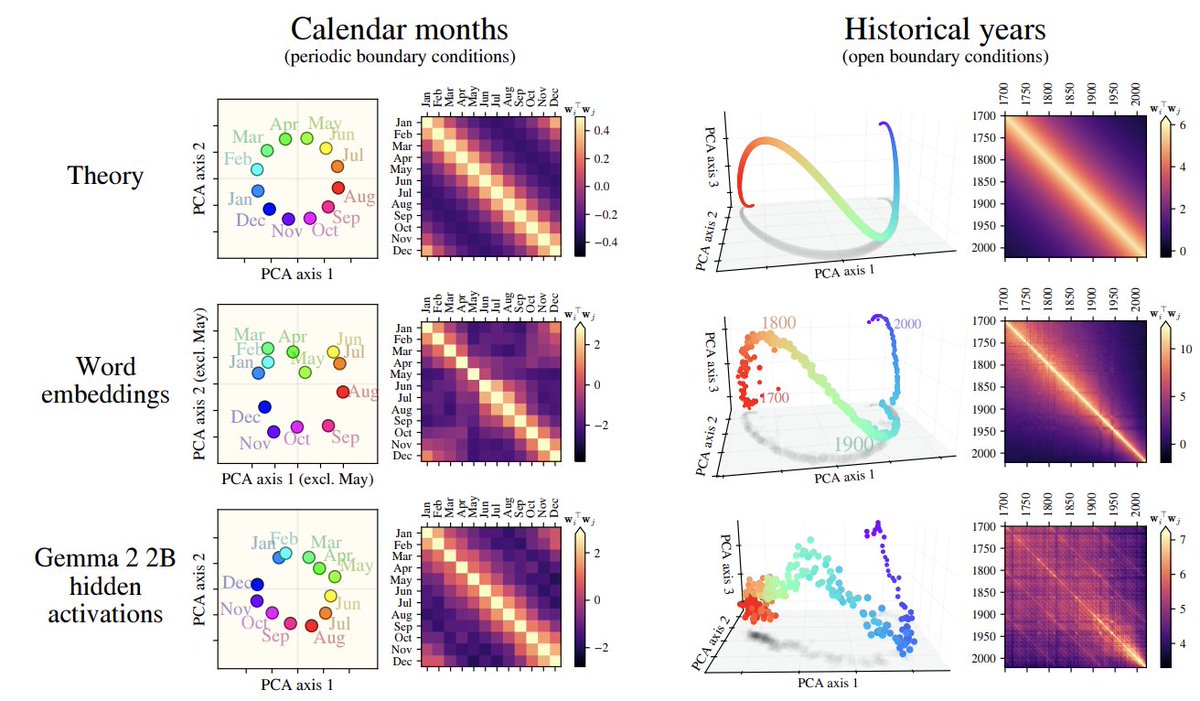

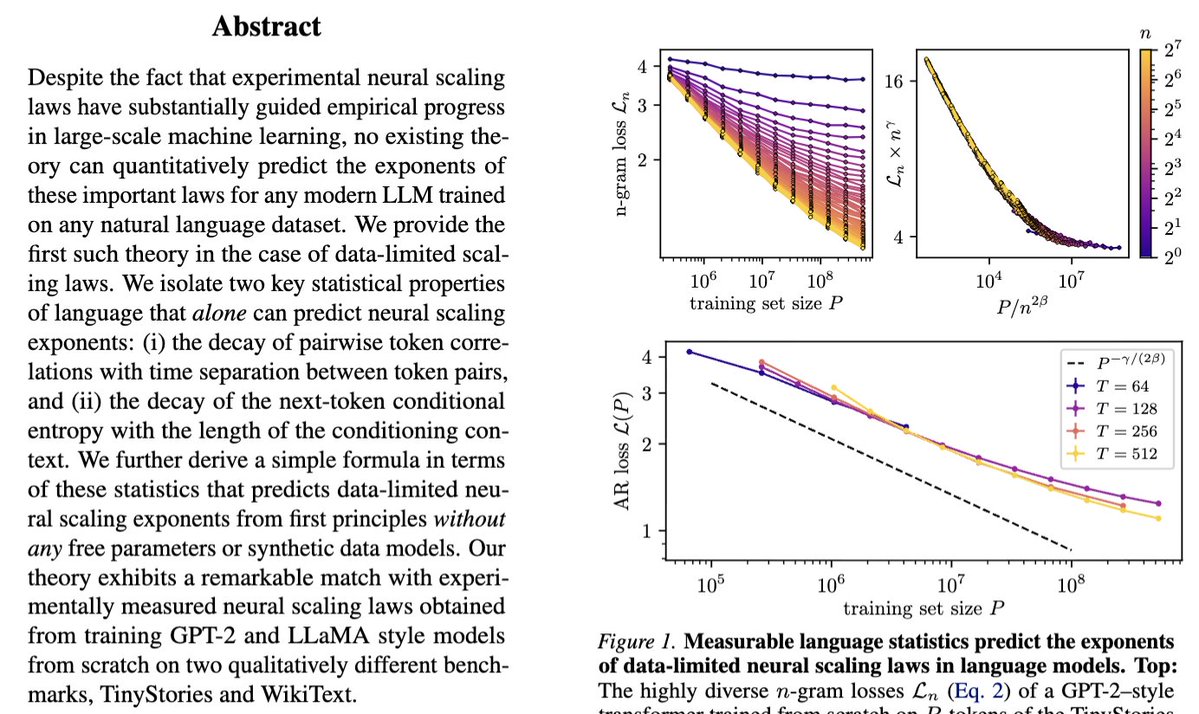

Our new paper "Deriving neural scaling laws from the statistics of natural language" arxiv.org/abs/2602.07488, led by @Fraccagnetta & @AllanRaventos w/ Matthieu Wyart, makes a breakthrough! For the very first time, we can predict data-limited neural scaling law exponents from first principles, using the structure of natural language itself!

If you give us two properties of your natural language dataset:
1) How the conditional entropy of the next token decays with conditioning length.
2) How pairwise token correlations decay with time separation.

Then we can give you the exponent of the neural scaling law (loss versus data amount) through a simple formula!

The key idea is that as you increase the amount of training data, models can look further back in the past to predict, and as long as they do this well, the conditional entropy of the next token, conditioned on all tokens up to this data-dependent prediction time horizon, completely governs the loss! This gets us our simple formula for the neural scaling law!

🚨 We derive data-limited neural scaling exponents directly from measurable corpus statistics. No synthetic data models, only two ingredients:
- decay of token-token correlations with separation;
- decay of next-token conditional entropy with context length.

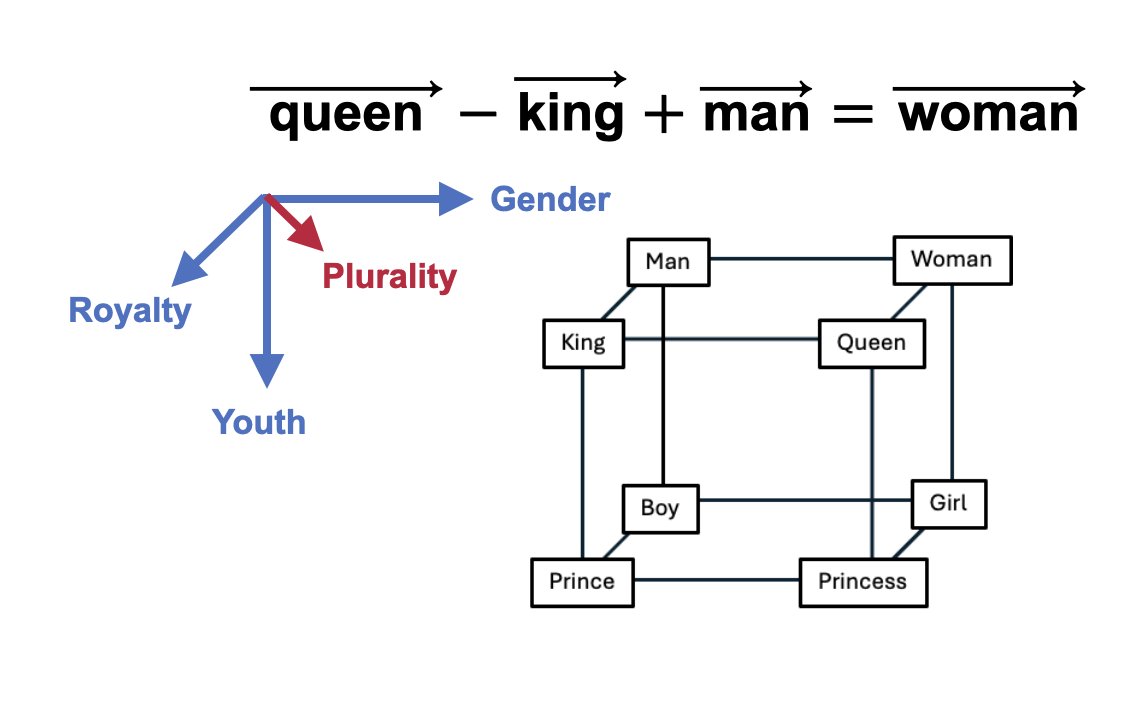
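For readers who want a feel for the second ingredient, here is a minimal sketch of how next-token conditional entropy can be measured as a function of context length. It uses a naive plug-in (n-gram counting) estimate on a toy character sequence. The function name `conditional_entropy`, the toy sequence, and the estimator itself are illustrative assumptions, not the estimator used in the paper.

```python
import math
from collections import Counter


def conditional_entropy(tokens, context_len):
    """Plug-in estimate of H(next token | previous `context_len` tokens), in bits.

    Illustrative only: this naive counting estimator is an assumption,
    not the method of the paper.
    """
    ctx_counts = Counter()    # how often each context occurs
    joint_counts = Counter()  # how often each (context, next token) pair occurs
    for i in range(context_len, len(tokens)):
        ctx = tuple(tokens[i - context_len:i])
        ctx_counts[ctx] += 1
        joint_counts[(ctx, tokens[i])] += 1

    total = sum(ctx_counts.values())
    h = 0.0
    for (ctx, _tok), n in joint_counts.items():
        # p(ctx, tok) * log2 p(tok | ctx)
        h -= (n / total) * math.log2(n / ctx_counts[ctx])
    return h


# Hypothetical toy corpus: a perfectly periodic sequence.
toy_tokens = list("abcabcabcabcabcabc")

# Entropy for context lengths 0, 1, 2.
entropies = [conditional_entropy(toy_tokens, n) for n in range(3)]
```

On this toy sequence the estimate drops from log2(3) bits with no context to zero bits with one token of context, since each symbol determines the next. On real text the plug-in estimate becomes unreliable at long contexts because most contexts are seen only once, so treat this as a sketch of the quantity rather than a practical measurement tool.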

The Physics of Data and Tasks: Theories of Locality and Compositionality in Deep Learning ift.tt/9B0HFnC

Our new Simons Collaboration on the Physics of Learning and Neural Computation will employ and develop powerful tools from #physics, #math, computer science and theoretical #neuroscience to understand how large neural networks learn, compute, scale, reason and imagine: simonsfoundation.org/2025/08/18/sim…

There were so many great replies to this thread, let's do a Part 2! For scaling laws between loss and compute, where loss = a * flops ^ b + c, which factors change primarily the constant (a) and which factors can actually change the exponent (b)? x.com/_katieeverett/…
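To make the distinction between the constant (a) and the exponent (b) concrete, here is a small sketch of the functional form loss = a * flops ^ b + c quoted above. All numerical values are hypothetical, chosen only for illustration, and the helper `loglog_slope` is an assumed name. After subtracting the irreducible term c, the log-log slope equals b regardless of a.

```python
import math


def scaling_law(flops, a, b, c):
    """Loss as a function of compute: a * flops ** b + c."""
    return a * flops ** b + c


def loglog_slope(xs, ys):
    """Least-squares slope of log(y) against log(x)."""
    lx = [math.log(x) for x in xs]
    ly = [math.log(y) for y in ys]
    mx = sum(lx) / len(lx)
    my = sum(ly) / len(ly)
    cov = sum((u - mx) * (v - my) for u, v in zip(lx, ly))
    var = sum((u - mx) ** 2 for u in lx)
    return cov / var


# Hypothetical values, for illustration only.
flops = [10.0 ** k for k in range(15, 22)]
c = 1.7

# Reducible loss (loss minus c) for three hypothetical settings.
baseline = [scaling_law(f, 50.0, -0.05, c) - c for f in flops]
bigger_a = [scaling_law(f, 100.0, -0.05, c) - c for f in flops]
steeper_b = [scaling_law(f, 50.0, -0.10, c) - c for f in flops]

slope_baseline = loglog_slope(flops, baseline)
slope_bigger_a = loglog_slope(flops, bigger_a)
slope_steeper_b = loglog_slope(flops, steeper_b)
```

Doubling a leaves the slope unchanged and only shifts the curve vertically on a log-log plot, while changing b changes the slope itself. This is why a factor that only changes a yields a constant-factor gain, whereas a factor that changes b compounds with scale.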

"The structure of data is the dark matter of theory in deep learning" — @SuryaGanguli during his talk on "Perspectives from Physics, Neuroscience, and Theory" at the Simons Institute's Special Year on Large Language Models and Transformers, Part 1 Boot Camp.

1/3 Check this ---> arxiv.org/abs/2307.02129. After years of dabbling in machine learning theory, we (finally) go back to our physics roots and introduce an idealised model of data that sheds light on a pressing question of the field: how do deep neural networks work?