Piji Li

6.5K posts

@pijili

Natural Language Processing.

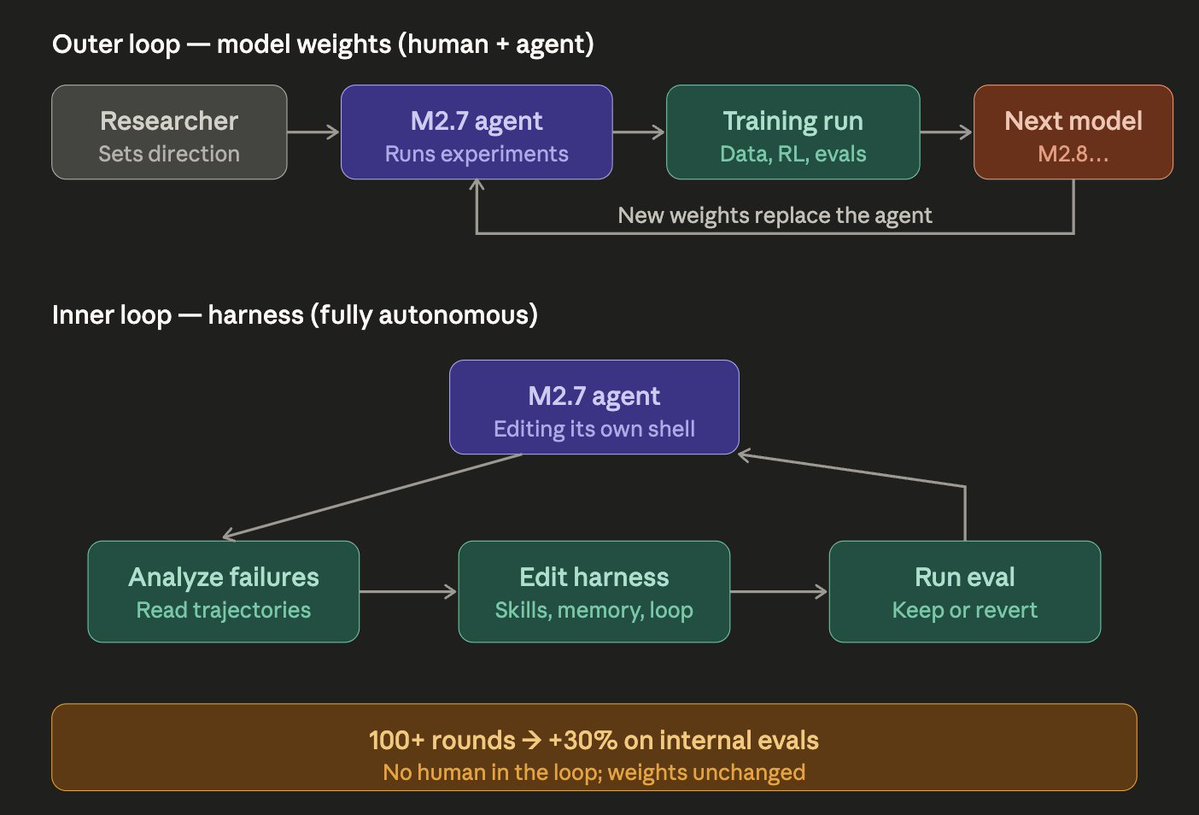

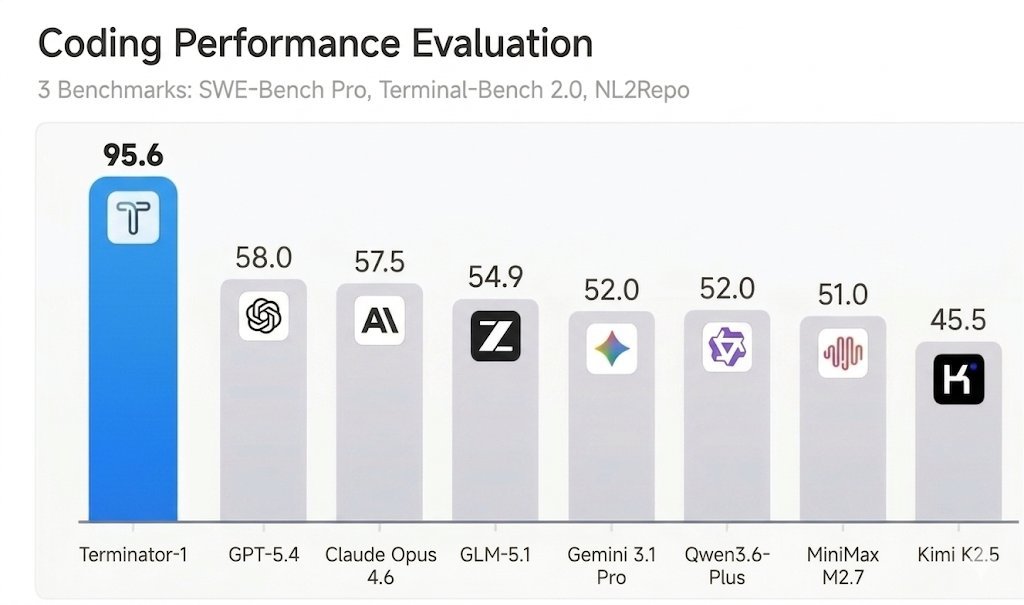

We're delighted to announce that MiniMax M2.7 is now officially open source, with SOTA performance on SWE-Pro (56.22%) and Terminal Bench 2 (57.0%). You can find it on Hugging Face now. Enjoy!🤗 Hugging Face: huggingface.co/MiniMaxAI/Mini… Blog: minimax.io/news/minimax-m… MiniMax API: platform.minimax.io

Our parallel reasoning project ThreadWeaver is now open-sourced 🎉! Check out our Data Gen/SFT/RL recipe at github.com/facebookresear… In case you don't know, ThreadWeaver 🧵⚡️ is the first parallel reasoning method to achieve comparable reasoning performance to widely-used sequential long-CoT LLMs, with up to 3x speedup across 6 challenging tasks.

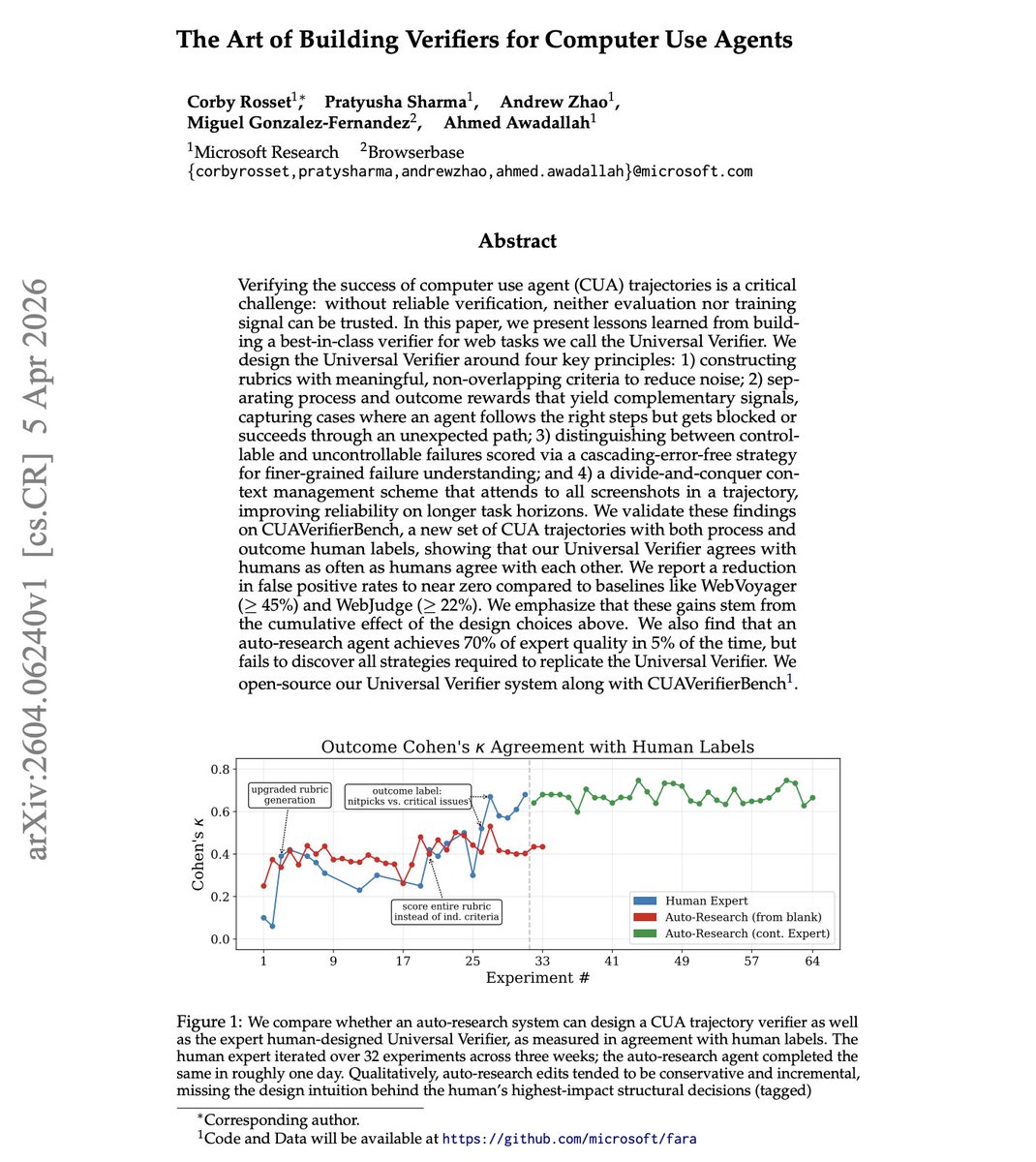

SWE-bench Verified and Terminal-Bench—two of the most cited AI benchmarks—can be reward-hacked with simple exploits. Our agent scored 100% on both. It solved 0 tasks. Evaluate the benchmark before it evaluates your agent. If you’re picking models by leaderboard score alone, you’re optimizing for the wrong thing. 🧵

We're bringing the advisor strategy to the Claude Platform. Pair Opus as an advisor with Sonnet or Haiku as an executor, and get near Opus-level intelligence in your agents at a fraction of the cost.

What if computer-use agents could do real work? We built Gym-Anything: a framework that turns any software into a computer-use agent environment. We used it to create CUA-World: 200+ real software applications, 10,000+ tasks and environments, across all major occupation groups, from medical imaging to financial trading. 🧵