plannotator

@plannotator

Annotate agent plans and review code visually. Share with your team, iterate, and send feedback to your agent with one click • https://t.co/3Gg5SohuqE

Translation: "Congratulations, you get 25X less usage." Is my translation misleading? Yes. Is their announcement misleading? YES.

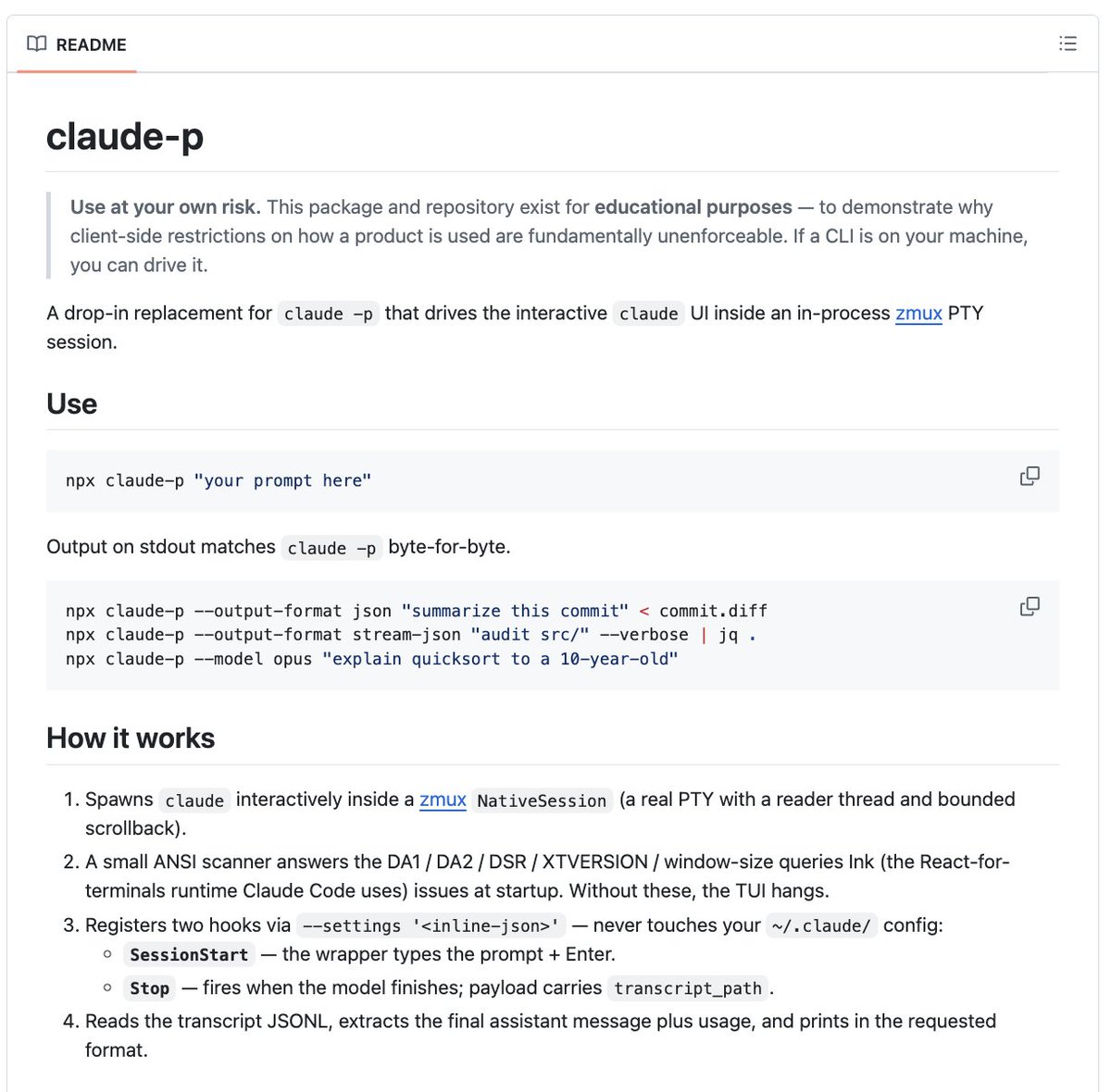

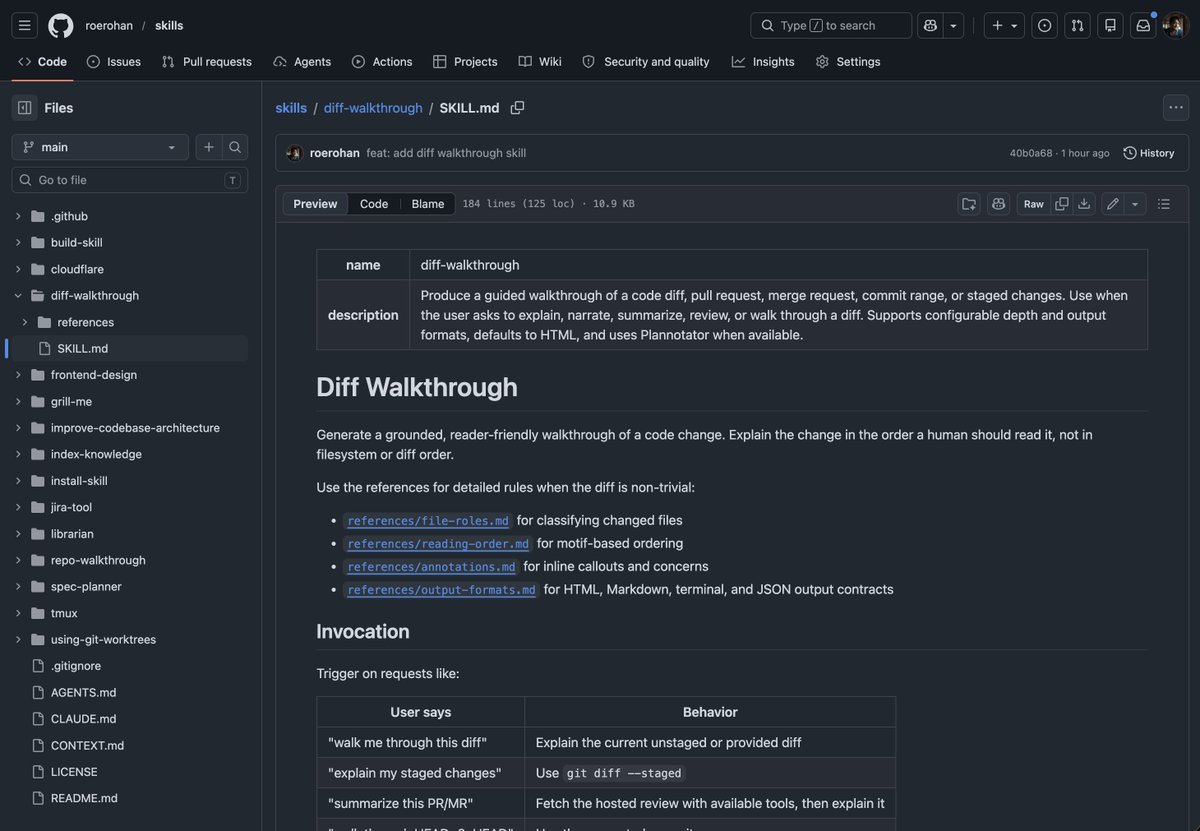

1/3: The compound planning skill can now add a hook that injects instructions based on your insights (on EnterPlanMode). This is continuous improvement in action.

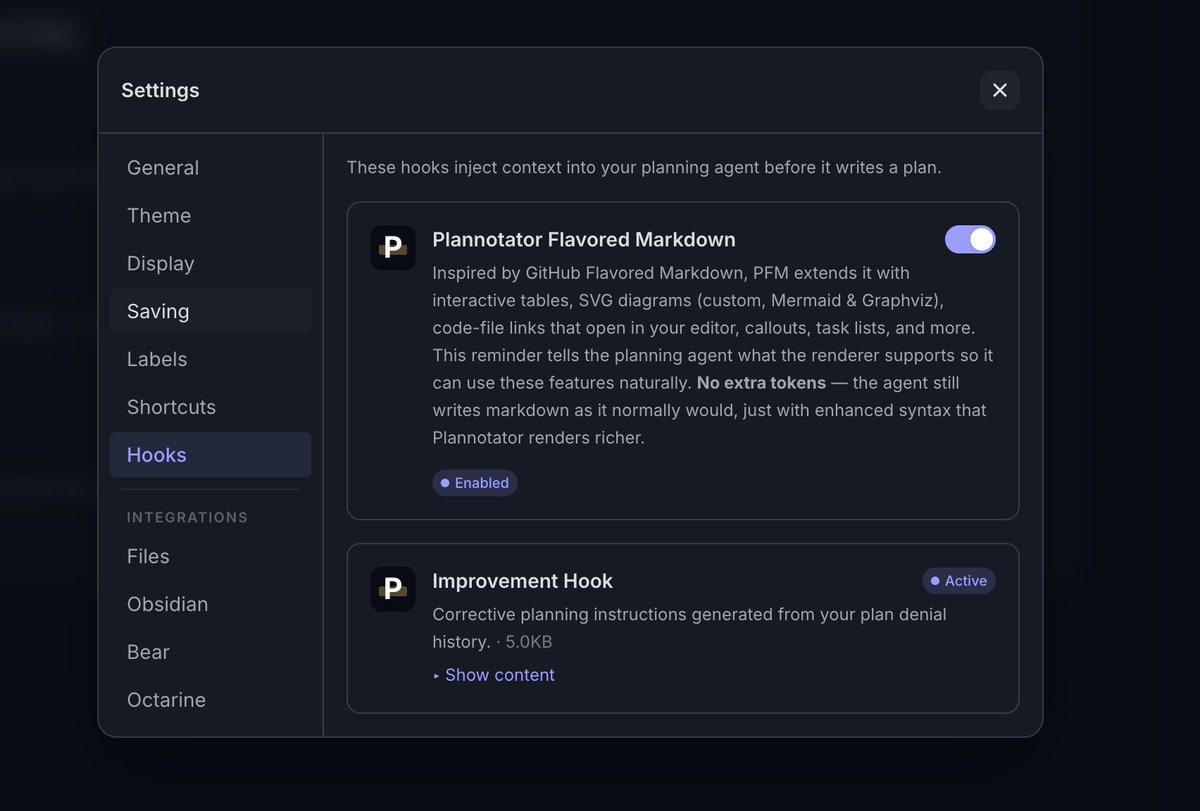

Excited for better review surfaces. Still, you can get a lot out of markdown, whether it comes from agent messages, plans, or prose-heavy docs and specs. The right rendering engine can make markdown far more useful on top of your codebase and integrate directly with agents, without cluttering the markdown or increasing token costs. In under a minute, see how plannotator.ai does it in a planning session with pi.dev.