Pedro Lopes

@plopesresearch

Assoc. Prof. of Comp. Science @uchicagocs / Human Computer Integration Lab / XR, haptics, hw / HCI lab: https://t.co/lNuu6qMtRX / music: https://t.co/XcUgF18GAu

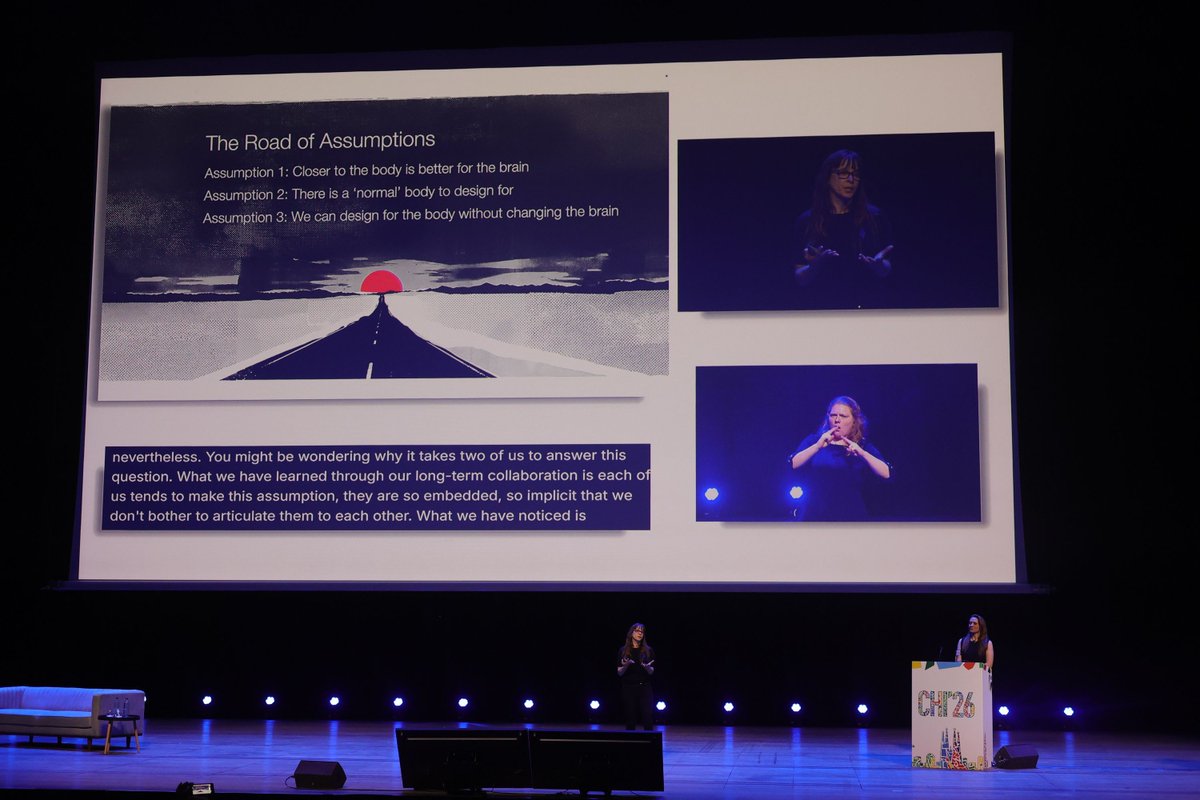

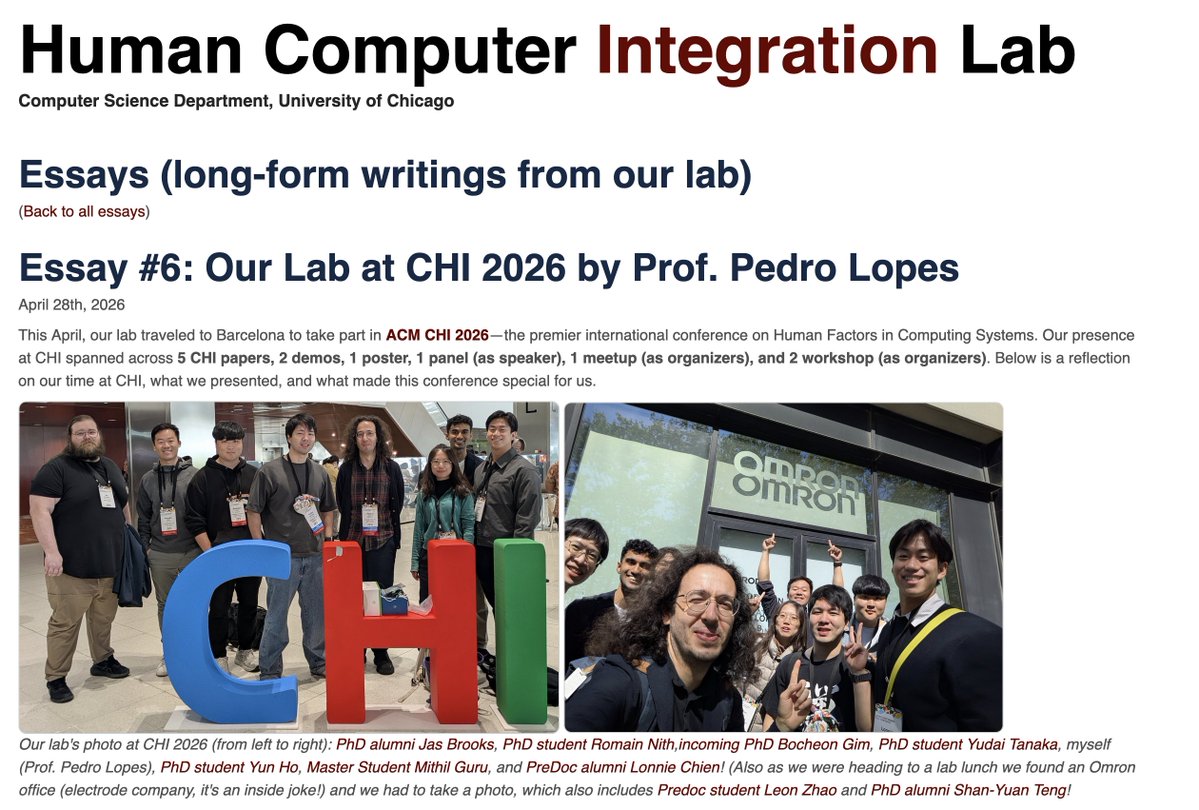

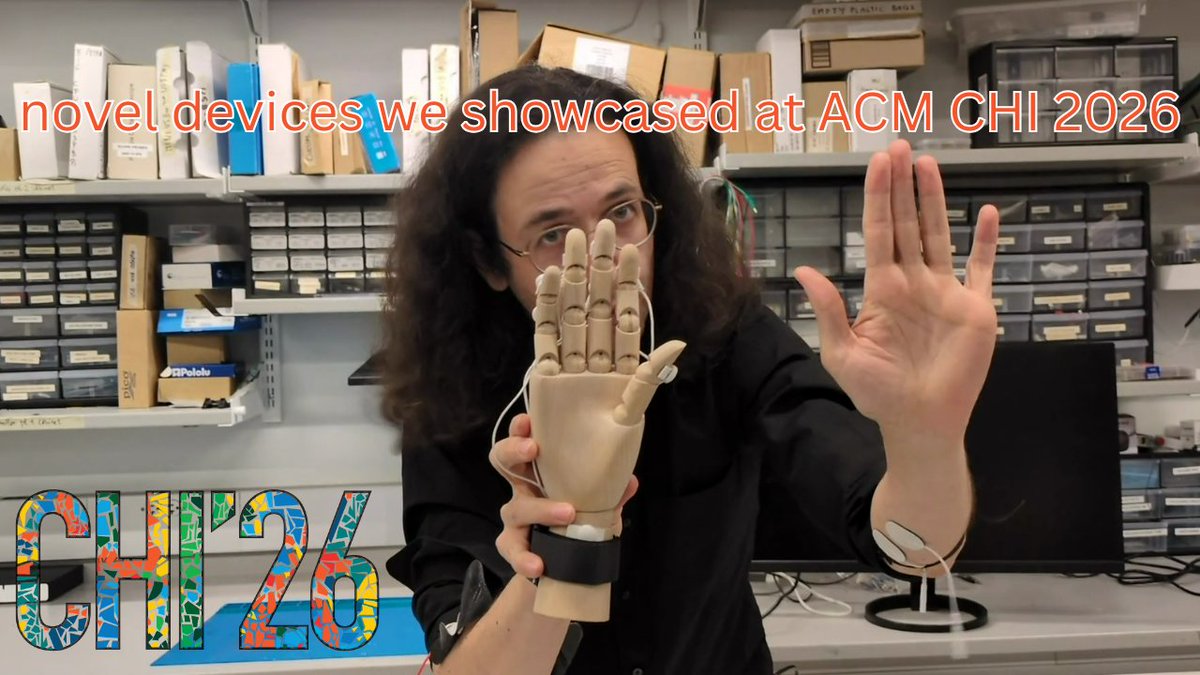

The most interesting question some folks asked me at #CHI2026 was: "Did you not do a video with all the lab's papers this year?" Indeed, given the chairing work, I wasn't able to, but the enthusiasm of these folks helped me do it now 🙏! Here you go: youtube.com/watch?v=4VVtHG…

#UIST 2026 Student Innovation Contest CFP is out (uist.acm.org/2026/cfp/#sic)! This year, we partner with @emotibit to push the boundaries of prototyping with biosignals. The proposal deadline is June 15. Join the UIST SIC and turn your ideas into reality!

📣Apply or nominate someone for our new open calls! We're seeking volunteers for VP at Large on the SIGCHI Exec, the CHI Steering Committee, and SIGCHI Conference Futures (due May 5). We also have our rolling open call for SIGCHI committees. Apply at sigchi.submittable.com!