Plugyawn

@plugyawn

writing as a rehearsal of language. language as a rehearsal of logic.

we let opus 4.7 and gpt 5.5 run on the nanogpt optimizer speedrun: ~10k runs, 14k H200 hours, 23.9B tokens. opus hits 2930, codex 2950, both beating the human baseline of 2990. we cover claude autonomy failures, codex high compute usage, and much more primeintellect.ai/auto-nanogpt

We’re training models wrong and it’s due to chatGPT. Even the modern coding agents used daily still use message-based exchanges: They send messages to users, to themselves (CoT) and to tools, and receive messages in turn. This bottlenecks even very intelligent agents to a single stream. The models cannot read while writing, cannot act while thinking and cannot think while processing information.

In our new paper, see below, we discuss LLMs with parallel streams. We show that multi-stream LLMs can …

🔵 Be created by instruction-tuning for the stream format

🔵 Simplify user and tool use UX removing many pain points with agents and chat models (such as having to interrupt the model to get a word in)

🔵 Multi-Stream LLMs are fast, they can predict+read tokens in all streams in parallel in each forward pass, improving latency

🔵 LLMs with multiple streams have an easier time encoding a separation of concerns, improving security

🔵 LLMs with many internal streams provide a legible form of parallel/cont. reasoning. Even if the main CoT stream is accidentally pressured or too focused on a particular task to voice concerns, other internal streams can subvocalize concerns that would otherwise not be verbalized.

Does this sound related to a recent thinky post :) - Yes, but I don’t feel so bad about being outshipped with such a cool report on their side by 23 hours. I’ll link a 2nd thread below with a more direct comparison. I actually think both are complementary in interesting ways.

Yesterday, I was giving an intro talk to our dept's new PhD students. Technical things aside, my number 1 suggestion has remained the same over the years: Treat your PhD like a job. - Avoid 1.5h lunch and three tea breaks. - Avoid gossiping and loitering at work. - Lab at 9 am and leave at 6 pm. Being productive till 11 pm in the lab is a lie people tell themselves when their day starts at 1 PM. Everything worth doing can be done with high intensity focus during work hours. And having fun in life is the secret to being productive in a marathon.

I believe the kids call this "@thinkymachines just brutally framemogged gdm and oai". basically everyone's definition of "realtime" just got a massive fricking upgrade

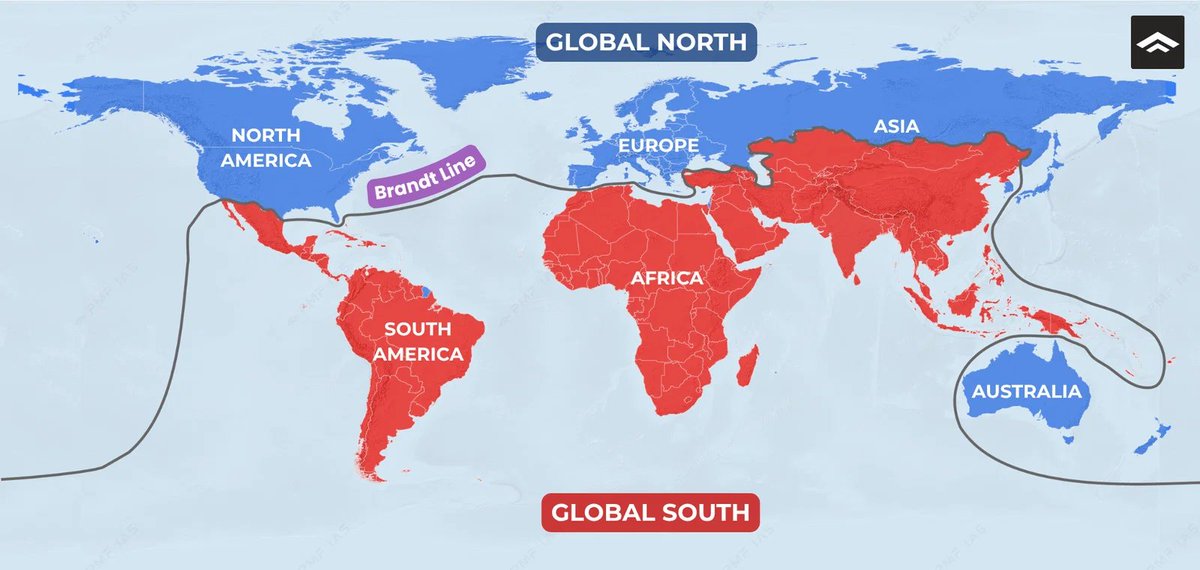

The South is amazing. Imagine making absolutely zero progress in basically any area for 160 years. That's almost a talent.

The Sam Altman and @miramurati texts from the day he got fired from @OpenAI in 2023 just became evidence in the @elonmusk v. @sama trial. It felt like a meaningful moment in AI history, so I turned it into a musical. The lyrics are the texts.

How do we make LLMs faster and lighter? Don’t force the GPU to adapt to sparsity. Reshape the sparsity to fit the GPU! ⚡️

Excited to share our new #ICML2026 paper in collaboration with @NVIDIA: "Sparser, Faster, Lighter Transformer Language Models". This work introduces new open-source GPU kernels and data formats for faster inference and training of sparse transformer language models:

Paper: arxiv.org/abs/2603.23198

Blog: pub.sakana.ai/sparser-faster…

Code: github.com/SakanaAI/spars…

While LLMs are undoubtedly powerful, they are increasingly expensive to train and deploy, with a large part of this cost coming from their feedforward layers. Yet, an interesting phenomenon occurs inside these layers: For any given token, only a small fraction of the hidden activations actually matter. The rest approximate zero, wasting computation. With ReLU and very mild L1 regularization, this sparsity can exceed 95% with little to no impact on downstream performance.

So, can we leverage this sparsity to make LLMs faster? The challenge is hardware. Modern GPUs are optimized for dense matrix multiplications. Traditional sparse formats introduce irregular memory access and overheads that cancel out their theoretical savings for GEMM operations.

Our contribution is twofold:

1/ We introduce TwELL (Tile-wise ELLPACK), a new sparse packing format designed to integrate directly in the same optimized tiled matmul kernels without disrupting execution.

2/ We develop custom CUDA kernels that fuse multiple sparse matmuls to maximize throughput and compress TwELL to a hybrid representation that minimizes activation sizes.

We used our kernels to train and benchmark sparse LLMs at billion-parameter scales, demonstrating >20% speedups and even higher savings in peak memory and energy.

This work will be presented at #ICML2026. Please check out our blog and technical paper for a deep dive!
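For readers unfamiliar with the format TwELL builds on, here is a minimal pure-Python sketch of plain (non-tiled) ELLPACK applied to ReLU activations. It is not the paper's TwELL format or its CUDA kernels; the function names `relu`, `to_ellpack`, and `ell_matmul` and the toy numbers are assumptions made only for illustration. The idea shown is that each row stores only its nonzero values and their column indices, padded to a common width, so the follow-up matmul skips the zeros.

```python
def relu(row):
    """Elementwise ReLU on a list of floats."""
    return [max(0.0, x) for x in row]


def to_ellpack(dense):
    """Pack a dense 2D list into plain ELLPACK.

    Returns (values, cols): two row-aligned 2D lists of equal width.
    Rows with fewer nonzeros are padded with value 0.0 and column -1.
    """
    nonzeros = [[(j, v) for j, v in enumerate(row) if v != 0.0] for row in dense]
    width = max((len(r) for r in nonzeros), default=0)
    values = [[v for _, v in r] + [0.0] * (width - len(r)) for r in nonzeros]
    cols = [[j for j, _ in r] + [-1] * (width - len(r)) for r in nonzeros]
    return values, cols


def ell_matmul(values, cols, weights):
    """Multiply ELLPACK-packed activations by a dense (hidden x out) matrix."""
    n_out = len(weights[0])
    result = []
    for row_vals, row_cols in zip(values, cols):
        acc = [0.0] * n_out
        for v, j in zip(row_vals, row_cols):
            if j < 0:  # padding slot, nothing stored here
                continue
            for k in range(n_out):
                acc[k] += v * weights[j][k]
        result.append(acc)
    return result


def dense_matmul(a, b):
    """Reference dense matmul used to check the sparse result."""
    return [[sum(x * b[j][k] for j, x in enumerate(row)) for k in range(len(b[0]))]
            for row in a]


# Toy example (hypothetical numbers): 2 tokens, 4 hidden units, 2 outputs.
pre_activations = [[-1.0, 2.0, -3.0, 0.5], [-0.2, -0.1, 4.0, -5.0]]
hidden = [relu(row) for row in pre_activations]
down_proj = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [2.0, 0.0]]

ell_values, ell_cols = to_ellpack(hidden)
sparse_out = ell_matmul(ell_values, ell_cols, down_proj)
dense_out = dense_matmul(hidden, down_proj)
```

In this toy case the packed width is 2 rather than 4, and the sparse and dense products agree. The per-row gather through `cols` also hints at the irregular memory access the post says hurts traditional sparse formats on GPUs.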

Nature has finally discovered autodiff and gradient descent via humans, and is now speedmaxxing towards birthing a new species.

What the SpaceX–Anthropic Deal Means

Two weeks ago, we published a note laying out what GPT-5.5's release implied. The conclusion was simple: whoever secures compute first, in greater volume, and with greater reliability ultimately takes the win. With OpenAI's 30GW roadmap dwarfing Anthropic's 7–8GW, we closed by arguing that the structural advantage on compute sat with OpenAI. Less than a fortnight later, that conclusion is being tested.

On May 6, Anthropic signed a single-tenant lease for the entirety of Colossus 1 with SpaceXAI, the infrastructure subsidiary that consolidates Elon Musk's xAI and SpaceX. The asset carries more than 220,000 GPUs and 300MW of power, and crucially, is scheduled to come online within this month. It served as the capstone of Anthropic's April blitz, which added 13.8GW of cumulative capacity over the span of a single month. On headline numbers alone, OpenAI took more than a year to stack 18GW; Anthropic has put 13.8GW in the ground in thirty days. The takeaways break down into three.

First, the compute pecking order has been redrawn again. Anthropic has now swept up the AWS expansion (5GW, with $100B+ in spend commitments over a decade), Google + Broadcom (3.5GW of TPU), Google Cloud (5GW alongside a $40B investment), and now SpaceXAI's Colossus 1 (0.3GW). Cumulative committed capacity, inclusive of pre-April allocations, sits at 14.8GW. This is still only half of OpenAI's 2030 target of 30GW, but the fact that the SpaceX lease will be live inside a month makes "deliverability" a qualitatively different proposition.

Second, Elon Musk is the plaintiff in an active lawsuit against OpenAI and, at the same time, the supplier handing 220,000+ GPUs and 300MW of power, in one block, to OpenAI's most formidable competitor. The timing matters: the deal was struck in the middle of the Musk–Altman trial. We read this as a deliberate pincer with OpenAI in the middle. In the courtroom, Musk works to dismantle the moral legitimacy of OpenAI's leadership; in the market, he arms Anthropic to absorb OpenAI's revenue and user base.

Third, the structure is financial-engineering perfection: a clean win-win for both sides. xAI can recognize $6B of annual revenue from a single contract, an amount that almost precisely offsets its Q1 2026 annualized net loss of $6B. It also accelerates the cleanup of SpaceXAI's pre-IPO balance sheet, with the entity now being floated at around $1.75T. Anthropic, on the other side, converts roughly $5B of spend into what it expects to be $15B of ARR via the coming inference-revenue surge.

(Mirae Asset Securities, May 8, 2026)
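The capacity figures quoted in the note can be cross-checked with a few lines of arithmetic. This is only a tally of the numbers as stated in the note, not independent data; the variable names are made up for illustration.

```python
# Gigawatt figures exactly as quoted in the note above.
april_deals_gw = {
    "AWS expansion": 5.0,
    "Google + Broadcom (TPU)": 3.5,
    "Google Cloud": 5.0,
    "SpaceXAI Colossus 1": 0.3,
}

# Sum of the four deals; the note states 13.8GW added in April.
april_total_gw = round(sum(april_deals_gw.values()), 1)

# Cumulative committed capacity and OpenAI's 2030 target, as stated in the note.
cumulative_gw = 14.8
openai_target_gw = 30.0

# Fraction of OpenAI's target that the cumulative figure represents.
share_of_target = cumulative_gw / openai_target_gw

print(f"April deals: {april_total_gw} GW")
print(f"Share of OpenAI 2030 target: {share_of_target:.1%}")
```

The four deals sum to the 13.8GW the note cites, and 14.8GW is a little under half of the 30GW target, consistent with the note's "only half" framing.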