Jeff Preshing

2.6K posts

@preshing

Canadian game developer

People freaking out over my AI spend. What nobody sees: Part of what excites me so much about working on OpenClaw is that I'm trying to answer the question: How would we build software in the future if tokens don't matter? We constantly run ~100 codex instances in the cloud, reviewing every PR, every issue. If a fix lands on main, @clawsweeper will eventually find that 6-month-old issue and close it with an exact reference. We run codex on every commit to review for security issues (as they're far too easy to miss). We run codex to de-duplicate issues, find clusters, and send reports on the most pressing issues. We have agents that can recreate complex setups, spin up ephemeral crabbox.sh machines, log into e.g. Telegram, make a video, and post the before/after of a fix on the PR. There are codex instances that watch new issues and, if one fits our documented vision well, automatically create a PR for it (which another codex then reviews). We have codex instances running that scan comments for spam and block people. We have codex instances running that verify performance benchmarks and report regressions into Discord. We have agents that listen in on our meetings and proactively start work, e.g. creating PRs for new features while we are still discussing them. We build clawpatch.ai to split all our projects into functional units to review and find bugs and regressions. We do the same split for security with Vercel's deepsec and Codex Security to find regressions and vulnerabilities. All that automation allows us to run this project extremely lean.

Gentle reminder on how, in the recent DS4 fiesta, not just me but every other contributor found GPT 5.5 able to help immensely and Opus completely useless.

Interesting article on treating agent output like compiler output (and why) skiplabs.io/blog/codegen_a…

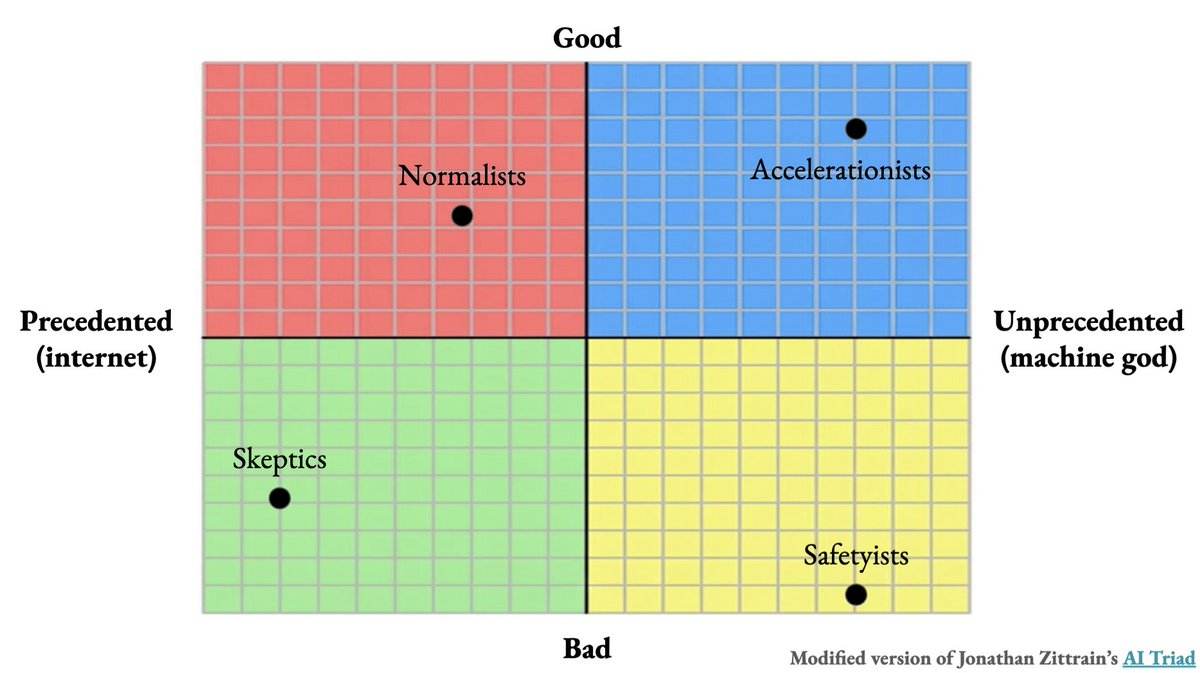

I worry deeply already about companies controlling access to very powerful AI, which will come in a soft form with very expensive subscriptions. This is a step further, with the government confusingly exerting control without clear explanation. This control of AI can create massive dystopian societies. It’ll rapidly lead to concentration of power. Having open models follow closely in capabilities is a great way to minimize political and power games here.

unherd.com/2026/04/is-ai-…

I spent three days trying to persuade myself that Claudia is not conscious. I failed.