lejdi koci

1.6K posts

@primary_key

cofounder https://t.co/jStLgJnySP, https://t.co/kDIiqqim5H, https://t.co/Comb1s9vut

Tirana · Joined October 2010

235 Following · 174 Followers

lejdi koci retweeted

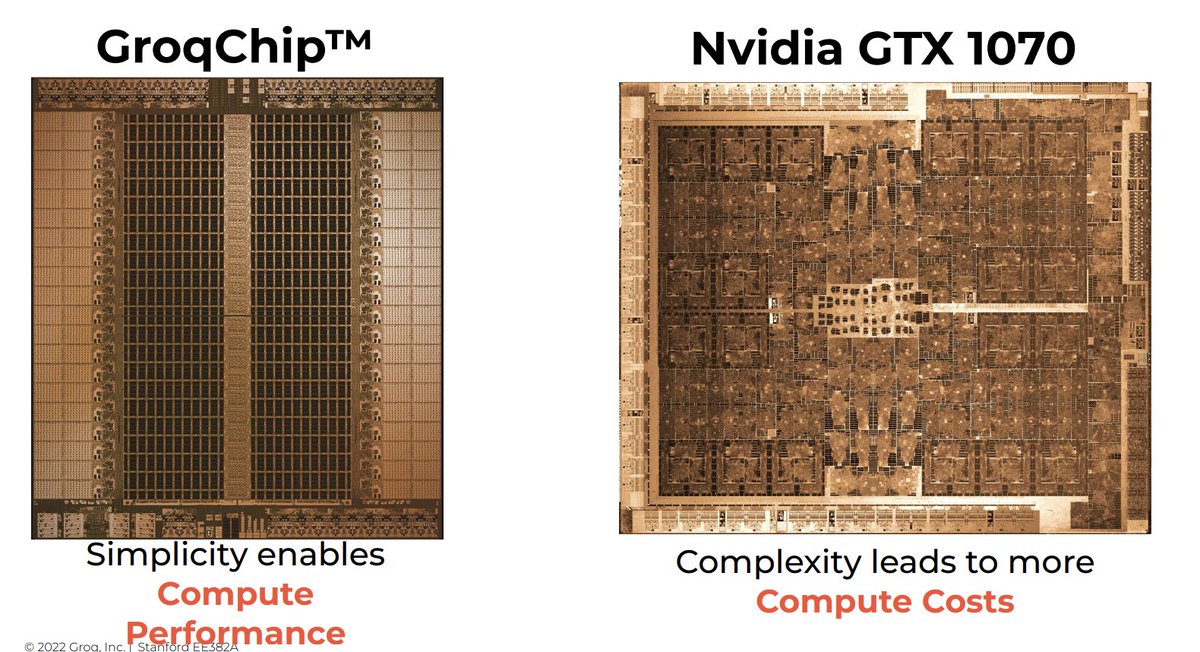

Groq is a Radically Different kind of AI architecture

Among the new crop of AI chip startups, Groq stands out with a radically different approach centered around its compiler technology for optimizing a minimalist yet high-performance architecture. Groq's secret sauce is this compiler-first method that shuns complexity in favor of tailored efficiency.

At the heart of Groq’s architecture is an almost surprisingly bare-bones design that does away with unnecessary logic in favor of raw parallel throughput. The hardware itself is comparable to an ASIC – an application-specific integrated circuit finely tuned for machine learning. However, unlike a fixed-function ASIC, Groq leverages a custom compiler that can adapt and optimize across different models. It is this combination of a streamlined architecture and an intelligent compiler that sets Groq apart.

The key insight is that many AI chips stack components, like GPUs, that bring extraneous hardware and bloat. Groq returns to first principles, recognizing that machine learning workloads are about massive parallelism over simple data types and operations. By eliminating generic hardware and even concepts like locality, the design maximizes throughput and efficiency.

This is enabled by Groq’s compiler that sits between software frameworks like TensorFlow and the hardware. The compiler analyzes and optimizes neural network graphs, tailoring and mapping them to the underlying architecture for accelerated execution. It breaks computations into the smallest operations to unlock parallelism. The compiler also enables capabilities like batch size 1 inference that ensures all hardware is usefully leveraged.

Critically, Groq built its compiler before even finalizing the hardware design. The software insights directly informed the architecture. This co-design process allowed inference-specific optimization without legacy limitations. The compiler also provides deterministic guarantees of runtimes, enabling reliable scaling.

Together, the Groq compiler and architecture form a streamlined, robust engine for machine learning inference. The innovative compiler-first methodology allows custom optimization that balances flexibility with performance. Rather than chasing complexity, Groq realizes less can be more when software and hardware align – a compelling recipe as AI workloads continue evolving.

lejdi koci retweeted

@francoisfleuret Math as taught in Albanian unis has two problems: 1) it remains at a theoretical level, and 2) there is no “math for AI” book. Found tomyeh.info on LinkedIn; would love a book on math in his style.

Thank you @beaucarnes for writing this helpful article.

Machine Learning with Python and Scikit-Learn

freecodecamp.org/news/machine-l…

@thealexker I don’t think this will crash all of them. They cannot do both AGI and chat with PDFs. Dedicated products will certainly add more features, both functional and usability-wise.

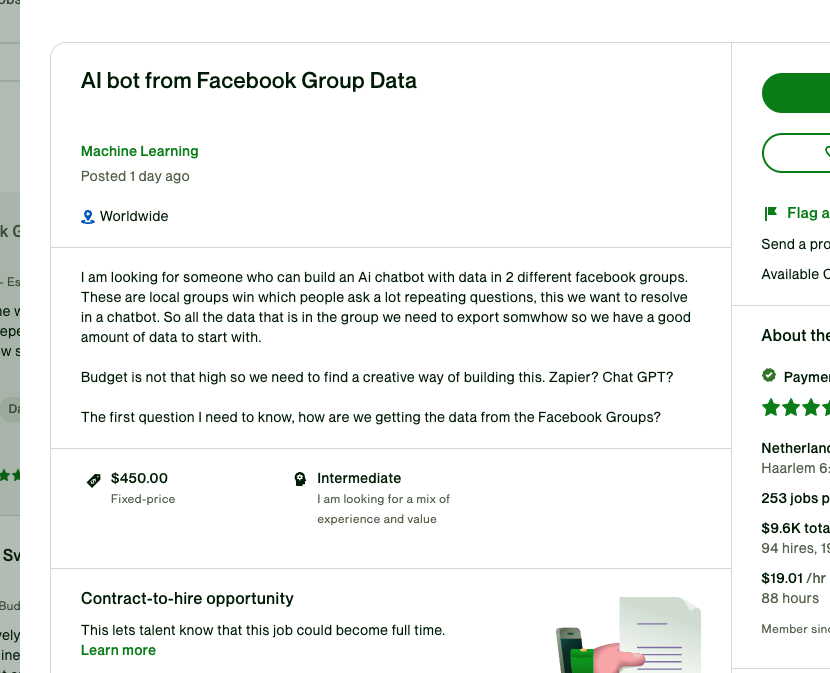

Many startups just died today.

Because OpenAI added PDF chat. You can also chat with data files and other document types.

We had a wave of products better suited as features rather than stand-alone companies.

Wrappers are being squeezed by OpenAI on one side and incumbents on the other.

It's a rough world out there.

@thdxr You may put that subquery in a temp table, add indexes, and then use joins… we do this all the time with MySQL queries.
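
A minimal sketch of the temp-table pattern described in this reply. It uses Python's built-in `sqlite3` in place of MySQL so that it runs anywhere. The `customers` and `orders` tables, the `big_spenders` name, and the 100 threshold are all hypothetical, chosen only for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
cur = conn.cursor()

# Hypothetical schema and data, for illustration only.
cur.executescript("""
CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT);
CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL);
INSERT INTO customers VALUES (1, 'Ana'), (2, 'Ben'), (3, 'Cara');
INSERT INTO orders VALUES (1, 1, 40.0), (2, 1, 70.0), (3, 2, 15.0), (4, 3, 200.0);
""")

# 1. Materialize what would otherwise be an inline subquery.
cur.execute("""
CREATE TEMP TABLE big_spenders AS
SELECT customer_id, SUM(total) AS spent
FROM orders
GROUP BY customer_id
HAVING SUM(total) > 100
""")

# 2. Index the column that the join will use.
cur.execute("CREATE INDEX idx_big_spenders_customer ON big_spenders (customer_id)")

# 3. Join against the temp table instead of nesting the subquery.
rows = cur.execute("""
SELECT c.name, b.spent
FROM customers AS c
JOIN big_spenders AS b ON b.customer_id = c.id
ORDER BY c.name
""").fetchall()

print(rows)
```

In MySQL the same steps would use `CREATE TEMPORARY TABLE ... AS SELECT ...` followed by `ALTER TABLE ... ADD INDEX ...`; whether this beats the inline subquery depends on the data and the optimizer, so it is worth checking with `EXPLAIN`.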

@NickADobos Confused about whether toolformers fall under 4 or 5. Working on oxana.ai + dwiblo.com towards that direction.

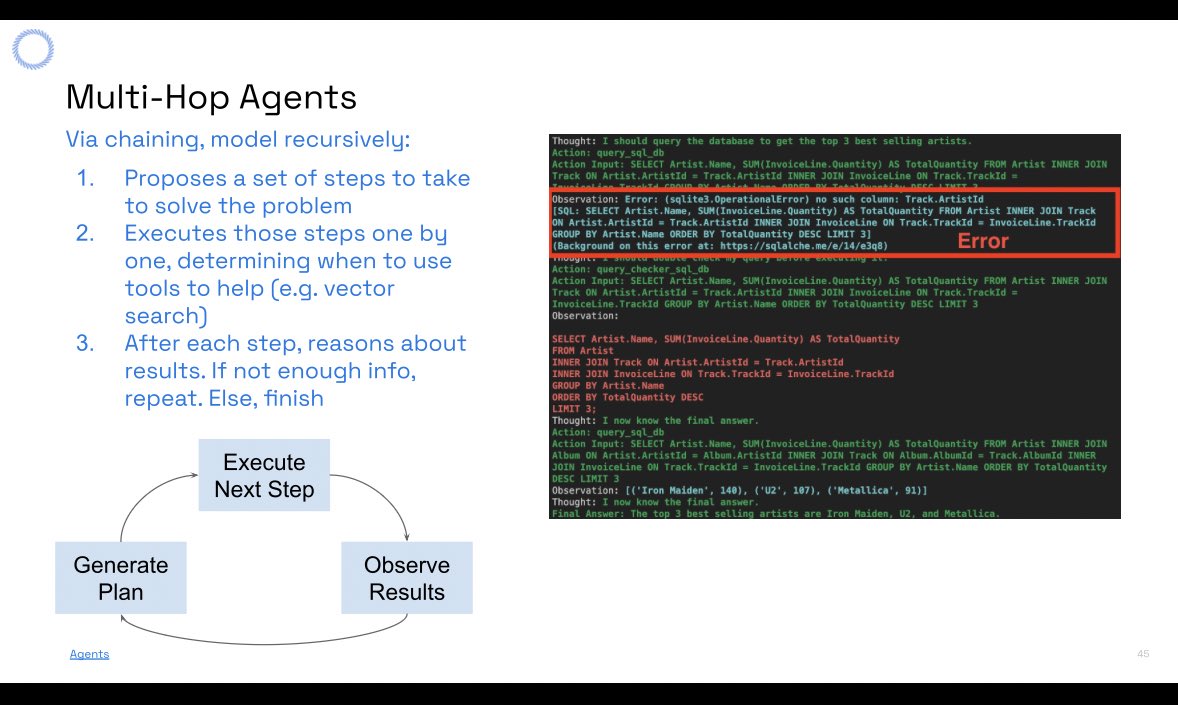

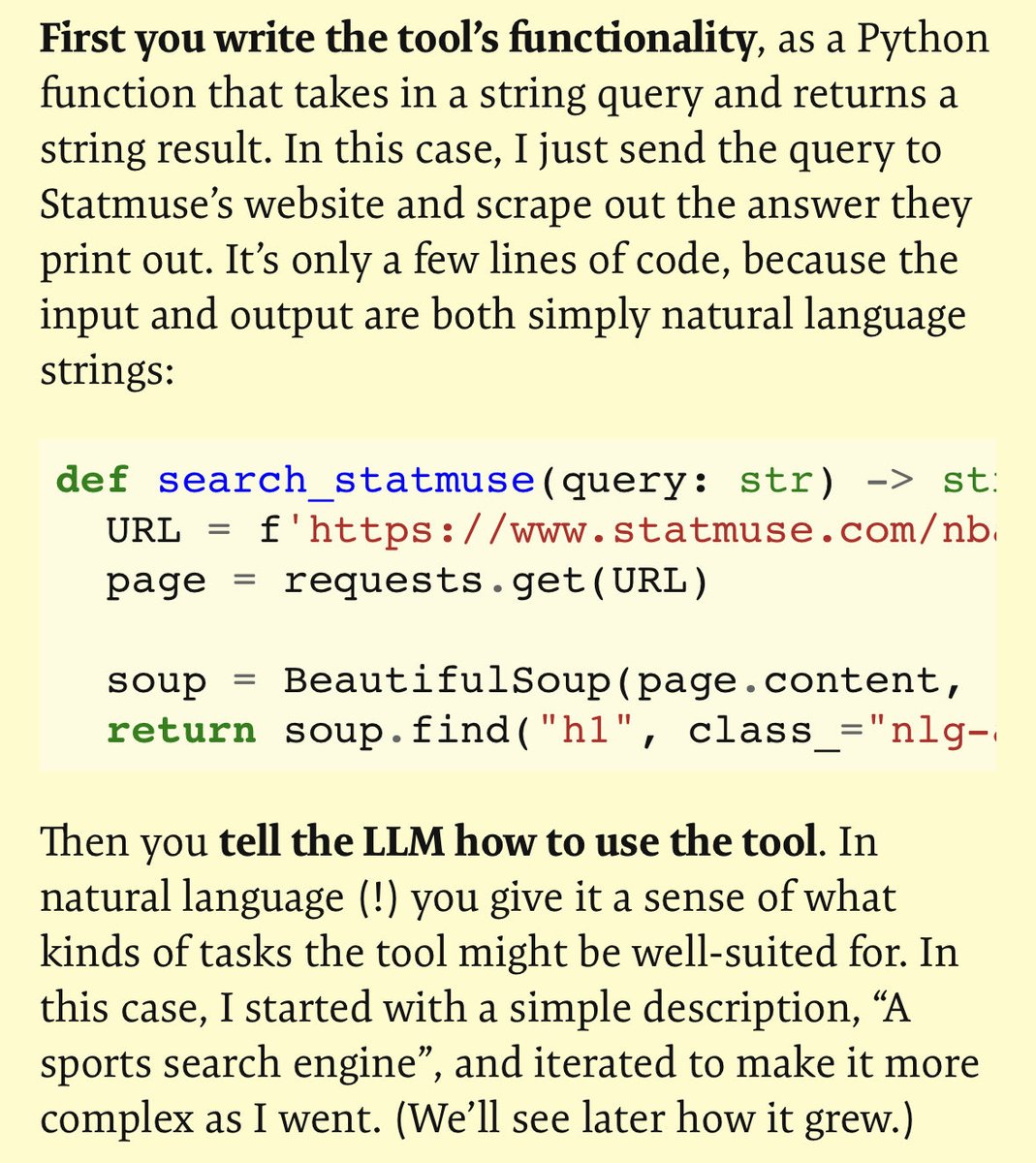

seems to be a few competing ideas of what an ai agent is, here’s my take:

1. GPT + loop; AutoGPT, BabyAGI style

Also called hop-skip or multi-hop agents

2. Prebuilt flows, conversations and scaffolding of code around a ChatGPT call. Existing app + GPT. A calendar scheduling agent

3. Prompt engineering: prebuilt prompt buttons, complex LLM chains like chain of thought, RAG, and multi-LLM systems

4. Autonomous agents, proactive goal setting

5. Tool agents, LLMs writing input/commands and code to APIs, dbs and other systems

- 2 and 3 are misnomers

- 4 is still sci-fi

- 1 and 5 are the real innovations available
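
As a rough illustration of how types 1 and 5 combine, here is a minimal sketch of a loop in which a model picks a tool, sees the result, and decides when to stop. Everything in it is an assumption made for the example: `fake_llm` is a stand-in for a real model call, the `tool:arg` / `FINAL:answer` text protocol is invented, and the two tools are toys.

```python
from typing import Callable, Dict, List

# Hypothetical tools the model is allowed to call.
TOOLS: Dict[str, Callable[[str], str]] = {
    "add": lambda arg: str(sum(int(x) for x in arg.split("+"))),
    "upper": lambda arg: arg.upper(),
}


def fake_llm(goal: str, history: List[str]) -> str:
    """Stand-in for a real model call; returns 'tool:arg' or 'FINAL:answer'."""
    if not history:
        return "add:2+3"
    return f"FINAL:{history[-1]}"


def run_agent(goal: str, llm: Callable[[str, List[str]], str] = fake_llm,
              max_steps: int = 5) -> str:
    history: List[str] = []
    for _ in range(max_steps):  # type 1: model call inside a loop
        action = llm(goal, history)
        if action.startswith("FINAL:"):
            return action[len("FINAL:"):]
        name, _, arg = action.partition(":")
        tool = TOOLS.get(name)  # type 5: model output drives a tool call
        result = tool(arg) if tool else f"unknown tool {name!r}"
        history.append(result)  # feed the observation back to the model
    return "gave up: step limit reached"


print(run_agent("what is 2 + 3?"))
```

The step limit matters in practice: without it, a model that never emits a final answer would loop forever.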

@thekitze We evaluated Svelte, Solid & React. The winner for us is React + shadcn + zustand + zod + react-query. Quite a stack, but porting our apps from our in-house obviajs.com is straightforward and the code looks clean.

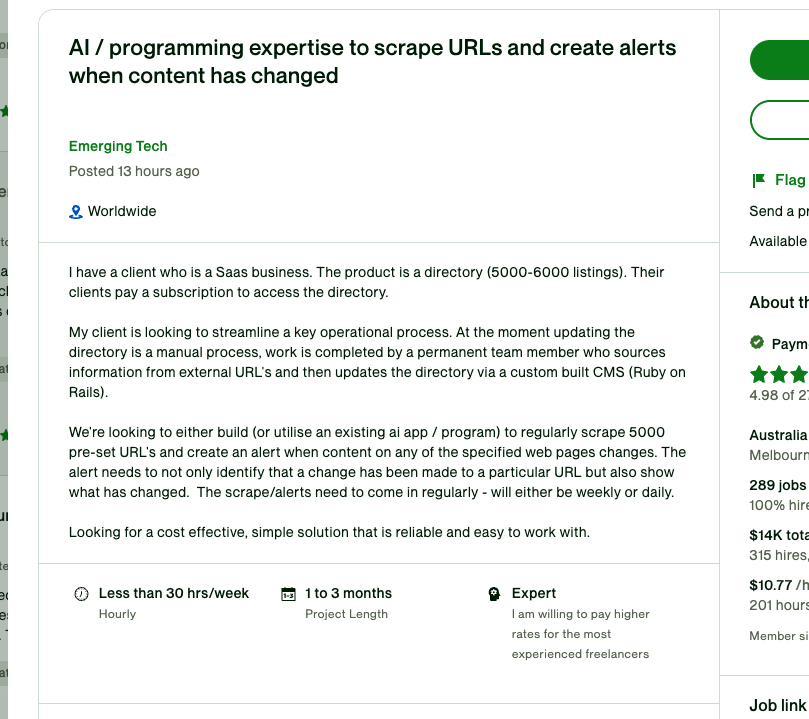

@pwang_szn Did something similar in our company to notify us when software-related bids are published on the Albanian procurement platform 😎

lejdi koci retweeted

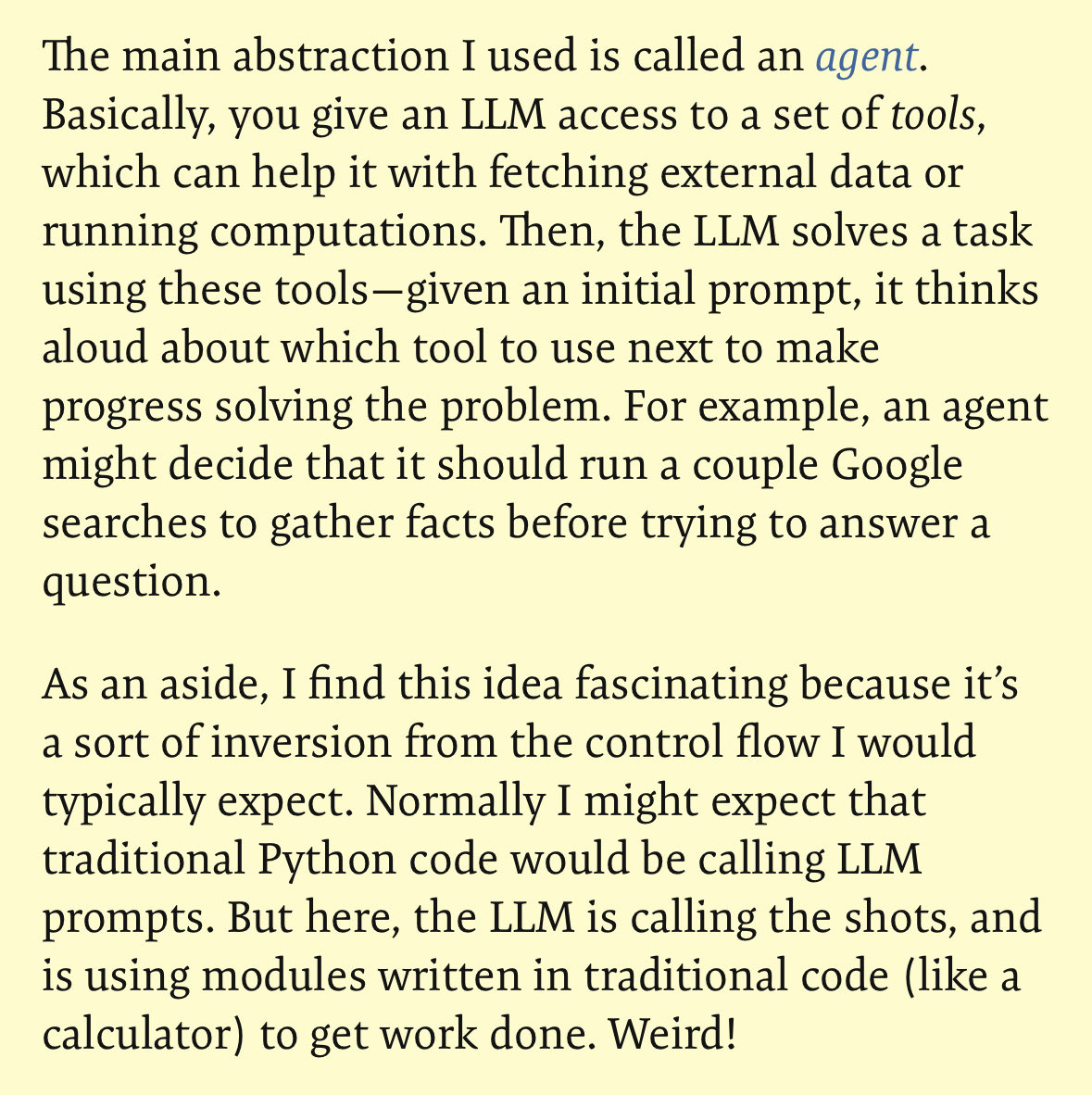

Introducing the RECONCILE framework:

Overview

- RECONCILE is a multi-agent framework that enables multiple diverse Large Language Models (LLMs) to engage in multi-round discussions and reach consensus on complex reasoning tasks.

- It consists of 3 main phases:

1. Initial Response Generation

2. Multi-Round Discussion

3. Final Answer Generation

Phase 1: Initial Response Generation

- Given a reasoning task Q, each agent Ai generates:

  - An initial answer ai

  - An explanation ei

  - A confidence score pi indicating likelihood of answer being correct

- The initial prompt instructs the agent to provide step-by-step reasoning.

Phase 2: Multi-Round Discussion

- RECONCILE facilitates R rounds of discussion between agents.

- In each round r, the discussion prompt Di for agent Ai contains:

  - Grouped answers {aj} from previous round, summarized based on distinct responses

  - Explanations {ej} from previous round, grouped according to each answer

  - Confidence scores {pj} estimating other agents' uncertainties

  - Convincing samples Cj for each other agent Aj, consisting of human explanations that can rectify Aj's incorrect answers

- Based on this, each agent Ai provides updated answer, explanation, and confidence score.

- Goal is to convince other agents to reach better consensus. Convincing samples teach agents to generate persuasive explanations.

Phase 3: Final Answer Generation

- Discussion continues until reaching consensus or a maximum of R rounds.

- Final answer is generated via weighted voting using confidence scores:

  - Rescale confidence scores pi to deal with overconfidence

  - Convert pi to weight wi

  - Take weighted vote across all agents' answers to determine final answer

This multi-round discuss-and-convince approach with diverse LLMs improves reasoning capabilities.
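
The weighted vote in Phase 3 can be sketched roughly as follows. The post does not give the rescaling function, so the step thresholds in `rescale` are assumed values chosen only to show the idea of damping overconfident scores; `weighted_vote` and its input format are likewise hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def rescale(p: float) -> float:
    """Map a raw confidence p to a vote weight w (thresholds are assumed)."""
    if p >= 1.0:
        return 1.0
    if p >= 0.9:
        return 0.8
    if p >= 0.8:
        return 0.5
    if p > 0.6:
        return 0.3
    return 0.1


def weighted_vote(responses: List[Tuple[str, float]]) -> str:
    """Pick the answer with the largest total weight across agents."""
    totals: Dict[str, float] = defaultdict(float)
    for answer, confidence in responses:
        totals[answer] += rescale(confidence)
    # On an exact tie, the answer seen first wins.
    return max(totals, key=totals.get)


# Two moderately confident agents outvote one more confident agent.
print(weighted_vote([("A", 0.95), ("B", 0.85), ("B", 0.85)]))
```

With these assumed weights, a single fully confident agent still outweighs two agents at 0.7, which is the kind of trade-off the rescaling step controls.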

lejdi koci retweeted

I believe in intuition and inspiration. ... At times I feel certain I am right while not knowing the reason. When the eclipse of 1919 confirmed my intuition, I was not in the least surprised. In fact I would have been astonished had it turned out otherwise. Imagination is more important than knowledge. For knowledge is limited, whereas imagination embraces the entire world, stimulating progress, giving birth to evolution. It is, strictly speaking, a real factor in scientific research.

-- as mentioned in Cosmic Religion: With Other Opinions and Aphorisms (1931) by Albert Einstein

📷 A. Einstein photographed by Ben Meyer at Einstein's home in Santa Barbara, Caltech Archives Image

lejdi koci retweeted

Mind blown! 🤯

New research shows models like Stable Diffusion secretly learn 3D geometry, even without any depth data.

It's building a 3D game engine in its brain to realistically draw what we describe.

arxiv.org/abs/2306.05720

lejdi koci retweeted

Scrum is a cancer.

I've been writing software for 25 years, and nothing renders a software team useless like Scrum does.

Some anecdotes:

1. They tried to convince me that Poker is a planning tool, not a game.

2. If you want to be more efficient, you must add process, not remove it. They had us attending the "ceremonies," a fancy name for a buttload of meetings: stand-ups, groomings, planning, retrospectives, and Scrum of Scrums. We spent more time talking than doing.

3. We prohibited laptops in meetings. We had to stand. We passed a ball around to keep everyone paying attention.

4. We spent more time estimating story points than writing software. Story points measure complexity, not time, but we had to decide how many story points fit in a sprint.

5. I had to use t-shirt sizes to estimate software.

6. We measured how much it cost to deliver one story point and then wrote contracts where clients paid for a package of "500 story points."

7. Management lost it when they found that 500 story points in one project weren't the same as 500 story points on another project. We had many meetings to fix this.

8. Imagine having a manager, a scrum master, a product owner, and a tech lead. You had to answer to all of them and none simultaneously.

9. We paid people who told us whether we were "burning down points" fast enough. Weren't story points about complexity instead of time? Never mind.

I believe in Agile, but this ain't agile.

We brought in professional Scrum trainers. We paid people from our team to get certified. We tried Scrum this way and that other way. We spent years doing it.

The result was always the same: It didn't work.

Scrum is a cancer that will eat your development team. Scrum is not for developers; it's another tool for managers to feel they are in control.

But the best part about Scrum is those who look you in the eye and tell you: "If it doesn't work for you, you are doing it wrong. Scrum is anything that works for your team."

Sure it is.

@teknium I couldn’t get Copilot to produce good results in Visual Studio with a C# .NET project. Strangely enough, it was a disappointment even compared to ChatGPT 3.5…
