prompt surfer

2.8K posts


@promptsurfer

GEN AI ART

South Salt Lake, UT · Joined April 2024

513 Following · 167 Followers

prompt surfer retweeted

This hole unlocks a new wave of companies, which build deep domain expertise to solve a vertical problem with expert-quality automation!

Sam Altman@sama

this works amazingly well; it has been one of my biggest surprises of 2024. excited to see what people build!


prompt surfer retweeted

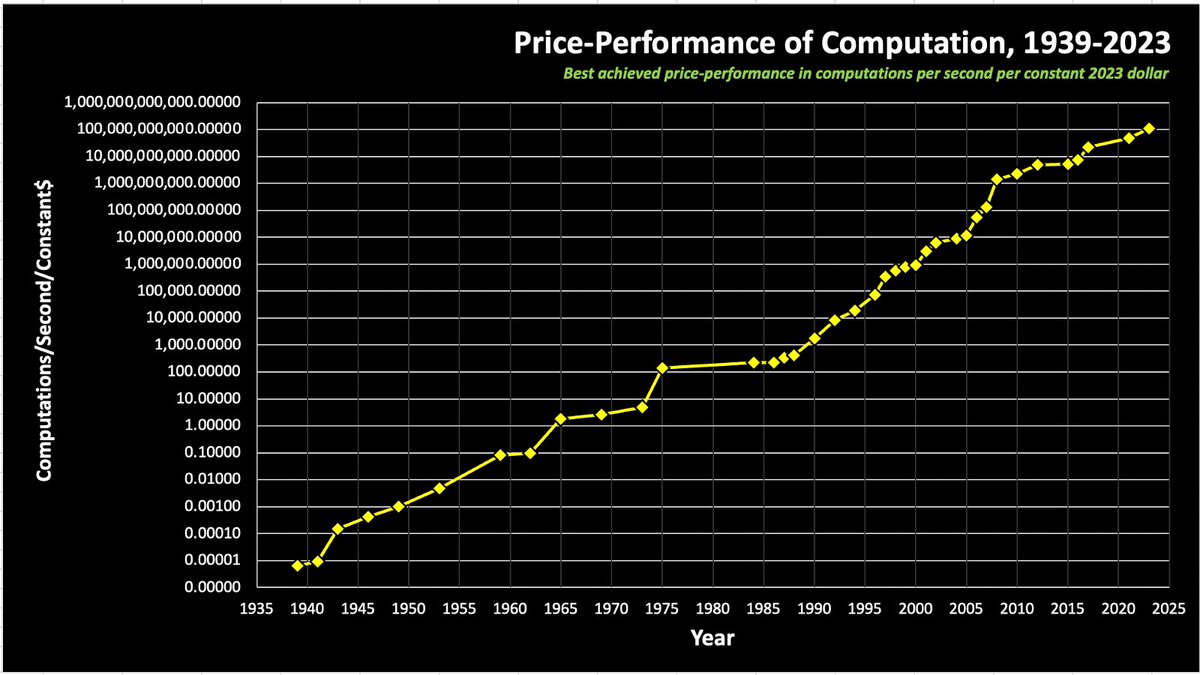

The Moore's Law Update

NOTE: this is a semi-log graph, so a straight line is an exponential; each y-axis tick is 100x. This graph covers a 1,000,000,000,000,000,000,000x improvement in computation/$. Pause to let that sink in.
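As a rough check on those numbers, here is a minimal Python sketch. It assumes the 10^21 improvement factor quoted above is spread over the 128-year span the post mentions further down; the function names are illustrative and not from the original post.

```python
import math

def doubling_time_years(total_factor: float, span_years: float) -> float:
    """Average doubling time implied by a total improvement over a time span."""
    doublings = math.log2(total_factor)  # how many times the quantity doubled
    return span_years / doublings

def ticks_spanned(total_factor: float, factor_per_tick: float = 100.0) -> float:
    """Number of y-axis ticks a given improvement covers on a log-scale plot."""
    return math.log(total_factor) / math.log(factor_per_tick)

if __name__ == "__main__":
    factor = 1e21   # improvement in computation/$ quoted in the post
    years = 128     # span quoted later in the post (assumed to match the chart)
    t = doubling_time_years(factor, years)
    print(f"implied doubling time: {t:.2f} years ({t * 12:.0f} months)")
    print(f"100x ticks spanned: {ticks_spanned(factor):.1f}")
```

Under these assumptions the implied average doubling time is a little over 1.8 years (about 22 months), and the chart spans about 10.5 ticks of 100x each.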

Humanity’s capacity to compute has compounded for as long as we can measure it, exogenous to the economy, and starting long before Intel co-founder Gordon Moore noticed a refraction of the longer-term trend in the belly of the fledgling semiconductor industry in 1965.

I have color coded it to show the transition among the integrated circuit architectures. You can see how the mantle of Moore's Law has transitioned most recently from the GPU (green dots) to the ASIC (yellow and orange dots), and the NVIDIA Hopper architecture itself is a transitionary species — from GPU to ASIC, with 8-bit performance optimized for AI models, the majority of new compute cycles.

There are thousands of invisible dots below the line, the frontier of humanity's capacity to compute (e.g., everything from Intel in the past 15 years). The computational frontier has shifted across many technology substrates over the past 128 years. Intel ceded leadership to NVIDIA 15 years ago, and further handoffs are inevitable.

Why the transition within the integrated circuit era? Intel lost to NVIDIA for neural networks because the fine-grained parallel compute architecture of a GPU maps better to the needs of deep learning. There is a poetic beauty to the computational similarity of a processor optimized for graphics processing and the computational needs of a sensory cortex, as commonly seen in the neural networks of 2014. A custom ASIC chip optimized for neural networks extends that trend to its inevitable future in the digital domain. Further advances are possible with analog in-memory compute, an even closer biomimicry of the human cortex. The best business planning assumption is that Moore’s Law, as depicted here, will continue for the next 20 years as it has for the past 128. (Note: the top right dot for Mythic is a prediction for 2026 showing the effect of a simple process shrink from an ancient 40nm process node)

----

For those unfamiliar with this chart, here is a more detailed description:

Moore's Law is both a prediction and an abstraction. It is commonly reported as a doubling of transistor density every 18 months. But this is not something the co-founder of Intel, Gordon Moore, has ever said. It is a nice blending of his two predictions; in 1965, he predicted an annual doubling of transistor counts in the most cost-effective chip and revised it in 1975 to every 24 months. With a little hand-waving, most reports attribute 18 months to Moore’s Law, but there is quite a bit of variability. The popular perception of Moore’s Law is that computer chips are compounding in their complexity at near-constant per-unit cost. This is one of the many abstractions of Moore’s Law, and it relates to the compounding of transistor density in two dimensions. Others relate to speed (the signals have less distance to travel) and computational power (speed x density).
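To see how much the choice of doubling period matters, the following Python sketch compares the compounded growth over one decade for the 12-, 18-, and 24-month figures mentioned above. The ten-year horizon and the function name are assumptions chosen for illustration only.

```python
def growth_factor(years: float, doubling_months: float) -> float:
    """Total multiplicative growth after `years` at a fixed doubling period."""
    doublings = years * 12.0 / doubling_months
    return 2.0 ** doublings

if __name__ == "__main__":
    horizon_years = 10  # illustrative horizon, not from the original post
    for months in (12, 18, 24):
        factor = growth_factor(horizon_years, months)
        print(f"doubling every {months} months -> ~{factor:,.0f}x in {horizon_years} years")
```

Over ten years, a 12-month doubling compounds to 1,024x, an 18-month doubling to roughly 100x, and a 24-month doubling to 32x, which is why the "hand-waving" between the three figures is not a small detail.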

Unless you work for a chip company and focus on fab-yield optimization, you do not care about transistor counts. Integrated circuit customers do not buy transistors. Consumers of technology purchase computational speed and data storage density. When recast in these terms, Moore’s Law is no longer a transistor-centric metric, and this abstraction allows for longer-term analysis.

What Moore observed in the belly of the early IC industry was a derivative metric, a refracted signal, from a longer-term trend, a trend that begs various philosophical questions and predicts mind-bending AI futures.

In the modern era of accelerating change in the tech industry, it is hard to find even five-year trends with any predictive value, let alone trends that span the centuries.

I would go further and assert that this is the most important graph ever conceived. A large and growing set of industries depends on continued exponential cost declines in computational power and storage density. Moore’s Law drives electronics, communications and computers and has become a primary driver in drug discovery, biotech and bioinformatics, medical imaging and diagnostics. As Moore’s Law crosses critical thresholds, a formerly lab science of trial and error experimentation becomes a simulation science, and the pace of progress accelerates dramatically, creating opportunities for new entrants in new industries. Consider the autonomous software stack for Tesla and SpaceX and the impact that is having on the automotive and aerospace sectors.

Every industry on our planet is going to become an information business. Consider agriculture. If you ask a farmer in 20 years’ time about how they compete, it will depend on how they use information — from satellite imagery driving robotic field optimization to the code in their seeds. It will have nothing to do with workmanship or labor. That will eventually percolate through every industry as IT innervates the economy.

Non-linear shifts in the marketplace are also essential for entrepreneurship and meaningful change. Technology’s exponential pace of progress has been the primary juggernaut of perpetual market disruption, spawning wave after wave of opportunities for new companies. Without disruption, entrepreneurs would not exist.

Moore’s Law is not just exogenous to the economy; it is why we have economic growth and an accelerating pace of progress. At Future Ventures, we see that in the growing diversity and global impact of the entrepreneurial ideas that we see each year — from automobiles and aerospace to energy and chemicals.

We live in interesting times, at the cusp of the frontiers of the unknown and breathtaking advances. But it should always feel that way, engendering a perpetual sense of future shock.


prompt surfer retweeted

Introducing: Runner H 🏃🤖

After raising a $200m seed round, @hcompany_ai just dropped information about Runner H.

It's a specialized 3B model that can accomplish real-world tasks for you.

Details and WILD demos below 🧵


Has Pre-Training Hit a Wall? A Closer Look at the Debate

There’s a growing narrative in the AI community that pre-training has reached its limits. Proponents of this idea argue that we’ve extracted most of the value from large-scale pre-training, and that future gains will require innovations in scaling test-time compute rather than in the pre-training phase itself. OpenAI’s o1-preview, for example, is cited as a model demonstrating how allocating more compute at inference time can enhance capabilities without further scaling dataset size or training resources. The claim is that these methods are necessary because we’ve hit a wall in improving models through traditional pre-training.

I disagree.

The Untapped Potential of Pre-Training

The idea that pre-training has "hit a wall" overlooks a fundamental challenge: we haven’t yet trained models on the best possible data humanity has to offer. Much of the world’s most valuable information remains locked behind barriers of intellectual property (IP), copyright, and legal restrictions. These constraints limit the diversity and richness of training datasets, leaving models with only a partial representation of the knowledge they could potentially learn from.

Furthermore, even when such data is theoretically accessible, the cost of acquiring and curating rare, high-quality data is astronomically high. As a result, pre-training efforts are often limited to data that is widely available or less expensive to obtain. This creates a situation where the perceived plateau in pre-training performance may be less about the methodology itself and more about the scarcity of the right kind of data.

The Cost of Rare Data

The rarer and more specialized the data, the greater the expense. This economic reality means that much of the high-value, nuanced data necessary for pushing pre-trained models to the next level remains unused. Until these barriers are addressed, it’s premature to declare that pre-training has hit its limits.

Scaling Test-Time Compute: Complementary, Not Replacement

The move towards scaling test-time compute is an exciting innovation, and models like o1-preview demonstrate how inference-time improvements can lead to better model performance. These approaches enable models to simulate human-like reasoning by leveraging additional compute resources during inference, which is a significant step forward.

However, this doesn’t mean pre-training has reached its endpoint. Test-time compute and pre-training are not mutually exclusive. Instead, they should be seen as complementary strategies. Better pre-training, fueled by more comprehensive datasets, would enhance the capabilities of models even before test-time compute is applied. In turn, stronger pre-training reduces the dependency on massive inference compute, creating a more balanced and efficient AI system.

Breaking the Data Barrier

If we want to continue advancing AI, we need to focus on breaking the data barrier. This involves addressing legal and economic hurdles to unlock high-quality datasets while ensuring ethical and responsible use. Innovations in data acquisition, such as better synthetic data generation or collaborative data-sharing frameworks, could also play a pivotal role.

The Future of Pre-Training

The belief that pre-training has hit a wall is shortsighted. We’re not at the limits of what pre-training can achieve; we’re at the limits of what our current access to data allows us to achieve. By overcoming the data scarcity challenge, we can unlock the next wave of AI progress. Test-time compute is a powerful tool, but it should enhance, not replace, the foundational role of pre-training in building smarter, more capable models.

Let’s not close the book on pre-training just yet. Instead, let’s work to ensure that AI has the data it needs to realize its full potential.


@OfficialLoganK @simonw Why such a big difference between this and Gemini 1.5 Pro?


Say hello to gemini-exp-1121! Our latest experimental gemini model, with:

- significant gains on coding performance

- stronger reasoning capabilities

- improved visual understanding

Available on Google AI Studio and the Gemini API right now: aistudio.google.com


@tsarnick It's absolutely insane to think that we've perfected pretraining.


@tsarnick Bullshit. We just haven't gotten all the best data in the models yet because the best data is astronomically expensive the rarer it is. So companies keep on ranting about "test time compute" and synthetic data. We just need more efficient models and better data sets.


Arc is not going anywhere.

And since we are now building a more mass market, second product, we can give our Arc loyalists more gifts like these!

Now you can pick a custom dock icon for Arc👇enjoy!

Arc@arcinternet

'tis the season for giving 𝓉𝒽𝒶𝓃𝓀𝓈 🔓 all Arc icons are now unlocked on Mac🔓 a lil thanks from us, to you


@elonmusk @BrianRoemmele Lmao this is what Utah would look like if Elon moved here
