Peter Schafhalter

@pschafhalter

AI-Sys PhD student @ucbrise @ucberkeley focusing on systems for self-driving cars

🚀 Just Launched: VideoArena! 🎥 Discover head-to-head comparisons of video clips generated from the same prompts across top text-to-video models. Compare outputs from 7 leading models and we're adding more soon! 🔗 Check out the leaderboard: videoarena.tv #Text2Video

@conor_power23 PSA: everybody just take the OS prelims. We overhauled the prelims last semester, and it’s now a pure riot 🎆🎉 cc @samkumar_cs @pschafhalter

We’re open-sourcing and arxiving GPUDrive, a GPU-accelerated 2.5D multi-agent driving simulator that runs at over a million FPS. Hundreds of scenes on one GPU means scalable multi-agent planning

We’ve built the Waymo Driver to operate without the need for human intervention, and in order to do that, it sometimes requires additional context from our fleet response team. See their role in helping us safely scale: waymo.com/blog/2024/05/f…

Ray operates at two levels: Ray Core, which scales Python functions and classes with tasks and actors, and its libraries, offering easy-to-use abstractions tailored for ML workloads. #Ray #ML #DistributedComputing

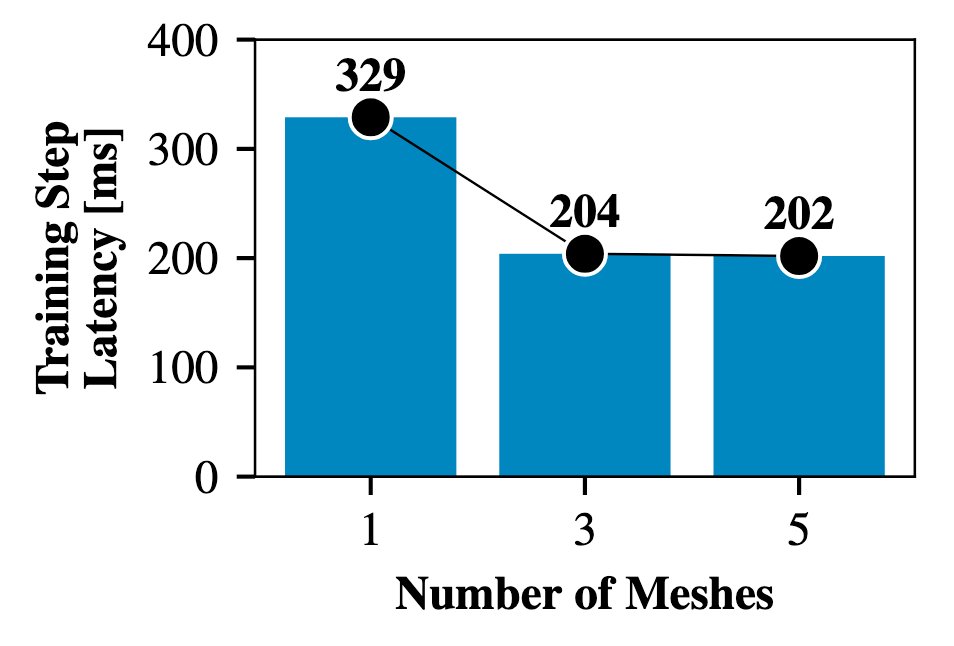

How do state-of-the-art LLMs like Gemini 1.5 and Claude 3 scale to long context windows beyond 1M tokens? Well, Ring Attention by @haoliuhl presents a way to split attention calculation across GPUs while hiding the communication overhead in a ring, enabling zero overhead scaling
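
For readers who want to see the idea in the post above, here is a minimal single-process sketch of the ring pattern, not the authors' implementation. It assumes non-causal attention, plain Python lists instead of GPU tensors, and hypothetical function names (`full_attention`, `ring_attention`). Each simulated "device" keeps its own query block while key/value blocks rotate around the ring, and partial results are merged with a running (online) softmax. The overlap of communication with compute that hides the overhead on real hardware is not modeled here; the rotation step simply stands in for the send/receive.

```python
import math


def full_attention(q, k, v):
    """Reference: softmax(q k^T / sqrt(d)) v computed in one shot."""
    d = len(q[0])
    out = []
    for qi in q:
        scores = [sum(a * b for a, b in zip(qi, kj)) / math.sqrt(d) for kj in k]
        m = max(scores)
        weights = [math.exp(s - m) for s in scores]
        total = sum(weights)
        out.append([sum(w * vj[c] for w, vj in zip(weights, v)) / total
                    for c in range(len(v[0]))])
    return out


def ring_attention(q_blocks, k_blocks, v_blocks):
    """Simulate n devices in a ring (hypothetical helper, illustration only).

    Device i keeps q_blocks[i]; key/value blocks are passed around the ring
    so that after n steps every device has seen every key/value block.
    """
    n = len(q_blocks)
    d = len(q_blocks[0][0])
    dv = len(v_blocks[0][0])
    # Per-query running state: max score m, softmax denominator l,
    # and unnormalized weighted sum of values acc.
    state = [[{"m": -math.inf, "l": 0.0, "acc": [0.0] * dv} for _ in qb]
             for qb in q_blocks]
    kv = list(zip(k_blocks, v_blocks))  # kv[i] is the block device i holds now
    for _ in range(n):
        for dev in range(n):
            k_blk, v_blk = kv[dev]
            for qi, st in zip(q_blocks[dev], state[dev]):
                for kj, vj in zip(k_blk, v_blk):
                    s = sum(a * b for a, b in zip(qi, kj)) / math.sqrt(d)
                    m_new = max(st["m"], s)
                    scale = math.exp(st["m"] - m_new)  # rescale old partial sums
                    w = math.exp(s - m_new)
                    st["l"] = st["l"] * scale + w
                    st["acc"] = [a * scale + w * x for a, x in zip(st["acc"], vj)]
                    st["m"] = m_new
        # Stand-in for communication: device i receives the block from device i-1.
        kv = kv[-1:] + kv[:-1]
    return [[[a / st["l"] for a in st["acc"]] for st in dev_state]
            for dev_state in state]
```

Splitting a sequence into blocks, running `ring_attention`, and concatenating the per-device outputs should match `full_attention` on the unsplit sequence up to floating-point error, which is the property that lets context length grow with the number of devices.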