OpenAI’s decision to enable erotica in ChatGPT is drawing opposition from its own advisers. 📣

In January, a council of advisers selected by OpenAI warned that AI-powered erotica could foster unhealthy emotional dependence on ChatGPT among users, and that minors could find ways to access sex chats. One council member even suggested that OpenAI risked creating a “sexy suicide coach.”

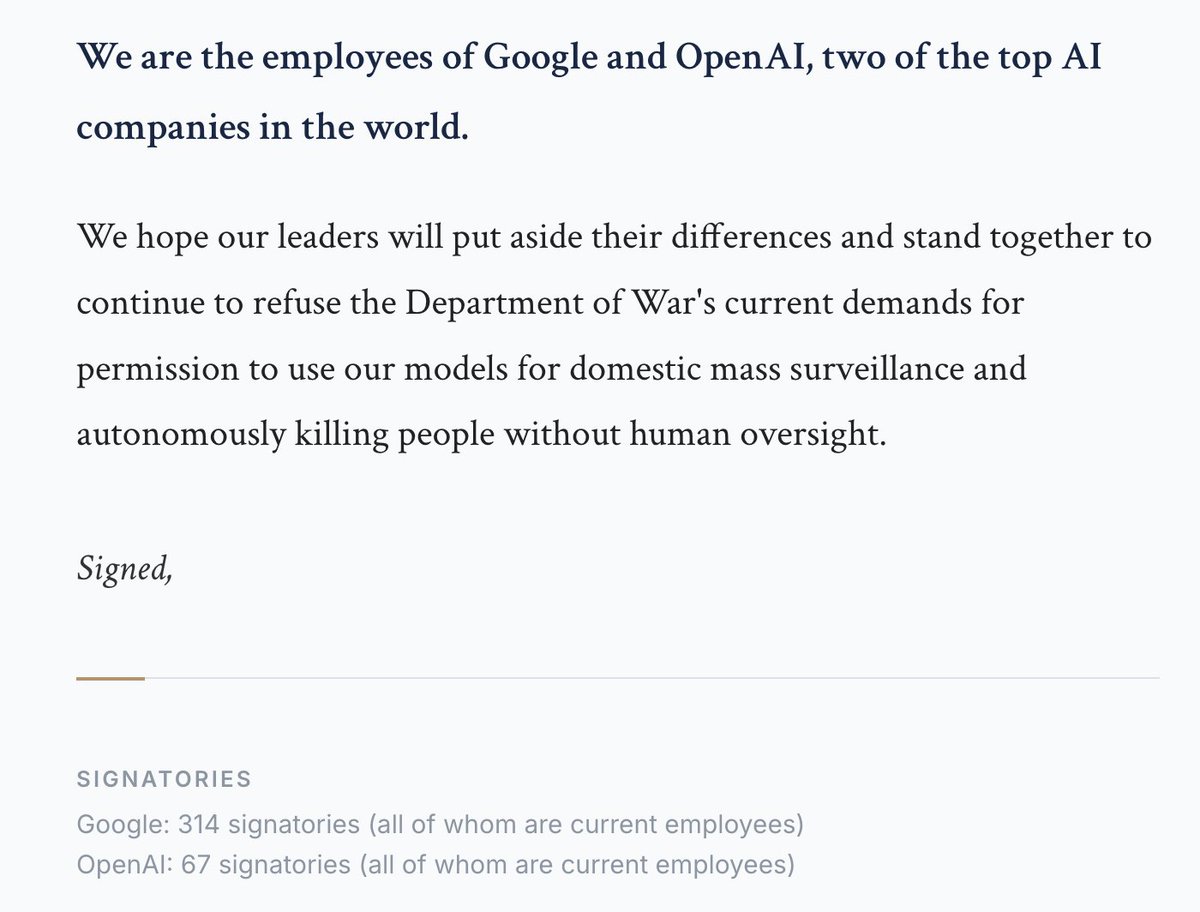

This is exactly why insider voices matter: without external oversight, only people inside these companies can push back on products that could harm vulnerable users—especially children.

#AI #OpenAI

wsj.com/tech/ai/openai…
