Adrien Pacifico retweeted

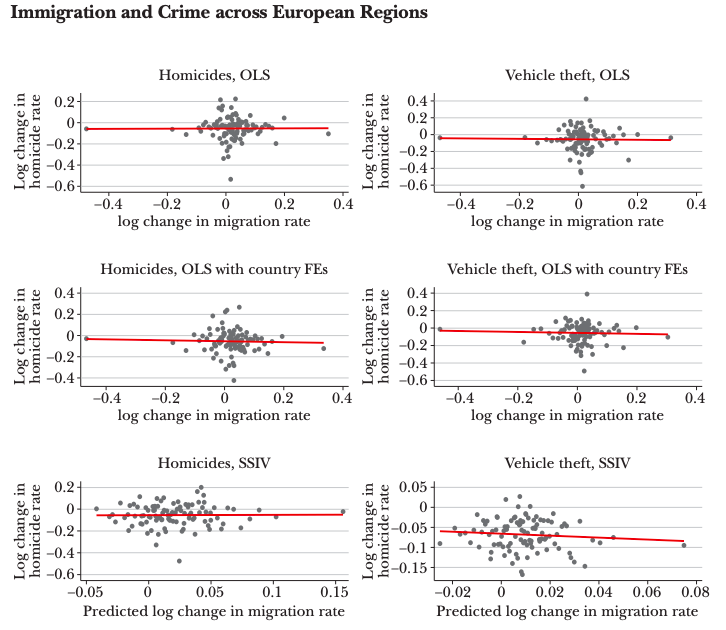

The National Assembly has just decided that police officers may kill citizens without having to explain why.

LCP (@LCP)

The deputies adopt the government's amendment, which provides for a presumption of legitimate use of a weapon by law enforcement. #DirectAN
