Ragavan

4.3K posts

@ragavan

Llama @ Meta. Prev: General Catalyst, Facebook AI, Mozilla. Increase access & opportunity for every human.

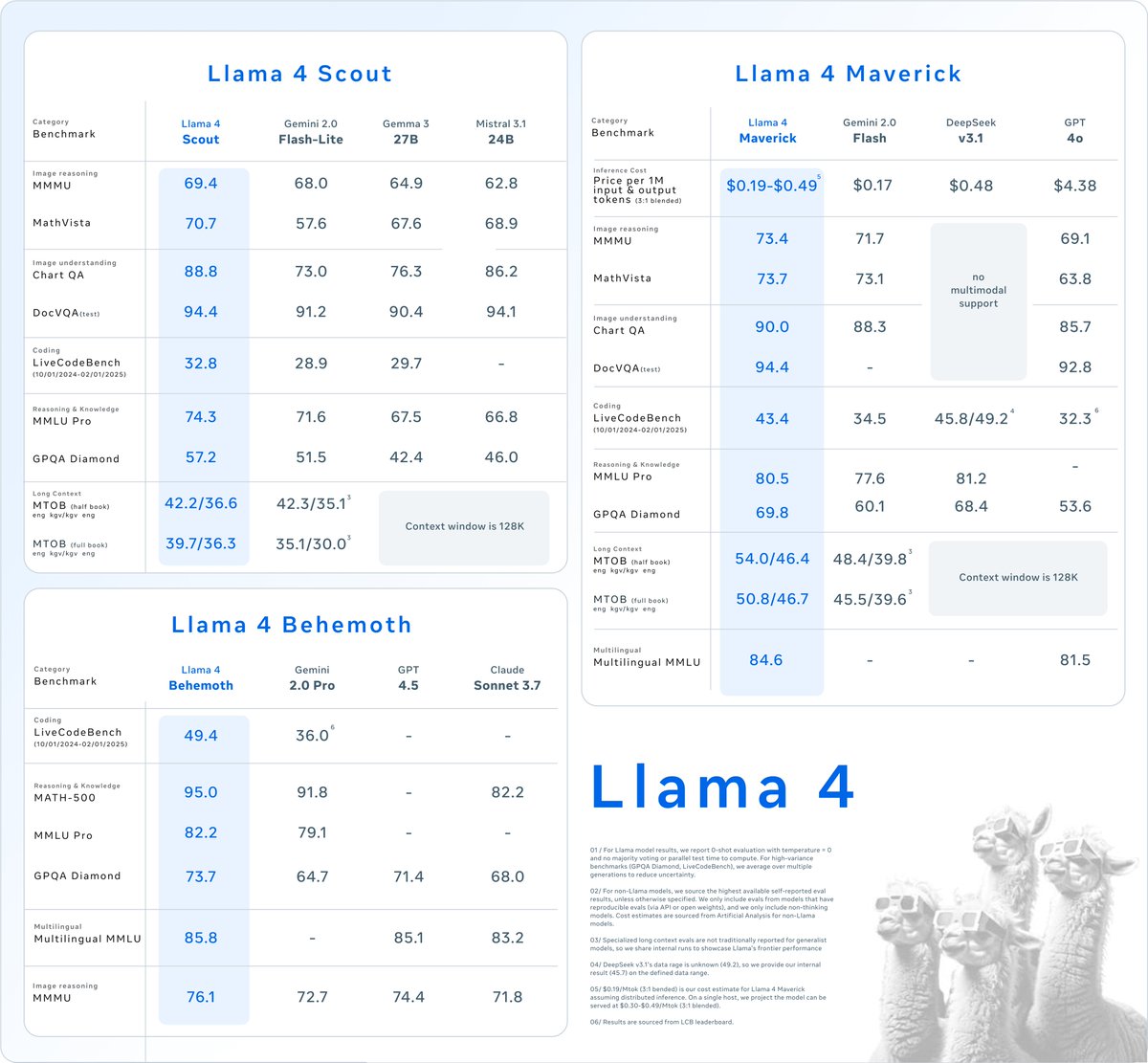

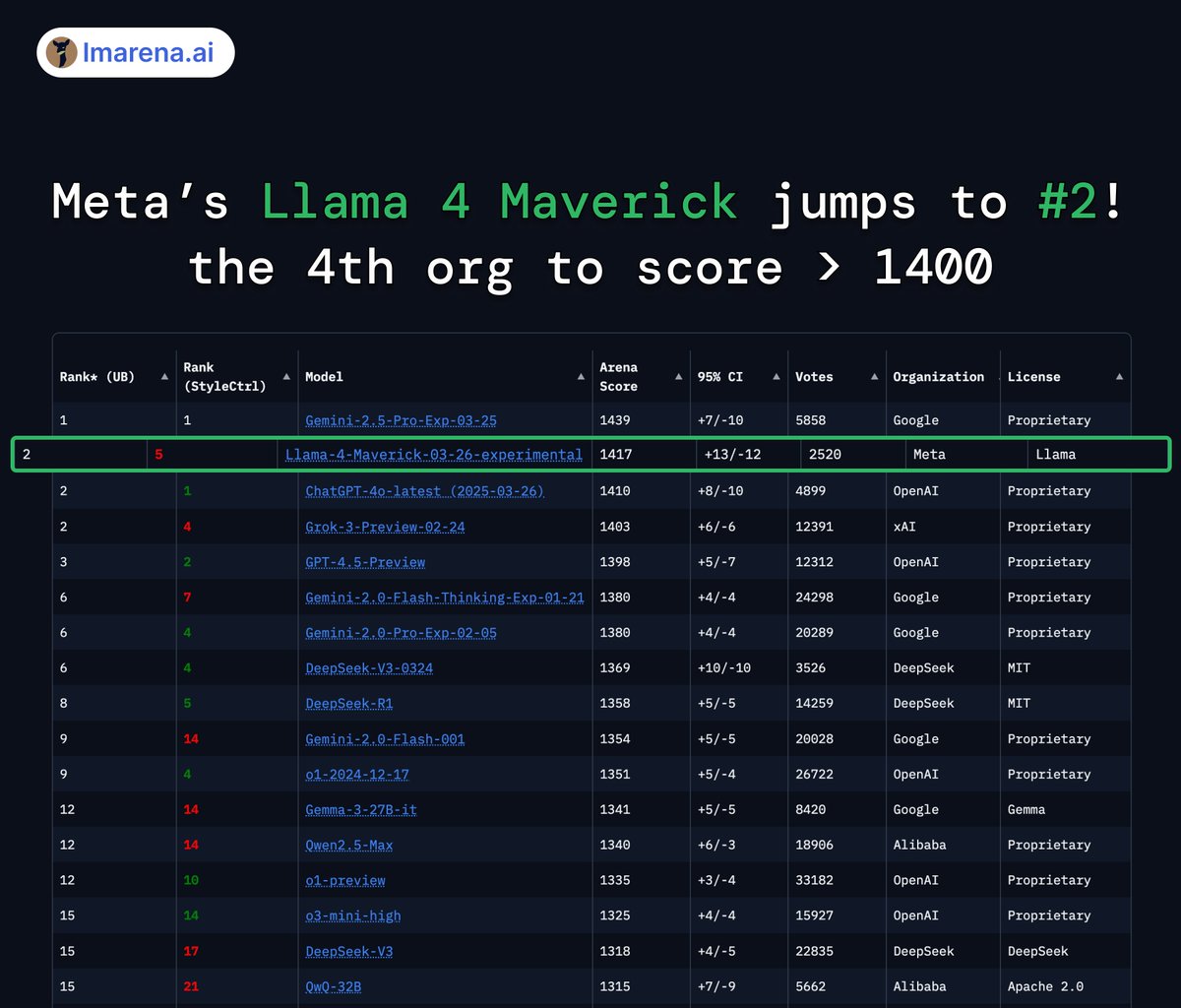

Today is the start of a new era of natively multimodal AI innovation.

Today, we’re introducing the first Llama 4 models: Llama 4 Scout and Llama 4 Maverick, our most advanced models yet and the best in their class for multimodality.

Llama 4 Scout
• 17B-active-parameter model with 16 experts.
• Industry-leading context window of 10M tokens.
• Outperforms Gemma 3, Gemini 2.0 Flash-Lite and Mistral 3.1 across a broad range of widely accepted benchmarks.

Llama 4 Maverick
• 17B-active-parameter model with 128 experts.
• Best-in-class image grounding with the ability to align user prompts with relevant visual concepts and anchor model responses to regions in the image.
• Outperforms GPT-4o and Gemini 2.0 Flash across a broad range of widely accepted benchmarks.
• Achieves comparable results to DeepSeek v3 on reasoning and coding, at half the active parameters.
• Unparalleled performance-to-cost ratio with a chat version scoring ELO of 1417 on LMArena.

These models are our best yet thanks to distillation from Llama 4 Behemoth, our most powerful model yet. Llama 4 Behemoth is still in training and is currently seeing results that outperform GPT-4.5, Claude Sonnet 3.7, and Gemini 2.0 Pro on STEM-focused benchmarks. We’re excited to share more details about it even while it’s still in flight.

Read more about the first Llama 4 models, including training and benchmarks ➡️ go.fb.me/gmjohs

Download Llama 4 ➡️ go.fb.me/bwwhe9

🎥 Today we’re premiering Meta Movie Gen: the most advanced media foundation models to date.

Developed by AI research teams at Meta, Movie Gen delivers state-of-the-art results across a range of capabilities. We’re excited for the potential of this line of research to usher in entirely new possibilities for casual creators and creative professionals alike.

More details and examples of what Movie Gen can do ➡️ go.fb.me/kx1nqm

🛠️ Movie Gen models and capabilities

Movie Gen Video: A 30B-parameter transformer model that can generate high-quality, high-definition images and videos from a single text prompt.

Movie Gen Audio: A 13B-parameter transformer model that can take a video input, along with optional text prompts for controllability, and generate high-fidelity audio synced to the video. It can generate ambient sound, instrumental background music and foley sound, delivering state-of-the-art results in audio quality, video-to-audio alignment and text-to-audio alignment.

Precise video editing: Using a generated or existing video and accompanying text instructions as input, it can perform localized edits such as adding, removing or replacing elements, or global changes such as background or style changes.

Personalized videos: Using an image of a person and a text prompt, the model can generate a video with state-of-the-art results on character preservation and natural movement.

We’re continuing to work closely with creative professionals from across the field to integrate their feedback as we work towards a potential release. We look forward to sharing more on this work and the creative possibilities it will enable in the future.

I'm so excited to introduce Augment, the company where I get to focus on supporting developers, ridding them of toil and allowing them to enjoy building!