Raphaël Millière

@raphaelmilliere

AI & Cognitive Science @UniofOxford @EthicsInAI Fellow @JesusOxford @raphaelmilliere.com on 🦋 Blog: https://t.co/2hJjfShFfr

Guys, stop pestering Mech Interp researchers about causality please! It's this inexplicable obsession with causality that made us lose beautiful sciences like Astrology, Palmistry and Phrenology! 😡

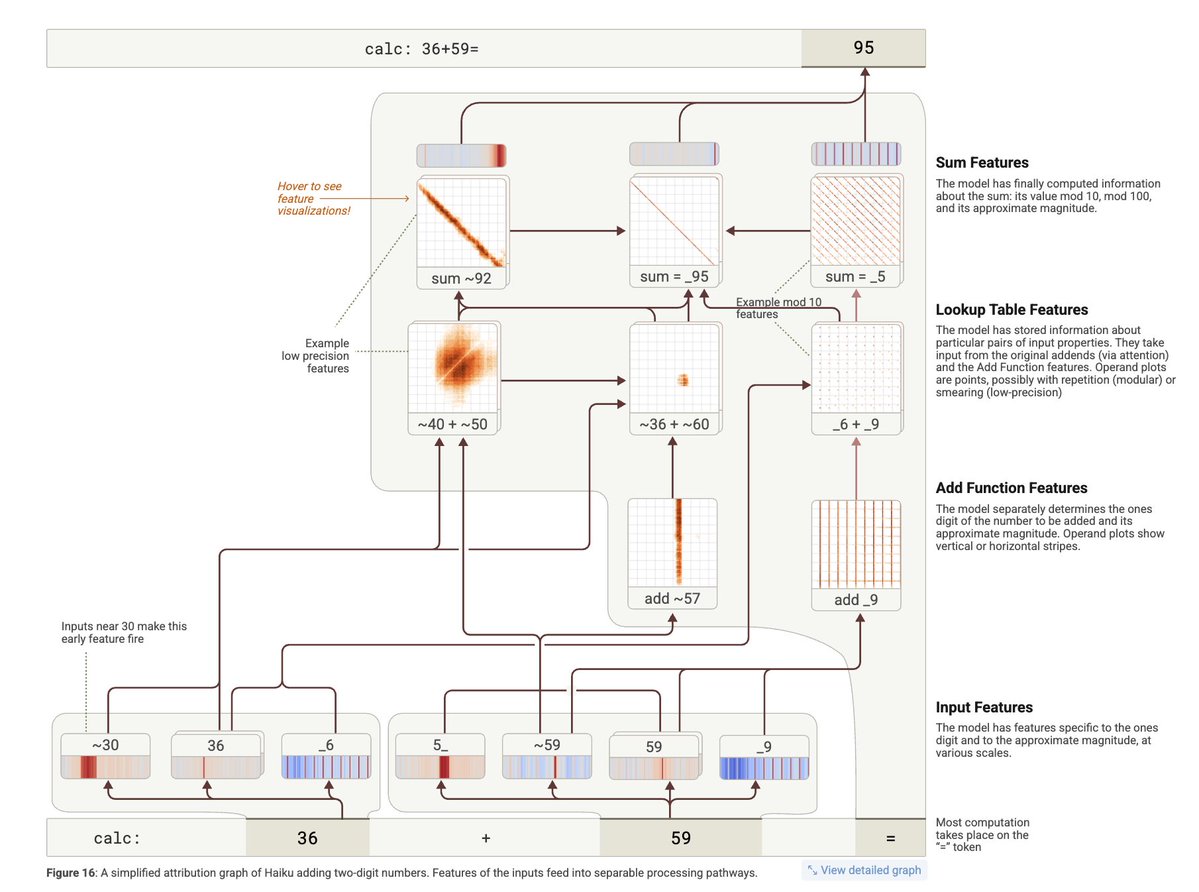

How well does this work? One quick independent test is to see if it can recover an "internal CoT" in cases where AIs can solve math problems in a single forward pass. TLDR: it doesn't. (TBC, this might require the NLA to see activations at multiple positions/locations to work.)

@jatin_n0 Mostly a joke, it's a cool paper! Yes, the planning result is causal, but it only looks at total effect (i.e. an NLA-derived resid stream edit changes the output). I was referring to the causal effect on the model's downstream computations, not anything inside/after the autoencoder. 1/2

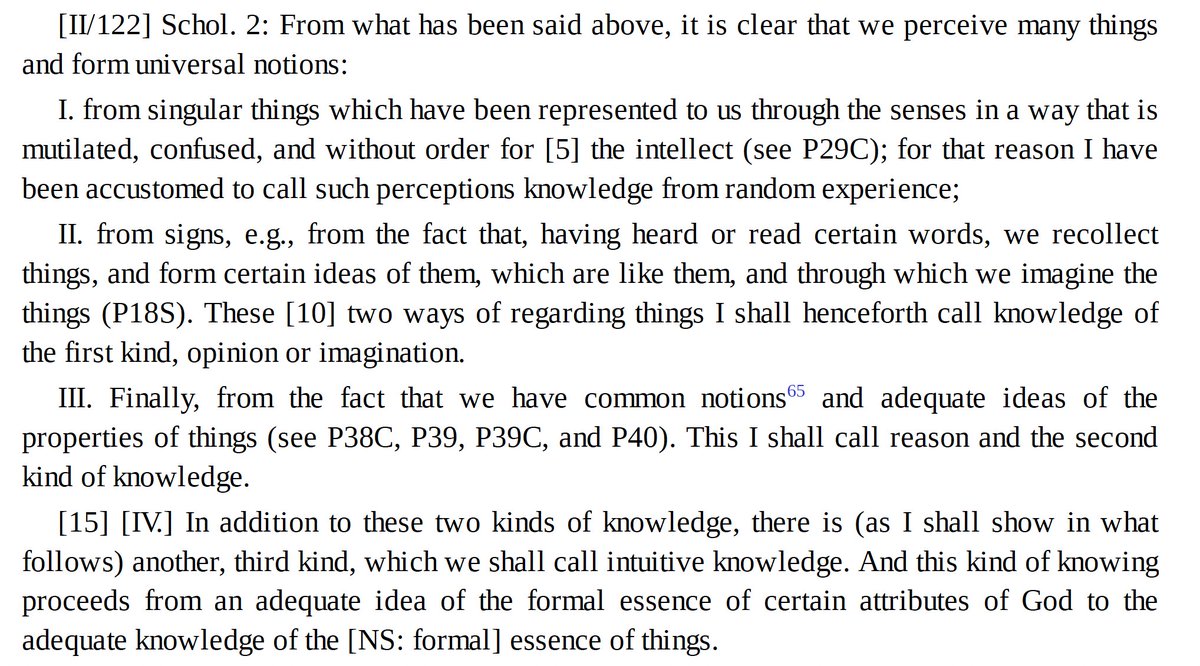

Despite extensive safety training, LLMs remain vulnerable to “jailbreaking” through adversarial prompts. Why does this vulnerability persist? In a new paper published in Philosophical Studies, I argue this is because current alignment methods are fundamentally shallow. 1/13