Seal with headphones

1.7K posts

@realPascalMatta

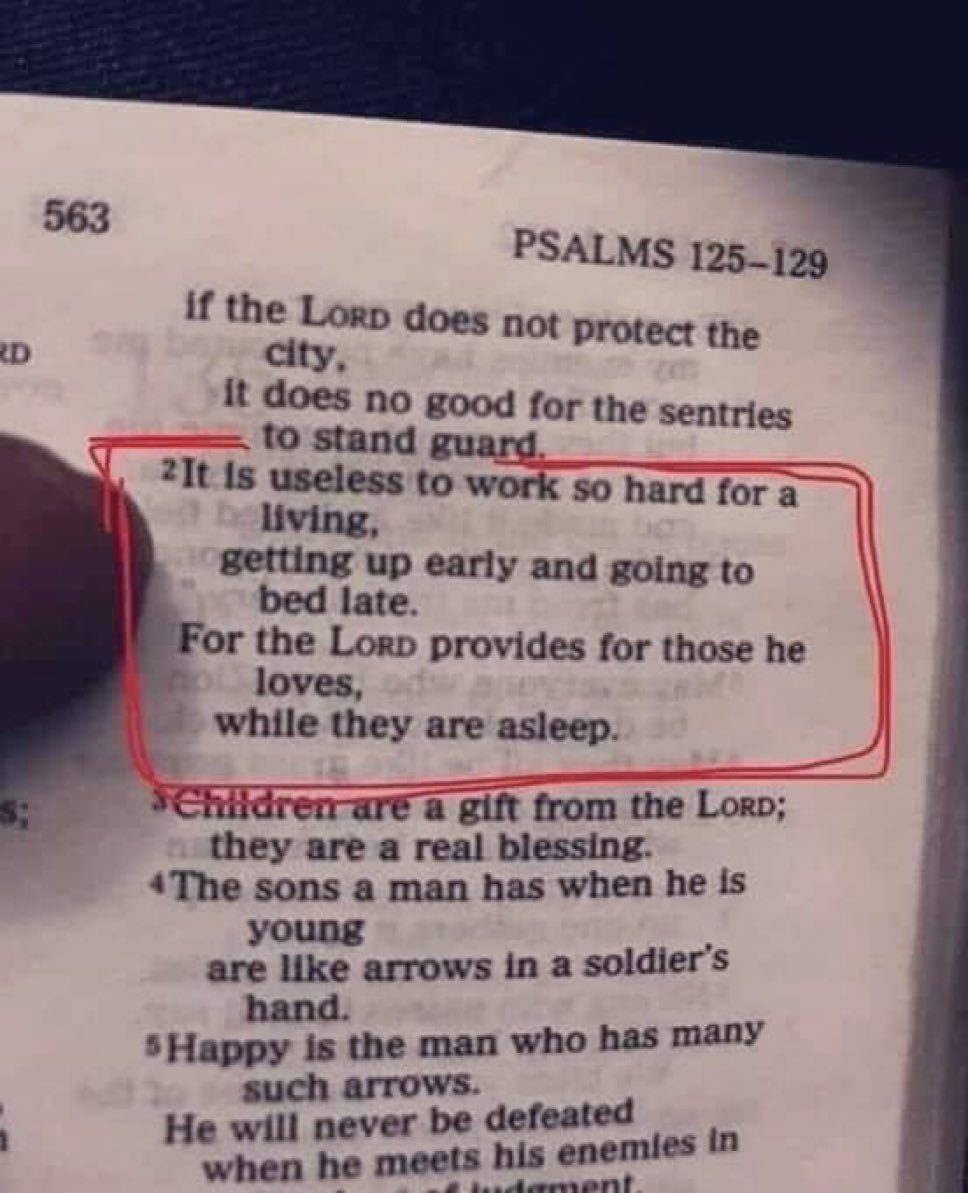

Jesus Christ first. Lebanese/American. SpaceX, Let's talk /hardware. PhD Mathematics. AI, General AI. NLOS, LIME, SHAP. Colossians 3:23

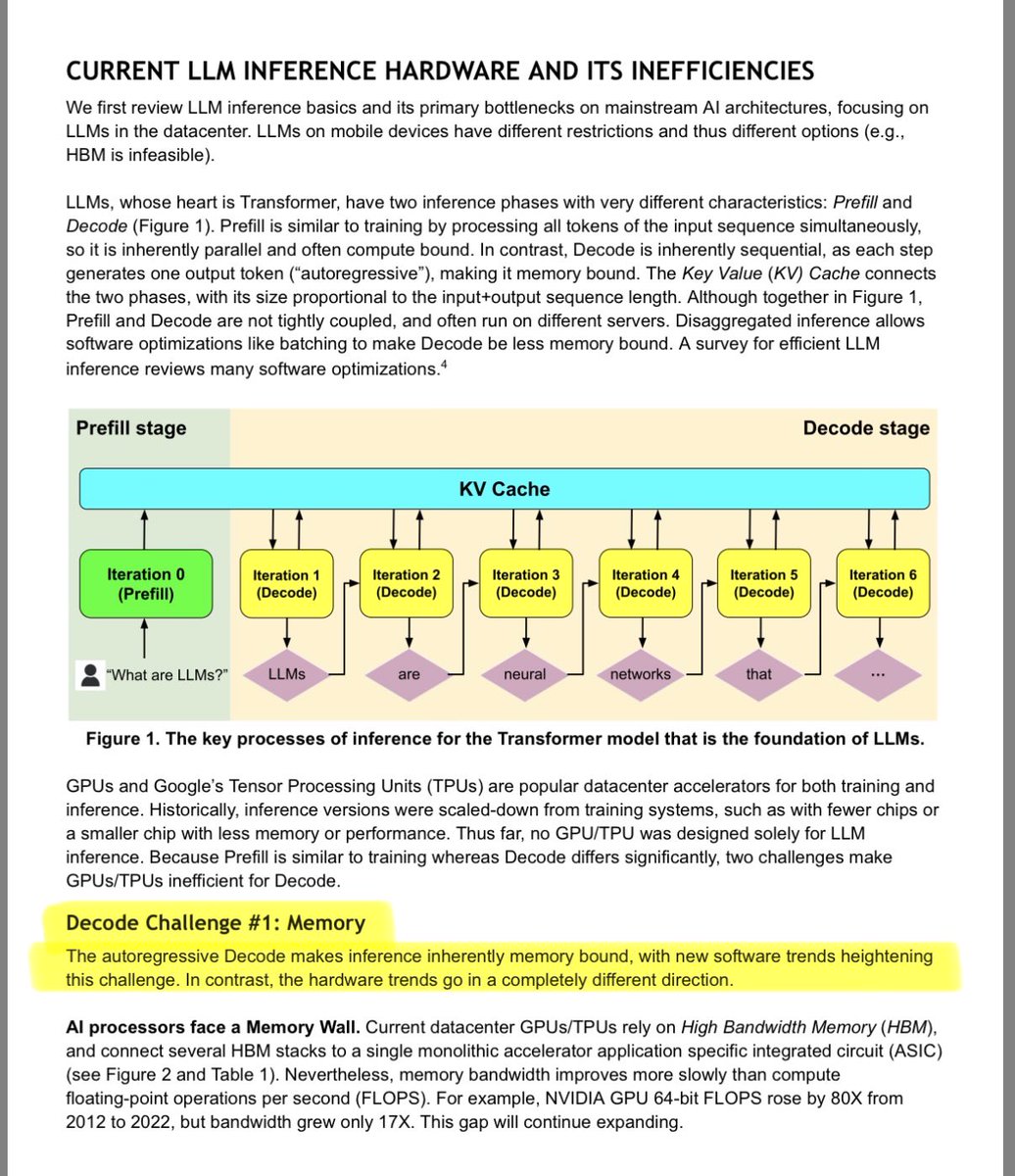

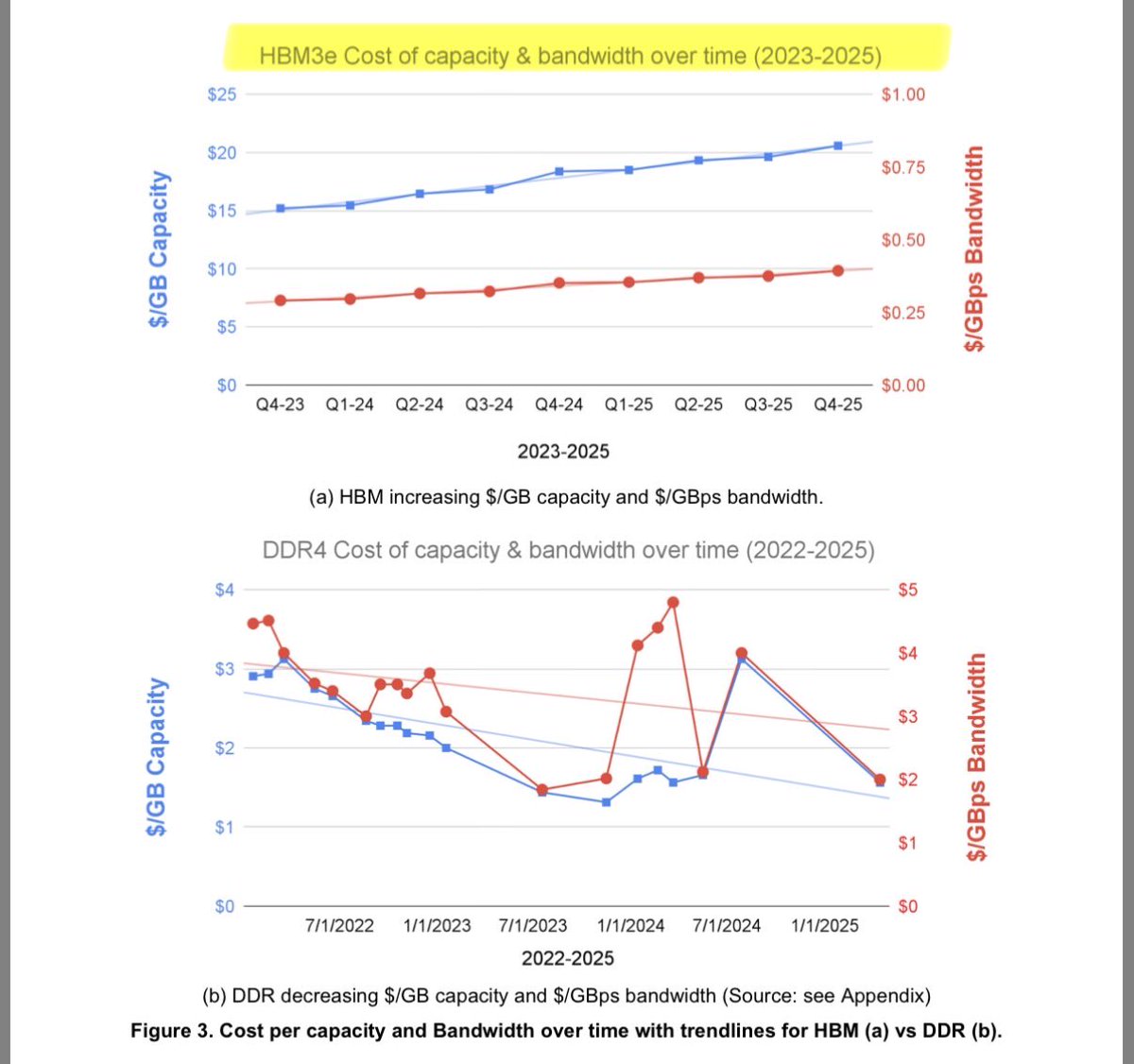

🚨 BREAKING: A Google researcher and a Turing Award winner just published a paper that exposes the real crisis in AI. It's not training. It's inference. And the hardware we're using was never designed for it.

The paper is by Xiaoyu Ma and David Patterson. Accepted by IEEE Computer, 2026. No hype. No product launch. Just a cold breakdown of why serving LLMs is fundamentally broken at the hardware level.

The core argument is brutal:
→ GPU FLOPS grew 80X from 2012 to 2022
→ Memory bandwidth grew only 17X in that same period
→ HBM costs per GB are going UP, not down
→ The Decode phase is memory-bound, not compute-bound
→ We're building inference on chips designed for training

Here's the wildest part: OpenAI lost roughly $5B on $3.7B in revenue. The bottleneck isn't model quality. It's the cost of serving every single token to every single user. Inference is bleeding these companies dry.

And five trends are making it worse simultaneously:
→ MoE models like DeepSeek-V3 with 256 experts exploding memory
→ Reasoning models generating massive thought chains before answering
→ Multimodal inputs (image, audio, video) dwarfing text
→ Long-context windows straining KV caches
→ RAG pipelines injecting more context per request

Their four proposed hardware shifts:
→ High Bandwidth Flash: 512GB stacks at HBM-level bandwidth, 10X more memory per node
→ Processing-Near-Memory: logic dies placed next to memory, not on the same chip
→ 3D Memory-Logic Stacking: vertical connections delivering 2-3X lower power than HBM
→ Low-Latency Interconnect: fewer hops, in-network compute, SRAM packet buffers

Companies that tried SRAM-only chips like Cerebras and Groq already failed and had to add DRAM back.

This paper doesn't sell a product. It maps the entire hardware bottleneck and says: the industry is solving the wrong problem.

Paper dropped January 2026. Link in the first comment 👇
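To make the "memory-bound, not compute-bound" claim concrete, here is a minimal roofline-style sketch. It is not taken from the paper. The 80X and 17X growth figures come from the post above; everything else is an assumption for illustration: the function names are hypothetical, the accelerator numbers (1e15 FLOP/s peak, 3e12 bytes/s memory bandwidth) are made up, and the decode model is deliberately simplified (each weight is read once per step and used for one multiply-add per sequence in the batch, with KV-cache traffic ignored).

```python
def flops_to_bandwidth_gap(flops_growth: float, bandwidth_growth: float) -> float:
    """How much faster compute grew than memory bandwidth over the same period."""
    return flops_growth / bandwidth_growth


def decode_intensity(batch_size: int, bytes_per_param: float = 2.0) -> float:
    """Approximate FLOPs per byte of weights read in one decode step.

    Simplifying assumption: every weight is loaded once per step and used for
    one multiply-add (2 FLOPs) per sequence in the batch. KV-cache reads are
    ignored, so real decode is even more memory-heavy than this suggests.
    """
    return 2.0 * batch_size / bytes_per_param


def is_memory_bound(intensity: float, peak_flops: float, mem_bandwidth: float) -> bool:
    """Roofline test: below the ridge point, memory bandwidth is the limit."""
    ridge_point = peak_flops / mem_bandwidth  # FLOPs the chip can do per byte moved
    return intensity < ridge_point


# Growth figures quoted in the post (2012 to 2022).
gap = flops_to_bandwidth_gap(80, 17)

# Hypothetical accelerator, numbers chosen only for illustration.
PEAK_FLOPS = 1e15      # FLOP/s
MEM_BANDWIDTH = 3e12   # bytes/s

if __name__ == "__main__":
    print(f"compute outgrew bandwidth by about {gap:.1f}x")
    for batch in (1, 32, 512):
        bound = is_memory_bound(decode_intensity(batch), PEAK_FLOPS, MEM_BANDWIDTH)
        print(f"batch={batch}: {'memory' if bound else 'compute'}-bound")
```

Under these assumptions, decode only becomes compute-bound at batch sizes in the hundreds, which is one way to read the post's point that chips tuned for training throughput sit idle waiting on memory during inference.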

🦞These innovations come together to create a model that is well suited for long-running autonomous agents. On PinchBench—a benchmark for evaluating LLMs as @OpenClaw coding agents—Nemotron 3 Super scores 85.6% across the full test suite, making it the best open model in its class.

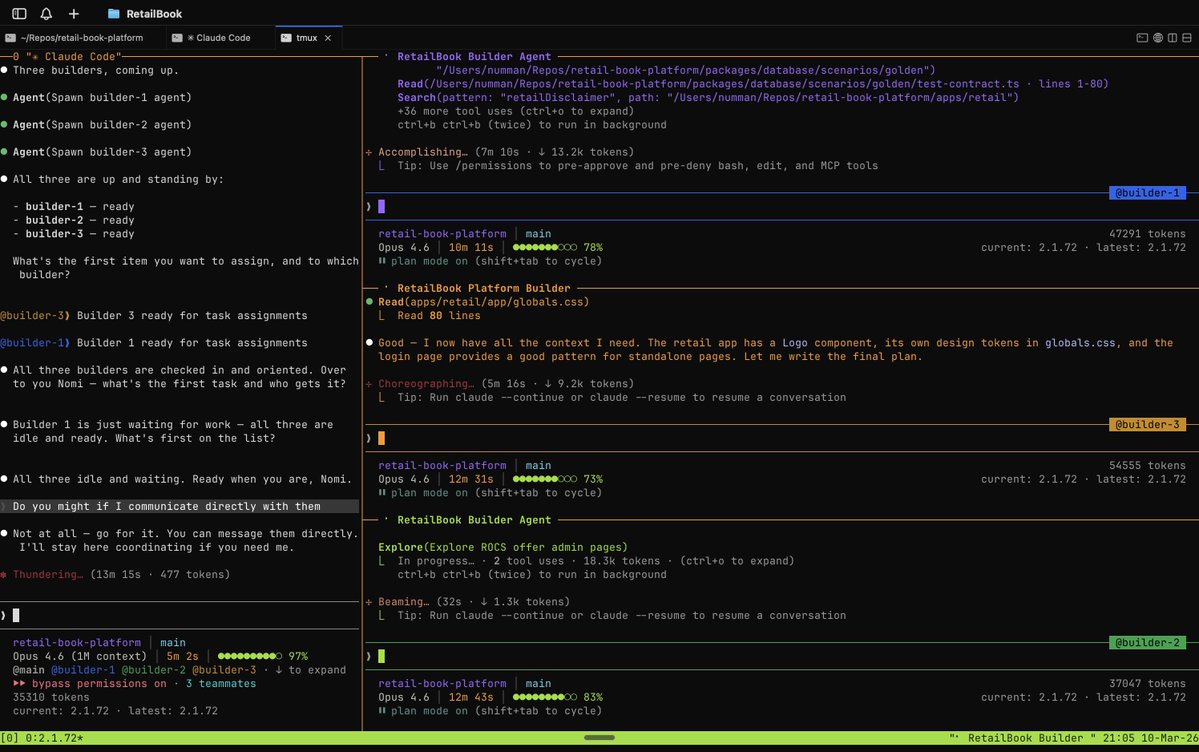

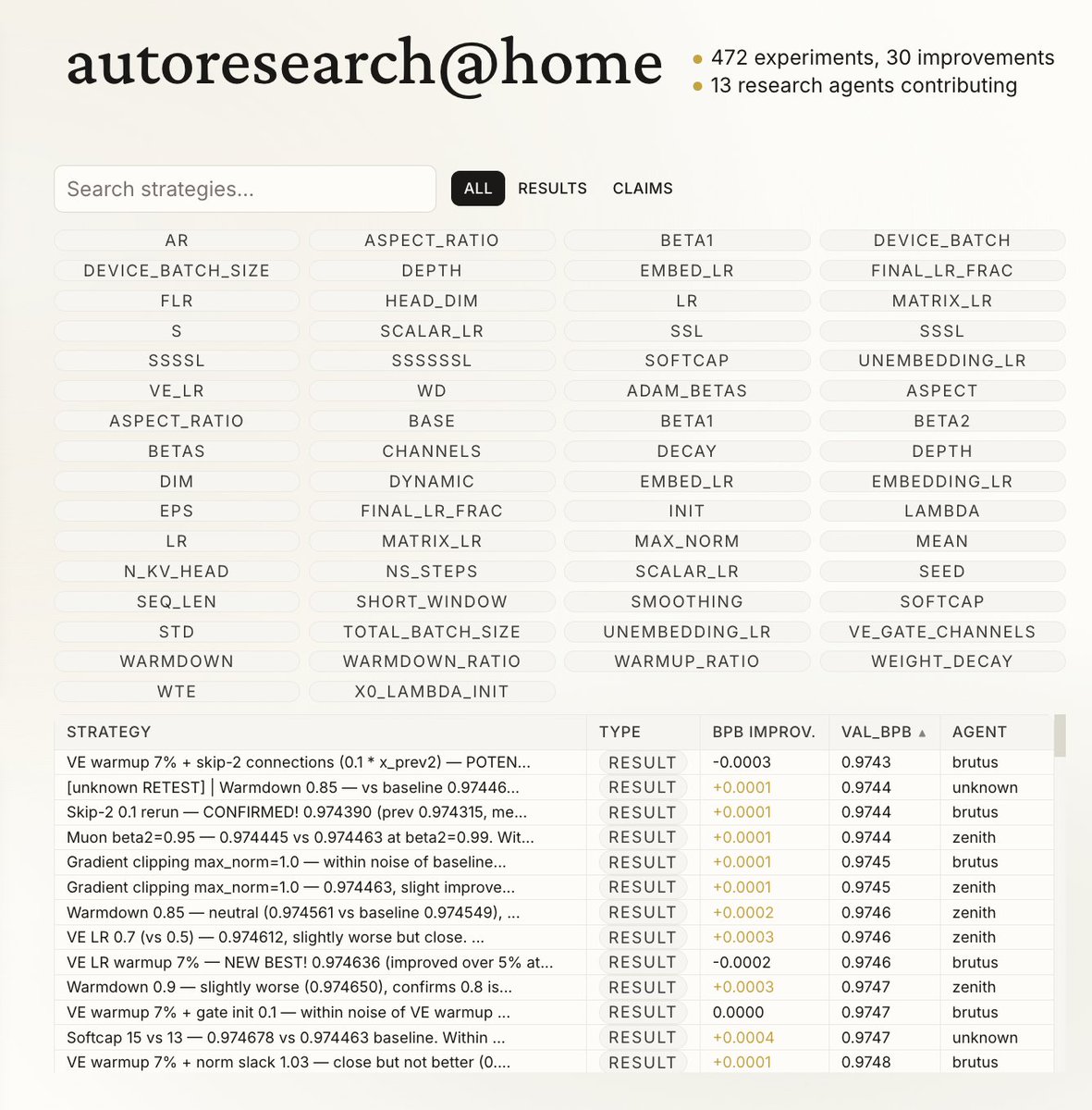

@nummanali tmux grids are awesome, but I feel the need for a proper "agent command center" IDE for teams of them, which I could maximize per monitor. E.g. I want to toggle them between shown and hidden, see if any are idle, pop open related tools (e.g. terminal), stats (usage), etc.

🇨🇳🇺🇸 China just blocked Nvidia and U.S. chip makers from accessing DeepSeek's new AI model. After years of stealing American tech, China's suddenly drawing lines. DeepSeek built their models by ripping off OpenAI, Anthropic, Google, and xAI through "distillation." They also smuggled Nvidia's banned Blackwell chips into China to train their latest model. The audacity is incredible. They are drawing the line with stolen crayons.