Narayan

132 posts

@realnarayan_

From Logits to API | Custom RAG | Kaggle NoteBook Bronze | ML Engg w/ Python/C++/FastAPI | Open to Work

Will Share Tomorrow What actually caused this💀.

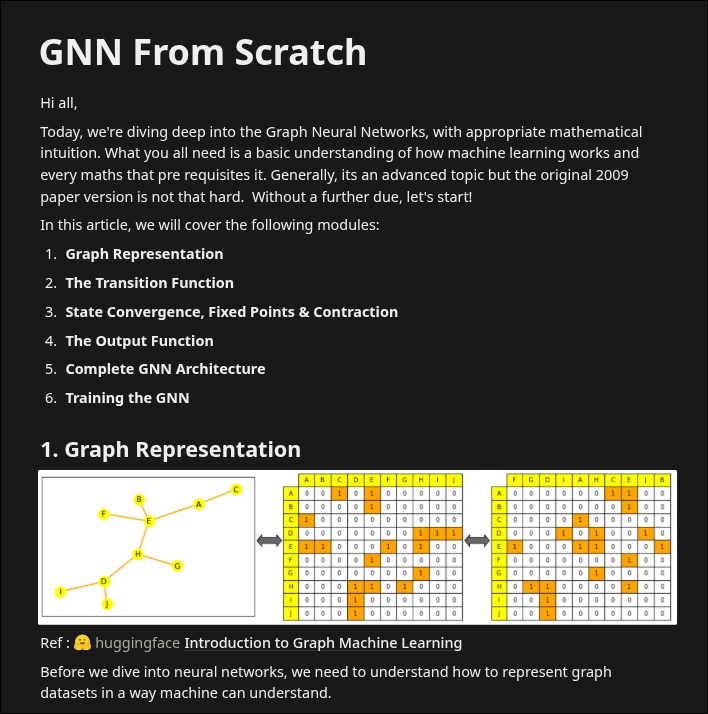

Heard somewhere that if you want to be great at a domain in ML, replicate old papers and try to exactly match the results table. Here is my attempt to solve the subgraph matching problem from the original paper, "The Graph Neural Network Model" (Scarselli et al., 2009), using a linear non-positional GNN:
> implemented model from scratch
> 600 graphs, 5000 epochs
> state_dim = 5, hidden_neuron = 5
> max_iters = 50, epsilon = 1e-5 for computing next node-states
> will probably take forever on my laptop :)

Will attach GitHub link in comments.
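For anyone wondering what max_iters and epsilon do here: the node states are found by repeating a state update until it stops changing. Below is a minimal NumPy sketch of that loop, not the actual code from this project. It assumes a heavily simplified linear update (average the neighbours' states, multiply by one shared matrix `W`, add a per-node bias `b`) instead of the paper's learned per-edge transition. The name `iterate_states`, the toy 4-node graph, and the scaling of `W` are all made up for illustration.

```python
import numpy as np

def iterate_states(adj, W, b, max_iters=50, epsilon=1e-5):
    """Repeat x <- A_norm @ x @ W.T + b until the update is smaller than epsilon."""
    n = adj.shape[0]
    state_dim = W.shape[0]
    # Row-normalise the adjacency so each node averages its neighbours' states.
    deg = np.maximum(adj.sum(axis=1, keepdims=True), 1)
    a_norm = adj / deg
    x = np.zeros((n, state_dim))
    for it in range(1, max_iters + 1):
        x_new = a_norm @ x @ W.T + b
        # Stop once successive states differ by less than epsilon.
        if np.linalg.norm(x_new - x) < epsilon:
            return x_new, it
        x = x_new
    return x, max_iters

rng = np.random.default_rng(0)
state_dim = 5

# Hypothetical toy graph: a 4-node cycle.
adj = np.array([[0, 1, 0, 1],
                [1, 0, 1, 0],
                [0, 1, 0, 1],
                [1, 0, 1, 0]], dtype=float)

W = rng.standard_normal((state_dim, state_dim))
# Assumption: rescale W to spectral norm 0.5 so the update is a contraction
# and the iteration is guaranteed to converge.
W *= 0.5 / np.linalg.norm(W, 2)
b = rng.standard_normal((4, state_dim))

states, n_iters = iterate_states(adj, W, b)
print(states.shape, n_iters)
```

The rescaling of `W` is the part that matters: without some contraction guarantee the loop can just run out max_iters without settling.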

Hold on, I forgot that I have TCS NQT tomorrow 🥲, will be back to building soon 🥀

BERT with NumPy: My "BERT from scratch" project is a disaster. 😅 (In the best way possible.) I've successfully built the BERT model, all the layers, and the tokenizer. It works. What's missing are optimizations like vectorization in NumPy, stable gradients, etc. Repo 👇

I think this is one of the best VS Code extensions you can have. It's really helpful, especially for a guy like me who tends to forget his own ideas by morning. Extension name: Todo Tree 🌳

Completed the BERT model design (from scratch with #numpy) and made the Transformer_Block, which I almost forgot includes a Feed_forward_network in the paper, hence the delay. Will be working on the loss_func now and then run the exps I was talking about yesterday. #DeepLearning
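To show where the feed-forward network sits inside the block, here is a rough NumPy sketch, not the repo's code. It assumes the post-layer-norm layout BERT uses (add the residual, then normalise) and the tanh approximation of GELU. The learnable layer-norm gain and bias are left out to keep it short, and `attention_fn` is a hypothetical stand-in for whatever attention layer you already have.

```python
import numpy as np

def layer_norm(x, eps=1e-12):
    # Normalise each token vector to zero mean and unit variance
    # (learnable gain/bias omitted in this sketch).
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)

def gelu(x):
    # tanh approximation of GELU.
    return 0.5 * x * (1.0 + np.tanh(np.sqrt(2.0 / np.pi) * (x + 0.044715 * x**3)))

def feed_forward(x, W1, b1, W2, b2):
    # Position-wise FFN: expand to d_ff, apply GELU, project back to d_model.
    return gelu(x @ W1 + b1) @ W2 + b2

def transformer_block(x, attention_fn, W1, b1, W2, b2):
    # Sub-layer 1: attention + residual + layer norm.
    x = layer_norm(x + attention_fn(x))
    # Sub-layer 2: feed-forward + residual + layer norm.
    return layer_norm(x + feed_forward(x, W1, b1, W2, b2))

rng = np.random.default_rng(0)
d_model, d_ff = 8, 32  # hypothetical toy sizes; d_ff = 4 * d_model
W1 = rng.standard_normal((d_model, d_ff)) * 0.1
b1 = np.zeros(d_ff)
W2 = rng.standard_normal((d_ff, d_model)) * 0.1
b2 = np.zeros(d_model)

x = rng.standard_normal((6, d_model))  # (seq_len, d_model)
# Placeholder attention: identity, only to make the sketch runnable.
out = transformer_block(x, lambda h: h, W1, b1, W2, b2)
print(out.shape)
```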

Okay, wrapped up single-head attention and implemented a manager class for the multi-head attention mechanism. That's going to be it for today, too much to process in a single day 😮‍💨. Will do the final model assembly tomorrow and run a few tests and experiments. #DeepLearning
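For reference, the single-head plus manager-class idea could look roughly like this in NumPy. This is only a sketch under assumptions: the class names, the random weight init, and the lack of masking and batching are illustrative choices, not the project's actual design.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the row max before exponentiating for numerical stability.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

class SingleHeadAttention:
    """Scaled dot-product self-attention for one head (no masking, no batch dim)."""
    def __init__(self, d_model, d_head, rng):
        scale = 1.0 / np.sqrt(d_model)
        self.Wq = rng.standard_normal((d_model, d_head)) * scale
        self.Wk = rng.standard_normal((d_model, d_head)) * scale
        self.Wv = rng.standard_normal((d_model, d_head)) * scale

    def __call__(self, x):
        q, k, v = x @ self.Wq, x @ self.Wk, x @ self.Wv
        scores = q @ k.T / np.sqrt(q.shape[-1])  # (seq_len, seq_len)
        return softmax(scores) @ v               # (seq_len, d_head)

class MultiHeadAttention:
    """Manager class: runs several heads, concatenates them, projects the result."""
    def __init__(self, d_model, n_heads, seed=0):
        assert d_model % n_heads == 0, "d_model must be divisible by n_heads"
        rng = np.random.default_rng(seed)
        d_head = d_model // n_heads
        self.heads = [SingleHeadAttention(d_model, d_head, rng) for _ in range(n_heads)]
        self.Wo = rng.standard_normal((d_model, d_model)) / np.sqrt(d_model)

    def __call__(self, x):
        concat = np.concatenate([head(x) for head in self.heads], axis=-1)
        return concat @ self.Wo

x = np.random.default_rng(1).standard_normal((6, 8))  # (seq_len, d_model)
mha = MultiHeadAttention(d_model=8, n_heads=2)
out = mha(x)
print(out.shape)
```

Looping over heads like this is easy to debug but slow. Stacking the per-head weights into one array and doing a single batched matmul would be the vectorized version.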