reservatiomentalis

3.7K posts

@reservatiomen

Sebastian Edinger and Alexey Zhavoronkov, a German and a Russian philosopher, active on Substack. Delivering what the media and academia do not deliver.

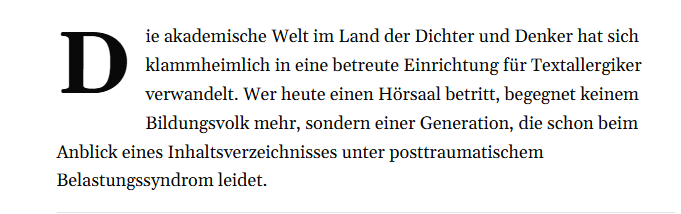

When Heike Egner, professor of human geography in Klagenfurt, was dismissed in 2018, she began to investigate and came upon a growing pattern. Her book paints a picture of a shift in the academic system in which standards of merit and freedom are coming under pressure. cicero.de/innenpolitik/s…

Police recorded just under 14,000 rape offenses in 2025. That is nine percent more than in the previous year. The reasons for the increase are unclear. berliner-zeitung.de/news/kriminals…

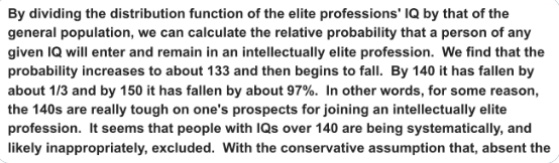

It has been emphasized lately that INTJs and INTPs, although comprising roughly 5% of the overall population, account for more than 50% of all Bitcoiners. (Astrology, of course.) The picture is not much different among male National Merit Finalists (top 0.3% of students), a much smaller and more selective group 👇: INTJs and INTPs still make up more than 32% of the entire cohort, and the four INxx types together make up roughly 50%. All eight xSxx types (more than 70% of males in the overall population) combined account for roughly 17% of National Merit Finalists, only about 1% more than INTJs alone (roughly 3% of the male population in the US, 1.4% in the UK overall, 0.48% among Hispanics overall). Pure coincidence in the field of astrology, of course. [S.E.] @jensenjeans @0x49fa98 @MetapolitikerX @LGcommaI @schwopi__ @FrikaWies @KaiserBenKaiser

In which books have you found this warning? (As a heavy user of quite a few libraries, this has happened to me only once; naturally, I had to secure the evidence here.) [S.E.]

🦔 A researcher invented a fake eye condition called bixonimania, uploaded two obviously fraudulent papers about it to an academic server, and watched major AI systems present it as real medicine within weeks. The fake papers thanked Starfleet Academy, cited funding from the Professor Sideshow Bob Foundation and the University of Fellowship of the Ring, and stated mid-paper that the entire thing was made up. Google's Gemini told users it was caused by blue light. Perplexity cited its prevalence at one in 90,000 people. ChatGPT advised users whether their symptoms matched. The fake research was then cited in a peer-reviewed journal that only retracted it after Nature contacted the publisher.

My Take

The researcher made the papers as obviously fake as possible on purpose. The AI systems didn't catch it. Neither did the human researchers who cited it in real journals, which means people are feeding AI-generated references into their work without reading what they're actually citing. I've covered the FDA using AI for drug review, the NYC hospital CEO ready to replace radiologists, and ChatGPT Health launching this year. All of that is happening in the same environment where a condition funded by a Simpsons character and endorsed by the crew of the Enterprise was being presented as emerging medical consensus. The people making these deployment decisions seem to believe the pipeline from research to AI to patient is more supervised than it actually is. This experiment suggests it isn't supervised much at all.

Hedgie🤗 nature.com/articles/d4158…