Deedy@deedydas

The vibes in SF feel pretty frenetic right now. The divide in outcomes is the worst I've ever seen.

Over the last 5yrs, a group of ~10k people - employees at Anthropic, OpenAI, xAI, Nvidia, Meta TBD, founders - have hit retirement wealth of well above $20M (back of the envelope AI estimation).

Everyone outside that group feels like they can work their well-paying (but <$500k) job for their whole life and never get there.

Worse yet, layoffs are in full swing. Many software engineers feel like their life's skill is no longer useful. The day to day role of most jobs has changed overnight with AI.

As a result,

1. The corporate ladder looks like the wrong building to climb.

Everyone's trying to align with a new set of career "paths": should I be a founder? Is it too late to join Anthropic / OpenAI? should I get into AI? what company stock will 10x next? People are demanding higher salaries and switching jobs more and more.

2. There’s a deep malaise about work (and its future).

Why even work at all for “peanuts”? Will my job even exist in a few years? Many feel helpless. You hear the “permanent underclass” conversation a lot, esp from young people. It's hard to focus on doing good work when you think "man, if I joined Anthropic 2yrs ago, I could retire"

3. The mid to late middle managers feel paralyzed.

Many have families and don't feel like they have the energy or network to just "start a company". They don't particularly have any AI skills. They see the writing on the wall: middle management is being hollowed out in many companies.

4. The rich aren’t particularly happy either.

No one is shedding tears for them (and rightfully so). But those who have "made it" experience a profound lack of purpose too. Some have gone from <$150k to >$50M in a few years with no ramp. It flips your life plans upside down. For some, comparison is the thief of joy. For some, they escape to NYC to "live life". For others still, they start companies "just cuz", often to win status points. They never imagined that by age 30, they'd be set. I once asked a post-economic founder friend why they didn't just sell the co and they said "and do what? right now, everyone wants to talk to me. if i sell, I will only have money."

I understand that many reading this scoff at the champagne problems of the valley. Society is warped in this tech bubble. What is often well-off anywhere else in the world is bang average here.

Unlike many other places, tenure, intelligence and hard work can be loosely correlated with outcomes in the Bay. Living through a societally transformative gold rush in that environment can be paralyzing. "Am I in the right place? Should I move? Is there time still left? Am I gonna make it?" It psychologically torments many who have moved here in search of "success".

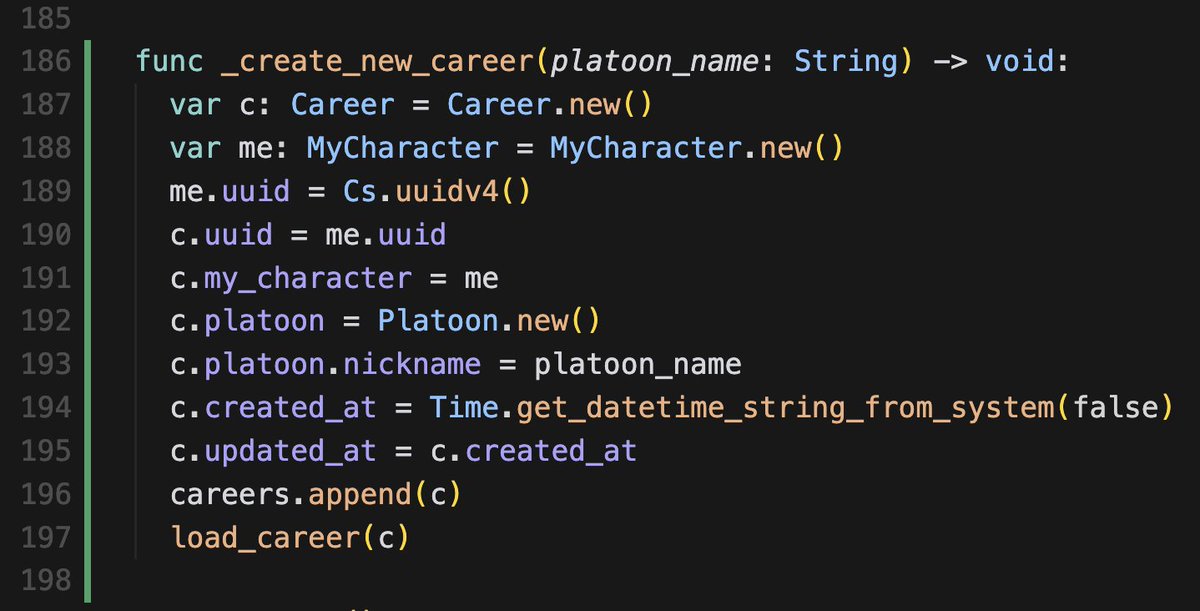

Ironically, a frequent side effect of this torment is to spin up the very products making everyone rich in hopes that you too can vibecode your path to economic enlightenment.