Romin

428 posts

@rmirmo

Building agentic harnesses with a focus on backpressure + self-testing loops

I want to make /init more useful. What do you think it should do to help set up Claude Code in a repo?

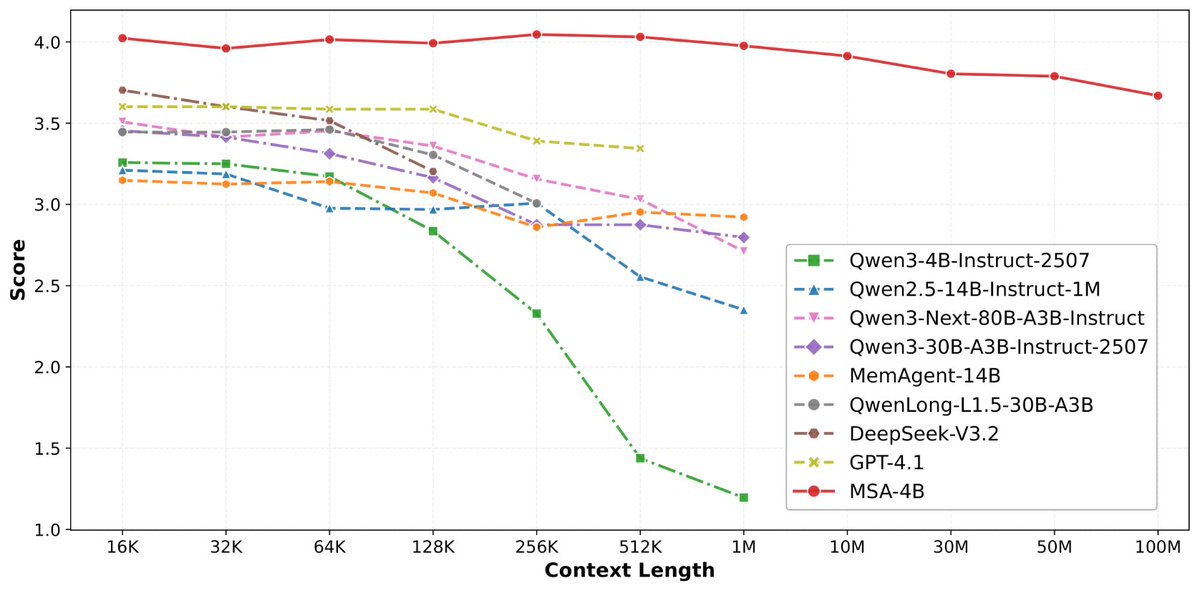

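The paper is out. It's called MSA: Memory Sparse Attention.

What it is, in one sentence: it gives large models native ultra-long memory. Not bolt-on retrieval, not brute-force context-window expansion; instead, "memory" is built directly into the attention mechanism and trained end to end.

Why did earlier approaches fall short?

RAG is essentially an "open-book exam." The model remembers nothing itself and relies entirely on flipping through its notes on the spot. How accurately it finds things depends on retrieval quality; how quickly depends on data volume. Once the information is scattered across dozens of documents and requires cross-document reasoning, it is stuck.

Linear attention and KV caches are essentially "compressed memory." Things are remembered, but the more you compress, the blurrier they get, and at enough length they are lost.

MSA takes a completely different approach:

→ No compression and no bolt-ons; the model learns to "look at the key points." The core is a scalable sparse attention architecture with linear complexity. When the amount of memory grows 10x, compute cost does not explode exponentially.

→ The model knows "where this memory came from and when." A positional encoding called document-wise RoPE lets the model naturally understand document boundaries and temporal order.

→ Fragmented information can also be chained together for reasoning. The Memory Interleaving mechanism lets the model do multi-hop reasoning across memory fragments scattered in different places. It doesn't just find one relevant record; it strings the clues into a chain.

The results?

· Scaling from 16K to 100 million tokens, accuracy degrades by less than 9%
· A 4B-parameter MSA model beats top-tier 235B-class RAG systems on long-context benchmarks
· 100-million-token inference runs on just 2 A800s. This isn't lab-only; it's a cost a startup can afford.

Put plainly, large models used to be brilliant geniuses with a goldfish's memory. What MSA wants to do is make them truly "remember."

We've put it on GitHub. The algorithm folks work hard, so feel free to drop a star in support. 🌟👀🙏 github.com/EverMind-AI/MSA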

A small spoiler: @EverMind will also release another high-quality paper this week

Collapsing liquidity in refined product futures could have major implications. Asian jet traded $225/bbl - possibly the highest price ever paid for oily product. If refineries start stopping out on short hedges because of margin calls and can't sell futures, then who will? #OOTT

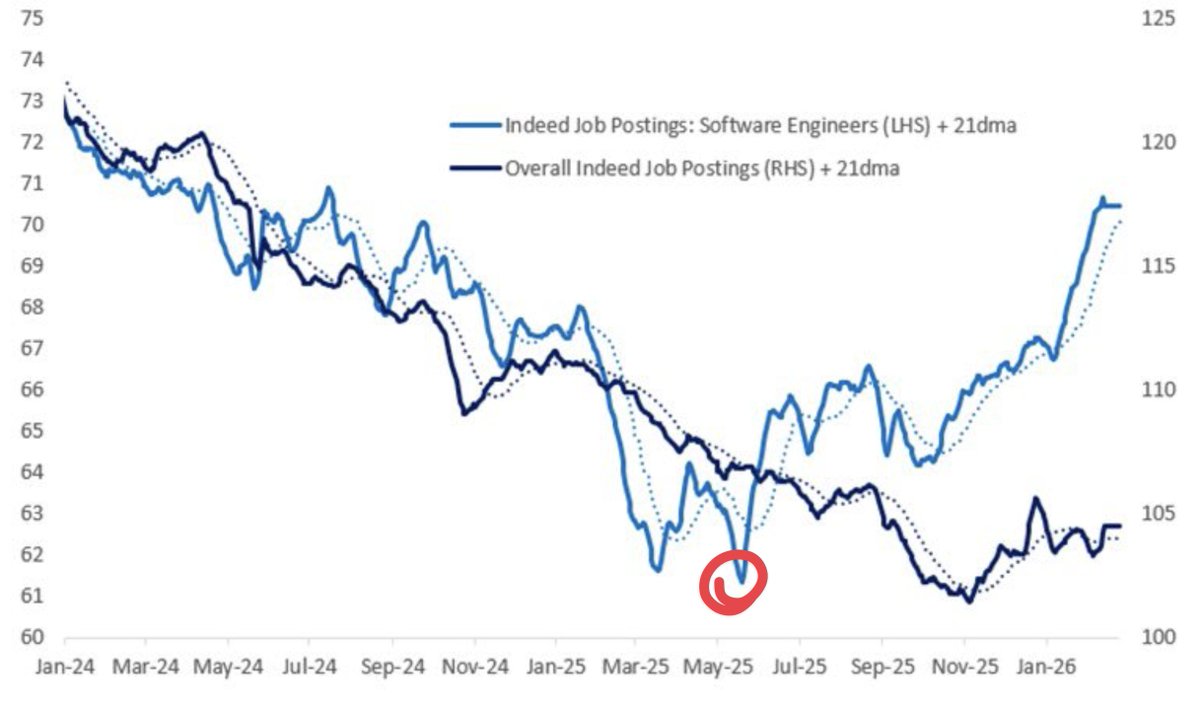

I'm squarely in the camp of believing that there is an insatiable demand for software, and until that stops, software engineers are going to be in overwhelmingly short supply in the long term. AI is forcing companies to recalibrate their resources (we're all seeing that now with layoffs and freezes), but once AI is more-or-less saturated among most companies, hiring more engineers will, once again, be the only way to build more product than the competition. And yes, being better than the competition is all that matters. Not being better than the competition 2 years ago. The pre-AI world is gone and not coming back. Readjust your expectations. Companies that think they'll be able to last with tiny headcounts are going to get crushed by the companies who choose to grow and force AI adoption across large teams. Yes, there's a brief period where adoption hasn't fully hit yet, but once it has, the low water mark for productivity will adjust and you still need more people to build more product, unless you have some secret AI sauce that no one else has access to (indie solopreneur building saas products, that's not you!).

prediction cone/safe triangle — this is something we take so much for granted in modern day native UIs. but it's not the same for most web-based dropdown menus. it took me a while to implement this here. Amazon, macOS, Windows all implement some version of this.
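
For anyone curious how the safe triangle works, here is a minimal sketch, not the implementation from the post. It assumes the submenu opens to the right of the parent menu; the names `Point`, `Rect`, `pointInTriangle`, and `isHeadingToSubmenu` are hypothetical. The idea: take the cursor's previous position as the apex and the two near corners of the submenu as the base. While the cursor stays inside that triangle, it is probably travelling toward the submenu, so the menu should not switch to whatever item the cursor happens to cross.

```typescript
interface Point { x: number; y: number; }
interface Rect { left: number; top: number; right: number; bottom: number; }

// Twice the signed area of triangle a-b-c; the sign tells which side of
// the directed edge a->b the point c lies on.
function cross(a: Point, b: Point, c: Point): number {
  return (b.x - a.x) * (c.y - a.y) - (b.y - a.y) * (c.x - a.x);
}

// A point is inside (or on the border of) a triangle when it is not on
// opposite sides of any two edges.
function pointInTriangle(p: Point, a: Point, b: Point, c: Point): boolean {
  const d1 = cross(a, b, p);
  const d2 = cross(b, c, p);
  const d3 = cross(c, a, p);
  const hasNegative = d1 < 0 || d2 < 0 || d3 < 0;
  const hasPositive = d1 > 0 || d2 > 0 || d3 > 0;
  return !(hasNegative && hasPositive);
}

// Assumption: the submenu sits to the right, so its left edge is the
// triangle's base. `prev` is the cursor position from the last mouse event.
function isHeadingToSubmenu(prev: Point, curr: Point, submenu: Rect): boolean {
  const topCorner: Point = { x: submenu.left, y: submenu.top };
  const bottomCorner: Point = { x: submenu.left, y: submenu.bottom };
  return pointInTriangle(curr, prev, topCorner, bottomCorner);
}
```

In a real menu you would call something like `isHeadingToSubmenu` from a `mousemove` handler and delay switching the active item while it returns true, usually with a short timeout so the submenu still closes if the cursor stalls.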

Most people take melatonin right before bed. That might be one of the worst times to take it. Melatonin isn't a sedative, it's a chronobiotic. It doesn't knock you out. It signals to your circadian clock that it's dark. And the effect it has on your sleep timing depends entirely on WHEN you take it. There's a concept called the Phase Response Curve. It shows that melatonin taken ~3 hours before your usual bedtime produces the largest phase advance, meaning it shifts your internal clock earlier, so you fall asleep sooner and wake up easier. But take it AT bedtime? You can actually push your clock in the wrong direction. That's a phase delay. You end up falling asleep later over time, not earlier. The sweet spot sits just before dim light melatonin onset, the point when your brain would naturally start releasing melatonin. For most people, that's roughly 3 hours before habitual bedtime. Timing > dose. Lewy et al. (1998), Burgess et al. (2010), and Challet et al., J Pineal Res (2024).