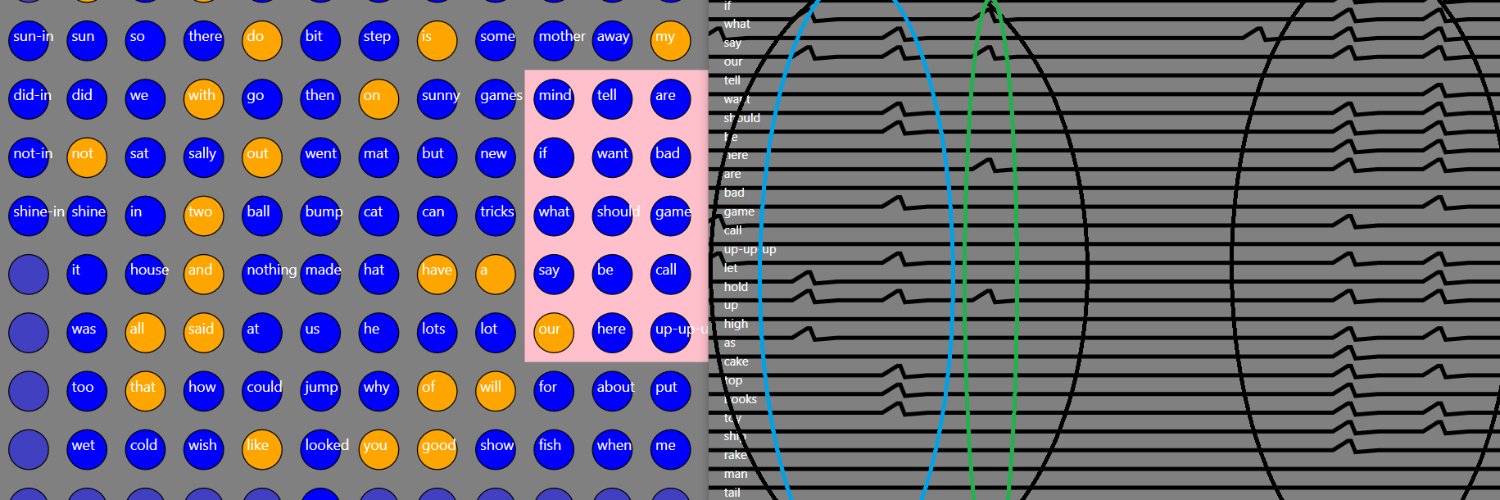

My presentation to the INLP workshop at AGI-21: creativity, free will, consciousness. Process, and where deep learning fails @IntuitMachine. "Togetherness" is still too trapped in formalism @coecke. A hypothesis for the significance of neural oscillations at the end @hb_cell.

youtu.be/YiVet-b-NM8
