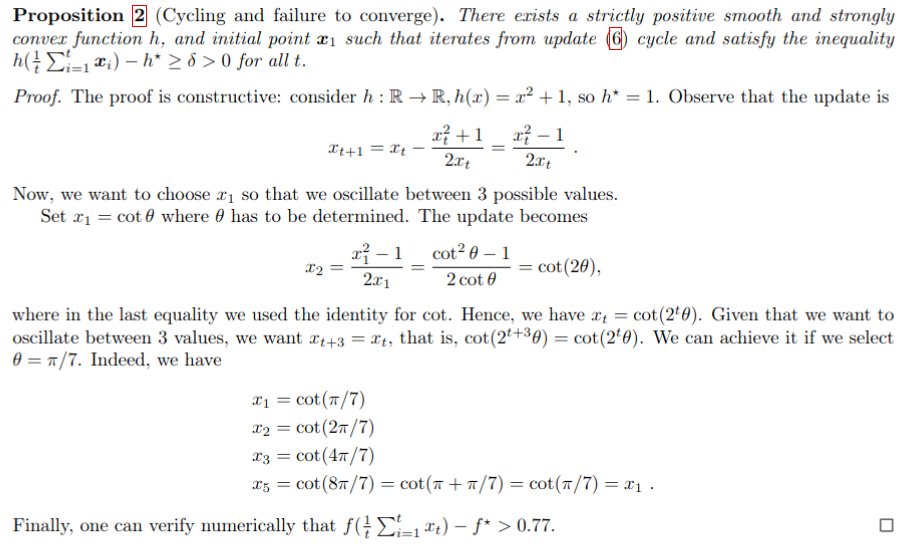

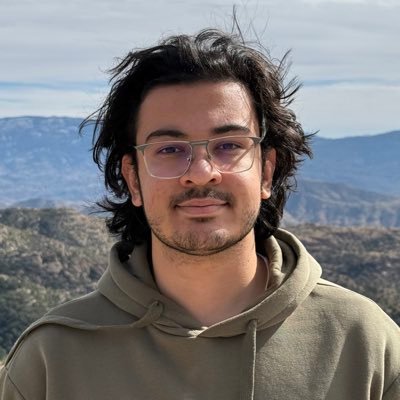

This is a turning point: I just proved a complex math result useful for my research using an LLM. I am not sure if I should be happy or scared...

Robert Vacareanu

124 posts

@robert_nlp

fighting entropy | PhD from @UofArizona Working on #nlproc Past: 2022, 2023: Applied Scientist Intern (@AWS)

1/🚨 Thrilled to share that our paper (w/ @eduardo_nlp), "Making Language Models Robust Against Negation," has been accepted to the #NAACL2025 main conference! 🎉 #Negation has always been a challenge for language models. Here's our self-supervised method to tackle this issue:

📢 Join us at the CMU Agent Workshop 2025, April 10-11! Don't miss our esteemed invited speakers:

- Qingyun Wu (PSU)
- Diyi Yang (Stanford)
- Aviral Kumar (CMU)
- Graham Neubig (CMU)

...and many more to come! To register, visit: cmu-agent-workshop.github.io

In-context learning provides an LLM with a few examples to improve accuracy. But with long-context LLMs, we can now use *thousands* of examples in-context. We find that this long-context ICL paradigm is surprisingly effective– and differs in behavior from short-context ICL! 🧵

🚀 New Paper: RSQ: Learning from Important Tokens Leads to Better Quantized LLMs We show that not all tokens should be treated equally during quantization. By prioritizing important tokens through a three-step process—Rotate, Scale, and Quantize—we achieve better-quantized models on LLaMA3, Mistral, and Qwen2.5. 🧵👇

Check out 🔥 EgoNormia: a benchmark for physical social norm understanding egonormia.org Can we really trust VLMs to make decisions that align with human norms? 👩‍⚖️ With EgoNormia, a 1800 ego-centric video 🥽 QA benchmark, we show that this is surprisingly challenging 🤖 🌐 arxiv.org/abs/2502.20490 Our amazing team: MohammadHossein Rezaei* (U of A), Yicheng Fu*, Phil Cuvin* (U of T), @cjziems, @StevenyzZhang, @_Hao_Zhu

Do you have opinions about the current state of reviewing at *CL conferences? Do you want to help? We (@emnlpmeeting PCs) want to hear from you: forms.office.com/r/P68uvwXYqf

New Reading Group! Trustworthy AI Reading Group; dedicated to exploring critical topics in AI, including fairness, security, explainability, and their broader applications. Open to CS undergraduate, master’s, and PhD students. ruixiangtang.net/teaching-mento…