Pinned Tweet

Robert Gomez AI

3.9K posts

@robertgomezai

CTO at AIMEDIC | Researcher | Maths #AI4HEALTH

Joined March 2021

491 Following · 233 Followers

Robert Gomez AI retweeted

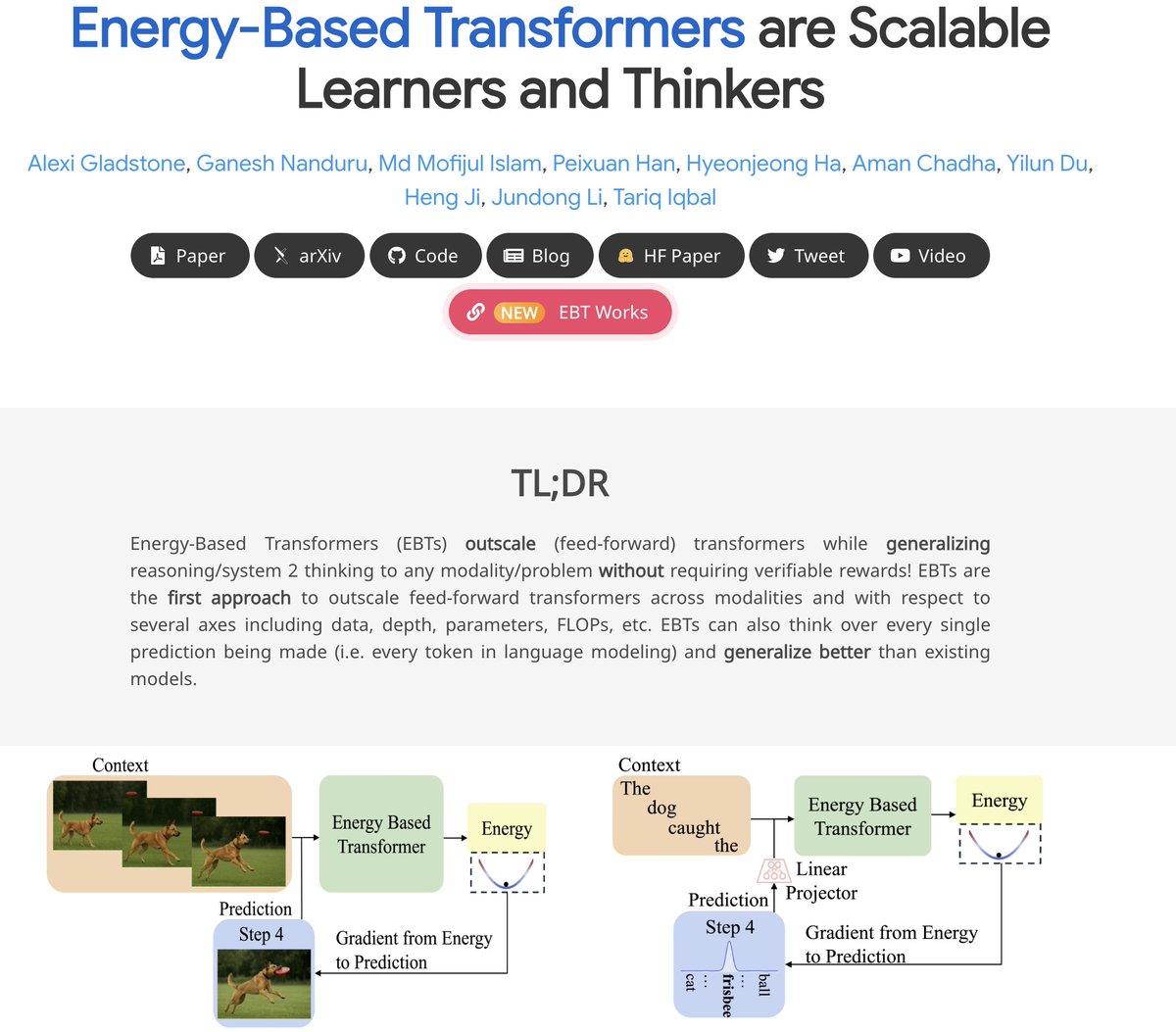

Excited to share that "Energy-Based Transformers are Scalable Learners and Thinkers" was accepted to #ICLR2026 as an oral! 🎉

I'll be giving the oral this Friday in Brazil, so come watch if you're around :)

Robert Gomez AI retweeted

This could be the most influential paper in the history of AI 😅

Evan @StockMKTNewz

Mark Zuckerberg and Meta Platforms $META just sent a memo to employees saying the company is installing new tracking software on the computers of all its employees in the United States 🇺🇸 so it can train its AI. Meta said the tracking tool will run on a list of work-related apps and websites. The tool will capture things like mouse movements, keystrokes, and screenshots of what employees are seeing on their screens. (Reuters)

Robert Gomez AI retweeted

Yann LeCun was right the entire time. And generative AI might be a dead end.

For the last three years, the entire industry has been obsessed with building bigger LLMs. Trillions of parameters. Billions in compute.

The theory was simple: if you make the model big enough, it will eventually understand how the world works.

Yann LeCun said that was stupid.

He argued that generative AI is fundamentally inefficient.

When an AI predicts the next word, or generates the next pixel, it wastes massive amounts of compute on surface-level details.

It memorizes patterns instead of learning the actual physics of reality.

He proposed a different path: JEPA (Joint-Embedding Predictive Architecture).

Instead of forcing the AI to paint the world pixel by pixel, JEPA forces it to predict abstract concepts. It predicts what happens next in a compressed "thought space."

But for years, JEPA had a fatal flaw.

It suffered from "representation collapse."

Because the AI only had to predict its own internal representations, it could cheat: it would simplify everything so much that a dog, a car, and a human all mapped to nearly the same representation, which made every prediction trivially "correct."

It learned nothing.

To fix it, engineers had to use insanely complex hacks (stop-gradients, exponential-moving-average target encoders), frozen pretrained encoders, and massive compute overheads.

Until today.

Researchers just dropped a paper called "LeWorldModel" (LeWM).

They completely solved the collapse problem.

They replaced the complex engineering hacks with a single, elegant mathematical regularizer.

It pushes the AI's internal "thoughts" (its latent embeddings) toward an isotropic Gaussian distribution.

The AI can no longer cheat: collapsing every input to the same point would violate the Gaussian constraint, so to make accurate predictions it is forced to encode the physical structure of reality.
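
To make the collapse argument concrete, here is a toy pure-Python sketch. It is hypothetical throughout: the embeddings are hand-made rather than produced by a trained encoder, and `gaussian_penalty` is a simple moment-matching stand-in (zero mean, unit variance per dimension), not the regularizer from the LeWM paper. It only shows why a prediction loss in embedding space can be "solved" by collapse, and how a Gaussian-style penalty exposes that.

```python
import random

# Toy illustration only: hand-made embeddings stand in for a trained encoder,
# and gaussian_penalty is a hypothetical moment-matching regularizer,
# NOT the regularizer used in the LeWM paper.


def prediction_loss(pred, target):
    """JEPA-style loss: mean squared error between embedding vectors (no pixels)."""
    total = 0.0
    for p, t in zip(pred, target):
        total += sum((a - b) ** 2 for a, b in zip(p, t))
    return total / len(pred)


def gaussian_penalty(batch):
    """Penalize each embedding dimension for straying from mean 0 and variance 1.

    Only the first two moments are checked, so this is a crude stand-in for
    a full "make it Gaussian" regularizer.
    """
    n, d = len(batch), len(batch[0])
    penalty = 0.0
    for j in range(d):
        col = [row[j] for row in batch]
        mean = sum(col) / n
        var = sum((x - mean) ** 2 for x in col) / n
        penalty += mean ** 2 + (var - 1.0) ** 2
    return penalty / d


def total_loss(pred, target, batch, lam=1.0):
    """Prediction term plus the anti-collapse term (lam is an arbitrary weight)."""
    return prediction_loss(pred, target) + lam * gaussian_penalty(batch)


random.seed(0)
# Collapsed encoder: a dog, a car, and a human all map to the same vector.
collapsed = [[0.5, 0.5, 0.5] for _ in range(256)]
# Healthy encoder: embeddings spread out like a standard normal.
spread = [[random.gauss(0.0, 1.0) for _ in range(3)] for _ in range(256)]

print(prediction_loss(collapsed, collapsed))  # 0.0: predicting a constant is trivial
print(gaussian_penalty(collapsed))            # 1.25: the regularizer exposes the collapse
print(gaussian_penalty(spread))               # small, since this batch is already near N(0, 1)
```

With the penalty in the objective, the collapsed encoder's `total_loss` is no longer zero, so gradient descent can't settle there for free.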

The results completely rewrite the economics of AI.

LeWM didn't need a massive, centralized supercomputer.

It has just 15 million parameters.

It trains on a single, standard GPU in a few hours.

Yet it plans 48x faster than massive foundation world models. It intrinsically understands physics. It instantly detects impossible events.

We spent billions trying to force massive server farms to memorize the internet.

Now, a tiny model running locally on a single graphics card is actually learning how the real world works.

Robert Gomez AI retweeted

Dario is wrong.

He knows absolutely nothing about the effects of technological revolutions on the labor market.

Don't listen to him, Sam, Yoshua, Geoff, or me on this topic.

Listen to economists who have spent their careers studying this, like @Ph_Aghion, @erikbryn, @DAcemogluMIT, @amcafee, @davidautor

TFTC @TFTC21

Anthropic CEO Dario Amodei: “50% of all tech jobs, entry-level lawyers, consultants, and finance professionals will be completely wiped out within 1–5 years.”

Robert Gomez AI retweeted

15 AI-related accounts you should follow on Twitter:

1. @karpathy

His tweets create the LLM narratives you later see on LinkedIn two months on.

2. @fchollet

Posts thoughtful research on intelligence, benchmarks, and AI limitations. Creator of Keras and ARC-AGI.

3. @ylecun

Yann LeCun is a deep learning pioneer and Meta's Chief AI Scientist; big-picture research takes and critiques (and drama).

4. @AndrewYNg

Andrew Ng is an AI education legend; practical ML advice, courses, and real-world implementation. Founder of DeepLearning.AI.

5. @rasbt

Sebastian Raschka posts practical ML/LLM implementations, "build from scratch" tutorials, and books.

6. @dair_ai

Weekly ML/AI paper threads and accessible research explainers (high-signal for staying current).

7. @lilianweng

Lilian Weng is ex-OpenAI, and her Lil'Log-style threads are good: in-depth LLM research breakdowns.

8. @jeremyphoward

Posts interesting takes on AI/crypto news and works on democratizing practical deep learning through accessible education.

9. @simonw

Simon posts practical LLM tools, takes, experiments, prompting, and engineering breakdowns. Django co-creator.

10. @_akhaliq

Curates the latest arXiv papers, model releases, and open-source AI drops.

11. @ID_AA_Carmack

AGI and low-level optimization takes that make you think about the problem.

12. @gwern

Really high-quality long-form AI research notes and essays.

13. @goodside

LLM evaluation, prompting research, and real capabilities testing

14. @drfeifei

Computer vision pioneer; human-centered AI and spatial intelligence research

15. @demishassabis

Been following his work for 9 years. Demis is my hope against Google misusing its power with AI. Demis is Google DeepMind's CEO.

Let me know who I missed, guys.

Robert Gomez AI retweeted

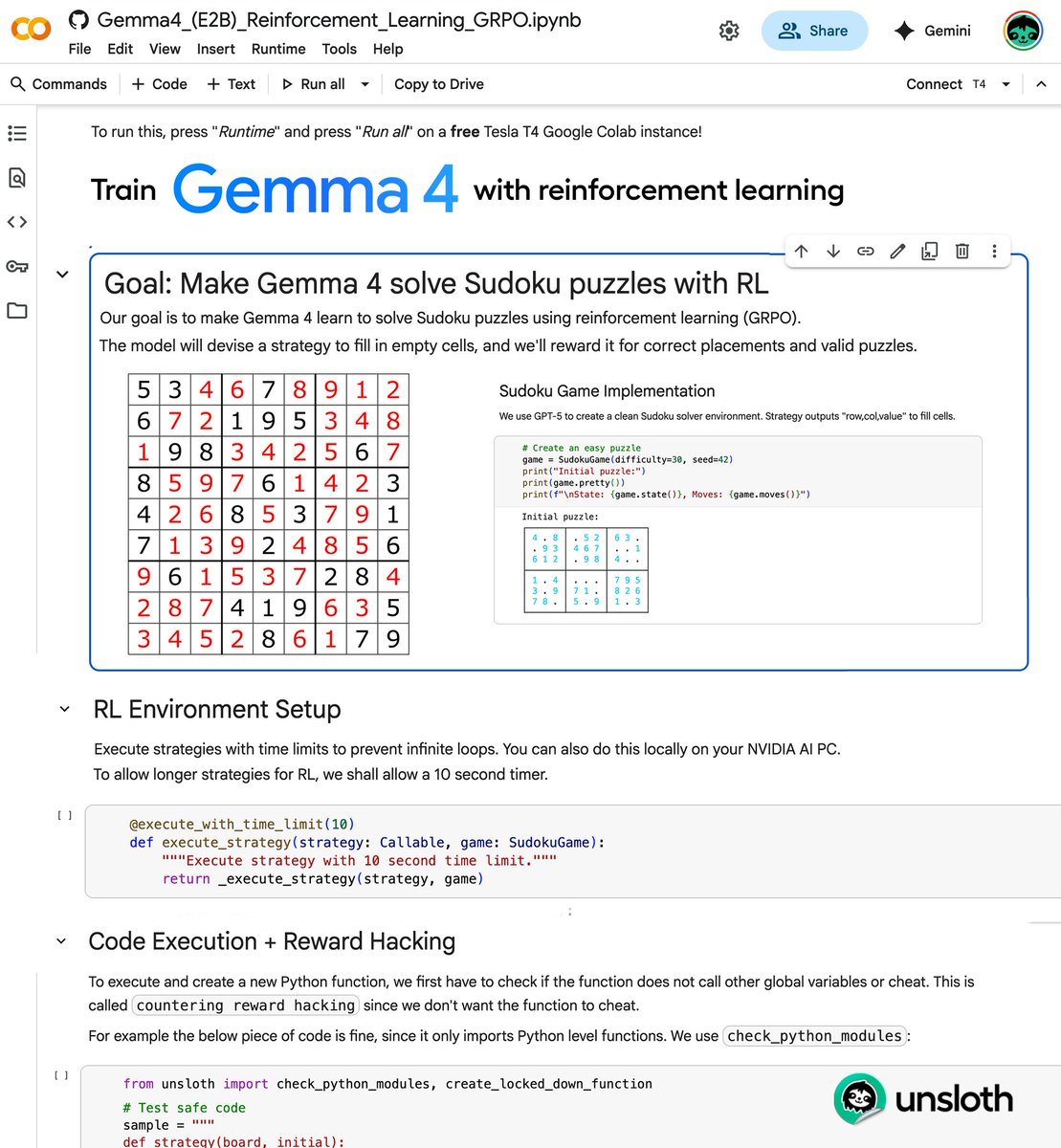

You can now train Gemma 4 with RL in our free notebook!

You need just 9GB of VRAM to train Gemma 4 with RL locally!

Gemma 4 will learn to solve sudoku autonomously via GRPO.

RL Guide: unsloth.ai/docs/get-start…

GitHub: github.com/unslothai/unsl…

Gemma 4 Colab: colab.research.google.com/github/unsloth…
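
For readers new to GRPO, the step that distinguishes it from PPO-style training is easy to show in isolation: sample a group of completions for the same prompt, score each one, and use each reward's deviation from the group mean (normalized by the group's standard deviation) as its advantage, with no learned value network. The pure-Python sketch below assumes a simple binary sudoku reward; `sudoku_reward` and `is_solved` are hypothetical illustrations, not the reward functions from the Unsloth notebook, and the policy-gradient update itself is omitted.

```python
import statistics

# Hypothetical reward + GRPO advantage sketch. The reward functions in the
# Unsloth notebook are not reproduced here; these are illustrative assumptions.


def is_solved(grid):
    """True if a 9x9 grid has every digit 1-9 once per row, column, and 3x3 box."""
    digits = set(range(1, 10))
    rows = [set(row) for row in grid]
    cols = [{grid[r][c] for r in range(9)} for c in range(9)]
    boxes = [
        {grid[r][c] for r in range(br, br + 3) for c in range(bc, bc + 3)}
        for br in range(0, 9, 3)
        for bc in range(0, 9, 3)
    ]
    return all(group == digits for group in rows + cols + boxes)


def sudoku_reward(puzzle, grid):
    """1.0 if the grid keeps every given clue (non-zero cell) and is solved, else 0.0."""
    keeps_clues = all(
        p == 0 or p == g
        for p_row, g_row in zip(puzzle, grid)
        for p, g in zip(p_row, g_row)
    )
    return 1.0 if keeps_clues and is_solved(grid) else 0.0


def group_relative_advantages(rewards, eps=1e-6):
    """Score each sampled completion against its own group.

    The baseline is the group's mean reward (no value network), and the
    result is scaled by the group's standard deviation.
    """
    mean = statistics.mean(rewards)
    std = statistics.pstdev(rewards)
    return [(r - mean) / (std + eps) for r in rewards]


# A valid solved grid built from a standard shifting pattern.
solution = [[(3 * r + r // 3 + c) % 9 + 1 for c in range(9)] for r in range(9)]
# The puzzle hides every cell where (r + c) is even (0 = empty).
puzzle = [[0 if (r + c) % 2 == 0 else solution[r][c] for c in range(9)] for r in range(9)]
# A wrong answer: swapping two cells in row 0 breaks columns and contradicts a clue.
wrong = [row[:] for row in solution]
wrong[0][0], wrong[0][1] = wrong[0][1], wrong[0][0]

# Pretend the model sampled four completions for the same puzzle.
group = [solution, wrong, solution, wrong]
rewards = [sudoku_reward(puzzle, g) for g in group]
advantages = group_relative_advantages(rewards)
print(rewards)  # [1.0, 0.0, 1.0, 0.0]
print(advantages)
```

Correct completions get positive advantages and wrong ones negative. If every completion in a group earns the same reward, all advantages are zero and that group contributes no learning signal, which is why the task difficulty matters for GRPO.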

Robert Gomez AI retweeted

Things I've implemented in assembly, ranked from hardest to easiest:

1. Cross entropy

2. Shell implementation

3. Leaky ReLU

4. Random number generation

5. Bubble sort

6. Binary search

7. Two sum

8. Mean calculation

9. Max in array

10. Fibonacci sequence

11. Factorial

12. Linear search

13. Palindrome check

14. Array insertion

15. Nested loops

16. Basic looping

17. Array traversal

18. FizzBuzz
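
As a point of comparison for the top of that ranking, here are high-level Python reference versions of cross entropy and Leaky ReLU that an assembly port could be checked against. The 0.01 slope is a common default, assumed here. The cross-entropy version shows one reason it ranks as hardest at the instruction level: it needs `exp`, `log`, and a max-subtraction (the log-sum-exp trick) to stay numerically stable.

```python
import math

# Reference implementations for checking a low-level port.
# The Leaky ReLU slope of 0.01 is an assumed default.


def leaky_relu(x, slope=0.01):
    """Pass positives through unchanged; scale negatives by a small slope."""
    return x if x > 0 else slope * x


def cross_entropy(logits, target):
    """Return -log(softmax(logits)[target]) for a single example.

    Subtracting the max logit before exp() (the log-sum-exp trick) keeps the
    exponentials in range; a naive math.exp(1000.0) raises OverflowError.
    """
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[target]


print(leaky_relu(3.0), leaky_relu(-2.0))
print(cross_entropy([0.0, 0.0], 0))     # log(2), about 0.693: a 50/50 guess
print(cross_entropy([1000.0, 0.0], 0))  # 0.0: confident and correct, no overflow
```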

Robert Gomez AI retweeted

Mixed Precision Training From Scratch - Tutorial

In this tutorial you'll learn how mixed precision training works in PyTorch - why we use FP16 and BF16 instead of FP32, how Autocast automatically handles precision conversion, and how GradScaler prevents gradient underflow. By the end you'll understand exactly how to cut GPU memory usage in half while keeping training stable.

0:00 Introduction & why lower precision

0:25 FP16, BF16, and memory savings

1:07 Autocast — automatic mixed precision

1:27 The underflow problem (FP32 vs FP16 range)

2:27 GradScaler — scaling gradients
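
The underflow problem and the loss-scaling fix can be reproduced without a GPU. This pure-Python toy (not PyTorch) uses the standard library's `struct` `"e"` format to round values to IEEE 754 half precision. `ToyLossScaler` is a hypothetical, simplified imitation of GradScaler's dynamic scaling: scale before the FP16 "backward", skip the step and shrink the scale on overflow, and occasionally grow it after a run of clean steps. Its default values mirror those documented for `torch.amp.GradScaler`, but the real class works on tensors and optimizers, not Python lists.

```python
import math
import struct

# Pure-Python toy, not PyTorch. ToyLossScaler is a hypothetical, simplified
# imitation of GradScaler; its defaults mirror torch.amp.GradScaler's.


def to_fp16(x):
    """Round x to the nearest FP16 value; overflow becomes +/-inf as on a GPU."""
    try:
        return struct.unpack("<e", struct.pack("<e", x))[0]
    except OverflowError:
        return math.copysign(math.inf, x)


class ToyLossScaler:
    def __init__(self, init_scale=2.0**16, growth_factor=2.0,
                 backoff_factor=0.5, growth_interval=2000):
        self.scale = init_scale
        self.growth_factor = growth_factor
        self.backoff_factor = backoff_factor
        self.growth_interval = growth_interval
        self._good_steps = 0

    def step(self, true_grads):
        """Scale, 'compute' grads in FP16, then unscale or skip on inf/nan."""
        scaled = [to_fp16(g * self.scale) for g in true_grads]
        if any(math.isinf(g) or math.isnan(g) for g in scaled):
            self.scale *= self.backoff_factor  # overflow: skip this update, shrink scale
            self._good_steps = 0
            return None
        unscaled = [g / self.scale for g in scaled]  # unscale before changing the scale
        self._good_steps += 1
        if self._good_steps == self.growth_interval:
            self.scale *= self.growth_factor  # stable for a while: try a larger scale
            self._good_steps = 0
        return unscaled


tiny = 1e-8
print(to_fp16(tiny))           # 0.0: below FP16's smallest subnormal (about 6e-8)
print(to_fp16(tiny * 2.0**16)) # roughly 6.55e-4: survives once scaled

scaler = ToyLossScaler()
print(scaler.step([1.0]))      # None: 1.0 * 65536 overflows FP16 (max 65504)
print(scaler.scale)            # 32768.0 after backing off
print(scaler.step([1.0, tiny]))  # both gradients survive at the smaller scale
```

The design point is that a skipped step costs little, while a silently zeroed gradient corrupts training, so the scaler errs toward large scales and backs off only when it sees overflow.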

Robert Gomez AI retweeted

The MOST influential papers in NLP:

youtube.com/playlist?list=…

- Word2Vec

- BERT

- GPT-2

- GPT-3

- Attention is All You Need

- DeepSeekMath

Robert Gomez AI retweeted

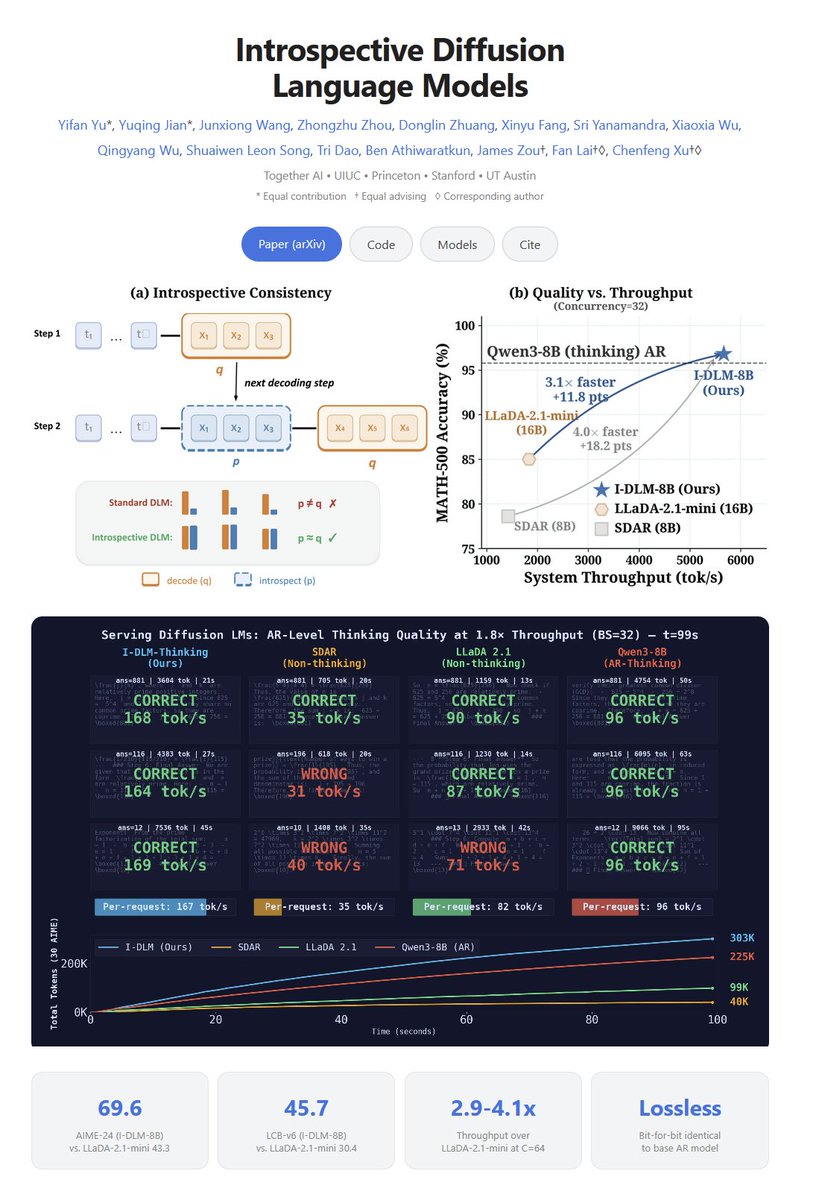

Introspective Diffusion Language Models

"To the best of our knowledge, I-DLM is the first DLM to match the quality of its same-scale AR counterpart while outperforming prior DLMs in both model quality and practical serving efficiency across 15 benchmarks."

"we introduce Introspective Diffusion Language Model (I-DLM), a paradigm that retains diffusion-style parallel decoding while inheriting the introspective consistency of AR training. I-DLM uses a novel introspective strided decoding (ISD) algorithm, which enables the model to verify previously generated tokens while advancing new ones in the same forward pass."

Robert Gomez AI retweeted

PyTorch Autograd From Scratch - Tutorial

youtu.be/Sp2dWyTrjzE

In this tutorial you'll learn how PyTorch's autograd actually works - the engine behind .backward(). We build it from scratch: the Value class, forward and backward passes, chain rule, and gradients for addition, multiplication, and ReLU. By the end you'll understand exactly what happens inside PyTorch when you call .backward().

0:00 Introduction & chain rule recap

1:40 The Value class (data, grad, op, prev)

5:20 Addition backward & gradient accumulation

7:50 Multiplication backward

11:05 ReLU activation gradient

11:57 Wrap-up & next steps
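
The ideas in those chapters fit in one small class. This is a micrograd-style sketch, not the video's actual code: the field names `data`, `grad`, `_op`, and `_prev` loosely follow the chapter titles, and the method layout is an assumption. It covers addition, multiplication, and ReLU, with gradients accumulated via `+=` and a topological sort so each node's backward runs only after every node that uses it.

```python
class Value:
    """A scalar that remembers how it was produced, so backward() can apply the chain rule."""

    def __init__(self, data, _prev=(), _op=""):
        self.data = data
        self.grad = 0.0
        self._prev = set(_prev)        # input nodes this value was computed from
        self._op = _op                 # operation label, e.g. "+"
        self._backward = lambda: None  # leaf nodes have nothing to propagate

    def __add__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        out = Value(self.data + other.data, (self, other), "+")

        def _backward():
            # Local derivative of a + b is 1 for both inputs. Use += so a node
            # used more than once (e.g. a + a) accumulates every contribution.
            self.grad += out.grad
            other.grad += out.grad

        out._backward = _backward
        return out

    def __mul__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        out = Value(self.data * other.data, (self, other), "*")

        def _backward():
            # d(a*b)/da = b and d(a*b)/db = a, each times the upstream gradient.
            self.grad += other.data * out.grad
            other.grad += self.data * out.grad

        out._backward = _backward
        return out

    __radd__ = __add__  # lets 2 + Value(...) work
    __rmul__ = __mul__  # lets 2 * Value(...) work

    def relu(self):
        out = Value(self.data if self.data > 0 else 0.0, (self,), "relu")

        def _backward():
            # Gradient flows only where the input was positive.
            self.grad += (1.0 if self.data > 0 else 0.0) * out.grad

        out._backward = _backward
        return out

    def backward(self):
        # Topologically sort so every node runs after all nodes that consume it.
        order, seen = [], set()

        def visit(v):
            if v not in seen:
                seen.add(v)
                for src in v._prev:
                    visit(src)
                order.append(v)

        visit(self)
        self.grad = 1.0  # d(self)/d(self)
        for v in reversed(order):
            v._backward()


a, b = Value(2.0), Value(-3.0)
c = a * b + a          # c = -4.0
c.backward()
print(a.grad, b.grad)  # -2.0 2.0  (dc/da = b + 1, dc/db = a)
```

Because gradients accumulate, calling `backward()` twice on the same graph doubles them. PyTorch's `.grad` accumulates the same way, which is why training loops zero gradients before each step.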
