Katherine Liu

@robo_kat

Senior Research Scientist @ToyotaResearch, previously Robotics PhD @MIT_CSAIL. Towards generalist embodied intelligence 🤖 Opinions my own!

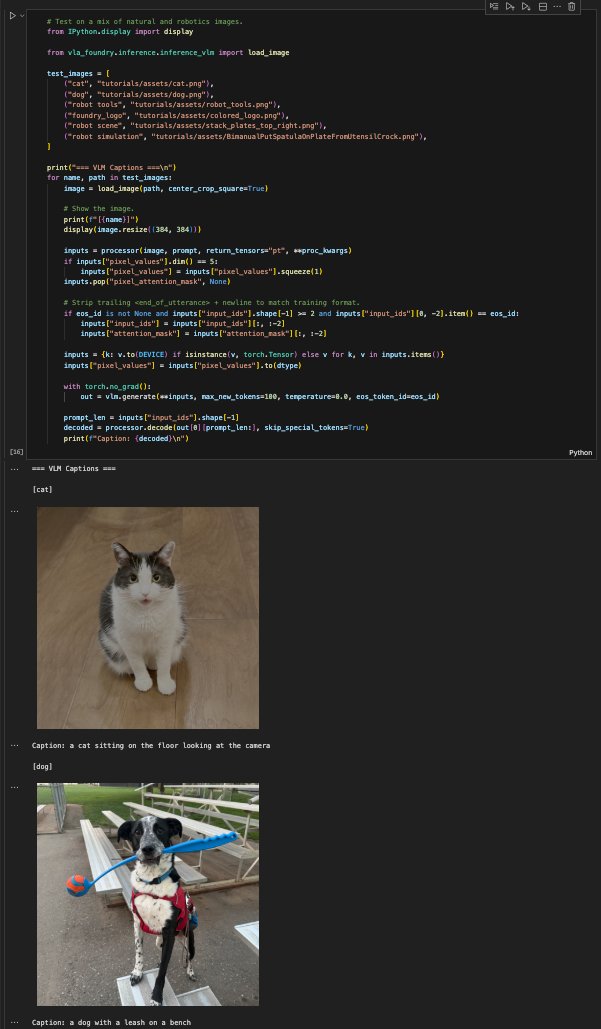

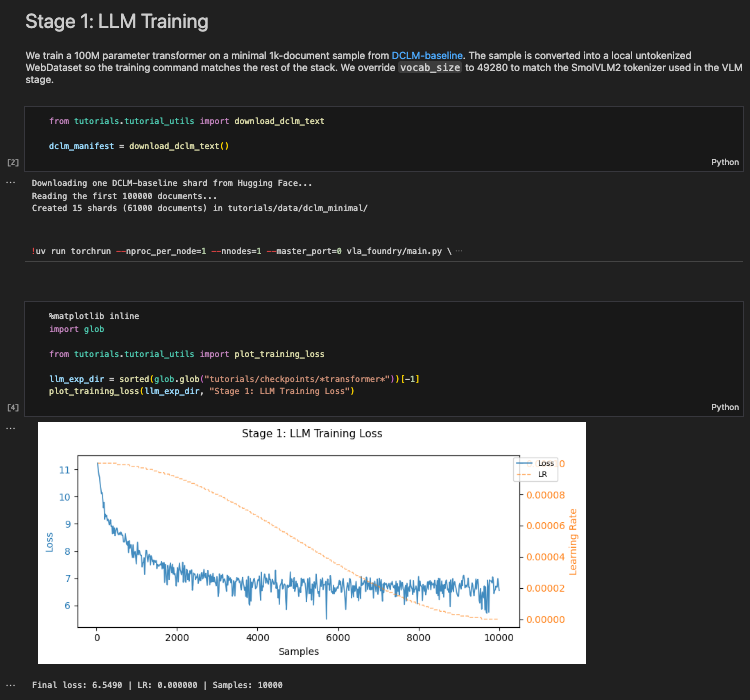

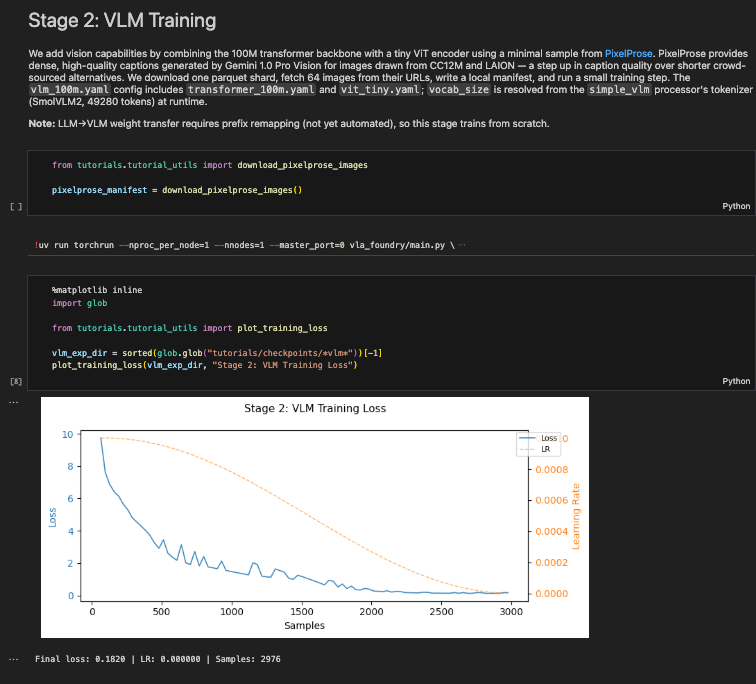

Releasing VLA Foundry: an open-source framework that unifies LLM, VLM, and VLA training in a single codebase. End-to-end control from language pretraining to action-expert fine-tuning — no more stitching together incompatible repos.

A few interesting rollouts from the Foundry-QwenVLA-2.5B multi-task model on seen tasks in sim – a 🧵. I really like behaviors that involve non-prehensile manipulation, like the little nudges in StoreCerealBoxUnderShelf.