r0

536 posts

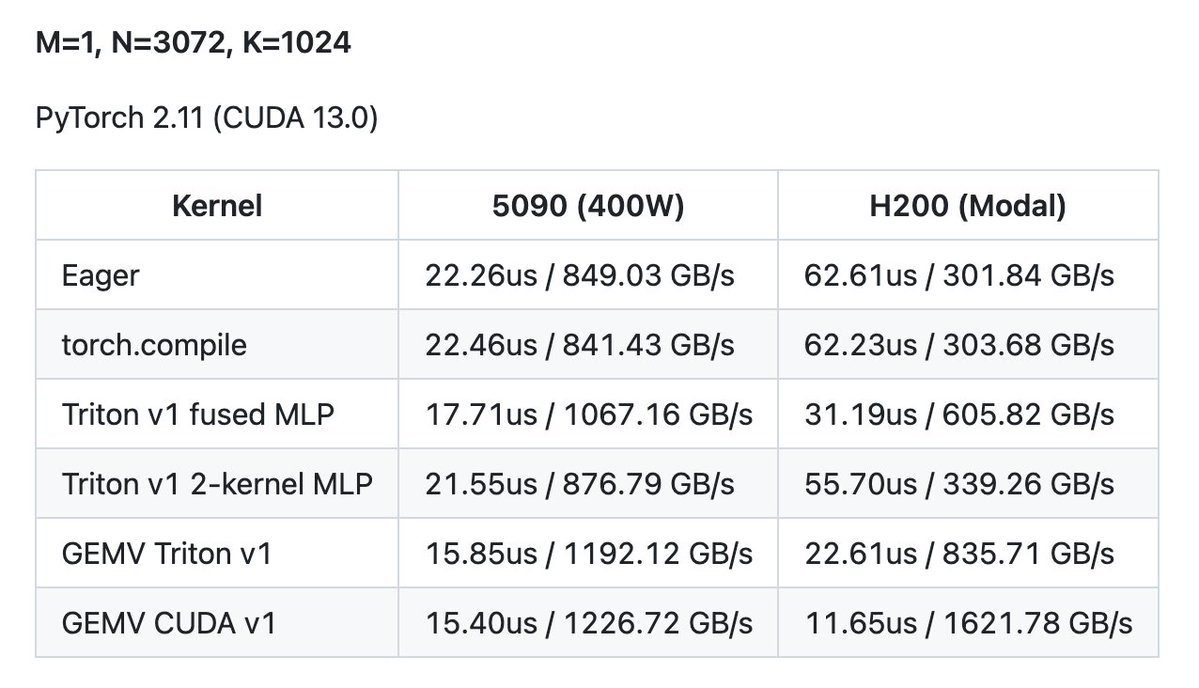

The runtime advantage of MoE comes from dynamic sparsity. But if this dynamism isn't properly handled, you might end up slower. Luckily, for BS=1 decoding, there's a nice way to do this. Instead of doing the indexing in *Python*, let's do the indexing *on the GPU*. (4/8)

Recently, I have been diving deeper into torch compile internals especially for inference related graph optimizations with custom kernels and below are my findings/learnings: Note that this is still a very high-level overview with lots of moving parts hidden behind the scenes. From what I understand the entire process of torch compile can be broken down into 5 stages. 1) Torch Dynamo: responsible for tracing the python bytecode and returning a fx GraphModule (gm). We can call it "functional graph module". 2) Pre-grad: this stage runs fx passes (both built-in and custom) on the gm before passing it to AOT autograd stage. 3) AOT autograd: this stage decomposes the unstable IR from dynamo+pre-grad stage into ATEN operations. ATEN is what pytorch uses behind the scenes. Ops like aten.addmm, aten.mul, and so on. It also builds and runs fx passes on the "joint" forward+backward graph if required (not necessary for inference only). 4) Post grad: this stage applies fx passes on the partitioned forward and backward graphs that come from AOT autograd stage. 5) Inductor codegen: this stage is still kinda a black box for me but I think it fuses ops in the graph, does autotuning, code generation and so on. Within all these stages, we can ask the torch compile backend/inductor to apply our own fx passes using the "pre_grad_custom_pass", "joint_custom_pre_pass", "joint_custom_post_pass", "post_grad_custom_pre_pass", "post_grad_custom_post_pass" present in inductor's config. Here, we can edit the graph nodes (add, remove, update) to have custom fusions that inductor may not do for us. Try thinking of an example as an exercise :) From a practical standpoint, if we wanted to have our own fx passes related to inference, the post grad pre passes (after AOT autograd, and before Inductor decomposition) is the best place to do it. At this point, we still have higher-level ATEN ops intact in the graph as nodes. For example, aten.addmm is not decomposed into aten .mm + aten.add here. To apply custom fx passes, we have two options: > Pattern replacement: Inductor provides a nice helper that lets us define a pattern function and a replacement function using aten ops or custom torch ops. One caveat is that the pattern must be robust, even a small change and inductor won't replace it. > Node surgery: We can search and edit the nodes in the graph directly. This is much more robust but it can get pretty hard for complex patterns. This is not documented much but by leveraging what torch compile/inductor provides us, one can as much graph optimization as they want. Another thing that inductor provides us is, registering custom lowering for built-in aten ops but that is a whole another topic to discuss, maybe next time.

We just OCR'd 27,000 arxiv papers into Markdown using an open 5B model, 16 parallel HF Jobs on L40S GPUs, and a mounted bucket. Total cost: $850 Total time: ~29 hours Jobs that crashed: 0 This now powers "Chat with your paper" on hf.co/papers

Trtllmgen kernels are now open. Fastest prefill and decode kernels for our target workloads. We wrote these to win InferenceX, MLPerf, other benchmarks. Powering some of today’s top served models. Dive in, learn, use them, or level up your own. Enjoy. github.com/flashinfer-ai/…