Ron Arel

96 posts

@ronusedh

closing the loop | @IntologyAI

San Francisco, CA · Joined September 2025

47 Following · 50 Followers

Pinned Tweet

Genuinely horrific way to experience life and the people around you. People don’t try to make new friends because it’s “what you are supposed to do”. Life is given meaning by the wonderful people you meet along the way and choose to spend time with. You don’t have to be friends with everyone, but to shut off the possibility of new human connection due to a lack of calculated “ROI” is a fundamental misunderstanding of the human experience.

Ron Arel retweeted

I've joined @IntologyAI!

I'm excited to push the boundaries of AI-accelerated scientific discovery with an incredibly driven and talented team. Looking forward to diving deep into research on AI-driven automation and creativity!

Ron Arel retweeted

Locus achieves a new WR on the nanogpt speedrun by developing a fused kernel:

Larry Dial@classiclarryd

New NanoGPT Speedrun WR at 105.9s (-1.0s) from @soren_dunn_, with a Triton kernel to fuse the logit softcap and multi-token prediction cross-entropy calc. Interestingly, Soren mentioned that their autonomous system Locus at Intology discovered and implemented the improvement. github.com/KellerJordan/m…

Ron Arel retweeted

Awesome results by the @poetiq_ai team!

Poetiq@poetiq_ai

We finally had a moment to run our system with GPT-5.2 X-High on ARC-AGI-2! Using the same Poetiq harness as before, we saw results as high as 75% at under $8 / problem using GPT-5.2 X-High on the full PUBLIC-EVAL dataset. This beats the previous SOTA by ~15 percentage points.

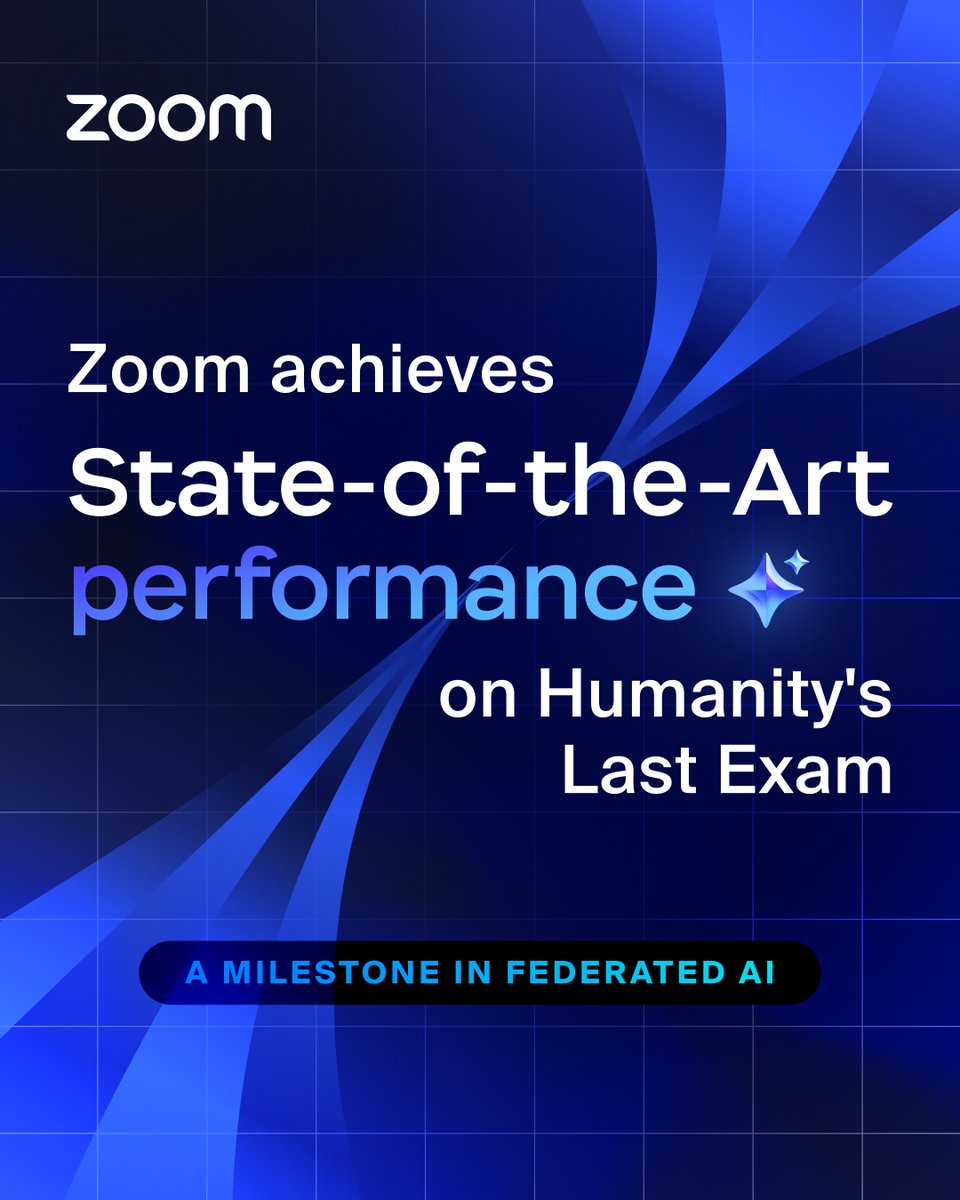

Zoom achieved a new state-of-the-art (SOTA) result on Humanity’s Last Exam (HLE): 48.1% — outperforming other AI models with a 2.3% jump over the previous SOTA. ✨

HLE is one of the most rigorous tests in AI, built to measure real expert-level knowledge and deep reasoning across complex problems.

What that means for you:

✅ More accurate summaries

✅ Better reasoning

✅ More powerful automation in AI Companion 3.0

Click the link to learn more. 🔗 zm.me/3MxVbyS

While TMZ has obtained several real images of Diddy at Fort Dix federal prison ... this video and the other crystal clear selfies you might have seen, are NOT one of them. tmz.me/dj0oWUX

@niklassheth @IntologyAI We did! We also brought in a 3rd party to verify the most exciting ones! You can check them out on the evals repo: github.com/IntologyAI/loc…

@IntologyAI Have you manually validated that the generated kernels are correct?

Introducing Locus: the first AI system to outperform human experts at AI R&D

Locus conducts research autonomously over multiple days and achieves superhuman results on RE-Bench given the same resources as humans, as well as SOTA performance on GPU kernel & ML engineering tasks.

RE-Bench is a collection of several frontier AI research tasks that typically take human experts (e.g., top ML PhDs and frontier lab researchers) several days. By scaling experimentation to far longer time horizons than previous systems, Locus represents a step change in AI scientist capabilities. 🧵
