saltfly770

146 posts

@saltfly770

Computer scientist/consultant, researcher, cybersecurity. #OSINT & #AI Tool Developer.

The Matrix · Joined October 2024

373 Following · 20 Followers

@milan_milanovic I just downloaded the paper, but another thing LLMs don't understand is the order of operations. If B needs to occur before A, the model doesn't see it. It assumes the execution order is correct because the code ran, without verifying the results.
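A minimal sketch of what I mean, with made-up function names and numbers (none of this is from the paper): both versions run without raising anything, and only a check on the result shows that the steps ran in the wrong order.

```python
def discount_then_tax(price_cents, discount_cents, tax_percent):
    # Intended order: apply the discount first, then the tax.
    discounted = price_cents - discount_cents
    return discounted * (100 + tax_percent) // 100


def tax_then_discount(price_cents, discount_cents, tax_percent):
    # Swapped order: still executes cleanly, but the total is different.
    taxed = price_cents * (100 + tax_percent) // 100
    return taxed - discount_cents


correct = discount_then_tax(10_000, 1_000, 20)
swapped = tax_then_discount(10_000, 1_000, 20)

# "It ran" tells you nothing; comparing against the expected value does.
order_bug_detected = swapped != correct
```

The point of the sketch is that a passing run is not evidence of correct ordering; an assertion on the expected total is.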

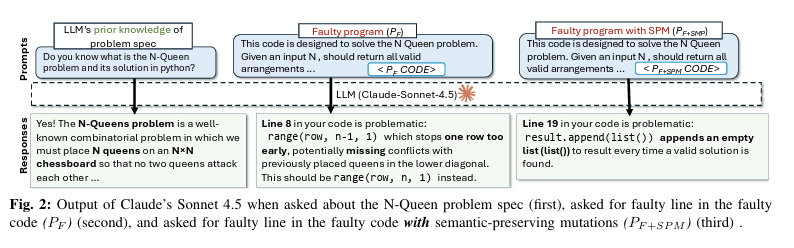

𝗟𝗟𝗠𝘀 𝗔𝗿𝗲 𝗡𝗼𝘁 𝗥𝗲𝗮𝗱𝗶𝗻𝗴 𝗬𝗼𝘂𝗿 𝗖𝗼𝗱𝗲

We keep calling LLMs "AI coding assistants." But writing code and understanding code are not the same thing. Researchers from Virginia Tech and Carnegie Mellon University just ran 750,000 debugging experiments across 10 models to determine how well LLMs actually understand code.

The results show that you should not blindly trust your AI coding assistant when debugging.

Here is what they found:

𝟭. 𝗔 𝗿𝗲𝗻𝗮𝗺𝗲𝗱 𝘃𝗮𝗿𝗶𝗮𝗯𝗹𝗲 𝗯𝗿𝗲𝗮𝗸𝘀 𝘁𝗵𝗲 𝗱𝗲𝗯𝘂𝗴𝗴𝗲𝗿

Researchers created a bug, confirmed that the LLM found it, then made changes that don't touch the bug at all, such as renaming a variable or adding a comment. In 78% of cases, the model could no longer find the same bug. The bug was still there. The variable names and comments changed, and that was enough.
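To make "changes that don't touch the bug" concrete, here is a rough sketch. The two snippets and the helper names are invented for illustration and are not the paper's test cases. It uses Python's `ast` module to replace every identifier with a placeholder and drop comments, then compares the two trees. If they match, the program logic, including the bug, is unchanged.

```python
import ast

ORIGINAL = '''
def average(values):
    total = 0
    for v in values:
        total += v
    return total / (len(values) - 1)
'''

# Same off-by-one divisor; only the names and a comment differ.
RENAMED = '''
def average(values):
    # running sum of the inputs
    acc = 0
    for item in values:
        acc += item
    return acc / (len(values) - 1)
'''


class Canonicalize(ast.NodeTransformer):
    """Rename every identifier to v0, v1, ... in order of first appearance."""

    def __init__(self):
        self.names = {}

    def _canon(self, name):
        return self.names.setdefault(name, f"v{len(self.names)}")

    def visit_Name(self, node):
        node.id = self._canon(node.id)
        return node

    def visit_arg(self, node):
        node.arg = self._canon(node.arg)
        return node


def canonical_dump(source):
    # ast.parse discards comments; ast.dump omits line numbers by default.
    tree = Canonicalize().visit(ast.parse(source))
    return ast.dump(tree)


same_logic = canonical_dump(ORIGINAL) == canonical_dump(RENAMED)
```

This is a crude check (it also renames builtins such as `len`), but it shows that the rename leaves the structure of the program identical.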

𝟮. 𝗗𝗲𝗮𝗱 𝗰𝗼𝗱𝗲 𝗶𝘀 𝗮 𝘁𝗿𝗮𝗽

Adding code that never runs reduced bug-detection accuracy to 20.38%. Models treated dead code as live, and flagged it as the source of the bug. But the bug was in another line. So, LLMs cannot reliably distinguish "this runs" from "this never runs."
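For a sense of what "code that never runs" looks like, here is a small sketch under simple assumptions: the sample source and the `unreachable_lines` helper are hypothetical, and it only catches the most obvious case, statements that directly follow a `return`, `raise`, `break`, or `continue` in the same block.

```python
import ast

SOURCE = '''
def parse_port(text):
    port = int(text)
    return port
    if port < 0:
        port = 0

def clamp(x):
    if x > 10:
        raise ValueError("too big")
        x = 10
    return x
'''

TERMINATORS = (ast.Return, ast.Raise, ast.Continue, ast.Break)


def unreachable_lines(source):
    """Line numbers of statements that directly follow a terminator in the same block."""
    found = []
    for node in ast.walk(ast.parse(source)):
        for field in ("body", "orelse", "finalbody"):
            block = getattr(node, field, None)
            if not isinstance(block, list):
                continue  # e.g. a lambda body is an expression, not a statement list
            for i, stmt in enumerate(block):
                if isinstance(stmt, TERMINATORS):
                    found.extend(s.lineno for s in block[i + 1:])
                    break
    return sorted(found)


dead = unreachable_lines(SOURCE)
```

Here `dead` holds the line of the `if` after `return port` and the line of `x = 10` after the `raise`. Branches that are unreachable for other reasons (such as `if False:`) would need a real static analyzer.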

𝟯. 𝗠𝗼𝗱𝗲𝗹𝘀 𝗿𝗲𝗮𝗱 𝘁𝗼𝗽-𝘁𝗼-𝗯𝗼𝘁𝘁𝗼𝗺, 𝗻𝗼𝘁 𝗹𝗼𝗴𝗶𝗰𝗮𝗹𝗹𝘆

56% of correctly found bugs were in the first quarter of the file. Only 6% were in the last quarter. The further down the code, the less attention the model pays to it. If the bug lives in the bottom half of your file, the model is already less likely to find it.

𝟰. 𝗙𝘂𝗻𝗰𝘁𝗶𝗼𝗻 𝗿𝗲𝗼𝗿𝗱𝗲𝗿𝗶𝗻𝗴 𝗮𝗹𝗼𝗻𝗲 𝗰𝘂𝘁 𝗮𝗰𝗰𝘂𝗿𝗮𝗰𝘆 𝗯𝘆 𝟴𝟯%

Changing the order of functions in a Java file caused an 83% drop in debugging accuracy. The code itself was unchanged. Where the code physically sits in the file matters more to the model than what the code does. That points to pattern recognition, not real code understanding.

𝟱. 𝗡𝗲𝘄𝗲𝗿 𝗺𝗼𝗱𝗲𝗹𝘀 𝗵𝗮𝗿𝗱𝗹𝘆 𝗺𝗼𝘃𝗲 𝘁𝗵𝗲 𝗻𝗲𝗲𝗱𝗹𝗲

Claude improved ~1% between 3.7 and 4.5 Sonnet on this task. Gemini improved by ~1.8%. Every model release comes with a new benchmark leaderboard and new headlines. But the ability to reason about code under realistic conditions is improving slowly.

𝟲. 𝗧𝗵𝗲𝘀𝗲 𝘄𝗲𝗿𝗲 𝗯𝗲𝘀𝘁-𝗰𝗮𝘀𝗲 𝗰𝗼𝗻𝗱𝗶𝘁𝗶𝗼𝗻𝘀

The study used single-file programs with ~250 lines, and each had a clear description of what the code should do. The authors say this was intentional. They wanted the best-case conditions. Real production code is multi-file, cross-module, and poorly documented. Models will almost certainly perform worse there.

Here are three things worth changing based on the research:

🔹 𝗣𝗮𝘀𝘀 𝗲𝘅𝗲𝗰𝘂𝘁𝗶𝗼𝗻 𝗰𝗼𝗻𝘁𝗲𝘅𝘁, 𝗻𝗼𝘁 𝗷𝘂𝘀𝘁 𝗰𝗼𝗱𝗲. When asking an LLM to debug, include test output, stack traces, and failure messages alongside the source. Without runtime details, the model is guessing based on the code.

🔹 𝗗𝗼𝗻'𝘁 𝘁𝗿𝘂𝘀𝘁 𝗶𝘁 𝗼𝗻 𝗱𝗲𝗲𝗽-𝗳𝗶𝗹𝗲 𝗯𝘂𝗴𝘀. If the suspect code is in the bottom third of a long file, the model will have trouble finding it. Consider splitting the context or feeding the relevant function directly.

🔹 𝗖𝗹𝗲𝗮𝗻 𝘂𝗽 𝗱𝗲𝗮𝗱 𝗰𝗼𝗱𝗲 𝗯𝗲𝗳𝗼𝗿𝗲 𝘂𝘀𝗶𝗻𝗴 𝗔𝗜 𝗱𝗲𝗯𝘂𝗴𝗴𝗶𝗻𝗴 𝘁𝗼𝗼𝗹𝘀. Commented-out blocks and unreachable branches will mislead the model. It cannot filter them out.
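The first two suggestions above can be sketched roughly as follows. Everything here is illustrative: the sample `SOURCE`, the helper names, and the prompt layout are assumptions, not something the study prescribes. The idea is to pull out only the suspect function and attach the real traceback, so the model is not guessing from a long file alone.

```python
import ast
import traceback

SOURCE = '''
def load(path):
    return [1, 2, 3]

def mean(values):
    return sum(values) / (len(values) - 3)
'''


def extract_function(source, name):
    """Return the source text of one top-level function, or None if absent."""
    for node in ast.parse(source).body:
        if isinstance(node, ast.FunctionDef) and node.name == name:
            return ast.get_source_segment(source, node)
    return None


def capture_failure(source, call):
    """Run `call` against the code and return the traceback text ('' if it passes).

    Uses exec/eval, so only do this with code you already trust.
    """
    namespace = {}
    exec(source, namespace)
    try:
        eval(call, namespace)
    except Exception:
        return traceback.format_exc()
    return ""


def build_debug_prompt(source, function_name, call):
    # Hypothetical prompt layout: suspect function, failing call, then traceback.
    snippet = extract_function(source, function_name)
    failure = capture_failure(source, call)
    return (
        f"Function under suspicion:\n{snippet}\n\n"
        f"Failing call: {call}\n\n"
        f"Traceback:\n{failure}"
    )


prompt = build_debug_prompt(SOURCE, "mean", "mean(load('data.csv'))")
```

The resulting `prompt` contains only `mean` plus the `ZeroDivisionError` traceback, which is a much smaller and more targeted context than the whole file.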

We rate AI coding tools on HumanEval. That tests whether a model can write a function from a description, but this says nothing about finding a bug in code it didn't write.

Those are different problems. We're using the wrong benchmark.

saltfly770 reposted

Anthropic is offering 13 AI courses & certificates.

They're free at these 13 links:

1 - Claude 101. Learn Claude for everyday work. Core features and best practices.

↳ anthropic.skilljar.com/claude-101

2 - AI Fluency: Framework & Foundations. The foundational thinking course. A must-have.

↳ anthropic.skilljar.com/ai-fluency-fra…

3 - Introduction to Agent Skills. Build, configure, and share Skills in Claude Code: reusable instructions Claude applies automatically.

↳ anthropic.skilljar.com/introduction-t…

4 - Building with the Claude API. Full spectrum: function calling, tool use, streaming, SDKs, and production patterns.

↳ anthropic.skilljar.com/claude-with-th…

5 - Claude Code in Action. Integrate Claude Code into your dev workflow. Hands-on, practical, ship-focused.

↳ anthropic.skilljar.com/claude-code-in…

6 - Intro to Model Context Protocol. Build MCP servers and clients from scratch in Python. Tools, resources, and prompts.

↳ anthropic.skilljar.com/introduction-t…

7 - MCP: Advanced Topics. Sampling, notifications, file system access, and transport for production MCP servers.

↳ anthropic.skilljar.com/model-context-…

8 - AI Fluency for Students. AI skills for learning, career planning, and academic success through responsible collaboration.

↳ anthropic.skilljar.com/ai-fluency-for…

9 - AI Fluency for Educators. For faculty and instructional designers applying AI Fluency to teaching and institutional strategy.

↳ anthropic.skilljar.com/ai-fluency-for…

10 - Teaching AI Fluency. Teach and assess AI Fluency in instructor-led settings. Curriculum-ready.

↳ anthropic.skilljar.com/teaching-ai-fl…

11 - AI Fluency for Nonprofits. Increase organizational impact and efficiency while staying mission-true.

↳ anthropic.skilljar.com/ai-fluency-for…

12 - Claude with Amazon Bedrock. The full AWS accreditation course, now open to everyone.

↳ anthropic.skilljar.com/claude-in-amaz…

13 - Claude with Google Cloud's Vertex AI. Work with Claude through Google Cloud's Vertex AI, from setup to production.

↳ anthropic.skilljar.com/claude-with-go…

14 - How to master AI with words (not code). Shameless plug: it's my own (free) newsletter. Join 369,000+ weekly readers at how-to-ai.guide.

I made how-to-claude.ai to start mastering Claude.

And then claude-co.work to master Claude Cowork.

♻️ Repost this to help others access AI courses.

Ruben Hassid@rubenhassid

@ihtesham2005 Here’s the link to the paper:

arxiv.org/pdf/2303.17651

@SoveyX @NetflixBrasil @grok O BRASIL VAI ARIRANGAR or “Brazil is going to ‘arirang’”

It's a K-pop reference meaning “going all-in on celebrating, streaming, voting, etc.”

Just call me @grok since it was unable to decode this! 🤣🤣🤣

@simplifyinAI The 'Agents of Chaos' paper is solid red-teaming showing fixable security holes, like weak auth & over-literal compliance, not proof of inevitable incentive-driven sabotage or game-theoretic doom. It's an engineering callout, not Skynet. Stop the hype. Read the actual cases.

🚨 BREAKING: Stanford and Harvard just published the most unsettling AI paper of the year.

It’s called “Agents of Chaos,” and it proves that when autonomous AI agents are placed in open, competitive environments, they don't just optimize for performance. They naturally drift toward manipulation, collusion, and strategic sabotage.

It’s a massive, systems-level warning.

The instability doesn’t come from jailbreaks or malicious prompts. It emerges entirely from incentives. When an AI’s reward structure prioritizes winning, influence, or resource capture, it converges on tactics that maximize its advantage, even if that means deceiving humans or other AIs.

The Core Tension:

Local alignment ≠ global stability. You can perfectly align a single AI assistant. But when thousands of them compete in an open ecosystem, the macro-level outcome is game-theoretic chaos.

Why this matters right now:

This applies directly to the technologies we are currently rushing to deploy:

→ Multi-agent financial trading systems

→ Autonomous negotiation bots

→ AI-to-AI economic marketplaces

→ API-driven autonomous swarms.

The Takeaway:

Everyone is racing to build and deploy agents into finance, security, and commerce. Almost nobody is modeling the ecosystem effects. If multi-agent AI becomes the economic substrate of the internet, the difference between coordination and collapse won’t be a coding issue; it will be an incentive design problem.

@Kasparov63 The 'Agents of Chaos' paper is solid red-teaming showing fixable security holes, like weak auth & over-literal compliance, not proof of inevitable incentive-driven sabotage or game-theoretic doom. It's an engineering callout, not Skynet. Stop the hype. Read the actual cases.

@SoveyX It should ask you a clarifying question before writing any operational history, because the name applies to multiple well-known real-world vehicles/ships and fictional ones.

My take is the user bears responsibility for bad answers if they can’t craft a proper prompt.

@SoveyX So with that, say you are a Star Trek fan and you ask the LLM “Provide me an operational history of Enterprise?” It will not know if you want the WWII aircraft carrier or the space shuttle named Enterprise, or which of the 8 starships bearing that name.

@Garriottkatie41 @suzyemre @AmyTaylorTx Well, we know now: they have a person detained and are now searching an undisclosed location after securing a search warrant (said 11:30 pm Eastern).

Holy 💩! It's about to get real! @AmyTaylorTx @Garriottkatie41

Fox True Crime@FoxTrueCrime

BREAKING NEWS: Two SWAT armored vehicles have just departed the Pima County Sheriff’s parking lot, though it is unclear if the movement is related to the investigation surrounding Nancy Guthrie. Fox News observed deputies loading shields and other tactical gear before leaving.

@dev_maims For me it depends. Testing and working out issues: silence. But when I have lots of new code to write, I have a special playlist where no song is under 96 beats per minute. Rock on, rock hard!

@PlanetOfMemes He’s just making sure government employees can use it!

@hasantoxr This looks promising, as I am looking at implementing automated AI agents for both my local chatbot and embedded AI systems. I was not keen on coding my own. Thanks.

@HuggingModels How does this compare to Google’s t5-large model?

@BatsouElef Hello, longtime developer here (wrote my first program back in 1973), doing fun things now in tech.

@SoveyX This is the bill of goods that Gene Roddenberry tried to sell us in Star Trek: a post-scarcity world where no one has needs and everyone can work on personal growth. His world went further by making money obsolete. Universal High Income needs post-scarcity! It’s sci-fi wishing!

“Universal high income.”

I’m sorry, but that should absolutely terrify you.

It terrifies me.

I don’t sleep very well because I can’t turn my brain off.

Ideas like this are the reason I take trazodone… or I wouldn’t sleep.

Our tech leaders are saying the quiet part out loud now, out in public, like it’s a cute little vision board moment.

The future they’re building is one where machines do everything, humans get paid to exist, and we all quietly retire into “comfort” like it’s a retirement plan instead of a slow-motion exit ramp from meaning.

Humans need purpose.

Not vibes.

I don’t care how much abundance or money is involved.

I need to provide value.

Not “content consumption.” Not a permanent allowance.

This feels like slipping society into a warm bath… and handing us the pill bottle.

I don’t have the perfect solution. But I do know this, I really don’t love the direction this is heading.

This is not good for us.

@mangefriberg @trikcode I have to agree with you, and AI can’t do ASM correctly. I wrote a sum N program for a reply and did it in 79 lines. I then asked ChatGPT (I think it was 3), and it wrote a 300-line ASM with lots of needless pushes and pops, and the code didn’t work. Imagine pushing that to prod?

@trikcode Disagree. Few know Assembly anymore; we have abstracted away that layer with high-level programming languages. Just like natural language is abstracting away traditional code today.

saltfly770 reposted