sbstndbs

171 posts

@sbstndbs

Perf SWE | AI Inference Chips, HPC & Physics

wow Qwen3.5-27B score on Humanity's Last Exam 🚀

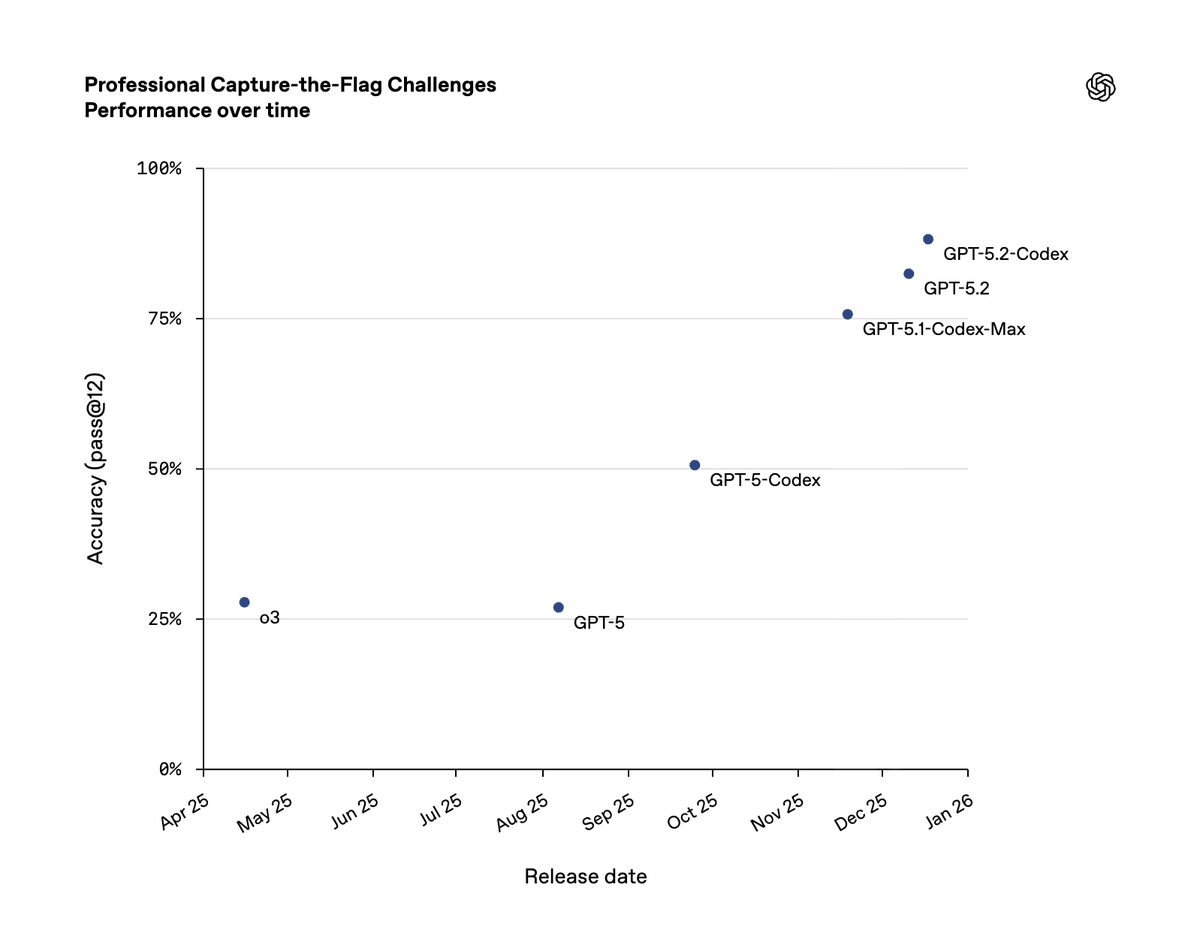

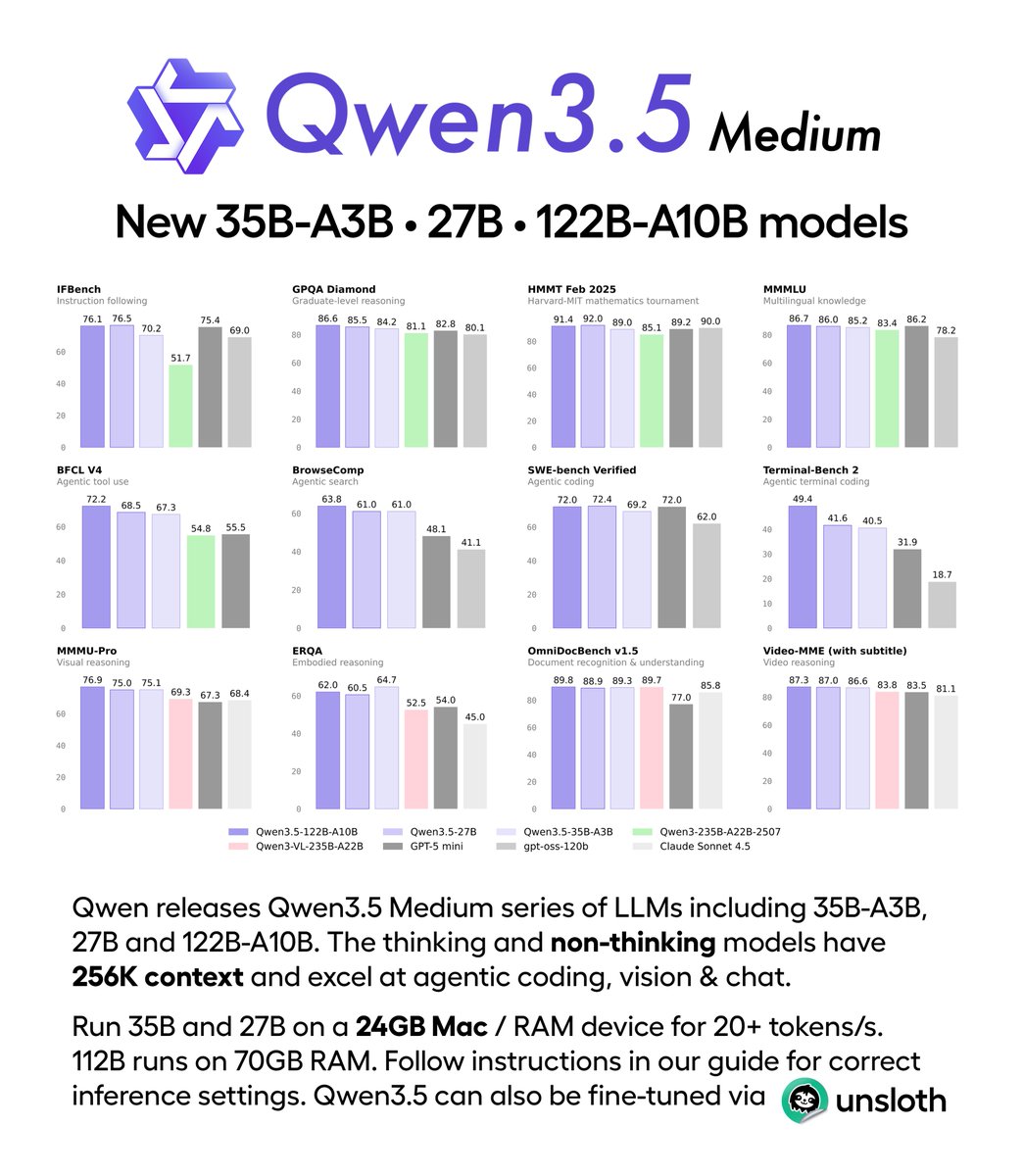

🚀 Introducing the Qwen 3.5 Medium Model Series
Qwen3.5-Flash · Qwen3.5-35B-A3B · Qwen3.5-122B-A10B · Qwen3.5-27B
✨ More intelligence, less compute.
• Qwen3.5-35B-A3B now surpasses Qwen3-235B-A22B-2507 and Qwen3-VL-235B-A22B, a reminder that better architecture, data quality, and RL can move intelligence forward, not just bigger parameter counts.
• Qwen3.5-122B-A10B and 27B continue narrowing the gap between medium-sized and frontier models, especially in more complex agent scenarios.
• Qwen3.5-Flash is the hosted production version aligned with 35B-A3B, featuring:
  – 1M context length by default
  – Official built-in tools
🔗 Hugging Face: huggingface.co/collections/Qw…
🔗 ModelScope: modelscope.cn/collections/Qw…
🔗 Qwen3.5-Flash API: modelstudio.console.alibabacloud.com/ap-southeast-1…
Try in Qwen Chat 👇
Flash: chat.qwen.ai/?models=qwen3.…
27B: chat.qwen.ai/?models=qwen3.…
35B-A3B: chat.qwen.ai/?models=qwen3.…
122B-A10B: chat.qwen.ai/?models=qwen3.…
Would love to hear what you build with it.

Ile-de-France: The first section of line 18 of the automated metro @GdParisExpress (Christ de Saclay↔️Massy-Palaiseau) will indeed open in October 2026. A first in 28 years for the Greater Paris metro, after 16 years of studies and construction.

Gemini 3 models from @Google @GoogleDeepMind have made a significant 2X SOTA jump on ARC-AGI-2 (Semi-Private Eval)
Gemini 3 Pro: 31.11%, $0.81/task
Gemini 3 Deep Think (Preview): 45.14%, $77.16/task

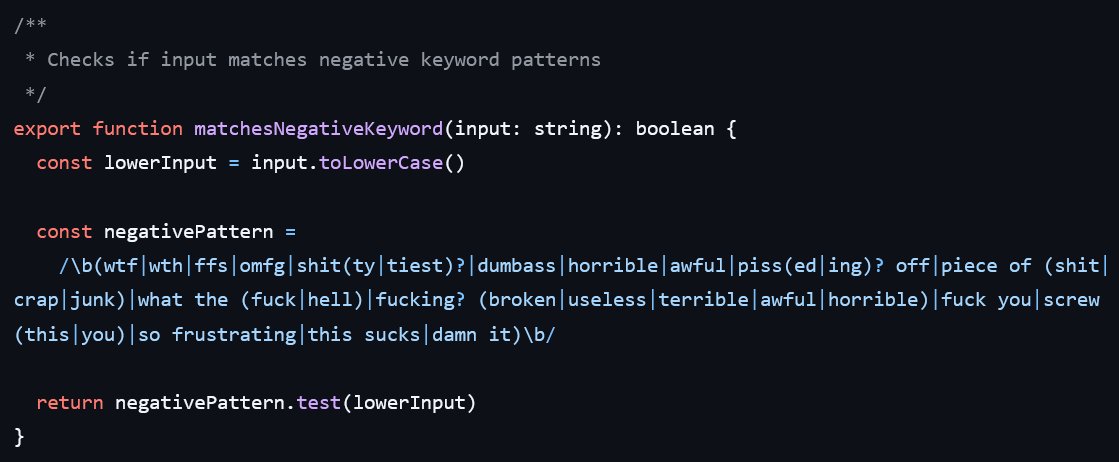

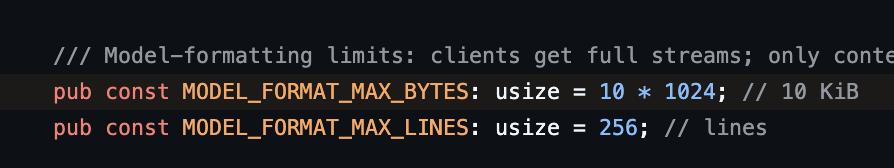

Heh, recent Codex truncates tool outputs before they are passed to the model, rather than as part of context/history clean-up, making MCP servers a tiny bit less useful. github.com/openai/codex/i…