Supreme Leader Wiggum

31.6K posts

@ScriptedAlchemy

Infra Architect @ ByteDance. Maintainer of @webpack @rspack_dev - creator of #ModuleFederation #auADHD #synesthesia own opinions.

Redmond, WA · Joined June 2018

720 Following · 18.5K Followers

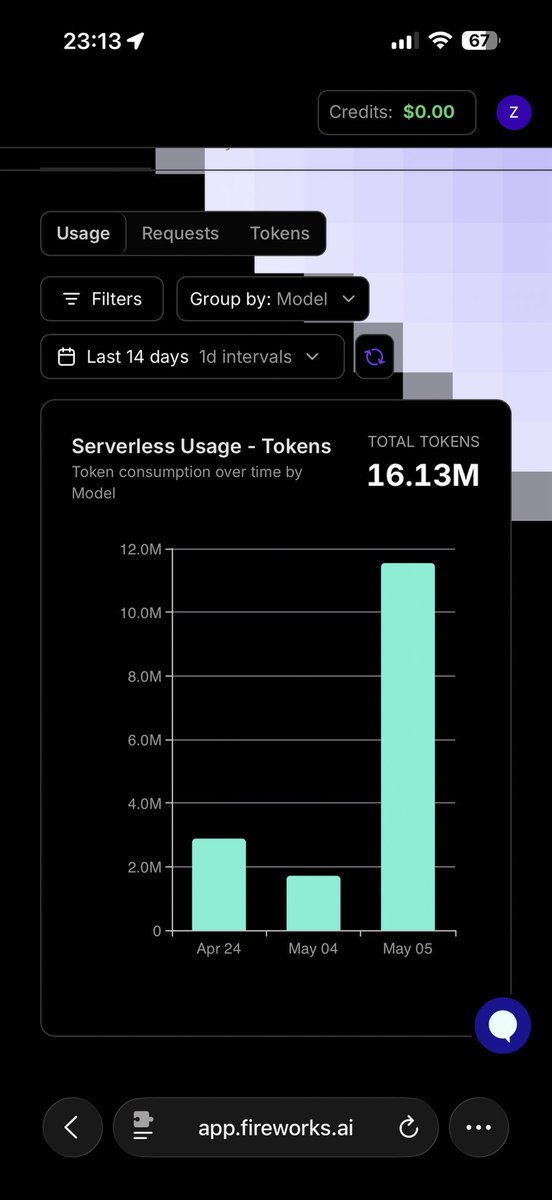

No. It converts posts and content into numerical representations for various algorithms, which in turn are used to train model weights: features like novelty, expected decay, and industry. I use embedders to create clusters and vectors from the actual original text, but that’s not using the stuff the LLM does.

@ScriptedAlchemy So it processes this and determines picks, entries and exits?

Supreme Leader Wiggum retweeted

So, @rspack_dev 2.0 was launched a few weeks ago and Meteor is on the official ecosystem list 🎉

The big win for large Meteor apps: persistent cache now drops memory usage by 20%+, and SWC minimizer cache hits make builds ~50% faster.

Less RAM, faster builds. We'll take it 🤝

Supreme Leader Wiggum retweeted

I saw a lot of people saying Module Federation doesn't work with Angular. Felt like there was a lack of examples out there.

So here's two: one with @vite_js 8 and another with @rspack_dev Rsbuild.

@yagiznizipli @X I’ll help you guys move off webpack

@oliver_bauer @nstlopez No discord. Just DMs in discord

@ScriptedAlchemy @nstlopez Can I also join the discord ? Currently building my second trading after a first on trend / news follower strategy

@ScriptedAlchemy @nstlopez Wait you have your own discord channel?

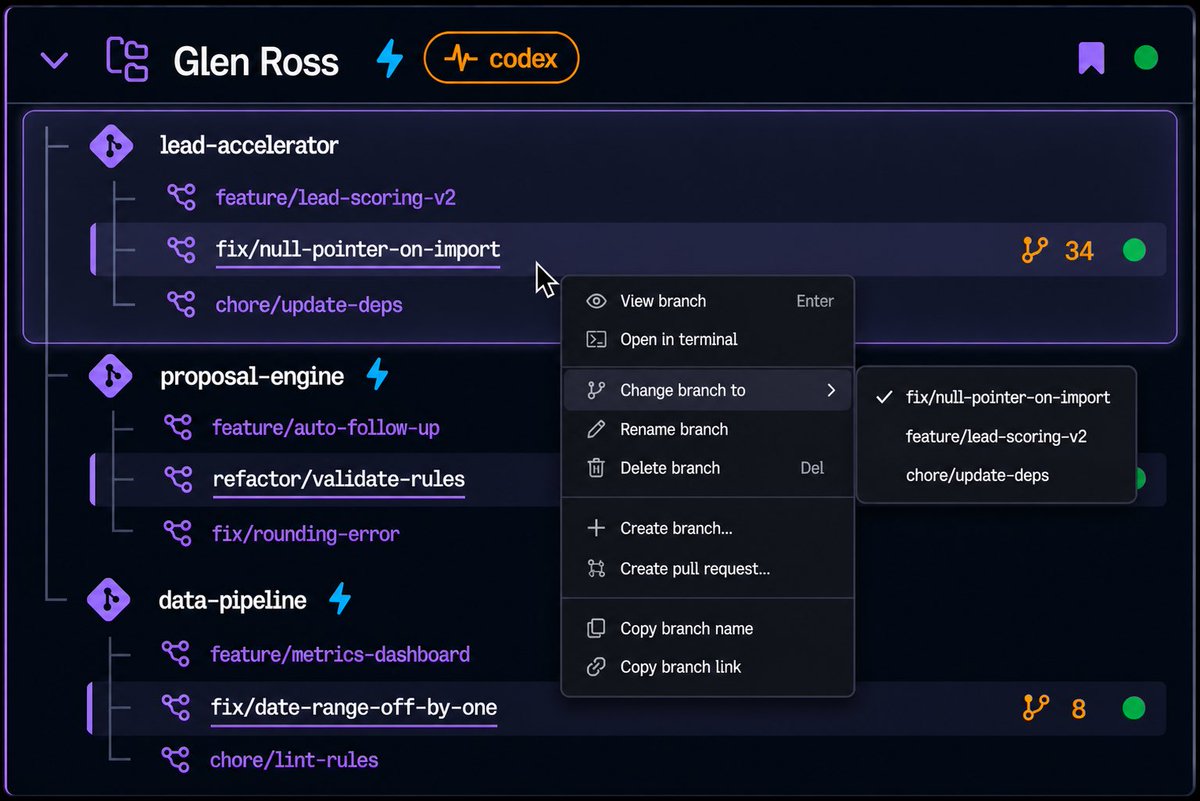

@jeffscottward Yeah I use them a lot. What would be nice is if this were “stacked” worktrees since usually work has dependencies on other agents. So restacking and rebasing a worktree stack would be quite useful

Is there anyone out there who uses git worktrees really aggressively or agent orchestrators?

Like creating multiple branches within a worktree off of a base repo?

————

We are potentially cooking up something really crazy in Maestro and wondering if more than one branch per worktree makes any sense realistically?

Typically it’s one feature branch per worktree, right?

@ScriptedAlchemy

@theo

@kenwheeler

@elonmusk

@jbrukh

@Jason

Please Retweet!

#git #worktree #ai #ui #agent #orchestration #claude #codex

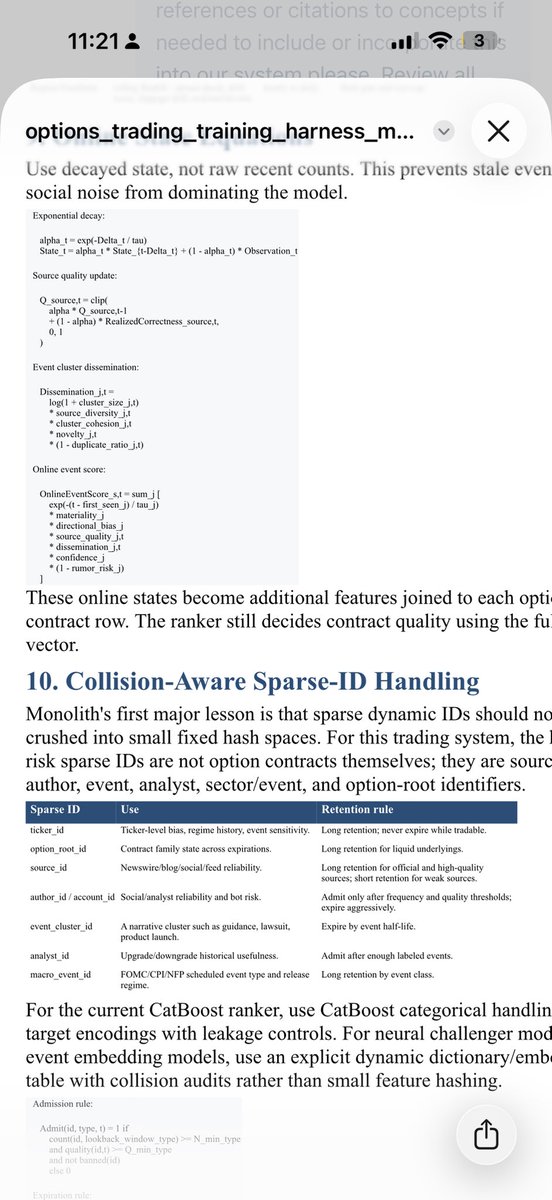

@nstlopez Check discord message. I sent you the “master plan” that contains the system end to end. From project creation to deployment

@derekdamko Can likely get another 1tb vram if necessary.

@ScriptedAlchemy You do not have enough VRAM for any of the large open-weight models. For example, GLM 5 is 1.5 TB on disk, which will be 1.7 TB loaded into RAM. Then you will need a large context window, so you are probably looking at close to 2 TB of RAM needed for fine-tuning.

@omniwired Cost. I wanna fine tune a new tokenizer encoder/decoder. Only have enough burn for 1 or 2 runs.

@ScriptedAlchemy Is it coding? Then GLM 5.1

But why only try one, do Kimi and deepseek pro next.

Fine-tune to do what

@HOARK_ Turboquant for inference tho, not training. But yes.

@ptremblay Want a big FP16 model. Like GLM or Kimi

@ScriptedAlchemy what about a 1.58-bit distill of Qwen 3.6 27B? (BitNet Distillation) arxiv.org/abs/2510.13998

@julianharris 1tb of ram, can get more if needed tho.

@ScriptedAlchemy Not sure how much RAM you have or whether you are ok with open weights but Deepseekv4 is killer.
