Sebastien Campion

2.4K posts

@sebcampion

Research Engineer in Computer Science at INRIA

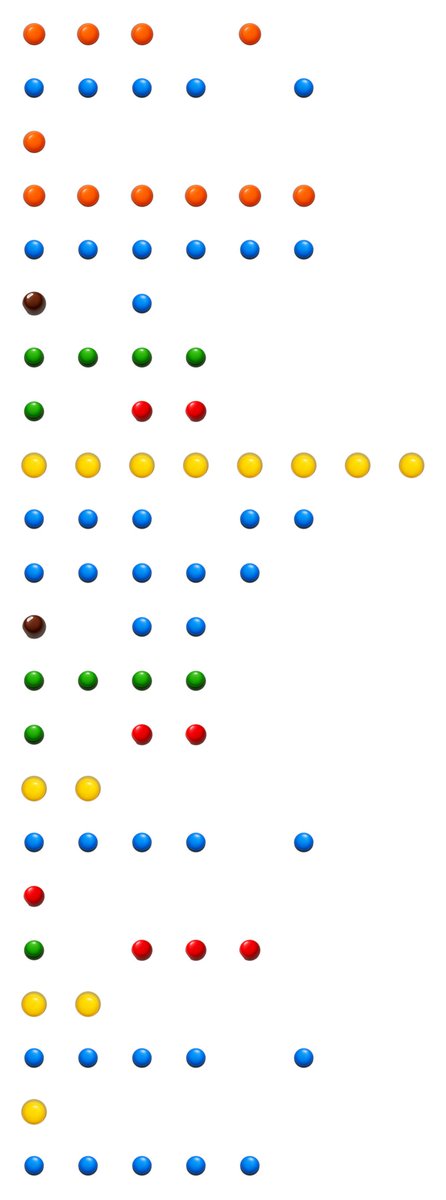

🚨GUYS QWEN3.6 27B/35B @unslothai MLX QUANTS @Brooooook_lyn cooked 🔥 Apple Silicon users, the exact models y’all have been asking for LANDED. Unsloth mixed-precision quants in native MLX format. >Fast. >Clean. >Ready to run
Model list + sizes + expected unified memory
🧠 Q2_K_XL → 15GB | 18.6 tok/s (3.32x) → on 24GB
🔥 Q3_K_XL → 18GB | 15.5 tok/s → perfect on 32GB
🚀 Q4_K_XL → 21GB | 13.9 tok/s → sweet 32-36GB
🤖 Q5_K_XL → 25GB | 12.0 tok/s → 36GB+
🏆 Q8_K_XL → 27GB | 10.8 tok/s → 48-64GB+
(27B models shown; collection also has 35B-A3B variants)
Drop this straight into MLX and watch it fly on your M4/M3/M2 Mac. 👇🏻 huggingface.co/collections/Br…

Who wants a FREE graphics card? (happy Monday)

Ingress Nginx: end of support on March 13. ~50% of Kubernetes clusters exposed. How many critical components does your infrastructure depend on under this same model without your knowing it? lemagit.fr/actualites/366…

we as software engineers are becoming beholden to a handful of well-funded corporations. while they are our "friends" now, that may change due to incentives. i'm very uncomfortable with that. i believe we need to band together as a community and create a public, free-to-use repository of real-world (coding) agent sessions/traces. I want small labs, startups, and tinkerers to have access to the same data the big folks currently gobble up from all of us. So we, as a community, can do what e.g. Cursor does below, and take back a little bit of control again. Who's with me? cursor.com/blog/real-time…

The death of inbound applications is upon us, and yes, it’s in large part because AI makes it dead simple to apply. So inbound applications become noisy, with more and more non-qualified applicants. And so companies rely on referrals and recruiters to source instead.

The French startup Mistral AI unveils Forge, a platform enabling enterprises to build AI models grounded in their own data, workflows, and systems. Not generic AI, but tailored intelligence. ASML, ESA, and Ericsson are already on board. Europe is stepping up 🇪🇺

The multi-vector era is here and there is no going back. Reason-ModernColBERT tops BrowseComp-Plus, the hardest agentic search benchmark available, by 7.59 points on accuracy. 🥇on accuracy. 🥇on recall. 🥇on calibration. 📉 Fewest search calls. The models it outperforms? Up to 54× larger. Reasoning-intensive retrieval (BRIGHT), code search (MTEB Code), agentic Deep Research (BrowseComp-Plus). The pattern is the same: late interaction dominates, with a fraction of the parameters. 149M parameters. Open weights. Open code. Built with PyLate in a few hours. Full results, analysis and recipe on LightOn blog: lighton.ai/lighton-blogs/…
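For readers new to the term: "late interaction" in ColBERT-style retrievers usually refers to MaxSim scoring, where every query token embedding is matched against its best document token embedding and the per-token maxima are summed. The sketch below illustrates that idea in plain Python. It is not PyLate's API and not the Reason-ModernColBERT implementation; the function names and the toy 2-dimensional vectors are hypothetical, chosen only for illustration.

```python
from typing import List, Sequence, Tuple

Vector = Sequence[float]


def dot(a: Vector, b: Vector) -> float:
    """Plain dot product between two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def maxsim_score(query_tokens: Sequence[Vector], doc_tokens: Sequence[Vector]) -> float:
    """MaxSim: for each query token, take its best match among the
    document tokens, then sum those maxima over all query tokens."""
    if not doc_tokens:
        return 0.0
    return sum(max(dot(q, d) for d in doc_tokens) for q in query_tokens)


def rank(query_tokens: Sequence[Vector],
         docs: Sequence[Sequence[Vector]]) -> List[Tuple[int, float]]:
    """Return (doc_index, score) pairs sorted from best to worst."""
    scored = [(i, maxsim_score(query_tokens, d)) for i, d in enumerate(docs)]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hypothetical toy data: one vector per token (real models use ~128 dims).
query = [[1.0, 0.0], [0.0, 1.0]]
doc_a = [[1.0, 0.0], [0.0, 1.0]]   # covers both query tokens
doc_b = [[1.0, 0.0]]               # covers only the first query token

print(rank(query, [doc_a, doc_b]))
```

Because each document keeps one vector per token rather than a single pooled vector, a document that covers more of the query's tokens scores higher, which is the "multi-vector" property the post refers to.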

🚨 100M TOKEN CONTEXT WITHOUT COLLAPSE > <9% degradation from 16K → 100M > beats RAG + rerank + SOTA pipelines > runs on just 2×A800 GPUs we could be back

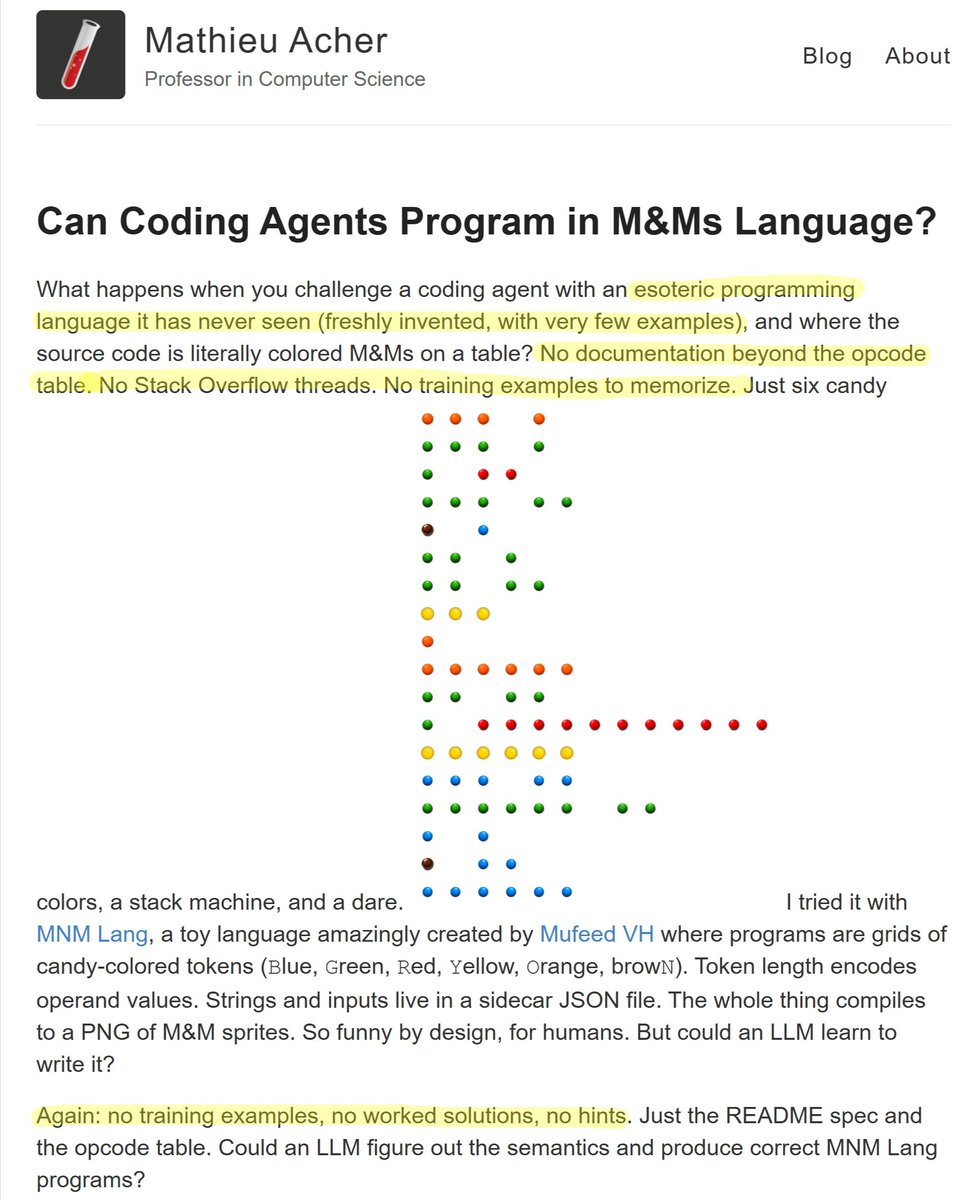

This is more evidence that current frontier models remain completely reliant on content-level memorization, as opposed to higher-level generalizable knowledge (such as metalearning knowledge, problem-solving strategies...)

Ok Time to write about my setup I guess 🙂