I've built an entirely new, 100% native, extendable, themeable, modal cmd-space launcher for macOS. 🍣 Meet Tuna. tunaformac.com

Sebastian Andil


@selrond

Software Engineer specializing in web application development

React Hook Form, TanStack Form, or Formisch — which one should you actually pick in 2026? 🧐 We compared all three across TypeScript inference, validation architecture, and performance as forms grow. ⚡️ No API walkthroughs. Just the tradeoffs that matter for real decisions. Give it a read! formisch.dev/blog/react-for…

If GPT-5.4 wasn’t so goddamn bad at UI, it’d be the perfect model. It just finds the most creative ways to ruin good interfaces… it’s honestly impressive.

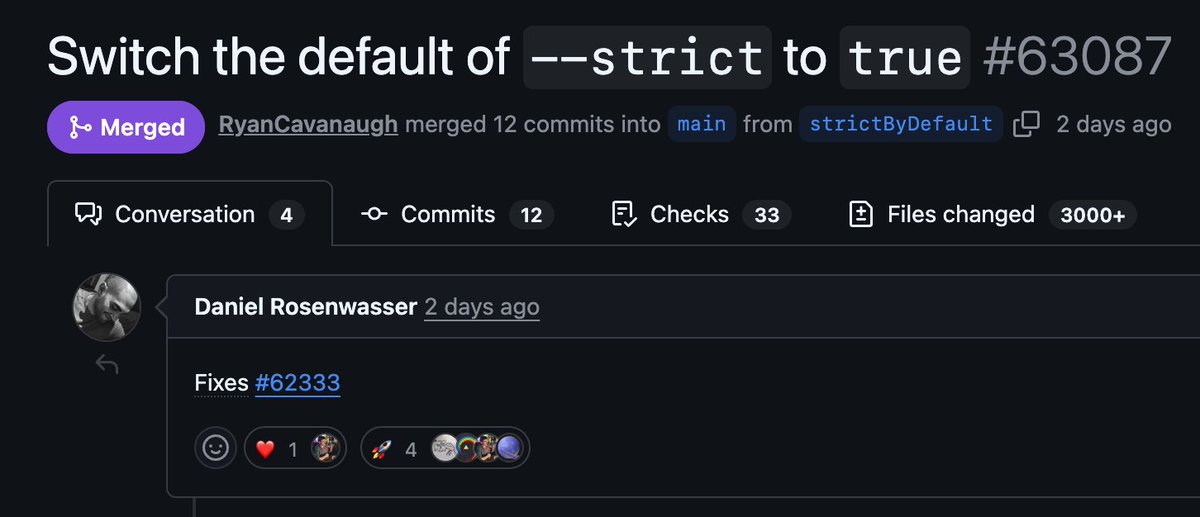

👀 TypeScript 6.0 (beta) drops next week. It should be the last JS-based TSC version (no 6.1.*, only patches). TypeScript 6.0 is a “bridge release” toward TypeScript 7.0, which is written in Go and ~10x faster.

skills_sh is such a useful tool. It helped me discover that @addyosmani is already rolling out agent skills for common web development audits like SEO and HTML accessibility. Kudos 🙌