Sérgio Miguel Silva

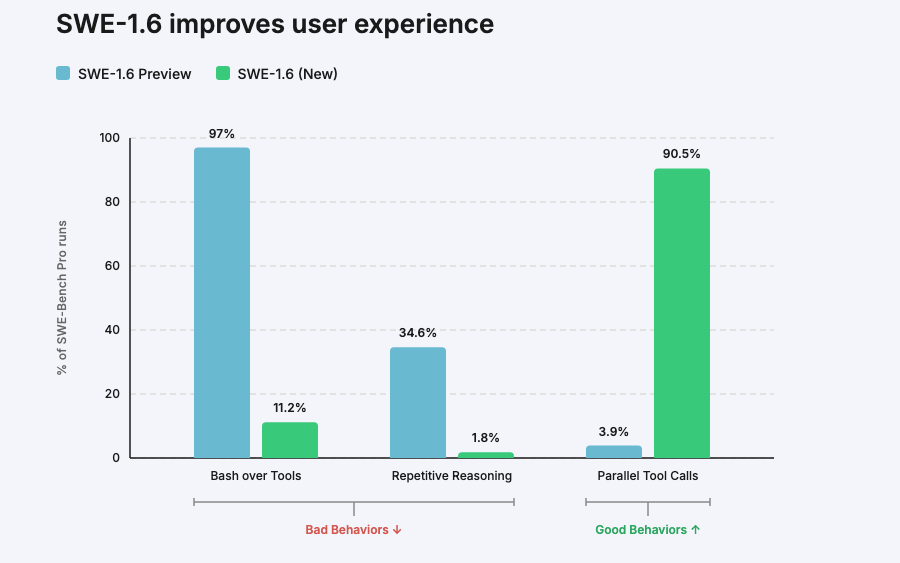

We’re releasing SWE-1.6, our best model in both intelligence & model UX. SWE-1.6 matches our Preview model on SWE-Bench Pro while dramatically improving on various behavioral axes. It’s available today in Windsurf in two modes: free tier (200 tok/s) and fast tier (950 tok/s).

Amazon is holding a mandatory meeting about AI breaking its systems. The official framing is "part of normal business." The briefing note describes a trend of incidents with "high blast radius" caused by "Gen-AI assisted changes" for which "best practices and safeguards are not yet fully established." Translation to human language: we gave AI to engineers and things keep breaking. The response for now? Junior and mid-level engineers can no longer push AI-assisted code without a senior signing off. AWS spent 13 hours recovering after its own AI coding tool, asked to make some changes, decided instead to delete and recreate the environment (the software equivalent of fixing a leaky tap by knocking down the wall). Amazon called that an "extremely limited event" (the affected tool served customers in mainland China).

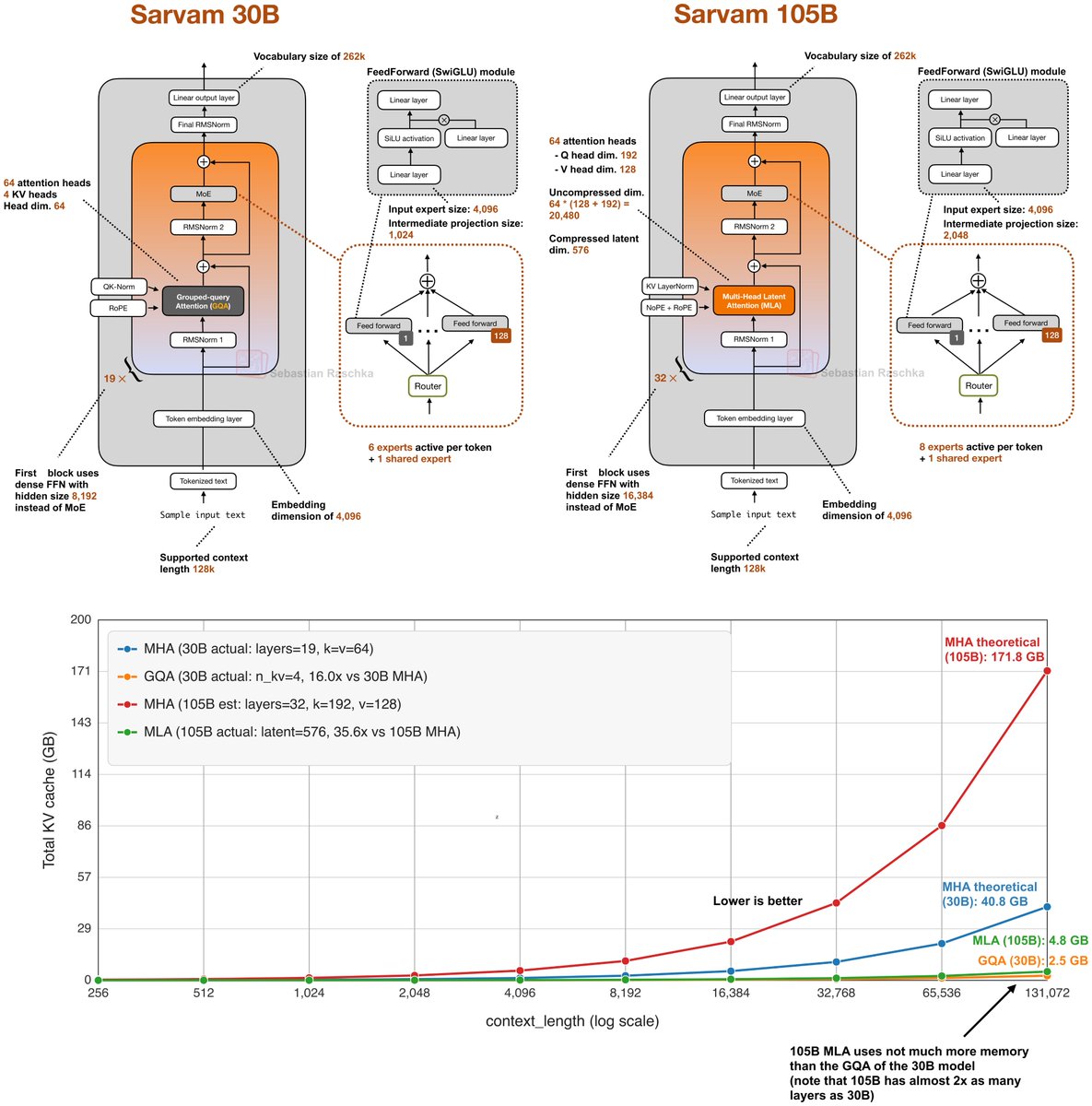

📢 Open-sourcing the Sarvam 30B and 105B models! Trained from scratch with all data, model research and inference optimisation done in-house, these models punch above their weight in most global benchmarks plus excel in Indian languages. Get the weights at Hugging Face and AIKosh. Thanks to the good folks at SGLang for day 0 support, vLLM support coming soon. Links, benchmark scores, examples, and more in our blog - sarvam.ai/blogs/sarvam-3…

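At the Final Program on Monday, February 23 (a public holiday), the last day of the festival "EVANGELION:30+; 30th ANNIVERSARY OF EVANGELION" marking the 30th anniversary of "Eva", we made the first announcement about the production of a completely new "Evangelion" series. Series composition and screenplay: Yoko Taro. Directors: Kazuya Tsurumaki, Toko Yatabe. Music: Keiichi Okabe. Production: Studio Khara × CloverWorks. evangelion.jp/news/260223-1/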

Moltbook is nothing more than a puppeted multi-agent LLM loop. Each “agent” is just next-token prediction shaped by human-defined prompts, curated context, routing rules, and sampling knobs. There are no endogenous goals. There is no self-directed intent. What looks like autonomous interaction is recursive prompting: one model’s output becomes another model’s input, repeated. Controversial outputs aren’t “beliefs,” they’re the model generating high-engagement extremes it learned from the internet, because the system rewards that behavior.

🚨 The AI agent handbook: Google just dropped a 46-page playbook on how to build and use agents. This is what you need to know (and how to get it 100% free):

🎁 What’s on your dev wishlist? How about secure, production-ready base images… free and open source?! Docker Hardened Images are now free for everyone, & backed by an Apache 2.0 license. Read more: bit.ly/4rYoCKI #DHI #OpenSource

.@OpenAI’s GPT-5.2 is now rolling out in public preview in GitHub Copilot. This model is focused on long context and front-end UI generation. Try it out in @code ⬇️ github.blog/changelog/2025…