Ben

45 posts

@shinhan2000

Chief tech _ @strikerobot_ai / master's degree in AI

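KX-01 (a 4.5 kg small dog robot). I fine-tuned and retrained it using Mujina as a reference, then added uneven terrain and verified it Sim-to-Sim (mujoco_ros2_control). In preparation for Sim-to-Real, it now also supports gamepad control and trim adjustment. It feels more nimble than last time.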

We recently released a survey / position paper on Agentic AI × World Models: Agentic World Modeling: Foundations, Capabilities, Laws, and Beyond. The paper has received encouraging attention since its release, with related pages now surpassing 100K views. Thank you all for the discussions, feedback, and reposts, especially @dotey @_akhaliq @omarsar0. We propose a Levels × Laws framework: Levels: L1 Predictor → L2 Simulator → L3 Evolver. Laws: Physical / Digital / Social / Scientific World. The paper surveys 400+ related works and summarizes 100+ representative systems, spanning model-based RL, video generation, Web/GUI agents, multi-agent systems, robotics, and AI for Science. We hope this work can provide a clearer conceptual map for the growing discussions around Agentic AI and World Models. GitHub: github.com/matrix-agent/a… (Thanks for your stars ⭐️ we will update it frequently) Paper: arxiv.org/abs/2604.22748 Homepage: agentic-world-modeling.xyz Feedback, critiques, and reposts are very welcome.

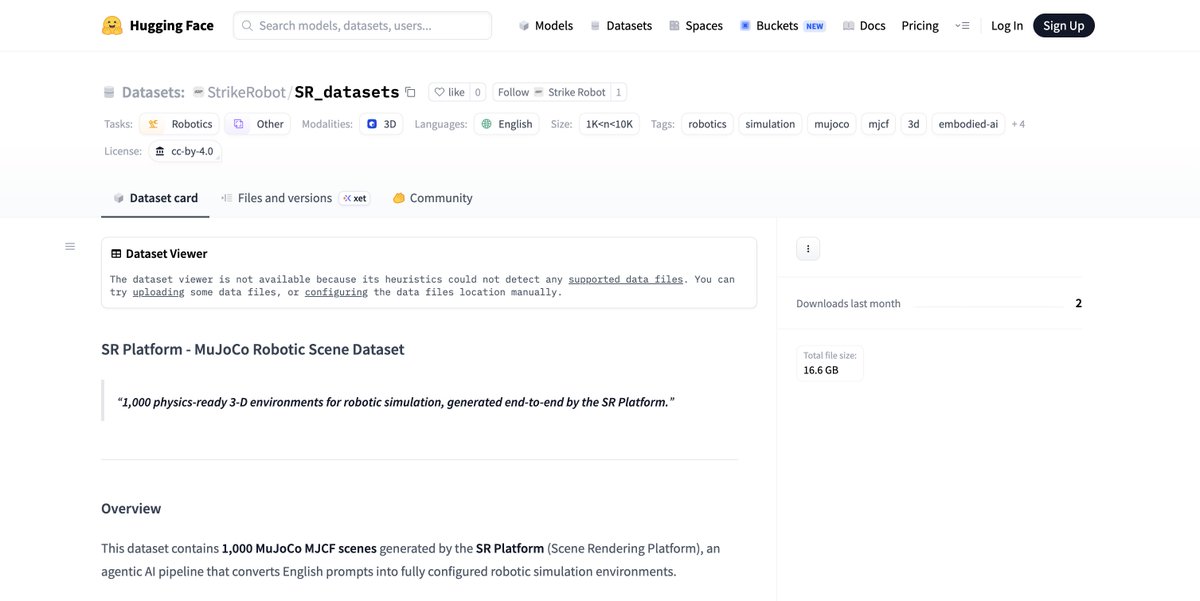

Finalist –– @StrikeRobot_ai A modular cognitive awareness system for autonomous robots. StrikeRobot enables robots to process environmental data and operate across different hardware and real-world environments.

Base Azul is going live next week. Higher throughput and more reliable settlement make Base more suitable for real-time agent coordination and machine payments. SR Platform will leverage x402 for pay-per-use simulation infrastructure, dataset access, environment APIs, and autonomous tool usage during robotics training workflows. SR Agentic will leverage x402 for autonomous API calls, machine-to-machine service payments, remote robotic task execution, and interaction with external AI/data services in real environments. With Base Azul + x402, robots move closer to operating as autonomous economic agents on @base

We are back. After one year of quiet building. Introducing GENE-26.5, our first robotic brain that takes a major step toward human-level capability. For years, robotics has struggled to learn from the world’s largest and most valuable data source: humans. Solving it means rethinking the whole stack from the ground up: - A robotics-native foundation model. - A 1:1 human-like robotic hand. - A noninvasive data collection glove for motion, force, and touch. - A simulator that turns weeks of experiments into minutes. GENE-26.5 is trained across language, vision, proprioception, tactile, and action. We designed a set of tasks to test how far we can go with this new paradigm. Fully autonomous, 1x speed, one model, same weights. (Enjoy with sound on) We are approaching the endgame for robotics. And this is just the beginning.

IT'S OFFICIAL! We're announcing our partnership with @AskVenice — a privacy-first, uncensored AI inference platform on @base — as a primary inference API backend for StrikeRobot’s product offerings. The partnership involves Venice's credit sponsorship and co-development with StrikeRobot to become the VLM reasoning and inference layer for robots, starting with SR Agentic and SR Platform. Robotic anonymous training is here!

From seeing → to understanding → now to following. In the previous video, we showed how the robot sees and navigates the world. This time, we’ve upgraded SR Agentic — it can now identify a designated person and autonomously follow them in real-time. Powered by perception + reasoning + adaptive navigation, the robot doesn’t just react - it tracks intent and moves with purpose.

Humanoid robotics is the next great labor shift — and the real opportunity is not in the hardware. Strike Robot is now live on @virtuals_io — we’re building SR Agentic, a plug-and-play agentic framework for robotics, starting with Unitree G1, enabling autonomous anomaly detection, safety monitoring, and continuous operation in complex environments. AI makes robots intelligent. $SR makes them investable. Every robot. Its own right. Our journey starts today.
